
Tutorial: Multi-Region Clusters¶

Overview¶

This tutorial describes the Multi-Region Clusters capability that is built directly into Confluent Server.

Multi-Region Clusters allow customers to run a single Apache Kafka® cluster across multiple datacenters. Often referred to as a stretch cluster, a Multi-Region Cluster replicates data between datacenters across regional availability zones. You can choose how to replicate data, synchronously or asynchronously, on a per-topic basis. This capability provides good durability guarantees and makes disaster recovery (DR) much easier.

Benefits:

- Supports multi-site deployments of synchronous and asynchronous replication between datacenters

- Consumers can leverage data locality for reading Kafka data, which means better performance and lower cost

- Ordering of Kafka messages is preserved across datacenters

- Consumer offsets are preserved

- In the event of a disaster in a datacenter, new leaders are automatically elected in the other datacenter for the topics configured for synchronous replication, and applications proceed without interruption, achieving very low RTOs and RPO=0 for those topics.

Concepts¶

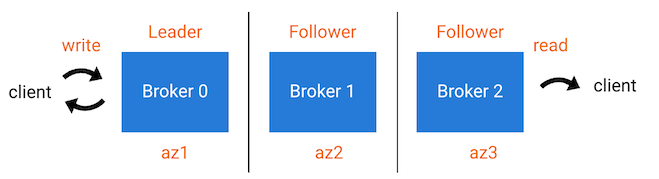

Replicas are brokers assigned to a topic-partition, and they can be a Leader, Follower, or Observer. A Leader is the broker/replica accepting producer messages. A Follower is a broker/replica that can join an ISR list and participate in the calculation of the high watermark (used by the leader when acknowledging messages back to the producer).

An ISR list (in-sync replicas) includes brokers that have a given topic-partition. The data is copied from the leader to every member of the ISR before the producer gets an acknowledgement. The followers in an ISR can become the leader if the current leader fails.

An Observer is a broker/replica that also has a copy of data for a given topic-partition, and consumers are allowed to read from it even though the Observer isn’t the leader; this is known as “Follower Fetching”. However, the data is copied asynchronously from the leader, so a producer doesn’t wait on observers to get back an acknowledgement. By default, observers don’t participate in the ISR list and can’t become the leader if the current leader fails, but if a user manually changes leader assignment then they can participate in the ISR list.
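To make the role of the ISR concrete: the high watermark is the smallest log end offset among the ISR members, so an observer outside the ISR never holds it back. The following is a minimal sketch with made-up offsets; the function name `high_watermark` is hypothetical and is not part of any Kafka tooling.

```shell
# high_watermark OFFSET...
# Prints the minimum of the given log end offsets. Pass only the offsets of
# ISR members (leader and in-sync followers); observers are left out.
high_watermark() {
  min=$1
  for offset in "$@"; do
    if [ "$offset" -lt "$min" ]; then
      min=$offset
    fi
  done
  echo "$min"
}

# Illustrative offsets: leader=100, in-sync follower=95.
# A lagging observer (say, at offset 60) is not passed in, so it has no effect.
high_watermark 100 95
```

With these illustrative values the sketch prints 95, the offset up to which the leader can acknowledge messages.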

Configuration¶

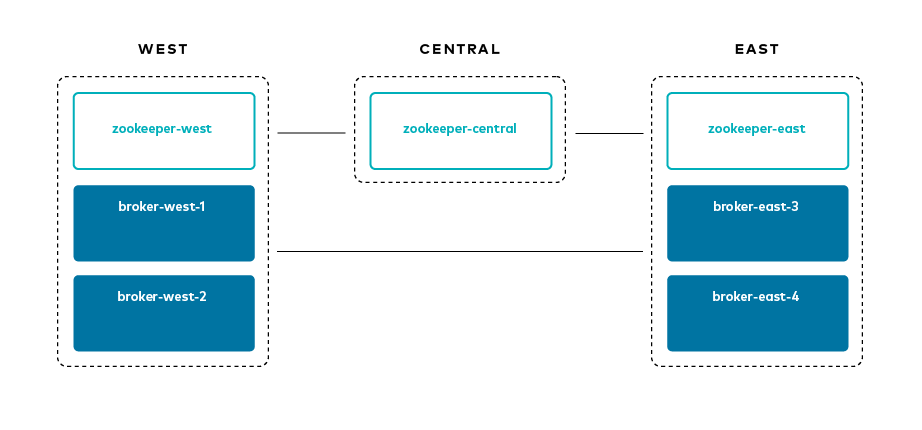

The scenario for this tutorial is as follows:

- Three regions: west, central, and east

- Broker naming convention: broker-[region]-[broker_id]

Here are some relevant configuration parameters:

Broker¶

You can find full broker configurations in the docker-compose.yml file. The most important configuration parameters include:

- broker.rack: identifies the location of the broker. For the demo, it represents a region, either east or west

- replica.selector.class=org.apache.kafka.common.replica.RackAwareReplicaSelector: allows clients to read from followers (in contrast, clients are typically only allowed to read from leaders)

- confluent.log.placement.constraints: sets the default replica placement constraint configuration for newly created topics.

Client¶

- client.rack: identifies the location of the client. For the demo, it represents a region, either east or west

- replication.factor: at the topic level, replication factor is mutually exclusive with replica placement constraints, so for Kafka Streams applications, set replication.factor=-1 to let replica placement constraints take precedence

- min.insync.replicas: durability guarantees are driven by replica placement and min.insync.replicas. The number of followers in each region should be sufficient to meet min.insync.replicas. For example, if min.insync.replicas=3, then west should have 3 replicas and east should have 3 replicas.
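As a rough illustration of the client settings above, the sketch below prints the relevant properties so they can be redirected into a client configuration file. The function names `client_props` and `streams_props` are hypothetical helpers, not part of the tutorial's scripts.

```shell
# client_props RACK
# Prints a client.rack setting. Only the two broker regions used in this
# tutorial are accepted, because client.rack should match a broker.rack value.
client_props() {
  case "$1" in
    east|west) printf 'client.rack=%s\n' "$1" ;;
    *) echo "unknown rack: $1" >&2; return 1 ;;
  esac
}

# streams_props
# Prints the Kafka Streams setting that lets replica placement constraints
# take precedence over an explicit replication factor.
streams_props() {
  printf 'replication.factor=-1\n'
}

client_props east
streams_props
```

For example, `client_props east > consumer-east.properties` (a hypothetical file name) produces a file that could be passed to a console consumer with its `--consumer.config` option.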

Topic¶

--replica-placement <path-to-replica-placement-policy-json>: at topic creation, this argument defines the replica placement policy for a given topic

Download and run the tutorial¶

Clone the confluentinc/examples GitHub repo by running the following command:

    git clone https://github.com/confluentinc/examples
    cd examples
    git checkout 5.5.15-post

Go to the directory with the Multi-Region Clusters by running the following command:

cd multiregion

If you want to manually step through this tutorial, which is advised for new users who want to gain familiarity with Multi-Region Clusters, skip ahead to the next section. Alternatively, you can run the full tutorial end-to-end with the following script, which automates all the steps in the tutorial:

./scripts/start.sh

Startup¶

Run the following command:

docker-compose up -d

You should see the following Docker containers with docker-compose ps:

          Name                   Command            State                            Ports
    ----------------------------------------------------------------------------------------------------------------
    broker-east-3       /etc/confluent/docker/run   Up      0.0.0.0:8093->8093/tcp, 9092/tcp, 0.0.0.0:9093->9093/tcp
    broker-east-4       /etc/confluent/docker/run   Up      0.0.0.0:8094->8094/tcp, 9092/tcp, 0.0.0.0:9094->9094/tcp
    broker-west-1       /etc/confluent/docker/run   Up      0.0.0.0:8091->8091/tcp, 0.0.0.0:9091->9091/tcp, 9092/tcp
    broker-west-2       /etc/confluent/docker/run   Up      0.0.0.0:8092->8092/tcp, 0.0.0.0:9092->9092/tcp
    zookeeper-central   /etc/confluent/docker/run   Up      2181/tcp, 0.0.0.0:2182->2182/tcp, 2888/tcp, 3888/tcp
    zookeeper-east      /etc/confluent/docker/run   Up      2181/tcp, 0.0.0.0:2183->2183/tcp, 2888/tcp, 3888/tcp
    zookeeper-west      /etc/confluent/docker/run   Up      0.0.0.0:2181->2181/tcp, 2888/tcp, 3888/tcp

Inject latency and packet loss¶

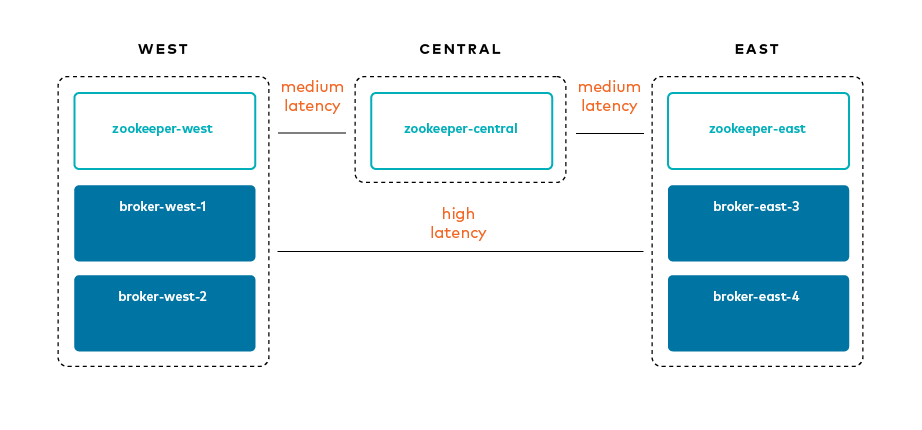

This demo injects latency between the regions and packet loss to simulate the WAN link. It uses Pumba.

Run the Dockerized Pumba script latency_docker.sh:

./scripts/latency_docker.sh

Verify you see the following Docker containers by running the following command:

docker container ls --filter "name=pumba"

You should see:

    CONTAINER ID   IMAGE                 COMMAND                  CREATED         STATUS         PORTS   NAMES
    652fcf244c4d   gaiaadm/pumba:0.6.4   "/pumba netem --dura…"   9 seconds ago   Up 8 seconds           pumba-loss-east-west
    5590c230aef1   gaiaadm/pumba:0.6.4   "/pumba netem --dura…"   9 seconds ago   Up 8 seconds           pumba-loss-west-east
    e60c3a0210e7   gaiaadm/pumba:0.6.4   "/pumba netem --dura…"   9 seconds ago   Up 8 seconds           pumba-high-latency-west-east
    d3c1faf97ba5   gaiaadm/pumba:0.6.4   "/pumba netem --dura…"   9 seconds ago   Up 8 seconds           pumba-medium-latency-central

View the IP addresses used by Docker for the demo:

docker inspect -f '{{.Name}} - {{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' $(docker ps -aq)

Replica Placement¶

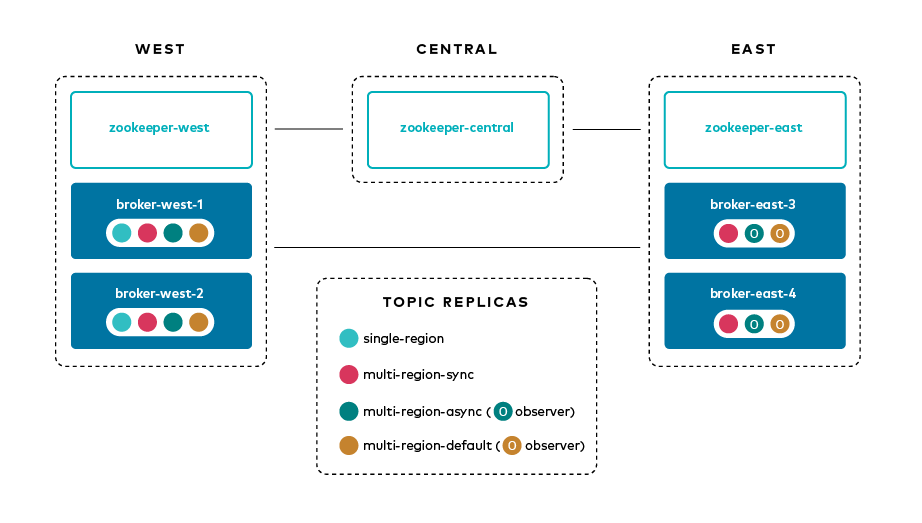

This tutorial demonstrates the principles of Multi-Region Clusters through various topics.

Each topic has a replica placement policy that specifies a set of matching constraints (for example, count and rack for replicas and observers). The replica placement policy file is defined with the argument --replica-placement <path-to-replica-placement-policy-json> mentioned earlier (these files are in the config directory). Each placement also has an associated minimum count that guarantees a certain spread of replicas throughout the cluster.
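The shape of a placement policy can be sketched with a small generator. This is an illustrative helper only (the name `placement_policy` is hypothetical); it handles one rack of sync replicas plus an optional rack of observers, which covers the single-region and async layouts used in this tutorial but not the two-rack sync layout.

```shell
# placement_policy REPLICA_RACK REPLICA_COUNT [OBSERVER_RACK OBSERVER_COUNT]
# Prints a replica placement policy as single-line JSON.
placement_policy() {
  replicas=$(printf '{"count": %d, "constraints": {"rack": "%s"}}' "$2" "$1")
  if [ "$#" -ge 4 ]; then
    observers=$(printf '{"count": %d, "constraints": {"rack": "%s"}}' "$4" "$3")
    printf '{"version": 1, "replicas": [%s], "observers": [%s]}\n' "$replicas" "$observers"
  else
    printf '{"version": 1, "replicas": [%s]}\n' "$replicas"
  fi
}

# Two sync replicas in west, two observers in east.
placement_policy west 2 east 2
```

Redirecting the output to a file gives a policy that could be passed to the --replica-placement argument, provided the file is reachable from inside the broker container.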

In this tutorial, you will create the following topics. You could create all the topics by running the script create-topics.sh, but we will step through each topic creation to demonstrate the required arguments.

| Topic name | Leader | Followers (sync replicas) | Observers (async replicas) | ISR list | Use default placement constraints |
|---|---|---|---|---|---|
| single-region | 1x west | 1x west | n/a | {1,2} | no |
| multi-region-sync | 1x west | 1x west, 2x east | n/a | {1,2,3,4} | no |
| multi-region-async | 1x west | 1x west | 2x east | {1,2} | no |
| multi-region-default | 1x west | 1x west | 2x east | {1,2} | yes |

Create the Kafka topic single-region.

    docker-compose exec broker-west-1 kafka-topics --create \
        --bootstrap-server broker-west-1:19091 \
        --topic single-region \
        --partitions 1 \
        --replica-placement /etc/kafka/demo/placement-single-region.json \
        --config min.insync.replicas=1

Here is the topic’s replica placement policy placement-single-region.json:

    {
        "version": 1,
        "replicas": [
            {
                "count": 2,
                "constraints": {
                    "rack": "west"
                }
            }
        ]
    }

Create the Kafka topic multi-region-sync.

    docker-compose exec broker-west-1 kafka-topics --create \
        --bootstrap-server broker-west-1:19091 \
        --topic multi-region-sync \
        --partitions 1 \
        --replica-placement /etc/kafka/demo/placement-multi-region-sync.json \
        --config min.insync.replicas=1

Here is the topic’s replica placement policy placement-multi-region-sync.json:

    {
        "version": 1,
        "replicas": [
            {
                "count": 2,
                "constraints": {
                    "rack": "west"
                }
            },
            {
                "count": 2,
                "constraints": {
                    "rack": "east"
                }
            }
        ]
    }

Create the Kafka topic multi-region-async.

    docker-compose exec broker-west-1 kafka-topics --create \
        --bootstrap-server broker-west-1:19091 \
        --topic multi-region-async \
        --partitions 1 \
        --replica-placement /etc/kafka/demo/placement-multi-region-async.json \
        --config min.insync.replicas=1

Here is the topic’s replica placement policy placement-multi-region-async.json:

    {
        "version": 1,
        "replicas": [
            {
                "count": 2,
                "constraints": {
                    "rack": "west"
                }
            }
        ],
        "observers": [
            {
                "count": 2,
                "constraints": {
                    "rack": "east"
                }
            }
        ]
    }

Create the Kafka topic multi-region-default. Note that the --replica-placement argument is not used in order to demonstrate the default placement constraints.

    docker-compose exec broker-west-1 kafka-topics \
        --create \
        --bootstrap-server broker-west-1:19091 \
        --topic multi-region-default \
        --config min.insync.replicas=1

View the topic replica placement by running the script describe-topics.sh:

./scripts/describe-topics.sh

You should see output similar to the following:

    ==> Describe topic single-region
    Topic: single-region PartitionCount: 1 ReplicationFactor: 2 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[]}
        Topic: single-region Partition: 0 Leader: 2 Replicas: 2,1 Isr: 2,1 Offline:

    ==> Describe topic multi-region-sync
    Topic: multi-region-sync PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}},{"count":2,"constraints":{"rack":"east"}}],"observers":[]}
        Topic: multi-region-sync Partition: 0 Leader: 1 Replicas: 1,2,3,4 Isr: 1,2,3,4 Offline:

    ==> Describe topic multi-region-async
    Topic: multi-region-async PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[{"count":2,"constraints":{"rack":"east"}}]}
        Topic: multi-region-async Partition: 0 Leader: 2 Replicas: 2,1,3,4 Isr: 2,1 Offline: Observers: 3,4

    ==> Describe topic multi-region-default
    Topic: multi-region-default PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[{"count":2,"constraints":{"rack":"east"}}]}
        Topic: multi-region-default Partition: 0 Leader: 2 Replicas: 2,1,3,4 Isr: 2,1 Offline: Observers: 3,4

Observe the following:

- The multi-region-async and multi-region-default topics have replicas across west and east regions, but only 1 and 2 are in the ISR, and 3 and 4 are observers.
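When checking describe output by hand becomes tedious, the ISR and observer lists can be pulled out of a partition line with a few lines of awk. This is a minimal sketch; `field_after` is a hypothetical helper, and it assumes the label and value layout shown in the sample output of this tutorial.

```shell
# field_after LABEL LINE
# Prints the token that follows LABEL (for example "Isr:") in LINE.
# Prints nothing when the label has no value, as with an empty "Offline:".
field_after() {
  printf '%s\n' "$2" | awk -v label="$1" '{
    for (i = 1; i <= NF; i++) {
      if ($i == label) {
        # A following token that ends in ":" is the next label, not a value.
        if (i < NF && $(i + 1) !~ /:$/) print $(i + 1)
        exit
      }
    }
  }'
}

line='Topic: multi-region-async Partition: 0 Leader: 2 Replicas: 2,1,3,4 Isr: 2,1 Offline: Observers: 3,4'
echo "ISR: $(field_after Isr: "$line"), observers: $(field_after Observers: "$line")"
```

For the sample line this prints `ISR: 2,1, observers: 3,4`.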

Client Performance¶

Producer¶

Run the producer perf test script run-producer.sh:

./scripts/run-producer.sh

Verify that you see performance results similar to the following:

    ==> Produce: Single-region Replication (topic: single-region)
    5000 records sent, 240.453977 records/sec (1.15 MB/sec), 10766.48 ms avg latency, 17045.00 ms max latency, 11668 ms 50th, 16596 ms 95th, 16941 ms 99th, 17036 ms 99.9th.

    ==> Produce: Multi-region Sync Replication (topic: multi-region-sync)
    100 records sent, 2.145923 records/sec (0.01 MB/sec), 34018.18 ms avg latency, 45705.00 ms max latency, 34772 ms 50th, 44815 ms 95th, 45705 ms 99th, 45705 ms 99.9th.

    ==> Produce: Multi-region Async Replication to Observers (topic: multi-region-async)
    5000 records sent, 228.258388 records/sec (1.09 MB/sec), 11296.69 ms avg latency, 18325.00 ms max latency, 11866 ms 50th, 17937 ms 95th, 18238 ms 99th, 18316 ms 99.9th.

Observe the following:

- In the first and third cases, the single-region and multi-region-async topics have nearly the same throughput performance (for example, 1.15 MB/sec and 1.09 MB/sec, respectively, in the previous example), because only the replicas in the west region need to acknowledge.

- In the second case for the multi-region-sync topic, due to the poor network bandwidth between the east and west regions and to an ISR made up of brokers in both regions, it took a big throughput hit (for example, 0.01 MB/sec in the previous example). This is because the producer is waiting for an ack from all members of the ISR before continuing, including those in west and east.

- The observers in the third case for topic multi-region-async didn’t affect the overall producer throughput because the west region is sending an ack back to the producer after it has been replicated twice in the west region, and it is not waiting for the async copy to the east region.

- This example doesn’t produce to multi-region-default because the behavior is the same as multi-region-async since the configuration is the same.
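The MB/sec figures follow directly from the record rate and record size. The sketch below reproduces them; the record size of 5000 bytes is an assumption inferred from the consumer results in this tutorial (23.8419 MB for 5000 messages), and `mb_per_sec` is a hypothetical helper name.

```shell
# mb_per_sec RECORDS_PER_SEC RECORD_SIZE_BYTES
# Converts a record rate into MB/sec, using 1 MB = 1024 * 1024 bytes.
mb_per_sec() {
  awk -v rps="$1" -v size="$2" 'BEGIN { printf "%.2f\n", rps * size / (1024 * 1024) }'
}

mb_per_sec 240.453977 5000   # single-region
mb_per_sec 2.145923 5000     # multi-region-sync
mb_per_sec 228.258388 5000   # multi-region-async
```

Under that assumption the three calls print 1.15, 0.01, and 1.09, matching the sample output: the sync topic moves roughly one hundredth of the data per second that the other two do.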

Consumer¶

Run the consumer perf test script run-consumer.sh, where the consumer is in east:

./scripts/run-consumer.sh

Verify that you see performance results similar to the following:

    ==> Consume from east: Multi-region Async Replication reading from Leader in west (topic: multi-region-async)
    start.time, end.time, data.consumed.in.MB, MB.sec, data.consumed.in.nMsg, nMsg.sec, rebalance.time.ms, fetch.time.ms, fetch.MB.sec, fetch.nMsg.sec
    2019-09-25 17:10:27:266, 2019-09-25 17:10:53:683, 23.8419, 0.9025, 5000, 189.2721, 1569431435702, -1569431409285, -0.0000, -0.0000

    ==> Consume from east: Multi-region Async Replication reading from Observer in east (topic: multi-region-async)
    start.time, end.time, data.consumed.in.MB, MB.sec, data.consumed.in.nMsg, nMsg.sec, rebalance.time.ms, fetch.time.ms, fetch.MB.sec, fetch.nMsg.sec
    2019-09-25 17:10:56:844, 2019-09-25 17:11:02:902, 23.8419, 3.9356, 5000, 825.3549, 1569431461383, -1569431455325, -0.0000, -0.0000

Observe the following:

- In the first scenario, the consumer running in east reads from the leader in west and is impacted by the low bandwidth between east and west, so the throughput of the consumer is lower in this case (for example, 0.9025 MB/sec in the previous example).

- In the second scenario, the consumer running in east reads from the follower (here, an observer) that is also in east, so the throughput of the consumer is higher in this case (for example, 3.9356 MB/sec in the previous example).

- This example doesn’t consume from multi-region-default because the behavior should be the same as multi-region-async since the configuration is the same.

Monitoring¶

In Confluent Server there are a few JMX metrics you should monitor for determining the health and state of a topic partition. The tutorial describes the following JMX metrics. For a description of other relevant JMX metrics, see Metrics.

- ReplicasCount: In JMX the full object name is kafka.cluster:type=Partition,name=ReplicasCount,topic=<topic-name>,partition=<partition-id>. It reports the number of replicas (sync replicas and observers) assigned to the topic partition.

- InSyncReplicasCount: In JMX the full object name is kafka.cluster:type=Partition,name=InSyncReplicasCount,topic=<topic-name>,partition=<partition-id>. It reports the number of replicas in the ISR.

- CaughtUpReplicasCount: In JMX the full object name is kafka.cluster:type=Partition,name=CaughtUpReplicasCount,topic=<topic-name>,partition=<partition-id>. It reports the number of replicas that are considered caught up to the topic partition leader. Note that this may be greater than the size of the ISR because observers may be caught up but are not part of the ISR.

There is a script you can run to collect the JMX metrics from the command line, but the general form is:

docker-compose exec broker-west-1 kafka-run-class kafka.tools.JmxTool --jmx-url service:jmx:rmi:///jndi/rmi://localhost:8091/jmxrmi --object-name kafka.cluster:type=Partition,name=<METRIC>,topic=<TOPIC>,partition=0 --one-time true
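The object name in the general form above can be assembled from its three variable parts. The following sketch only builds the strings; `jmx_object_name` is a hypothetical helper, not something shipped with the tutorial.

```shell
# jmx_object_name METRIC TOPIC [PARTITION]
# Prints the JMX object name for a partition-level metric.
# PARTITION defaults to 0, the only partition of each topic in this tutorial.
jmx_object_name() {
  printf 'kafka.cluster:type=Partition,name=%s,topic=%s,partition=%s\n' "$1" "$2" "${3:-0}"
}

for metric in ReplicasCount InSyncReplicasCount CaughtUpReplicasCount; do
  jmx_object_name "$metric" multi-region-async
done
```

Each printed name could then be supplied as the --object-name argument of the JmxTool command shown above.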

Run the script jmx_metrics.sh to get the JMX metrics for ReplicasCount, InSyncReplicasCount, and CaughtUpReplicasCount from each of the brokers:

./scripts/jmx_metrics.sh

Verify you see output similar to the following:

    ==> Monitor ReplicasCount
    single-region: 2
    multi-region-sync: 4
    multi-region-async: 4
    multi-region-default: 4

    ==> Monitor InSyncReplicasCount
    single-region: 2
    multi-region-sync: 4
    multi-region-async: 2
    multi-region-default: 2

    ==> Monitor CaughtUpReplicasCount
    single-region: 2
    multi-region-sync: 4
    multi-region-async: 4
    multi-region-default: 4

Failover and Failback¶

Fail Region¶

In this section, you will simulate a region failure by bringing down the west region.

Run the following command to stop the Docker containers corresponding to the west region:

docker-compose stop broker-west-1 broker-west-2 zookeeper-west

Verify the new topic replica placement by running the script describe-topics.sh:

./scripts/describe-topics.sh

You should see output similar to the following:

    ==> Describe topic single-region
    Topic: single-region PartitionCount: 1 ReplicationFactor: 2 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[]}
        Topic: single-region Partition: 0 Leader: none Replicas: 2,1 Isr: 1 Offline: 2,1

    ==> Describe topic multi-region-sync
    Topic: multi-region-sync PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}},{"count":2,"constraints":{"rack":"east"}}],"observers":[]}
        Topic: multi-region-sync Partition: 0 Leader: 3 Replicas: 1,2,3,4 Isr: 3,4 Offline: 1,2

    ==> Describe topic multi-region-async
    Topic: multi-region-async PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[{"count":2,"constraints":{"rack":"east"}}]}
        Topic: multi-region-async Partition: 0 Leader: none Replicas: 2,1,3,4 Isr: 1 Offline: 2,1 Observers: 3,4

    ==> Describe topic multi-region-default
    Topic: multi-region-default PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[{"count":2,"constraints":{"rack":"east"}}]}
        Topic: multi-region-default Partition: 0 Leader: none Replicas: 2,1,3,4 Isr: 1 Offline: 2,1 Observers: 3,4

Observe the following:

- In the first scenario, the single-region topic has no leader, because it had only two replicas in the ISR, both of which were in the west region and are now down.

- In the second scenario, the multi-region-sync topic automatically elected a new leader in east (for example, replica 3 in the previous output). Clients can fail over to those replicas in the east region.

- In the last two scenarios, the multi-region-async and multi-region-default topics have no leader, because they had only two replicas in the ISR, both of which were in the west region and are now down. The observers in the east region are not eligible to become leaders automatically because they were not in the ISR.
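Spotting leaderless partitions in the describe output can be automated with a short filter. This is a sketch under the assumption that partition lines carry `Topic:` and `Leader:` labels as in the sample output; `leaderless_topics` is a hypothetical helper name.

```shell
# leaderless_topics
# Reads kafka-topics describe output on stdin and prints the name of every
# topic that has a partition line showing "Leader: none".
leaderless_topics() {
  awk '{
    topic = ""; leader = ""
    for (i = 1; i < NF; i++) {
      if ($i == "Topic:") topic = $(i + 1)
      if ($i == "Leader:") leader = $(i + 1)
    }
    if (leader == "none") print topic
  }'
}

# Two partition lines taken from the sample output above.
printf '%s\n' \
  'Topic: single-region Partition: 0 Leader: none Replicas: 2,1 Isr: 1 Offline: 2,1' \
  'Topic: multi-region-sync Partition: 0 Leader: 3 Replicas: 1,2,3,4 Isr: 3,4 Offline: 1,2' \
  | leaderless_topics
```

The example prints only `single-region`. In the demo environment, piping `./scripts/describe-topics.sh` into the function should list every topic that lost its leader.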

Failover Observers¶

To explicitly fail over the observers in the multi-region-async and multi-region-default topics to the east region, complete the following steps:

Trigger unclean leader election (note: unclean leader election may result in data loss):

    docker-compose exec broker-east-4 kafka-leader-election --bootstrap-server broker-east-4:19094 --election-type UNCLEAN --topic multi-region-async --partition 0
    docker-compose exec broker-east-4 kafka-leader-election --bootstrap-server broker-east-4:19094 --election-type UNCLEAN --topic multi-region-default --partition 0
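As an alternative to one command per topic, kafka-leader-election can read the list of partitions from a JSON file through its --path-to-json-file option. The sketch below only generates that JSON; `election_json` is a hypothetical helper, and it assumes partition 0 for every topic, as in this tutorial.

```shell
# election_json TOPIC...
# Prints a partition list in the JSON layout read by --path-to-json-file.
# Partition 0 is assumed for every topic.
election_json() {
  sep=''
  printf '{"partitions": ['
  for topic in "$@"; do
    printf '%s{"topic": "%s", "partition": 0}' "$sep" "$topic"
    sep=', '
  done
  printf ']}\n'
}

election_json multi-region-async multi-region-default
```

To use the result, the output would need to be saved to a file that is visible inside the broker container and passed with --path-to-json-file in place of the --topic and --partition arguments.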

Describe the topics again with the script describe-topics.sh.

./scripts/describe-topics.sh

You should see output similar to the following:

    ...

    ==> Describe topic multi-region-async
    Topic: multi-region-async PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[{"count":2,"constraints":{"rack":"east"}}]}
        Topic: multi-region-async Partition: 0 Leader: 3 Replicas: 2,1,3,4 Isr: 3,4 Offline: 2,1 Observers: 3,4

    ==> Describe topic multi-region-default
    Topic: multi-region-default PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[{"count":2,"constraints":{"rack":"east"}}]}
        Topic: multi-region-default Partition: 0 Leader: 3 Replicas: 2,1,3,4 Isr: 3,4 Offline: 2,1 Observers: 3,4

Observe the following:

- The topics multi-region-async and multi-region-default have leaders again (for example, replica 3 in the previous output)

- The topics multi-region-async and multi-region-default had observers that are now in the ISR list (for example, replicas 3,4 in the previous output)

Permanent Failover¶

At this point in the example, if the brokers in the west region come back online, the leaders for the multi-region-async and multi-region-default topics will automatically be elected back to a replica in west (that is, replica 1 or 2). This may be desirable in some circumstances, but if you don’t want the leaders to automatically fail back to the west region, change the topic placement constraints configuration and replica assignment by completing the following steps:

For the topic multi-region-default, view a modified replica placement policy placement-multi-region-default-reverse.json:

    {
        "version": 1,
        "replicas": [
            {
                "count": 2,
                "constraints": {
                    "rack": "east"
                }
            }
        ],
        "observers": [
            {
                "count": 2,
                "constraints": {
                    "rack": "west"
                }
            }
        ]
    }

Change the replica placement constraints configuration and replica assignment for multi-region-default, by running the script permanent-fallback.sh.

./scripts/permanent-fallback.sh

The script uses kafka-configs to change the replica placement policy and then it runs confluent-rebalancer to move the replicas.

    echo -e "\n==> Switching replica placement constraints for multi-region-default\n"

    docker-compose exec broker-east-3 kafka-configs \
        --bootstrap-server broker-east-3:19093 \
        --alter \
        --topic multi-region-default \
        --replica-placement /etc/kafka/demo/placement-multi-region-default-reverse.json

    echo -e "\n==> Running Confluent Rebalancer on multi-region-default\n"

    docker-compose exec broker-east-3 confluent-rebalancer execute \
        --metrics-bootstrap-server broker-east-3:19093 \
        --bootstrap-server broker-east-3:19093 \
        --replica-placement-only \
        --topics multi-region-default \
        --force \
        --throttle 10000000

    docker-compose exec broker-east-3 confluent-rebalancer finish \
        --bootstrap-server broker-east-3:19093

Describe the topics again with the script describe-topics.sh.

./scripts/describe-topics.sh

You should see output similar to the following:

    ...

    ==> Describe topic multi-region-default
    Topic: multi-region-default PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"east"}}],"observers":[{"count":2,"constraints":{"rack":"west"}}]}
        Topic: multi-region-default Partition: 0 Leader: 3 Replicas: 3,4,2,1 Isr: 3,4 Offline: 2,1 Observers: 2,1

    ...

Observe the following:

- For topic multi-region-default, replicas 2 and 1, which were previously sync replicas, are now observers and are still offline.

- For topic multi-region-default, replicas 3 and 4, which were previously observers, are now sync replicas.

Failback¶

Now you will bring region west back online.

Run the following command to bring the west region back online:

docker-compose start broker-west-1 broker-west-2 zookeeper-west

Wait for 5 minutes (the default duration for leader.imbalance.check.interval.seconds) until the leadership election restores the preferred replicas. You can also trigger it with the following command:

docker-compose exec broker-east-4 kafka-leader-election --bootstrap-server broker-east-4:19094 --election-type PREFERRED --all-topic-partitions

Verify the new topic replica placement is restored with the script describe-topics.sh.

./scripts/describe-topics.sh

You should see output similar to the following:

    Topic: single-region PartitionCount: 1 ReplicationFactor: 2 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[]}
        Topic: single-region Partition: 0 Leader: 2 Replicas: 2,1 Isr: 1,2 Offline:

    ==> Describe topic multi-region-sync
    Topic: multi-region-sync PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}},{"count":2,"constraints":{"rack":"east"}}],"observers":[]}
        Topic: multi-region-sync Partition: 0 Leader: 1 Replicas: 1,2,3,4 Isr: 3,4,2,1 Offline:

    ==> Describe topic multi-region-async
    Topic: multi-region-async PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"west"}}],"observers":[{"count":2,"constraints":{"rack":"east"}}]}
        Topic: multi-region-async Partition: 0 Leader: 2 Replicas: 2,1,3,4 Isr: 2,1 Offline: Observers: 3,4

    ==> Describe topic multi-region-default
    Topic: multi-region-default PartitionCount: 1 ReplicationFactor: 4 Configs: min.insync.replicas=1,confluent.placement.constraints={"version":1,"replicas":[{"count":2,"constraints":{"rack":"east"}}],"observers":[{"count":2,"constraints":{"rack":"west"}}]}
        Topic: multi-region-default Partition: 0 Leader: 3 Replicas: 3,4,2,1 Isr: 3,4 Offline: Observers: 2,1

Observe the following:

- All topics have leaders again, in particular single-region which lost its leader when the west region failed.

- The leaders for multi-region-sync and multi-region-async are restored to the west region. If they are not, then wait a full 5 minutes (duration of leader.imbalance.check.interval.seconds).

- The leader for multi-region-default stayed in the east region because you performed a permanent failover.
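Whether leadership has been restored can be read off a partition line: the preferred leader is the first broker in the Replicas list. The following is a minimal sketch of that check; `is_preferred_leader` is a hypothetical helper, and it assumes the `Leader:` and `Replicas:` labels shown in the sample output.

```shell
# is_preferred_leader LINE
# Succeeds (exit status 0) when the Leader in a describe partition line is
# the first entry of its Replicas list, and fails otherwise.
is_preferred_leader() {
  printf '%s\n' "$1" | awk '{
    leader = ""; preferred = ""
    for (i = 1; i < NF; i++) {
      if ($i == "Leader:") leader = $(i + 1)
      if ($i == "Replicas:") { split($(i + 1), r, ","); preferred = r[1] }
    }
    if (leader != "" && leader == preferred) exit 0
    exit 1
  }'
}

line='Topic: multi-region-sync Partition: 0 Leader: 1 Replicas: 1,2,3,4 Isr: 3,4,2,1 Offline:'
if is_preferred_leader "$line"; then
  echo "preferred leader restored"
else
  echo "still waiting for preferred leader election"
fi
```

Applied to the multi-region-sync line right after the region failure (Leader: 3, Replicas: 1,2,3,4), the same check fails, which is the signal to keep waiting or to trigger a PREFERRED election.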

Note

On failback from a failover to observers, any data that wasn’t replicated to observers will be lost because logs are truncated before catching up and joining the ISR.

Stop the Tutorial¶

To stop the demo environment and all Docker containers, run the following command:

./scripts/stop.sh

Troubleshooting¶

Containers fail to ping each other¶

If containers fail to ping each other (for example, failures you may see when running the script validate_connectivity.sh), complete the following steps:

Stop the demo.

./scripts/stop.sh

Clean up the Docker environment.

    for c in $(docker container ls -q --filter "name=pumba"); do docker container stop "$c" && docker container rm "$c"; done
    docker-compose down -v --remove-orphans
    # More aggressive cleanup
    docker volume prune

Restart the demo.

./scripts/start.sh

If the containers still fail to ping each other, restart Docker and run again.

Pumba is overloading the Docker inter-container network¶

If Pumba is overloading the Docker inter-container network, complete the following steps:

- Tweak the Pumba settings in latency_docker.sh.

- Re-test in your environment.