Configure Authorization with Confluent for Kubernetes

Whereas authentication verifies the identity of users and applications, authorization allows you to define the actions they are permitted to take once connected.

Confluent for Kubernetes supports the following authorization mechanisms:

No Authorization

Confluent Role Based Access Control (RBAC) authorization, which depends on an LDAP server

We recommend you use Confluent Role Based Access Control (RBAC) for production deployments. With RBAC, CFK will automate the entire secure setup to adhere to production best practices.

Confluent Role Based Access Control (RBAC)

Confluent for Kubernetes supports Role-Based Access Control (RBAC). RBAC is powered by Confluent’s Metadata Service (MDS), which integrates with an LDAP server and acts as the central authority for authorization and authentication data. RBAC leverages role bindings to determine which users and groups can access specific resources and what actions can be performed within those resources (roles).

Confluent provides audit logs that record the runtime decisions of the permission checks that occur when users or applications attempt actions protected by ACLs and RBAC.

Confluent components also authorize access to Kafka using RBAC. A set of principals and role bindings is required for the Confluent components to function, and CFK generates these automatically as part of the automated deployment.

Note

RBAC with CFK can be enabled for new installations only. You cannot upgrade an existing cluster to enable RBAC.

The comprehensive security guide walks you through an end-to-end setup of role-based access control (RBAC) for Confluent with CFK. We recommend that you take the CustomResource spec and the steps outlined in that guide and adjust them for your environment.

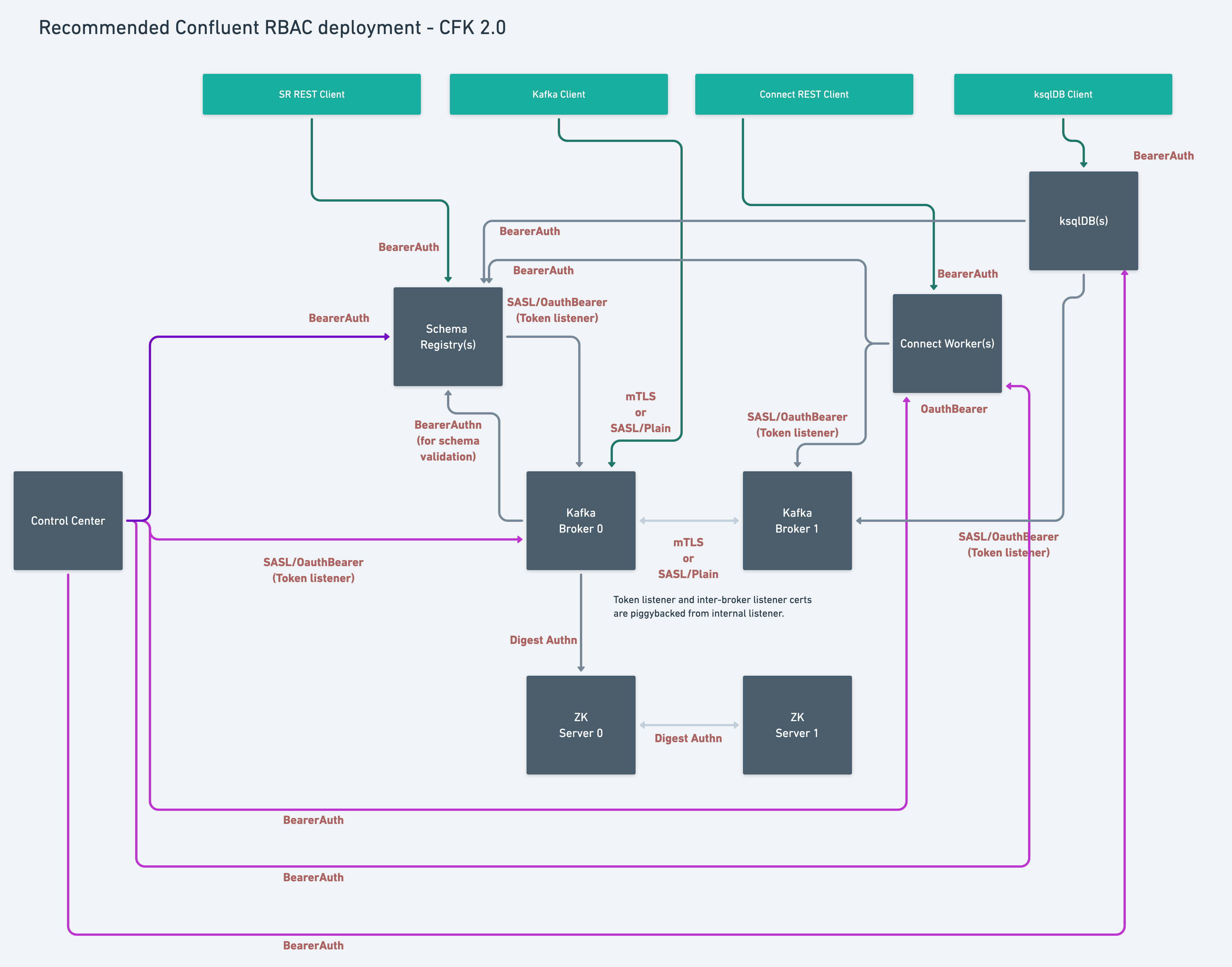

When you deploy with RBAC enabled, Confluent for Kubernetes automates the complete security setup. Here’s the end state architecture:

LDAP Server Requirements

The following are the requirements for enabling and using RBAC with CFK:

You must have an LDAP server that Confluent Platform can use for authentication.

Confluent REST API is automatically enabled for RBAC and cannot be disabled when RBAC is enabled.

Use the Kafka bootstrap endpoint (same as the MDS endpoint) to access Confluent REST API.

You must create the user principals in LDAP that will be used by Confluent Platform components. These are the default user principals:

Kafka: kafka/kafka-secret

Confluent REST API: erp/erp-secret

Confluent Control Center (Legacy): c3/c3-secret

ksqlDB: ksql/ksql-secret

Schema Registry: sr/sr-secret

Replicator: replicator/replicator-secret

Connect: connect/connect-secret

You must create an LDAP user and password for a user who has at least LDAP read-only permissions, so that Metadata Service (MDS) can query LDAP about other users. For example, you’d create a user mds with password Developer!

You must create a user for Confluent REST API in LDAP and provide the username and password.

Automated creation of rolebindings for Confluent Platform component principals

Confluent for Kubernetes automatically creates all required role bindings for Confluent Platform components as ConfluentRoleBinding custom resources (CRs).

Grant roles to a Confluent Control Center (Legacy) user to be able to administer Confluent Platform

Control Center (Legacy) users require separate roles for each Confluent Platform component and resource they wish to view and administer in the Control Center (Legacy) UI. Grant explicit permissions to the users as shown below.

In the following example, the testadmin principal is used as a Control Center (Legacy) UI user.

Grant permission to view and administer Confluent Platform components

This set of rolebinding CRs represents the permissions needed: https://github.com/confluentinc/confluent-kubernetes-examples/blob/master/security/production-secure-deploy/controlcenter-testadmin-rolebindings.yaml

kubectl apply -f https://raw.githubusercontent.com/confluentinc/confluent-kubernetes-examples/master/security/production-secure-deploy/controlcenter-testadmin-rolebindings.yaml

kubectl get confluentrolebinding
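For orientation, a single role binding for the testadmin principal might look like the sketch below. It writes one manifest to a local file so you can review it before applying it. The apiVersion, role name, resource name, and namespace are assumptions based on the linked examples file, and the output path is hypothetical; check the file above for the authoritative set of bindings.

```shell
set -eu

# Hypothetical output path; not part of CFK or the examples repository.
MANIFEST="${MANIFEST:-testadmin-clusteradmin-rb.yaml}"

# Write a single ConfluentRoleBinding manifest.
# Assumptions: the "confluent" namespace, the resource name "testadmin-rb",
# and the ClusterAdmin role are illustrative values, not requirements.
cat > "$MANIFEST" <<'EOF'
apiVersion: platform.confluent.io/v1beta1
kind: ConfluentRoleBinding
metadata:
  name: testadmin-rb
  namespace: confluent
spec:
  principal:
    type: user
    name: testadmin
  role: ClusterAdmin
EOF

echo "Wrote $MANIFEST; review it, then run: kubectl apply -f $MANIFEST"
```

Keeping the manifest in a file, rather than piping it straight to kubectl, makes it easier to review and to keep under version control alongside the rest of the deployment.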

Troubleshooting: Verify MDS configuration

Log into MDS to verify the correct configuration and to get the Kafka cluster ID. You need the Kafka cluster ID for component role bindings.

Replace https://<kafka_bootstrap_endpoint> in the commands below with the value you set in your config file ($VALUES_FILE) for global.dependencies.mds.endpoint.

Log into MDS as the Kafka super user:

confluent login \
  --url https://<kafka_bootstrap_endpoint> \
  --ca-cert-path <path-to-cacerts.pem>

You need to pass the --ca-cert-path flag if:

You have configured MDS to serve HTTPS traffic (kafka.spec.dependencies.mds.tls.enabled: true)

The CA used to issue the MDS certificates is not trusted by the system where you are running these commands.

Provide the Kafka username and password when prompted, in this example, kafka and kafka-secret.

You get a response to confirm a successful login.

Verify that the advertised listeners are correctly configured using the following command:

curl -ik \
  -u '<kafka-user>:<kafka-user-password>' \
  https://<kafka_bootstrap_endpoint>/security/1.0/activenodes/https

Get the Kafka cluster ID using one of the following commands:

confluent cluster describe --url https://<kafka_bootstrap_endpoint>

curl -ik \
  https://<kafka_bootstrap_endpoint>/v1/metadata/id
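If you want to capture the cluster ID in a script rather than read it off the terminal, a small helper along the following lines may be enough. It is a minimal sketch that assumes the metadata endpoint returns JSON carrying the ID under a "kafka-cluster" key; confirm that against your own MDS output. The function name and the sample ID are made up for illustration.

```shell
# Hypothetical helper: print the value of the "kafka-cluster" key found in
# the text read from stdin. Lines that do not contain the key print nothing.
extract_kafka_cluster_id() {
  sed -n 's/.*"kafka-cluster"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# Sample body with a made-up ID, shaped like the assumed response format.
sample='{"id":"","scope":{"path":[],"clusters":{"kafka-cluster":"abc123XYZ"}}}'

printf '%s\n' "$sample" | extract_kafka_cluster_id
```

Against a live endpoint, the same function could be fed from curl, for example: curl -sk https://<kafka_bootstrap_endpoint>/v1/metadata/id | extract_kafka_cluster_id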