Quick Start for Confluent Platform

Use this quick start to get up and running locally with Confluent Platform and its main components using Docker containers.

Ready to build?

Sign up for Confluent Cloud with your cloud marketplace account and unlock $1000 in free credits: AWS Marketplace, Google Cloud Marketplace, or Microsoft Azure Marketplace.

In this quick start, you create Apache Kafka® topics, and use Kafka Connect to generate mock data to those topics. You then go to Confluent Control Center to monitor your data.

Tip

These steps use Docker, but there are other ways to install Confluent Platform. For an example of how to download and configure a multi-broker cluster locally, without Docker, see Tutorial: Set Up a Multi-Broker Kafka Cluster. You can also use a TAR or ZIP Archive, or package managers like

systemd, Kubernetes, and Ansible. For more information, see Install Confluent Platform On-Premises.If you are interested in a fully managed cloud-native service for Apache Kafka®, sign up for Confluent Cloud and get started for free using the Confluent Cloud Quick Start.

Prerequisites

To run this quick start, you will need Docker and Docker Compose installed on a computer with a supported Operating System. Make sure Docker is running. For full prerequisites, expand the Detailed prerequisites section that follows.

Detailed prerequisites

A connection to the internet.

Operating System currently supported by Confluent Platform.

Note

You can run the Confluent Platform Quick Start on Windows if you are running Docker Desktop for Windows on WSL 2. For more information, see How to Run Confluent on Windows in Minutes.

Docker version 1.11 or later is installed and running.

On Mac: Docker memory is allocated minimally at 6 GB (Mac). When using Docker Desktop for Mac, the default Docker memory allocation is 2 GB. Change the default allocation to 6 GB in the Docker Desktop app by navigating to Preferences > Resources > Advanced.

Step 1: Download and start Confluent Platform

In this step, you start by downloading a Docker compose file. The docker-compose.yml file sets ports and Docker environment variables such as the replication factor and listener properties for Confluent Platform and its components. To learn more about the settings in this file, see Docker Image Configuration Reference for Confluent Platform. In addition, the Confluent Platform image specified in the file uses the new Kafka-based KRaft metadata service, which has many benefits. As of Confluent Platform 7.5, ZooKeeper is deprecated for new deployments. For more information, see KRaft Overview for Confluent Platform.

Download or copy the contents of the Confluent Platform all-in-one Docker Compose file, for example:

wget https://raw.githubusercontent.com/confluentinc/cp-all-in-one/7.9.7-post/cp-all-in-one/docker-compose.ymlNote

To use Apache ZooKeeper as the metadata service for your Kafka cluster, download the Confluent Platform ZooKeeper all-in-one Docker Compose file.

Start the Confluent Platform stack with the

-doption to run in detached mode:docker compose up -d

Note

If you using an Docker Compose V1, you need to use a dash in the

docker composecommands. For example:docker-compose up -d

To learn more, see Migrate to Compose V2.

Each Confluent Platform component starts in a separate container. Your output should resemble the following. Your output may vary slightly from these examples depending on your operating system.

✔ Container flink-jobmanager Started 5.9s ✔ Container ksql-datagen Started 10.7s ✔ Container rest-proxy Started 7.8s ✔ Container ksqldb-cli Started 10.3s ✔ Container control-center Started 10.7s ✔ Container ksqldb-server Started 9.1s ✔ Container connect Started 7.6s ✔ Container schema-registry Started 6.4s ✔ Container broker Started 5.7s ✔ Container flink-taskmanager Started 2.9s ✔ Container flink-sql-client Started

Verify that the services are up and running:

docker compose ps

Your output should resemble:

NAME IMAGE COMMAND SERVICE CREATED STATUS PORTS broker confluentinc/cp-server:7.9.0 "/etc/confluent/dock…" broker 2 minutes ago Up 2 minutes 0.0.0.0:9092->9092/tcp, 0.0.0.0:9101->9101/tcp connect cnfldemos/cp-server-connect-datagen:0.6.4-7.6.0 "/etc/confluent/dock…" connect 13 days ago Up 2 minutes 0.0.0.0:8083->8083/tcp, 9092/tcp control-center confluentinc/cp-enterprise-control-center:7.9.0 "/etc/confluent/dock…" control-center 13 days ago Up 2 minutes 0.0.0.0:9021->9021/tcp flink-jobmanager cnfldemos/flink-kafka:1.19.1-scala_2.12-java17 "/docker-entrypoint.…" flink-jobmanager 2 minutes ago Up 2 minutes 6123/tcp, 8081/tcp, 0.0.0.0:9081->9081/tcp flink-sql-client cnfldemos/flink-sql-client-kafka:1.19.1-scala_2.12-java17 "/docker-entrypoint.…" flink-sql-client 2 minutes ago Up 2 minutes 6123/tcp, 8081/tcp flink-taskmanager cnfldemos/flink-kafka:1.19.1-scala_2.12-java17 "/docker-entrypoint.…" flink-taskmanager 2 minutes ago Up 2 minutes 6123/tcp, 8081/tcp ksql-datagen confluentinc/ksqldb-examples:7.9.0 "bash -c 'echo Waiti…" ksql-datagen 13 days ago Up 2 minutes ksqldb-cli confluentinc/cp-ksqldb-cli:7.9.0 "/bin/sh" ksqldb-cli 13 days ago Up 2 minutes ksqldb-server confluentinc/cp-ksqldb-server:7.9.0 "/etc/confluent/dock…" ksqldb-server 13 days ago Up 2 minutes 0.0.0.0:8088->8088/tcp rest-proxy confluentinc/cp-kafka-rest:7.9.0 "/etc/confluent/dock…" rest-proxy 13 days ago Up 2 minutes 0.0.0.0:8082->8082/tcp schema-registry confluentinc/cp-schema-registry:7.9.0 "/etc/confluent/dock…" schema-registry 13 days ago Up 2 minutes 0.0.0.0:8081->8081/tcp

After a few minutes, if the state of any component isn’t Up, run the

docker-compose up -dcommand again, or trydocker-compose restart <image-name>, for example:docker compose restart control-center

Step 2: Create Kafka topics for storing your data

In Confluent Platform, real-time streaming events are stored in a topic, which is an append-only log, and the fundamental unit of organization for Kafka. To learn more about Kafka basics, see Kafka Introduction.

In this step, you create two topics by using Control Center (Legacy) for Confluent Platform. Control Center provides the features for building and monitoring production data pipelines and event streaming applications.

The topics are named pageviews and users. In later steps, you create connectors that produce data to these topics.

Create the pageviews topic

Confluent Control Center enables creating topics in the UI with a few clicks.

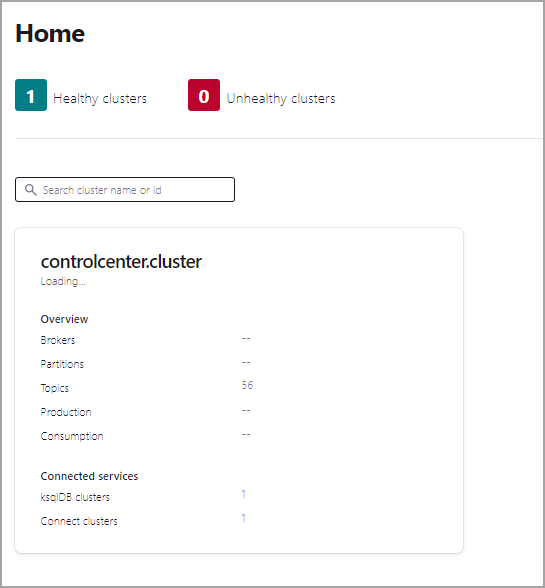

Navigate to Control Center at http://localhost:9021. It may take a minute or two for Control Center to start and load.

Click the controlcenter.cluster tile.

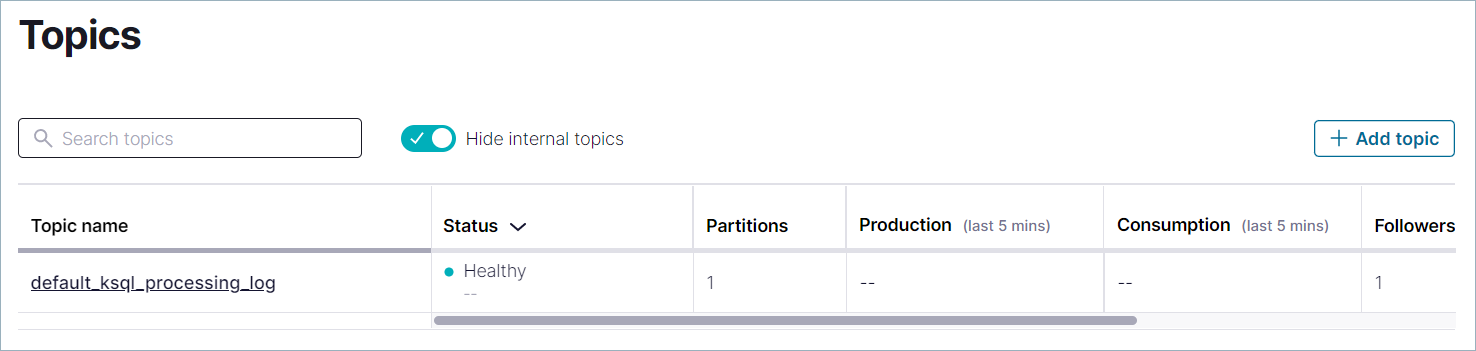

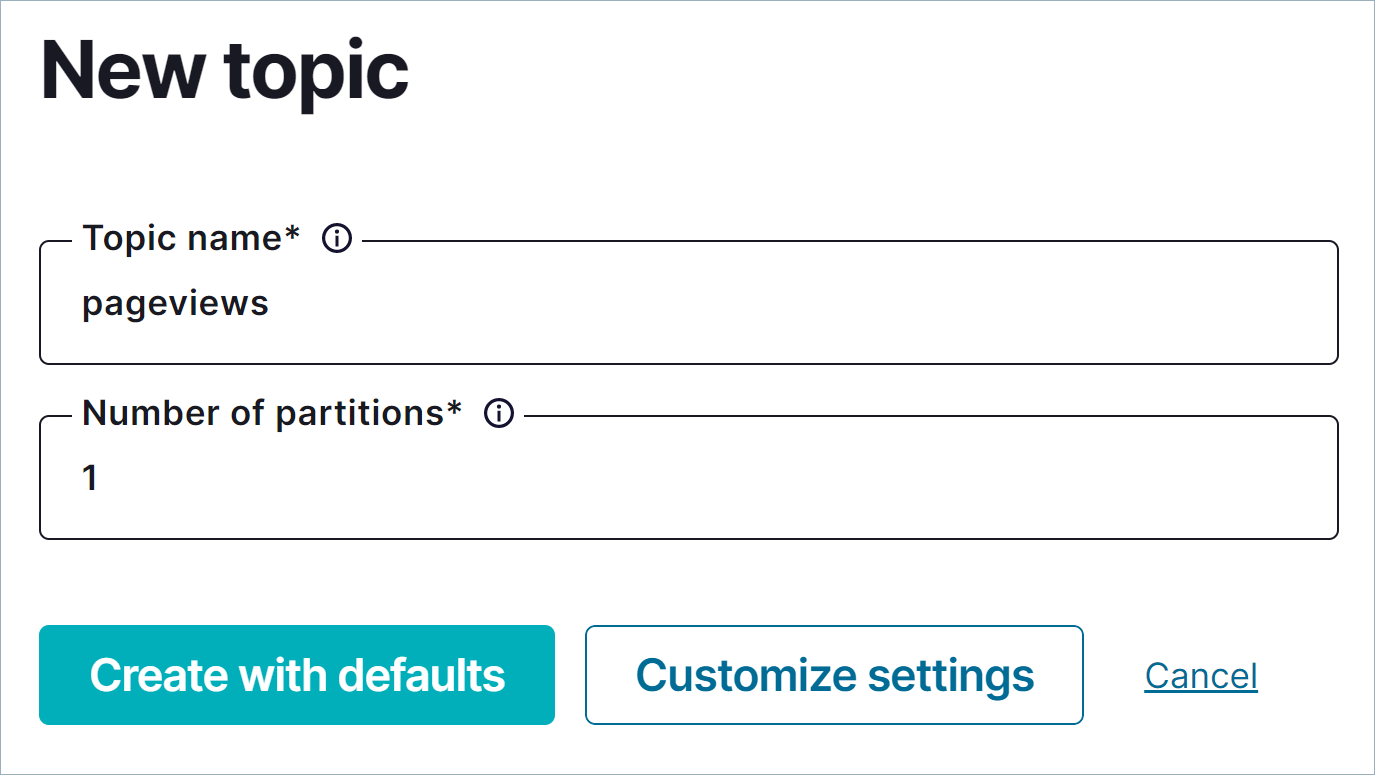

In the navigation menu, click Topics to open the topics list. Click + Add topic to start creating the

pageviewstopic.

In the Topic name field, enter

pageviewsand click Create with defaults. Topic names are case-sensitive.

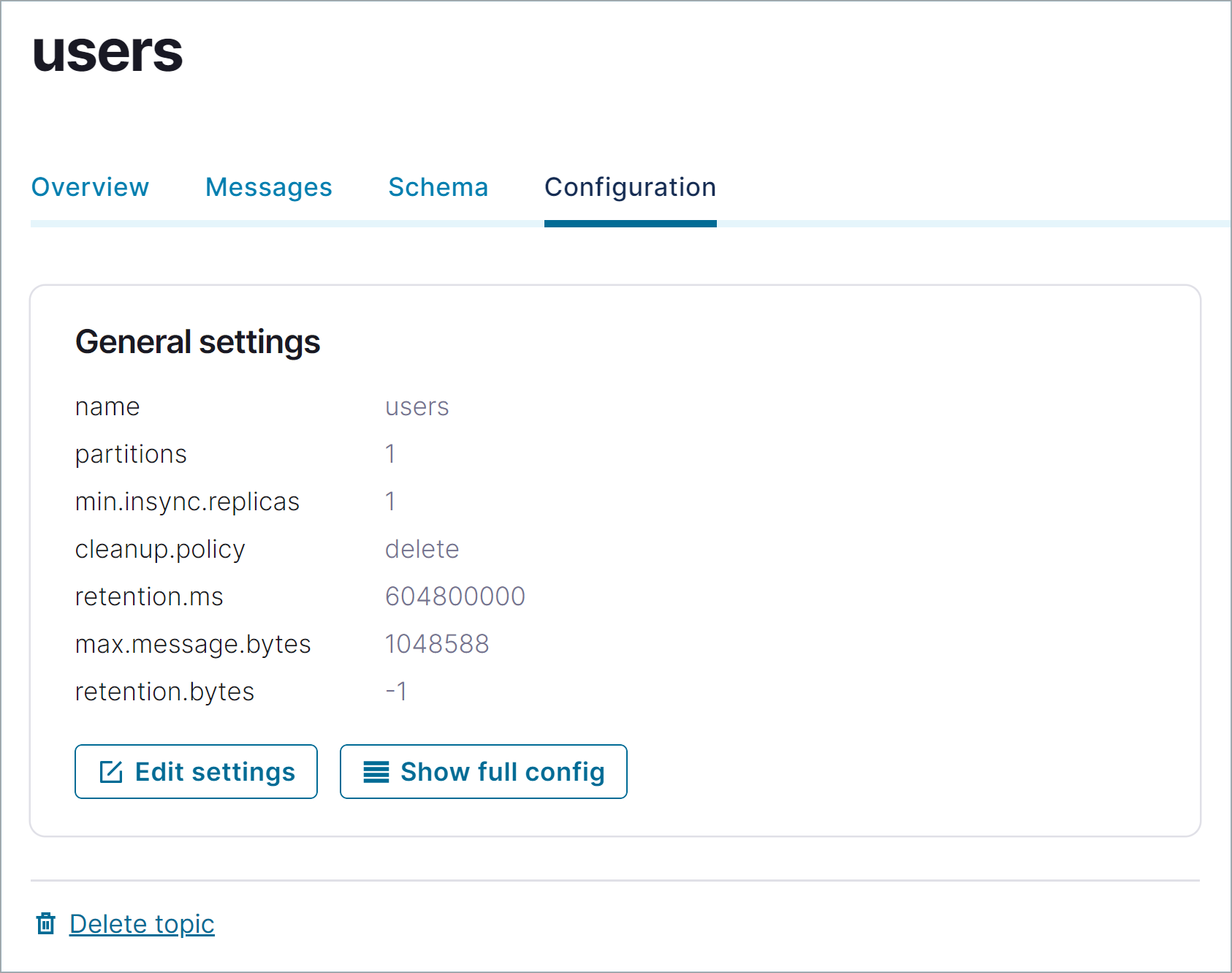

Create the users topic

Repeat the previous steps to create the users topic.

Step 3: Generate mock data

In Confluent Platform, you get events from an external source by using Kafka Connect. Connectors enable you to stream large volumes of data to and from your Kafka cluster. Confluent publishes many connectors for integrating with external systems, like MongoDB and Elasticsearch. For more information, see the Kafka Connect Overview page.

In this step, you run the Datagen Source Connector to generate mock data. The mock data is stored in the pageviews and users topics that you created previously. To learn more about installing connectors, see Install Self-Managed Connectors.

In the navigation menu, click Connect.

Click the

connect-defaultcluster in the Connect clusters list.Click Add connector to start creating a connector for pageviews data.

Select the

DatagenConnectortile.Tip

To see source connectors only, click Filter by category and select Sources.

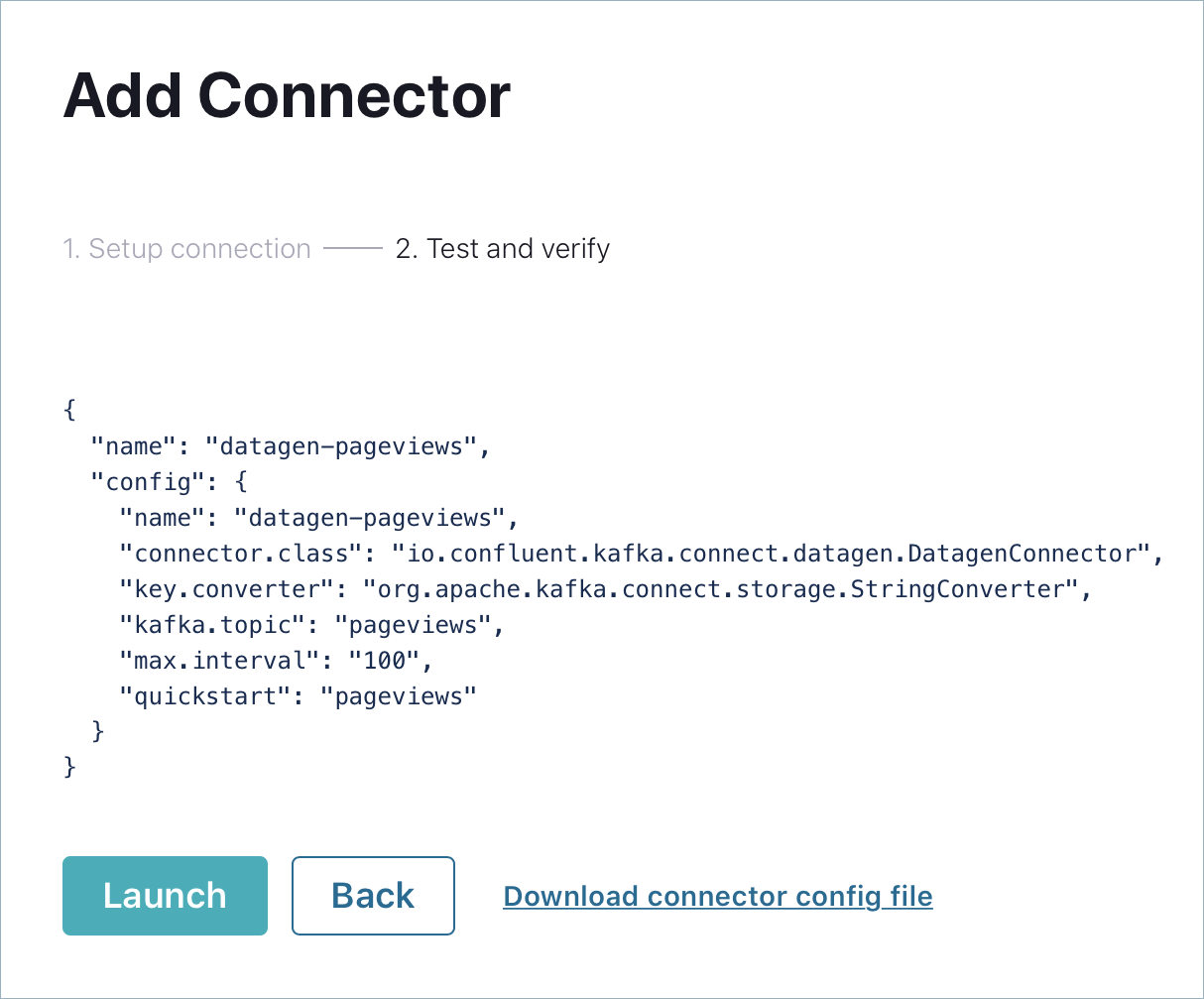

In the Name field, enter

datagen-pageviewsas the name of the connector.Enter the following configuration values in the following sections:

Common section:

Key converter class:

org.apache.kafka.connect.storage.StringConverter

General section:

kafka.topic: Choose

pageviewsfrom the dropdown menumax.interval:

100quickstart:

pageviews

Click Next to review the connector configuration. When you’re satisfied with the settings, click Launch.

Run a second instance of the Datagen Source connector connector to produce mock data to the users topic.

In the navigation menu, click Connect.

In the Connect clusters list, click

connect-default.Click Add connector.

Select the

DatagenConnectortile.In the Name field, enter

datagen-usersas the name of the connector.Enter the following configuration values:

Common section:

Key converter class:

org.apache.kafka.connect.storage.StringConverter

General section:

kafka.topic: Choose

usersfrom the dropdown menumax.interval:

1000quickstart:

users

Click Next to review the connector configuration. When you’re satisfied with the settings, click Launch.

In the navigation menu, click Topics and in the list, click users.

Click Messages to confirm that the

datagen-usersconnector is producing data to theuserstopic.

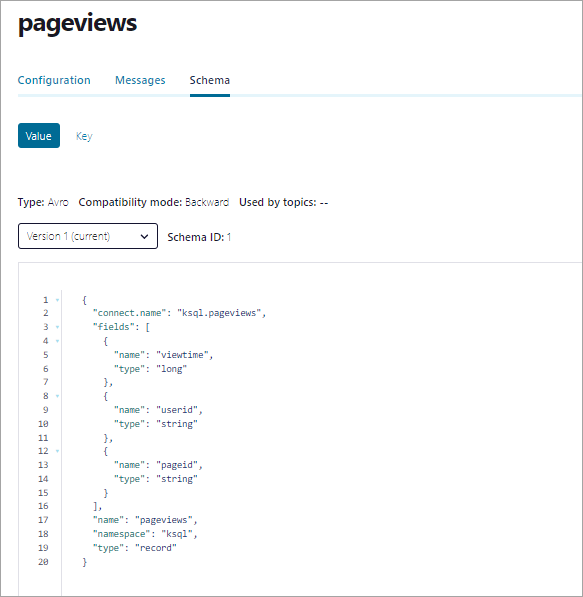

Inspect the schema of a topic

By default, the Datagen Source Connector produces data in Avro format, which defines the schemas of pageviews and users messages.

Schema Registry ensures that messages sent to your cluster have the correct schema. For more information, see Schema Registry Documentation.

Step 4: Uninstall and clean up

If you’re done working with Confluent Platform, you can stop and remove the Docker containers and images.

Run the following command to stop the Docker containers for Confluent:

docker-compose stopAfter stopping the Docker containers, run the following commands to prune the Docker system. Running these commands deletes containers, networks, volumes, and images, freeing up disk space:

docker system prune -a --volumes --filter "label=io.confluent.docker"

For more information, refer to the official Docker documentation.