Confluent Enterprise Quick Start

This quick start shows you how to get up and running with Confluent Enterprise and its main components. It covers the basic capabilities of Confluent Enterprise, including topic management in Control Center and stream processing with KSQL. You will create Kafka topics, write streaming queries on those topics with KSQL, and then use Control Center to monitor and analyze the streaming queries.

You can also run an automated version of this quick start designed for Confluent Platform local installs.

Important

Java 1.7 and 1.8 are supported in this version of Confluent Platform (Java 1.9 is currently not supported). You should run with the Garbage-First (G1) garbage collector. For more information, see Supported Versions and Interoperability.
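Before downloading, it can help to confirm which Java version is on your PATH. The following is a minimal sketch, not part of Confluent Platform: the helper names java_version_of and is_supported_java are hypothetical, and the parsing assumes a banner line of the form java version "1.8.0_151" (or the openjdk equivalent).

```shell
#!/usr/bin/env bash
# Sketch: extract the "major.minor" part of a `java -version` banner line.
# Assumed input line shape: java version "1.8.0_151"
java_version_of() {
  sed -n 's/.*version "\([0-9][0-9]*\)\.\([0-9][0-9]*\).*/\1.\2/p' | head -n 1
}

# Succeeds only for the versions this quick start lists as supported.
is_supported_java() {
  case "$1" in
    1.7|1.8) return 0 ;;
    *)       return 1 ;;
  esac
}

# Usage (java prints its version banner to stderr, hence 2>&1):
#   v=$(java -version 2>&1 | java_version_of)
#   if is_supported_java "$v"; then echo "Java $v is supported"; else echo "Java $v is not supported"; fi
```

Any banner the pattern does not recognize yields an empty version string, which the check treats as unsupported.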

Step 1: Download and Start Confluent Platform

Go to the downloads page and choose Confluent Platform Enterprise.

Provide your name and email and select Download.

Decompress the file. You should have these directories:

| Folder | Description |
|---|---|
| /bin/ | Driver scripts for starting and stopping services |
| /etc/ | Configuration files |
| /lib/ | Systemd services |
| /logs/ | Log files |
| /share/ | Jars and licenses |
| /src/ | Source files that require a platform-dependent build |

Create a folder named <path-to-confluent>/ui/ and download the experimental KSQL web interface file to this location. This quick start uses the preview KSQL web interface to run KSQL commands for stream processing.

Important

The preview KSQL web interface is meant for development purposes in non-production environments. The final version of the interface will be available in a future version of Confluent Control Center.

    $ mkdir <path-to-confluent>/ui/ && cd <path-to-confluent>/ui/
    $ curl -O https://s3.amazonaws.com/ksql-experimental-ui/ksql-experimental-ui-0.1.war

Start Confluent Platform using the Confluent CLI. This command will start all of the Confluent Platform components, including Kafka, ZooKeeper, Schema Registry, HTTP REST Proxy for Apache Kafka, Kafka Connect, KSQL, and Control Center.

Tip

To add the bin directory to your PATH, run: export PATH=<path-to-confluent>/bin:$PATH

    $ <path-to-confluent>/bin/confluent start

Your output should resemble:

    Starting zookeeper
    zookeeper is [UP]
    Starting kafka
    kafka is [UP]
    Starting schema-registry
    schema-registry is [UP]
    Starting kafka-rest
    kafka-rest is [UP]
    Starting connect
    connect is [UP]
    Starting ksql
    ksql is [UP]
    Starting control-center
    control-center is [UP]
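If you script the startup, you may want to check this output rather than read it by eye. The sketch below scans status lines of the form shown above and reports any service that is not [UP]. The helper name all_services_up is hypothetical, and piping in the output of confluent status assumes that command prints lines in the same "<service> is [STATE]" shape.

```shell
#!/usr/bin/env bash
# Sketch: read "<service> is [STATE]" lines on stdin and report any service
# whose state is not UP. Returns 0 only when at least one status line was
# seen and every one of them is UP. Other lines ("Starting ...") are ignored.
all_services_up() {
  awk '
    / is \[[A-Z]+\]$/ {
      seen++
      if ($NF != "[UP]") { print "not up: " $1; bad++ }
    }
    END { exit ((seen > 0 && bad == 0) ? 0 : 1) }
  '
}

# Usage (assumes the bin directory is on your PATH):
#   if confluent status | all_services_up; then echo "all services are up"; fi
```

Treating empty input as a failure is a deliberate choice here, so that a missing or misspelled command does not pass as a healthy platform.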

Step 2: Create Kafka Topics

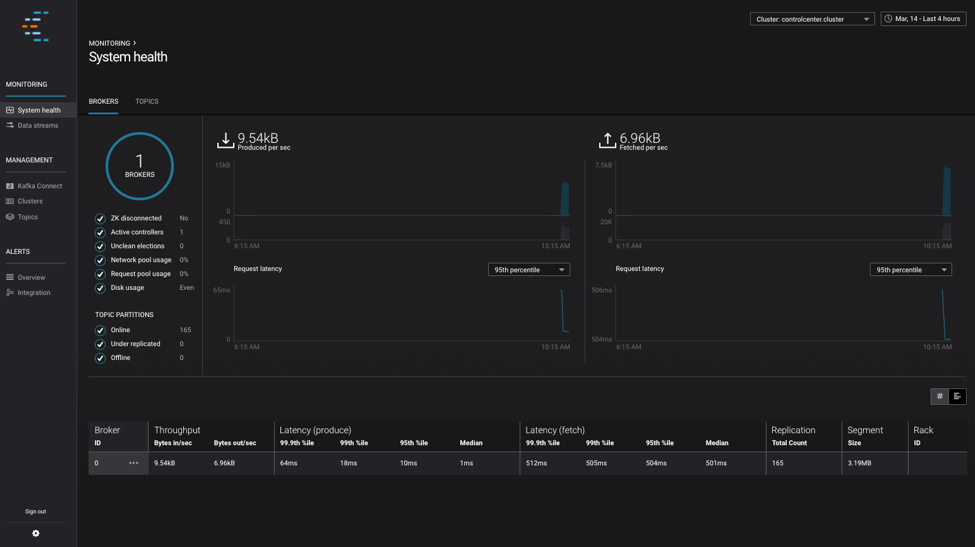

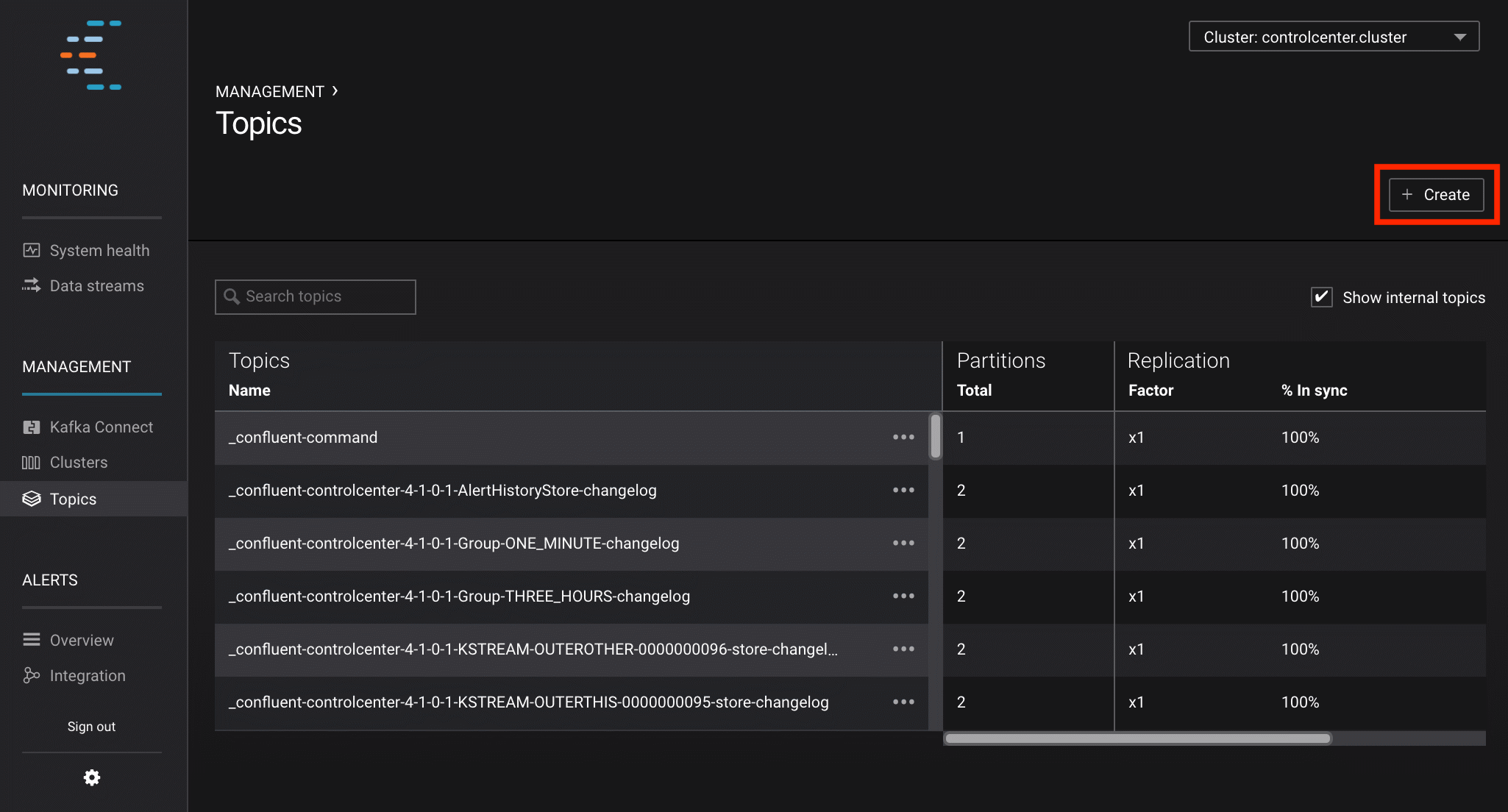

In this step Kafka topics are created by using the Confluent Control Center. Confluent Control Center provides the functionality for building and monitoring production data pipelines and streaming applications.

Navigate to the Control Center web interface at http://localhost:9021/.

Select Management -> Topics and click Create in the upper-right corner.

Create a topic named pageviews and click Create with defaults.

Create a topic named users and click Create with defaults.
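If you prefer the terminal, the same two topics can be created with the kafka-topics tool that ships in the bin directory. This is a sketch under assumptions: ZooKeeper listening on localhost:2181, the bin directory on your PATH, and one partition with a replication factor of 1, which may differ from what Create with defaults chooses in Control Center. The helper name create_topic_cmd is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: print a kafka-topics command that creates one topic.
# Partition and replication counts of 1 are assumptions for a single-broker
# local install, not the documented Control Center defaults.
create_topic_cmd() {
  printf 'kafka-topics --create --zookeeper %s --topic %s --partitions 1 --replication-factor 1\n' \
    "${ZOOKEEPER:-localhost:2181}" "$1"
}

# Print the commands for both topics used in this quick start.
for topic in pageviews users; do
  create_topic_cmd "$topic"
done
```

The script only prints the commands so you can review them first; pipe its output to sh to run them.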

Step 3: Create Sample Data

In this step you create sample data for the Kafka topics pageviews and users by using ksql-datagen, a data generator that is included with the Confluent Platform installation.

Tip

The datagen runs as a long-running process in your terminal. Run each datagen step in a separate terminal.

Produce Kafka data to the pageviews topic using the data generator. The following example continuously generates data with a value in DELIMITED format.

    $ <path-to-confluent>/bin/ksql-datagen quickstart=pageviews format=delimited topic=pageviews maxInterval=100 \
        propertiesFile=<path-to-confluent>/etc/ksql/datagen.properties

Produce Kafka data to the users topic using the data generator. The following example continuously generates data with a value in JSON format.

    $ <path-to-confluent>/bin/ksql-datagen quickstart=users format=json topic=users maxInterval=1000 \
        propertiesFile=<path-to-confluent>/etc/ksql/datagen.properties
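To confirm that data is arriving, you can read a few records back from the pageviews topic and check their shape. The sketch below assumes each DELIMITED value has three comma-separated fields with a numeric first field, matching the viewtime, userid, pageid columns declared for the stream in Step 4. The helper name is_pageview_record is hypothetical, and the broker address localhost:9092 is an assumption for a local install.

```shell
#!/usr/bin/env bash
# Sketch: succeed when a line looks like a DELIMITED pageviews record:
# three comma-separated fields, the first one made of digits only.
is_pageview_record() {
  printf '%s\n' "$1" | grep -Eq '^[0-9]+,[^,]+,[^,]+$'
}

# Usage (assumes a broker on localhost:9092 and the bin directory on PATH):
#   kafka-console-consumer --bootstrap-server localhost:9092 --topic pageviews \
#       --from-beginning --max-messages 5 |
#     while IFS= read -r line; do
#       is_pageview_record "$line" || echo "unexpected record: $line"
#     done
```

If the loop prints nothing, every sampled record matched the expected shape.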

Step 4: Create and Write to a Stream and Table using KSQL

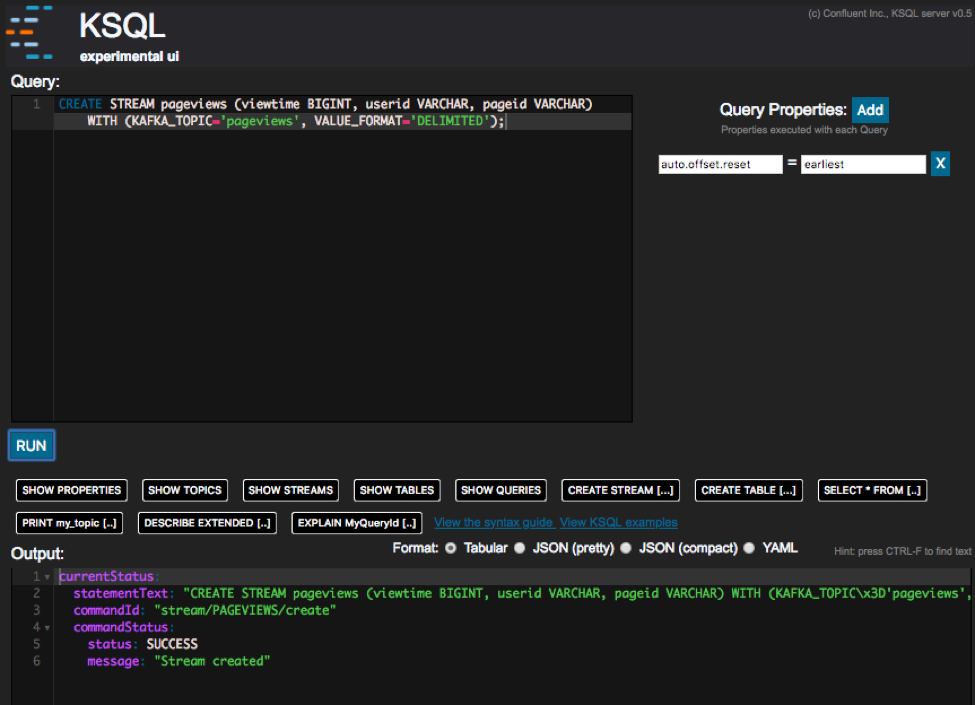

In this step, KSQL queries are run on the pageviews and users topics that were created and populated in the previous steps. The KSQL commands are run using the preview KSQL web interface at http://localhost:8088/index.html. Enter these commands in the Query field and click RUN. Each command must be entered separately.

Important

- Confluent Platform and the preview KSQL web interface must be installed and running.

- You can also run these commands using the KSQL CLI from your terminal with this command: <path-to-confluent>/bin/ksql http://localhost:8088

- All KSQL commands must end with a closing semicolon (;).

Create Streams and Tables

In this step, KSQL is used to create streams and tables for the pageviews and users topics.

Create a stream (pageviews) from the Kafka topic pageviews, specifying the value_format of DELIMITED.

    CREATE STREAM pageviews (viewtime BIGINT, userid VARCHAR, pageid VARCHAR)
      WITH (KAFKA_TOPIC='pageviews', VALUE_FORMAT='DELIMITED');

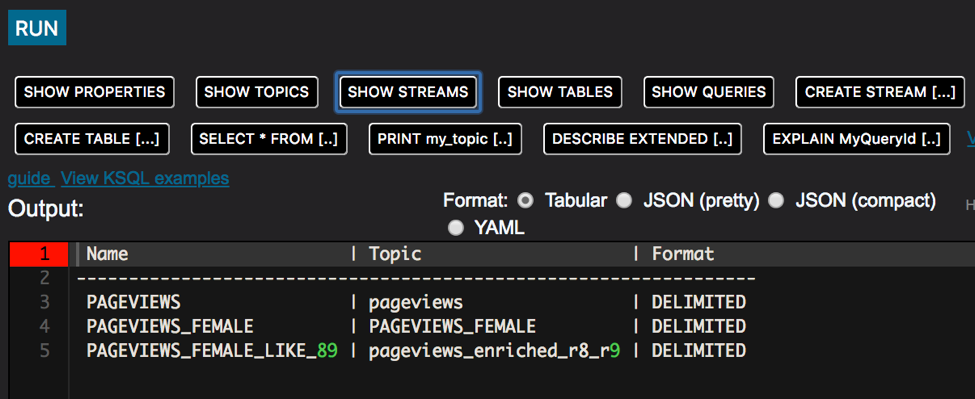

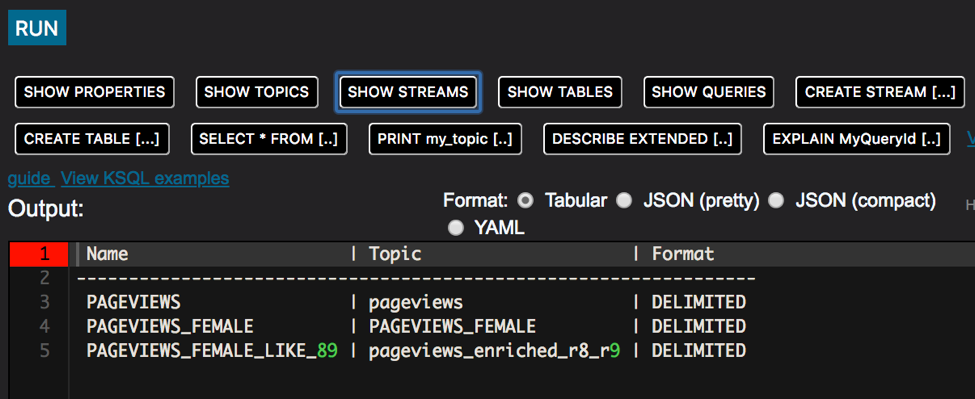

Tip: Click the SHOW STREAMS predefined query to view your streams.

Create a table (users) with several columns from the Kafka topic users, with the value_format of JSON.

    CREATE TABLE users (registertime BIGINT, gender VARCHAR, regionid VARCHAR, userid VARCHAR,
      interests array<VARCHAR>, contact_info map<VARCHAR, VARCHAR>)
      WITH (KAFKA_TOPIC='users', VALUE_FORMAT='JSON', KEY = 'userid');

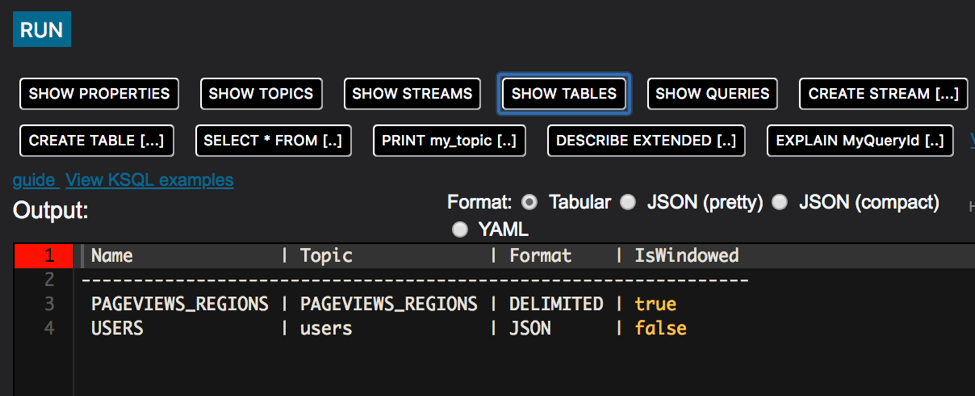

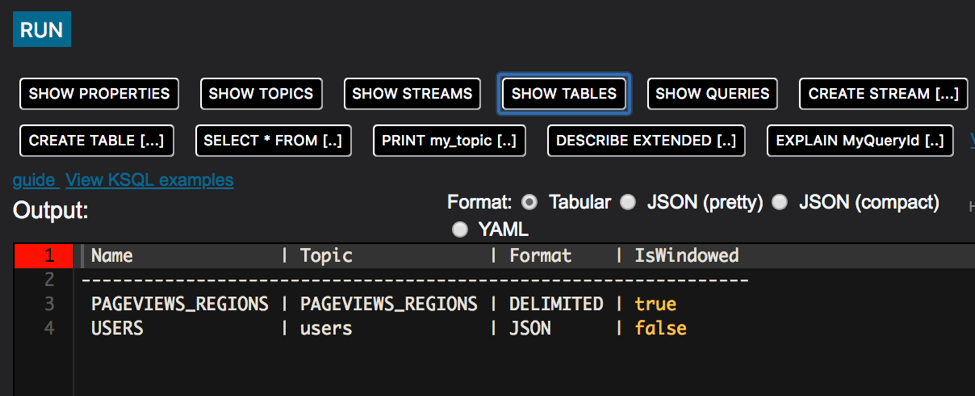

Tip: Click the SHOW TABLES predefined query to view your tables.

Write Queries

These examples write queries using KSQL.

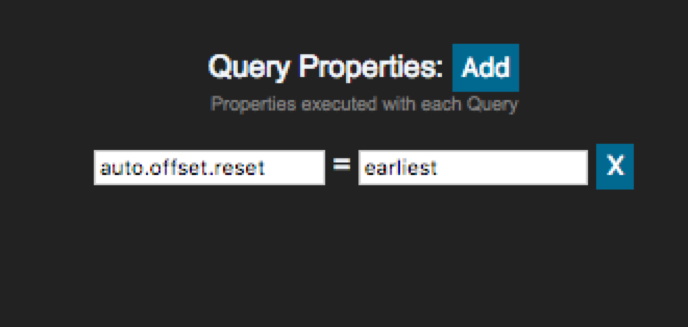

Add the custom query property earliest for the auto.offset.reset parameter. This instructs KSQL queries to read all available topic data from the beginning. This configuration is used for each subsequent query. For more information, see the KSQL configuration parameter reference.
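Outside the web interface, the same setting has to travel with your session or request. In the KSQL CLI it is the statement SET 'auto.offset.reset'='earliest';. The sketch below shows another route, assuming the KSQL server accepts a JSON request body with ksql and streamsProperties fields. The property key ksql.streams.auto.offset.reset, the /query endpoint, the Content-Type header, and the helper name ksql_payload are all assumptions for illustration, so verify them against the REST API reference for your version.

```shell
#!/usr/bin/env bash
# Sketch: build a JSON request body that carries one KSQL statement plus the
# auto.offset.reset setting. Handles single-line statements only.
# ksql_payload is a hypothetical helper name, not part of KSQL.
ksql_payload() {
  local stmt="$1" reset="${2:-earliest}" escaped
  case "$stmt" in
    *\;) ;;  # KSQL statements must end with a semicolon
    *) echo "statement must end with ';'" >&2; return 1 ;;
  esac
  # Escape backslashes first, then double quotes, so the statement is valid JSON.
  escaped=$(printf '%s' "$stmt" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g')
  printf '{"ksql": "%s", "streamsProperties": {"ksql.streams.auto.offset.reset": "%s"}}\n' \
    "$escaped" "$reset"
}

# Usage (assumes the KSQL server from this quick start at localhost:8088):
#   ksql_payload "SELECT pageid FROM pageviews LIMIT 3;" |
#     curl -s -X POST -H "Content-Type: application/json" --data @- http://localhost:8088/query
```

The second argument defaults to earliest, so calling the helper with only a statement matches the setting described above.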

Create a query that returns data from a stream with the results limited to three rows.

SELECT pageid FROM pageviews LIMIT 3;

Create a persistent query that filters for female users. The results from this query are written to the Kafka PAGEVIEWS_FEMALE topic. This query enriches the pageviews STREAM by doing a LEFT JOIN with the users TABLE on the user ID, where a condition (gender = 'FEMALE') is met.

    CREATE STREAM pageviews_female AS
      SELECT users.userid AS userid, pageid, regionid, gender
      FROM pageviews LEFT JOIN users ON pageviews.userid = users.userid
      WHERE gender = 'FEMALE';

Create a persistent query that filters on regionid using LIKE. Results from this query are written to a Kafka topic named pageviews_enriched_r8_r9.

    CREATE STREAM pageviews_female_like_89
      WITH (kafka_topic='pageviews_enriched_r8_r9', value_format='DELIMITED') AS
      SELECT * FROM pageviews_female
      WHERE regionid LIKE '%_8' OR regionid LIKE '%_9';

Tip: Click the SHOW STREAMS predefined query to view your streams.

Create a persistent query that counts the pageviews for each region and gender combination in a tumbling window of 30 seconds when the count is greater than 1. Because the query groups and counts, the result is a table rather than a stream. Results from this query are written to a Kafka topic called PAGEVIEWS_REGIONS.

    CREATE TABLE pageviews_regions AS
      SELECT gender, regionid, COUNT(*) AS numusers
      FROM pageviews_female
      WINDOW TUMBLING (size 30 second)
      GROUP BY gender, regionid
      HAVING COUNT(*) > 1;
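A tumbling window divides time into fixed-size buckets that do not overlap, so every record is counted in exactly one bucket. The sketch below illustrates that idea with plain integer arithmetic. It assumes timestamps in milliseconds and window boundaries aligned to multiples of the window size since the epoch; the helper name window_start is hypothetical and this is not how KSQL is implemented internally.

```shell
#!/usr/bin/env bash
# Sketch: map an event timestamp (milliseconds) to the start of the tumbling
# window that contains it, by rounding down to a multiple of the window size.
window_start() {
  local ts_ms="$1" size_ms="$2"
  echo $(( ts_ms - ts_ms % size_ms ))
}

# With 30-second windows, 60000 and 70000 share the window starting at 60000,
# while 95000 falls in the window starting at 90000.
for ts in 60000 70000 95000; do
  echo "$ts -> window starting at $(window_start "$ts" 30000)"
done
```

Records with the same gender, regionid, and window start are counted together, and the HAVING clause then keeps only the groups whose count exceeds 1.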

Tip: Click the SHOW TABLES predefined query to view your tables.

Step 5: View Your Stream in Control Center

From the Control Center interface you can view all of your streaming KSQL queries.

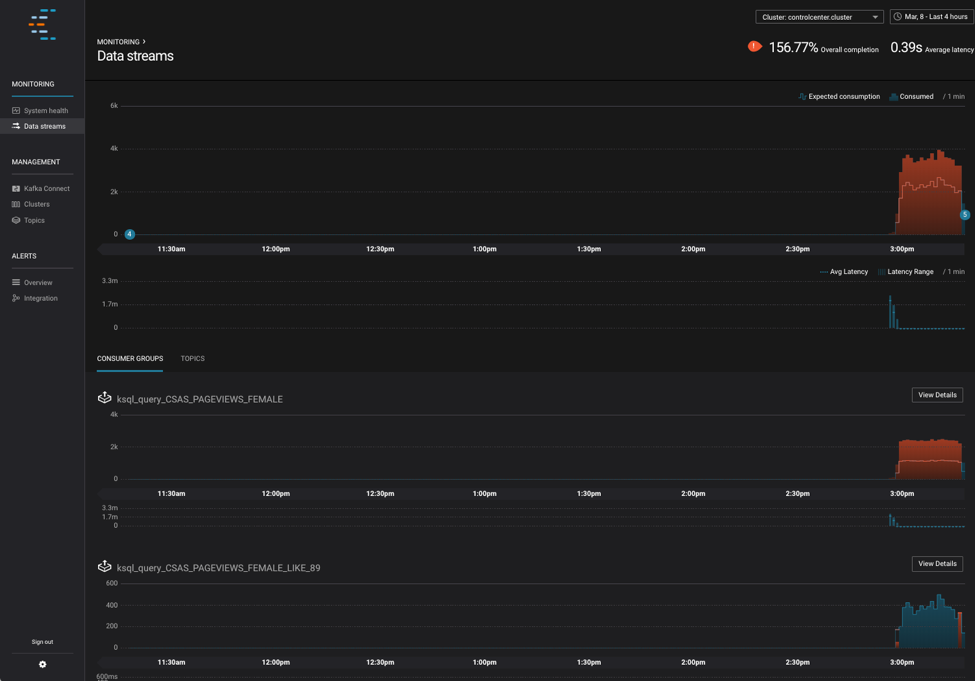

In the Control Center web interface, navigate to the Monitoring -> Data streams tab at http://localhost:9021/monitoring/streams. The monitoring page shows the total number of messages produced and consumed on the cluster. You can scroll down to see more details on the consumer groups for your queries.

Tip

Depending on your machine, these charts may take a few minutes to populate and you might need to refresh your browser.

Here are some cool things to try:

- View the consumers that have been created by KSQL

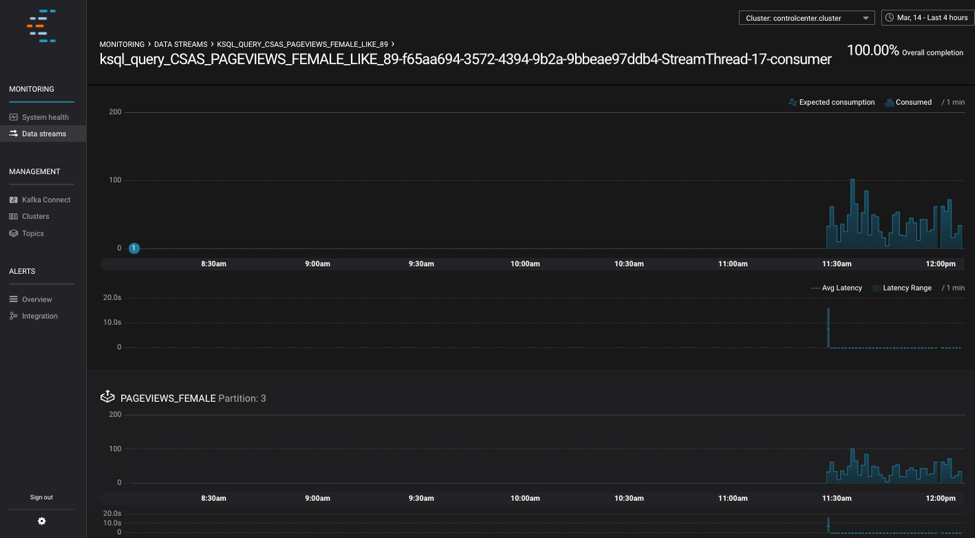

Click the View Details button for the ksql_query_CSAS_PAGEVIEWS_FEMALE_LIKE_89 stream. This graph shows the messages being consumed by the stream query.

For more information about Control Center, see Confluent Control Center.

Next Steps

Learn more about the components shown in this quick start:

- KSQL documentation: Learn about processing your data with KSQL for use cases such as streaming ETL, real-time monitoring, and anomaly detection. You can also learn how to use KSQL with this collection of scripted demos.

- Kafka Streams documentation: Learn how to build stream processing applications in Java or Scala.

- Kafka Connect documentation: Learn how to integrate Kafka with other systems and download ready-to-use connectors to easily move data in and out of Kafka in real time.

- Kafka Clients documentation: Learn how to read and write data to and from Kafka using programming languages such as Go, Python, .NET, and C/C++.

- Tutorials and Resources: Try out the Confluent Platform tutorials and examples, watch demos and screencasts, and learn with white papers and blogs.