Custom Connectors for Confluent Cloud Limitations and Support

This page describes the limitations of custom connectors and the support available for them. Review all the information on this page before you proceed with the quick start.

Limitations

The following are limitations for custom connectors:

You can only create custom connectors in selected Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP) regions supported by Confluent Cloud. For the full list, see Supported AWS, Azure and GCP regions on this page.

The cluster can use public internet endpoints. Alternatively, Dedicated and Enterprise AWS clusters can use PrivateLink egress access points. Public egress IP addresses (static egress IPs) are not supported for custom connectors.

Custom Connect creates three or four role bindings when you provision a custom connector. These role bindings grant the connector the permissions it needs to operate, and they count toward your Confluent Cloud organization's role binding quota.

You can only run Java-based Apache Kafka® custom connectors in Confluent Cloud.

Organizations are limited to 30 custom connectors and 100 plugins.

Up to 250 tasks are supported per custom connector.
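As a rough planning aid, the numeric limits above, together with the three or four role bindings created per connector, can be checked before you provision anything. The following Python sketch is a hypothetical client-side helper, not part of any Confluent tool: check_plan and role_binding_range are illustrative names, and the constants simply restate the numbers on this page, which may change.

```python
# Hypothetical planning helper; the limits restate this page and may change.
MAX_CUSTOM_CONNECTORS = 30            # per organization
MAX_PLUGINS = 100                     # per organization
MAX_TASKS_PER_CONNECTOR = 250         # per custom connector
ROLE_BINDINGS_PER_CONNECTOR = (3, 4)  # created when a connector is provisioned


def check_plan(num_plugins: int, tasks_per_connector: list[int]) -> list[str]:
    """Return a list of limit violations for a planned rollout.

    tasks_per_connector holds the planned tasks.max value for each connector.
    An empty list means the plan fits within the documented limits.
    """
    problems = []
    if len(tasks_per_connector) > MAX_CUSTOM_CONNECTORS:
        problems.append(
            f"{len(tasks_per_connector)} connectors exceeds the limit of {MAX_CUSTOM_CONNECTORS}"
        )
    if num_plugins > MAX_PLUGINS:
        problems.append(f"{num_plugins} plugins exceeds the limit of {MAX_PLUGINS}")
    for index, tasks in enumerate(tasks_per_connector):
        if tasks > MAX_TASKS_PER_CONNECTOR:
            problems.append(
                f"connector #{index} requests {tasks} tasks; the limit is {MAX_TASKS_PER_CONNECTOR}"
            )
    return problems


def role_binding_range(num_connectors: int) -> tuple[int, int]:
    """Minimum and maximum role bindings consumed by num_connectors custom connectors."""
    low, high = ROLE_BINDINGS_PER_CONNECTOR
    return num_connectors * low, num_connectors * high


if __name__ == "__main__":
    print(check_plan(num_plugins=12, tasks_per_connector=[4, 260]))
    print(role_binding_range(30))  # (90, 120)
```

Because role bindings count toward the organization-wide quota, the upper bound returned by role_binding_range is the safer number to budget against.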

Custom connectors cannot write data to a local file system in Confluent Cloud. If you configure your custom connector to write to the local file system, it will fail.

PrivateLink egress connectivity for custom connectors is not supported with an existing Confluent Cloud network configuration. It requires a dedicated, newly provisioned serverless egress PrivateLink gateway and access point.

You cannot restart custom connectors using the Cloud Console or the Restart API.

Adhere to the connector naming conventions:

Do not exceed 64 characters.

A connector name can contain Unicode letters, numbers, marks, and the following special characters: period (.), comma (,), ampersand (&), underscore (_), plus (+), pipe (|), square brackets ([ ]), and dash (-). You can also use spaces, but using dashes (-) instead makes it easier to reference the connector programmatically.
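The naming conventions can be pre-checked on the client before you submit a configuration. The Python sketch below is a hypothetical helper (is_valid_connector_name is an illustrative name) and rests on stated assumptions: "letters, numbers, and marks" is read as the Unicode general categories L*, N*, and M*; the 64-character limit is counted in Unicode code points; spaces are allowed, as the text says; and an empty name is treated as invalid. Confluent Cloud's own validation remains authoritative.

```python
import unicodedata

# Special characters listed in the naming conventions, plus the space character.
ALLOWED_SPECIAL = set(".,&_+|[]- ")
MAX_NAME_LENGTH = 64  # assumption: counted in Unicode code points


def is_valid_connector_name(name: str) -> bool:
    """Client-side sketch of the documented connector naming rules."""
    if not name or len(name) > MAX_NAME_LENGTH:
        return False
    for ch in name:
        category = unicodedata.category(ch)  # for example 'Lu', 'Nd', 'Mn'
        if category[0] in ("L", "N", "M"):
            continue  # Unicode letter, number, or mark
        if ch in ALLOWED_SPECIAL:
            continue
        return False  # any other character breaks the convention
    return True


if __name__ == "__main__":
    for candidate in ["orders-sink-v2", "Connecteur données [prod]", "bad/name"]:
        print(candidate, is_valid_connector_name(candidate))
```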

Confluent and Partner support

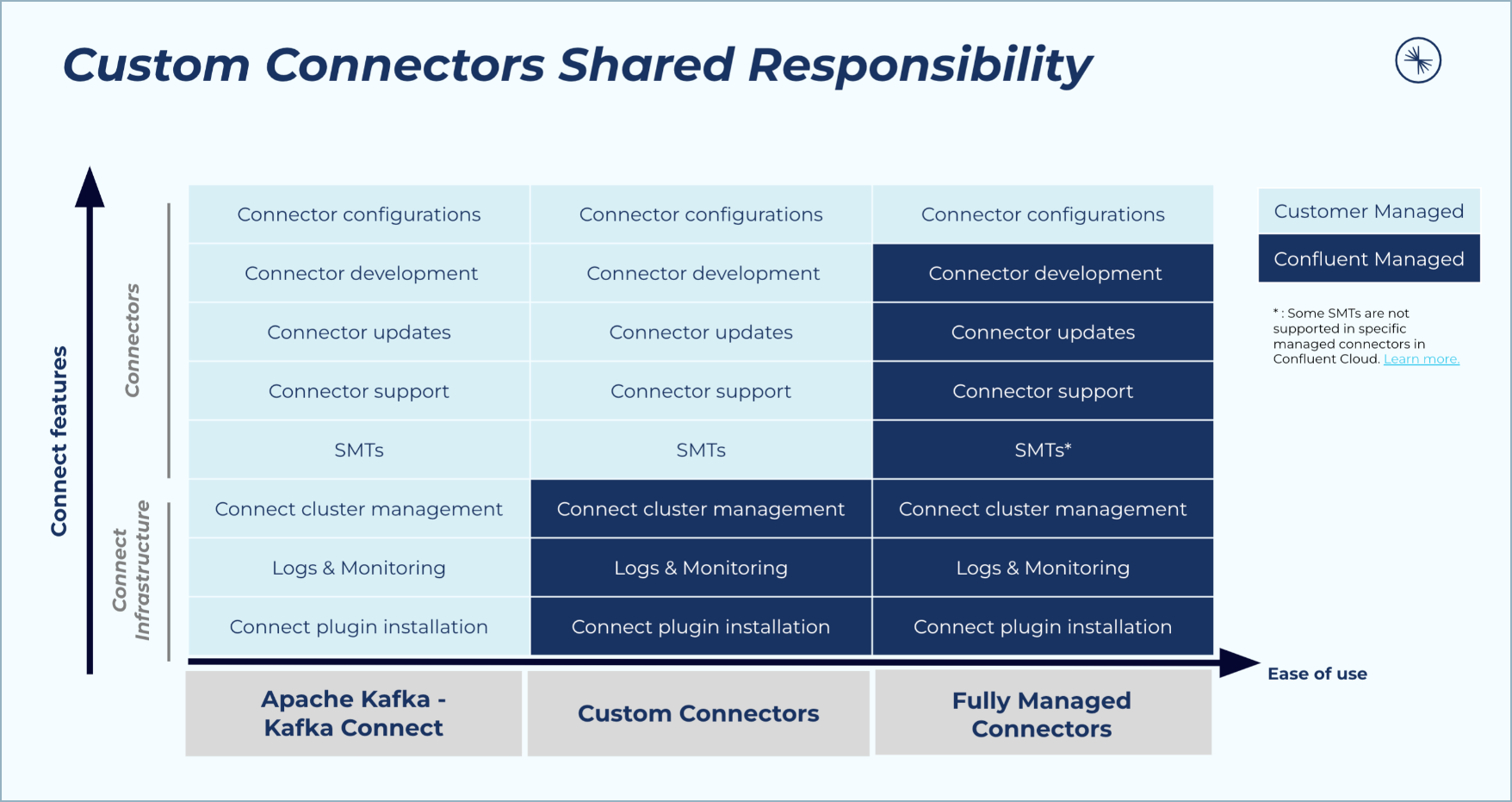

You are responsible for the connectors you upload through the Custom Connector feature.

Customers who upload connectors to Confluent Cloud through the Custom Connector feature are responsible for managing and supporting those connectors. Confluent does not provide support for custom connectors.

Partner-built connectors that are published on Confluent Marketplace may not be tested or certified for Custom Connector functionality on Confluent Cloud. Confluent does not provide Enterprise support for customers who choose to provision a custom connector using a Partner-built connector that is not certified by Confluent.

Confluent-built connectors are not tested or certified for Custom Connector functionality in Confluent Cloud. Confluent does not provide Enterprise support for customers who choose to provision a custom connector using a Confluent-built plugin.

The following table provides additional details about Enterprise support.

Enterprise Support for Custom Connectors

| Connector Type | Confluent Cloud Infrastructure Support | Confluent Connector Support |
|---|---|---|
| Customer-built plug-in/connector | Confluent | Not supported by Confluent. |
| Partner-built plug-in/connector | Confluent | |
| Confluent-built plug-in/connector | Confluent | When used as custom connectors, no type of Confluent-built connector (Premium, Commercial, Community, or Open Source) is supported by Confluent, regardless of whether you hold a Trial or Enterprise license. In particular, Confluent-built proprietary connectors function for only up to 30 days with a Trial or Enterprise license and are not supported by Confluent even within this period. |

Certified Partner-built connectors

The following Connect plugins are tested and supported by the listed Confluent Partners.

Certified Partner Connectors

| Confluent Partner | Confluent Marketplace Link | Documentation and Support |
|---|---|---|
| Ably | | |
| ClickHouse | | |
| Neo4j | | Neo4j docs and support |

Supported AWS, Azure and GCP regions

You can create custom connectors in the following AWS, Azure, and GCP regions supported by Confluent Cloud.

AWS regions

| AWS Region | Location | Custom Connectors |
|---|---|---|
| us-east-1 | N. Virginia, USA | ✓ |
| us-east-2 | Ohio, USA | ✓ |
| us-west-2 | Oregon, USA | ✓ |
| ca-central-1 | Canada Central | ✓ |
| ca-west-1 | Calgary, Canada | |
| sa-east-1 | São Paulo, Brazil | ✓ |

| AWS Region | Location | Custom Connectors |
|---|---|---|
| ap-east-1 | Hong Kong | ✓ |
| ap-northeast-1 | Tokyo, Japan | ✓ |
| ap-northeast-2 | Seoul, South Korea | ✓ |
| ap-northeast-3 | Osaka, Japan | ✓ |
| ap-south-1 | Mumbai, India | ✓ |
| ap-south-2 | Hyderabad, India | ✓ |
| ap-southeast-1 | Singapore | ✓ |
| ap-southeast-2 | Sydney, Australia | ✓ |
| ap-southeast-3 | Jakarta, Indonesia | ✓ |
| ap-southeast-4 | Melbourne, Australia | ✓ |
| ap-southeast-5 | Malaysia | |
| ap-southeast-7 | Thailand | |

| AWS Region | Location | Custom Connectors |
|---|---|---|
| eu-central-1 | Frankfurt, Germany | ✓ |
| eu-central-2 | Zurich, Switzerland | ✓ |
| eu-north-1 | Stockholm, Sweden | ✓ |
| eu-south-1 | Milan, Italy | ✓ |
| eu-south-2 | Spain | ✓ |
| eu-west-1 | Ireland | ✓ |
| eu-west-2 | London, UK | ✓ |
| eu-west-3 | Paris, France | ✓ |

| AWS Region | Location | Custom Connectors |
|---|---|---|
| me-south-1 | Bahrain | ✓ |
| me-central-1 | UAE | ✓ |
| af-south-1 | Cape Town, South Africa | ✓ |
| il-central-1 | Tel Aviv, Israel | |

Azure regions

| Azure Region | Location | Custom Connectors |
|---|---|---|
| canadacentral | Toronto, Canada | ✓ |
| mexicocentral | Mexico Central | |
| brazilsouth | São Paulo state, Brazil | ✓ |
| centralus | Iowa, USA | ✓ |
| eastus | Virginia, USA | ✓ |
| eastus2 | Virginia, USA | ✓ |
| southcentralus | Texas, USA | ✓ |
| westus2 | Washington, USA | ✓ |
| westus3 | Phoenix, USA | ✓ |

| Azure Region | Location | Custom Connectors |
|---|---|---|
| australiaeast | New South Wales, Australia | ✓ |
| centralindia | Pune, India | ✓ |
| eastasia | Hong Kong | ✓ |
| japaneast | Tokyo, Japan | ✓ |
| koreacentral | Seoul, South Korea | ✓ |
| southeastasia | Singapore | ✓ |
| newzealandnorth | New Zealand North | ✓ |

| Azure Region | Location | Custom Connectors |
|---|---|---|
| francecentral | Paris, France | ✓ |
| germanywestcentral | Frankfurt, Germany | ✓ |
| northeurope | Ireland | ✓ |
| norwayeast | Oslo, Norway | ✓ |
| swedencentral | Gävle, Sweden | ✓ |
| switzerlandnorth | Zurich, Switzerland | ✓ |
| uksouth | London, UK | ✓ |
| westeurope | Netherlands | ✓ |
| italynorth | Milan, Italy | |
| spaincentral | Spain | ✓ |
| austriaeast | Vienna, Austria | |

| Azure Region | Location | Custom Connectors |
|---|---|---|
| southafricanorth | Johannesburg, South Africa | ✓ |
| uaenorth | Dubai, UAE | ✓ |
| qatarcentral | Doha, Qatar | |

GCP regions

| GCP Region | Location | Custom Connectors |
|---|---|---|
| northamerica-northeast1 | Montreal, Canada | |
| northamerica-northeast2 | Toronto, Canada | ✓ |
| southamerica-east1 | São Paulo, Brazil | ✓ |
| southamerica-west1 | Santiago, Chile | |
| us-central1 | Iowa, USA | ✓ |
| us-east1 | South Carolina, USA | ✓ |
| us-east4 | N. Virginia, USA | ✓ |
| us-west1 | Oregon, USA | ✓ |
| us-west2 | Los Angeles, USA | ✓ |
| us-west3 | Salt Lake City, USA | |
| us-west4 | Las Vegas, USA | ✓ |
| us-south1 | Dallas, USA | |

| GCP Region | Location | Custom Connectors |
|---|---|---|
| asia-east1 | Taiwan | |
| asia-east2 | Hong Kong | |
| asia-northeast1 | Tokyo, Japan | ✓ |
| asia-northeast2 | Osaka, Japan | ✓ |
| asia-northeast3 | Seoul, South Korea | |
| asia-south1 | Mumbai, India | ✓ |
| asia-south2 | Delhi, India | ✓ |
| asia-southeast1 | Singapore | ✓ |
| asia-southeast2 | Jakarta, Indonesia | |
| australia-southeast1 | Sydney, Australia | ✓ |
| australia-southeast2 | Melbourne, Australia | ✓ |

| GCP Region | Location | Custom Connectors |
|---|---|---|
| europe-central2 | Warsaw, Poland | |
| europe-north1 | Finland | ✓ |
| europe-southwest1 | Madrid, Spain | |
| europe-west1 | Belgium | ✓ |
| europe-west2 | London, UK | ✓ |
| europe-west3 | Frankfurt, Germany | ✓ |
| europe-west4 | Netherlands | ✓ |
| europe-west6 | Zurich, Switzerland | |
| europe-west8 | Milan, Italy | ✓ |
| europe-west9 | Paris, France | |
| europe-west12 | Turin, Italy | |

| GCP Region | Location | Custom Connectors |
|---|---|---|
| me-west1 | Tel Aviv, Israel | |
| me-central1 | Doha, Qatar | |
| me-central2 | Dammam, Saudi Arabia | |

Schema Registry integration

Schema Registry must be enabled for the environment before a custom connector can use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf). You can enable Schema Registry as a fully managed service in Confluent Cloud or run it as a self-managed service.

Managed Schema Registry

When Schema Registry is enabled as a fully managed service for your Confluent Cloud environment, you can use the UI to automatically add the Schema Registry configuration properties to your custom connector. The following example shows the required properties added to the custom connector configuration when both the key and the value use the Avro data format:

```json
{
  "confluent.custom.schema.registry.auto": "true",
  "key.converter": "io.confluent.connect.avro.AvroConverter",
  "value.converter": "io.confluent.connect.avro.AvroConverter"
}
```
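If you generate connector configurations in code, the same three properties can be assembled for any Schema Registry-based format. This Python sketch is hypothetical (managed_sr_config is an illustrative name). The Avro and Protobuf converter class names come from the examples on this page; io.confluent.connect.json.JsonSchemaConverter is Confluent's JSON Schema converter class, included here as an assumption you should confirm against the converter you intend to use.

```python
import json

# Converter classes for Schema Registry-based formats.
CONVERTERS = {
    "AVRO": "io.confluent.connect.avro.AvroConverter",
    "JSON_SR": "io.confluent.connect.json.JsonSchemaConverter",  # assumption, verify
    "PROTOBUF": "io.confluent.connect.protobuf.ProtobufConverter",
}


def managed_sr_config(key_format: str, value_format: str) -> dict[str, str]:
    """Build converter properties for a fully managed Schema Registry.

    Raises KeyError for formats that are not Schema Registry-based.
    """
    return {
        # Property shown in the managed Schema Registry example on this page.
        "confluent.custom.schema.registry.auto": "true",
        "key.converter": CONVERTERS[key_format],
        "value.converter": CONVERTERS[value_format],
    }


if __name__ == "__main__":
    # Reproduces the Avro example shown for managed Schema Registry.
    print(json.dumps(managed_sr_config("AVRO", "AVRO"), indent=2))
```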

Self-Managed Schema Registry

If you plan to use a self-managed Schema Registry, the Schema Registry instance must be accessible over the public internet. You must also add all Schema Registry configuration entries, including credentials, to the connector configuration. For example:

```json
{
  "key.converter": "io.confluent.connect.protobuf.ProtobufConverter",
  "key.converter.basic.auth.credentials.source": "USER_INFO",
  "key.converter.basic.auth.user.info": "<username>:<password>",
  "key.converter.schema.registry.url": "https://defgh-ijk.us-west-2.aws.devel.cc.cloud",
  "value.converter": "io.confluent.connect.protobuf.ProtobufConverter",
  "value.converter.basic.auth.credentials.source": "USER_INFO",
  "value.converter.basic.auth.user.info": "<username>:<password>",
  "value.converter.schema.registry.url": "https://defgh-ijk.us-west-2.aws.devel.cc.cloud"
}
```
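Because every converter-prefixed entry has to be repeated for both the key and the value, a small generator helps keep the two sides consistent. The Python sketch below is hypothetical (self_managed_sr_config is an illustrative name); it emits the same property names as the self-managed example, and in practice the credentials should come from a secure source rather than being hard-coded.

```python
import json

PROTOBUF_CONVERTER = "io.confluent.connect.protobuf.ProtobufConverter"


def self_managed_sr_config(url: str, username: str, password: str,
                           converter: str = PROTOBUF_CONVERTER) -> dict[str, str]:
    """Build key and value converter settings for a self-managed Schema Registry."""
    config: dict[str, str] = {}
    for side in ("key", "value"):
        prefix = f"{side}.converter"
        config[prefix] = converter
        # Basic authentication, with credentials supplied inline as user:password.
        config[f"{prefix}.basic.auth.credentials.source"] = "USER_INFO"
        config[f"{prefix}.basic.auth.user.info"] = f"{username}:{password}"
        # The Schema Registry instance must be reachable over the public internet.
        config[f"{prefix}.schema.registry.url"] = url
    return config


if __name__ == "__main__":
    cfg = self_managed_sr_config(
        "https://defgh-ijk.us-west-2.aws.devel.cc.cloud", "<username>", "<password>"
    )
    print(json.dumps(cfg, indent=2))
```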

App log topic

Note the following usage details for the app log topic:

Customers are responsible for all charges related to using the app log topic with a custom connector. For all other billing details, see Manage Billing in Confluent Cloud.

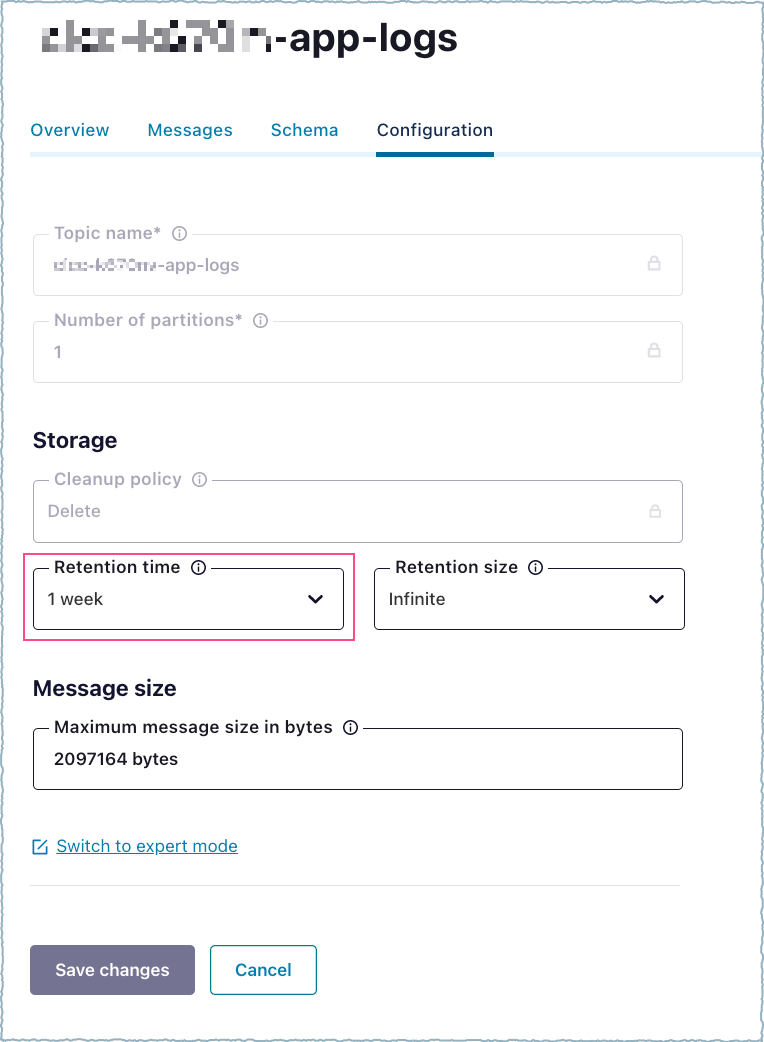

Kafka topics have a default retention period of seven days, so you must consume logs from the app log topic within seven days. If you need to keep logs longer, increase the topic's retention period: in the UI, open the appropriate log topic and change the Retention time.
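The Retention time setting corresponds to the Kafka topic property retention.ms, which is expressed in milliseconds. The short Python sketch below converts a number of days into that value; retention_ms is a hypothetical helper, and the dictionary it prints only illustrates the property you would set, not a specific Confluent API call.

```python
MS_PER_DAY = 24 * 60 * 60 * 1000  # 86,400,000 milliseconds


def retention_ms(days: int) -> int:
    """Convert a retention period in whole days to a retention.ms value."""
    if days <= 0:
        raise ValueError("retention period must be a positive number of days")
    return days * MS_PER_DAY


if __name__ == "__main__":
    print(retention_ms(7))  # 604800000, the seven-day default
    # Topic configuration values are usually passed as strings, e.g. 30 days:
    print({"retention.ms": str(retention_ms(30))})
```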

Log retention period