Microsoft SQL Server CDC Source (Debezium) Connector [End of Life] for Confluent Cloud

Important

This connector reached its end of life (EOL) on March 31, 2026. Confluent recommends migrating to the Microsoft SQL Server CDC Source V2 (Debezium) connector. For more information, see Deprecated and end of life connectors.

The fully-managed Microsoft SQL Server Change Data Capture (CDC) Source (Debezium) [Deprecated] connector for Confluent Cloud can obtain a snapshot of the existing data in a SQL Server database and then monitor and record all subsequent row-level changes to that data. The connector supports Avro, JSON Schema, Protobuf, or JSON (schemaless) output data formats. All of the events for each table are recorded in a separate Apache Kafka® topic. The events can then be easily consumed by applications and services.

Note

This Quick Start is for the fully-managed Confluent Cloud connector. If you are installing the connector locally for Confluent Platform, see Debezium SQL Server CDC Source Connector for Confluent Platform.

If you require private networking for fully-managed connectors, make sure to set up the proper networking beforehand. For more information, see Manage Networking for Confluent Cloud Connectors.

Features

The SQL Server CDC Source (Debezium) [Deprecated] connector provides the following features:

Topics created automatically: The connector automatically creates Kafka topics using the naming convention <database.server.name>.<schemaName>.<tableName>. The topics are created with the properties topic.creation.default.partitions=1 and topic.creation.default.replication.factor=3. For topic sizing information, see Maximum message size.

Tables included and Tables excluded: Allows you to set whether a table is or is not monitored for changes. By default, the connector monitors every non-system table.

Tasks per connector: Organizations can run multiple connectors with a limit of one task per connector (that is, "tasks.max": "1").

Snapshot mode: Allows you to specify the criteria for running a snapshot.

Tombstones on delete: Allows you to configure whether a tombstone event should be generated after a delete event. Default is true.

Database authentication: Uses password authentication.

Output formats: The connector supports Avro, JSON Schema, Protobuf, or JSON (schemaless) output Kafka record value format. It supports Avro, JSON Schema, Protobuf, JSON (schemaless), and String output Kafka record key format. Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf). For Schema Registry environment limits, see Schema Registry Enabled Environments.

Incremental snapshot: Supports incremental snapshotting via signaling.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.
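The topic naming convention from the features list can be sketched in a few lines. This is only an illustration: the function name and the sample values ("sql", "dbo", "passengers") are hypothetical and not part of the connector.

```python
def topic_name(server_name: str, schema_name: str, table_name: str) -> str:
    """Build a topic name as <database.server.name>.<schemaName>.<tableName>."""
    return f"{server_name}.{schema_name}.{table_name}"


# Hypothetical values: logical server name "sql", schema "dbo", table "passengers".
print(topic_name("sql", "dbo", "passengers"))  # prints sql.dbo.passengers
```

Each captured table gets its own topic, so a consumer can derive the topic to subscribe to from the connector's database.server.name setting and the table identifier.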

Limitations

Be sure to review the following information.

For connector limitations, see Microsoft SQL Server CDC Source Connector (Debezium) [Legacy] limitations.

If you plan to use one or more Single Message Transforms (SMTs), see SMT Limitations.

If you plan to use one or more Custom SMTs, see Custom SMT limitations.

If you plan to use Confluent Cloud Schema Registry, see Schema Registry Enabled Environments.

Maximum message size

This connector creates topics automatically. When it creates topics, the internal connector configuration property max.message.bytes is set to the following:

Basic cluster: 8 MB

Standard cluster: 8 MB

Enterprise cluster: 8 MB

Dedicated cluster: 20 MB

For more information about Confluent Cloud clusters, see Kafka Cluster Types in Confluent Cloud.
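As a rough pre-flight check, a producer-side test could compare a serialized record size against the per-cluster limits listed above. The sketch below assumes that 1 MB means 1024 * 1024 bytes, which the source does not state, and the helper name is hypothetical.

```python
# Limits from the list above. Assumption: 1 MB = 1024 * 1024 bytes.
MB = 1024 * 1024
MAX_MESSAGE_BYTES = {
    "basic": 8 * MB,
    "standard": 8 * MB,
    "enterprise": 8 * MB,
    "dedicated": 20 * MB,
}


def fits_in_topic(serialized_size: int, cluster_type: str) -> bool:
    """Return True if a record of the given size is within the cluster's limit."""
    return serialized_size <= MAX_MESSAGE_BYTES[cluster_type.lower()]


# A 10 MB record fits only on a Dedicated cluster.
print(fits_in_topic(10 * MB, "Dedicated"))
print(fits_in_topic(10 * MB, "Basic"))
```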

Log retention during snapshot

When launched, the CDC connector creates a snapshot of the existing data in the database to capture the nominated tables. To do this, the connector executes a “SELECT *” statement. Completing the snapshot can take a while if one or more of the nominated tables is very large.

During the snapshot process, the database server must retain its captured change data so that when the snapshot is complete, the CDC connector can start processing database changes that have completed since the snapshot process began. In SQL Server, this change data is held in CDC change tables and is removed by the CDC cleanup job once it is older than the configured retention period.

If one or more of the tables are very large, the snapshot process could run longer than the CDC retention period set on the database server. To capture very large tables, you should temporarily increase the retention period of the CDC cleanup job so that change data is kept for longer than normal.

Quick Start

Use this quick start to get up and running with the Confluent Cloud Microsoft SQL Server CDC Source (Debezium) [Legacy] connector. The quick start provides the basics of selecting the connector and configuring it to obtain a snapshot of the existing data in a Microsoft SQL Server database and then monitoring and recording all subsequent row-level changes.

Prerequisites

Authorized access to a Confluent Cloud cluster on Amazon Web Services (AWS), Microsoft Azure (Azure), or Google Cloud.

The Confluent CLI installed and configured for the cluster. See Install the Confluent CLI.

Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf). For Schema Registry environment limits, see Schema Registry Enabled Environments.

SQL Server configured for change data capture (CDC).

For Debezium instructions, see Setting up SQL Server.

For Amazon RDS instructions, see Using change data capture.

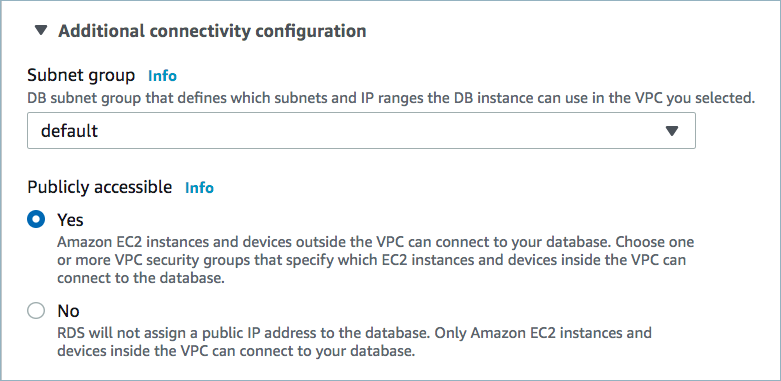

Public access may be required for your database. For database connectivity requirements, see Manage Networking for Confluent Cloud Connectors. The example below shows the AWS Management Console when setting up a Microsoft SQL Server database.

Public access enabled

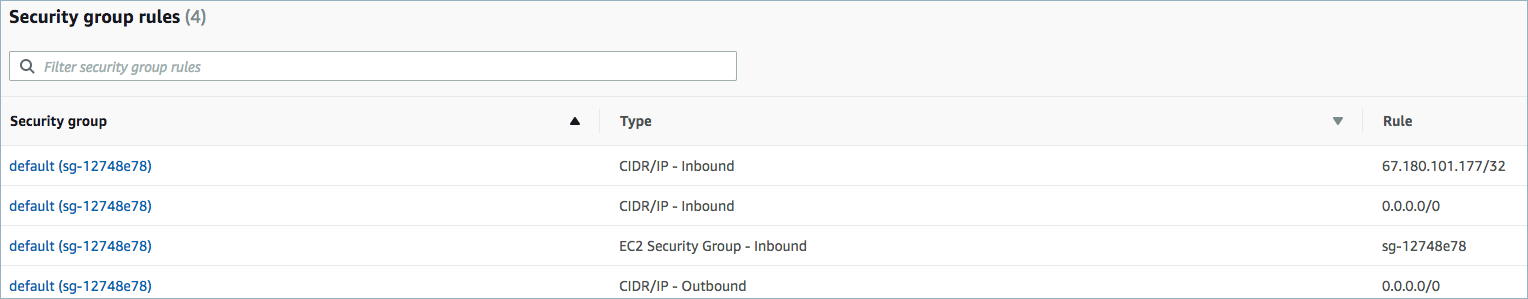

For networking considerations, see Networking and DNS. To use a set of public egress IP addresses, see Public Egress IP Addresses for Confluent Cloud Connectors. The following example shows the AWS Management Console when setting up security group rules for the VPC.

Open inbound traffic

Note

See your specific cloud platform documentation for how to configure security rules for your VPC.

Kafka cluster credentials. The following lists the different ways you can provide credentials.

Enter an existing service account resource ID.

Create a Confluent Cloud service account for the connector. Make sure to review the ACL entries required in the service account documentation. Some connectors have specific ACL requirements.

Create a Confluent Cloud API key and secret. To create a key and secret, you can use confluent api-key create or you can autogenerate the API key and secret directly in the Cloud Console when setting up the connector.

Using the Confluent Cloud Console

Step 1: Launch your Confluent Cloud cluster

To create and launch a Kafka cluster in Confluent Cloud, see Create a Kafka cluster in Confluent Cloud.

Step 2: Add a connector

In the left navigation menu, click Connectors. If you already have connectors in your cluster, click + Add connector.

Step 3: Select your connector

Click the Microsoft SQL Server CDC Source (Debezium) [Legacy] connector card.

Step 4: Enter the connector details

Note

Make sure you have all your prerequisites completed.

An asterisk ( * ) designates a required entry.

At the Microsoft SQL Server CDC Source (Debezium) [Legacy] screen, complete the following:

Select the way you want to provide Kafka Cluster credentials. You can choose one of the following options:

My account: This setting allows your connector to globally access everything that you have access to. With a user account, the connector uses an API key and secret to access the Kafka cluster. This option is not recommended for production.

Service account: This setting limits the access for your connector by using a service account. This option is recommended for production.

Use an existing API key: This setting allows you to specify an API key and a secret pair. You can use an existing pair or create a new one. This method is not recommended for production environments.

Note

Freight clusters support only service accounts for Kafka authentication.

Click Continue.

Configure the authentication properties:

Database hostname: The address of the Microsoft SQL Server.

Database port: The port number of the Microsoft SQL Server.

Database username: The name of the Microsoft SQL Server user that has the required authorization.

Database password: The password of the Microsoft SQL Server user that has the required authorization.

Database name: The name of the Microsoft SQL Server database to connect to.

Database server name: The logical name of the Microsoft SQL Server cluster.

Database Instance: The name of the Microsoft SQL Server instance.

Database application intent: The keyword ApplicationIntent in your connection string. The assignable values are ReadWrite (the default) or ReadOnly.

Click Continue.

Output Kafka record value format: Sets the output Kafka record value format. Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. Note that you need to have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, and PROTOBUF.

Output Kafka record key format: Set the output Kafka record key format. Valid values are AVRO, JSON, JSON_SR (JSON Schema), PROTOBUF, or STRING. A valid schema must be available in Confluent Cloud Schema Registry to use a schema-based record format (for example, Avro, JSON_SR, or Protobuf).

Skip unparseable DDL: Set this to true to have the connector ignore malformed or unknown database statements. Set to false to stop processing so these issues can be corrected. Defaults to false. Consider setting this to true to ignore unparseable statements.

Show advanced configurations

Schema context: Select a schema context to use for this connector, if using a schema-based data format. This property defaults to the Default context, which configures the connector to use the default schema set up for Schema Registry in your Confluent Cloud environment. A schema context allows you to use separate schemas (like schema sub-registries) tied to topics in different Kafka clusters that share the same Schema Registry environment. For example, if you select a non-default context, a Source connector uses only that schema context to register a schema and a Sink connector uses only that schema context to read from. For more information about setting up a schema context, see What are schema contexts and when should you use them?.

Snapshot mode: Specifies the criteria for performing a database snapshot when the connector starts.

Snapshot Isolation mode: A mode to control which transaction isolation level is used and how long the connector locks tables that are designated for capture. The snapshot, read_committed and read_uncommitted modes do not prevent other transactions from updating table rows during initial snapshot. The exclusive and repeatable_read modes do prevent concurrent updates. Mode choice also affects data consistency. Only exclusive and snapshot modes guarantee full consistency, that is, initial snapshot and streaming logs constitute a linear history. In case of repeatable_read and read_committed modes, it might happen that, for instance, a record added appears twice: once in initial snapshot and once in streaming phase. Nonetheless, that consistency level should do for data mirroring. For read_uncommitted there are no data consistency guarantees at all (some data might be lost or corrupted).

Signal data collection: Fully-qualified name of the data collection that is used to send signals to the connector. The collection name is of the form databaseName.schemaName.tableName. These signals can be used to perform incremental snapshotting.

Store only captured tables DDL: A Boolean value that specifies whether the connector records schema structures from all tables in a schema or database, or only from tables that are designated for capture. Defaults to false.

false: During a database snapshot, the connector records the schema data for all non-system tables in the database, including tables that are not designated for capture. It’s best to retain the default setting. If you later decide to capture changes from tables that you did not originally designate for capture, the connector can easily begin to capture data from those tables, because their schema structure is already stored in the schema history topic.

true: During a database snapshot, the connector records the table schemas only for the tables from which Debezium captures change events. If you change the default value, and you later configure the connector to capture data from other tables in the database, the connector lacks the schema information that it requires to capture change events from the tables.

Additional Configs

value.converter.replace.null.with.default: Whether to replace fields that are null and have a default value with that default value. When set to true, the default value is used, otherwise null is used. Applicable for JSON Converter.

Value Converter Reference Subject Name Strategy: Set the subject reference name strategy for value. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. Note that the subject reference name strategy can be selected only for PROTOBUF format with the default strategy being DefaultReferenceSubjectNameStrategy.

value.converter.schemas.enable: Include schemas within each of the serialized values. Input messages must contain schema and payload fields and may not contain additional fields. For plain JSON data, set this to false. Applicable for JSON Converter.

errors.tolerance: Use this property if you would like to configure the connector’s error handling behavior. WARNING: This property should be used with CAUTION for SOURCE CONNECTORS as it may lead to data loss. If you set this property to ‘all’, the connector will not fail on errant records, but will instead log them (and send to DLQ for Sink Connectors) and continue processing. If you set this property to ‘none’, the connector task will fail on errant records.

value.converter.ignore.default.for.nullables: When set to true, this property ensures that the corresponding record in Kafka is NULL, instead of showing the default column value. Applicable for AVRO, PROTOBUF, and JSON_SR Converters.

Value Converter Decimal Format: Specify the JSON/JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals: BASE64 to serialize DECIMAL logical types as base64 encoded binary data and NUMERIC to serialize Connect DECIMAL logical type values in JSON/JSON_SR as a number representing the decimal value.

Key Converter Schema ID Serializer: The class name of the schema ID serializer for keys. This is used to serialize schema IDs in the message headers.

Value Converter Connect Meta Data: Allow the Connect converter to add its metadata to the output schema. Applicable for Avro Converters.

Value Converter Value Subject Name Strategy: Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Key Converter Key Subject Name Strategy: How to construct the subject name for key schema registration.

Value Converter Schema ID Serializer: The class name of the schema ID serializer for values. This is used to serialize schema IDs in the message headers.
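For the Value Converter Decimal Format setting above, a consumer that receives BASE64 values has to decode them itself. The sketch below assumes the usual Kafka Connect Decimal encoding (the unscaled integer as big-endian two's-complement bytes, with the scale carried in the field's schema). The function name is hypothetical, and the scale is passed in by the caller rather than read from a schema.

```python
import base64
from decimal import Decimal


def decode_connect_decimal(encoded: str, scale: int) -> Decimal:
    """Decode a base64-encoded Connect DECIMAL value using the given scale."""
    raw = base64.b64decode(encoded)
    # The bytes hold the unscaled value as a signed, big-endian integer.
    unscaled = int.from_bytes(raw, byteorder="big", signed=True)
    return Decimal(unscaled).scaleb(-scale)


# "MDk=" is base64 for the bytes 0x30 0x39, that is, the unscaled value 12345.
print(decode_connect_decimal("MDk=", 2))  # prints 123.45
```

With NUMERIC, the value arrives as a plain JSON number and no decoding step is needed, at the cost of relying on the consumer's JSON parser for numeric precision.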

Auto-restart policy

Enable Connector Auto-restart: Control the auto-restart behavior of the connector and its task in the event of user-actionable errors. Defaults to true, enabling the connector to automatically restart in case of user-actionable errors. Set this property to false to disable auto-restart for failed connectors. In such cases, you would need to manually restart the connector.

Database details

Tables included: Enter a comma-separated list of fully-qualified table identifiers for the connector to monitor. By default, the connector monitors all non-system tables. A fully-qualified table name is in the form schemaName.tableName. This property cannot be used with the property Tables excluded.

Tables excluded: Enter a comma-separated list of fully-qualified table identifiers for the connector to ignore. A fully-qualified table name is in the form schemaName.tableName. This property cannot be used with the property Tables included.

Tombstones on delete: Configure whether a tombstone event should be generated after a delete event. The default is true.

Columns Excluded: An optional, comma-separated list of regular expressions that match the fully-qualified names of columns to exclude from change event record values. Fully-qualified names for columns are in the form databaseName.tableName.columnName.

Propagate Source Types by Data Type: Enter a comma-separated list of regular expressions matching database-specific data types. This property adds the data type’s original type and original length (as parameters) to the corresponding field schemas in the emitted change records.

Connection details

Poll interval (ms): Set the time in milliseconds to wait for new change events when no data is returned. Default is 500 ms.

Max batch size: Enter the maximum number of events the connector batches during each iteration. Defaults to 1000 events.

Event processing failure handling mode: Specify how the connector reacts to exceptions when processing change events. Defaults to fail. Select skip or warn to skip the event or issue a warning, respectively.

Heartbeat interval (ms): Set the interval time in milliseconds (ms) between heartbeat messages that the connector sends to a Kafka topic. Defaults to 0, which means the connector does not send heartbeat messages.

Output messages

After-state only: Defaults to true, which results in the Kafka record having only the record state from change events applied. Select false to maintain the prior record states after applying the change events.
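To illustrate what the After-state only setting changes, the sketch below reduces a full change event to its after state. The field names (before, after, op) follow the upstream Debezium event envelope and should be treated as an assumption for this managed connector. The sample record is made up.

```python
from typing import Any, Optional


def after_state(envelope: dict[str, Any]) -> Optional[dict[str, Any]]:
    """Return only the row state after the change, dropping the other fields."""
    return envelope.get("after")


# Hypothetical update event as it might look with After-state only set to false.
envelope = {
    "before": {"id": 1, "name": "Ann"},
    "after": {"id": 1, "name": "Anne"},
    "op": "u",
}

print(after_state(envelope))  # prints {'id': 1, 'name': 'Anne'}
```

Keeping the full envelope is useful when consumers need the previous row state, for example to compute a diff. The after state alone is enough for simple mirroring.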

Connector details

Provide transaction metadata: Select whether transaction metadata is enabled. Transaction metadata is stored in a dedicated Kafka topic. Defaults to false.

Decimal handling mode: Specify how DECIMAL and NUMERIC columns are represented in change events. precise (the default) uses java.math.BigDecimal to represent values. The values are encoded in change events using a binary representation and the Connect org.apache.kafka.connect.data.Decimal data type. Select string to use string type to represent values. double represents values using Java’s double data type. double can lose precision, but is much easier to use in consumers.

Binary handling mode: Specify how binary (blob, binary) columns are represented in change events. Select bytes (the default) to represent binary data in byte array format. Select base64 to represent binary data in base64-encoded string format. Select hex to represent binary data in hex-encoded (base16) string format.

Time precision mode: Time, date, and timestamps can be represented with different kinds of precision. Select adaptive (the default) to base the precision for time, date, and timestamp values on the database column’s precision. adaptive_time_microseconds is essentially the same as adaptive mode, with the exception that TIME fields always use microseconds precision. connect always represents time, date, and timestamp values using Connect’s built-in representations for Time, Date, and Timestamp. connect uses millisecond precision regardless of what precision is used for the database columns. For more information, see Temporal types.

Topic cleanup policy: Set the topic retention cleanup policy. Select delete (the default) to discard old records. Select compact to enable log compaction on the topic.

Transforms

Single Message Transforms: To add a new SMT, see Add transforms. For more information about unsupported SMTs, see Unsupported transformations.

For additional information about the Debezium SMTs ExtractNewRecordState and EventRouter (Debezium), see Debezium transformations.

For all property values and definitions, see Configuration Properties.

Click Continue.

Based on the number of topic partitions you select, you will be provided with a recommended number of tasks.

To change the number of tasks, use the Range Slider to select the desired number of tasks.

Click Continue.

Verify the connection details by previewing the running configuration.

After you’ve validated that the properties are configured to your satisfaction, click Launch.

The status for the connector should go from Provisioning to Running.

Step 5: Check the Kafka topic

After the connector is running, verify that messages are populating your Kafka topic.

Note

A topic named dbhistory.<database.server.name>.<connect-id> is automatically created for database.history.kafka.topic with one partition.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Using the Confluent CLI

Complete the following steps to set up and run the connector using the Confluent CLI.

Note

Make sure you have all your prerequisites completed.

Step 1: List the available connectors

Enter the following command to list available connectors:

confluent connect plugin list

Step 2: List the connector configuration properties

Enter the following command to show the connector configuration properties:

confluent connect plugin describe <connector-plugin-name>

The command output shows the required and optional configuration properties.

Step 3: Create the connector configuration file

Create a JSON file that contains the connector configuration properties. The following example shows the required connector properties.

{
  "connector.class": "SqlServerCdcSource",
  "name": "SqlServerCdcSourceConnector_0",
  "kafka.auth.mode": "KAFKA_API_KEY",
  "kafka.api.key": "****************",
  "kafka.api.secret": "****************************************************************",
  "database.hostname": "connect-sqlserver-cdc.<host-id>.us-west-2.rds.amazonaws.com",
  "database.port": "1433",
  "database.user": "admin",
  "database.password": "************",
  "database.dbname": "database-name",
  "database.server.name": "sql",
  "table.include.list": "public.passengers",
  "snapshot.mode": "initial",
  "output.data.format": "JSON",
  "tasks.max": "1"
}

Note the following property definitions:

"connector.class": Identifies the connector plugin name.

"name": Sets a name for your new connector.

"kafka.auth.mode": Identifies the connector authentication mode you want to use. There are two options: SERVICE_ACCOUNT or KAFKA_API_KEY (the default). To use an API key and secret, specify the configuration properties kafka.api.key and kafka.api.secret, as shown in the example configuration (above). To use a service account, specify the Resource ID in the property kafka.service.account.id=<service-account-resource-ID>. To list the available service account resource IDs, use the following command:

confluent iam service-account list

For example:

confluent iam service-account list

   Id     | Resource ID |       Name        |    Description
+---------+-------------+-------------------+-------------------
   123456 | sa-l1r23m   | sa-1              | Service account 1
   789101 | sa-l4d56p   | sa-2              | Service account 2

"table.include.list": (Optional) Enter a comma-separated list of fully-qualified table identifiers for the connector to monitor. By default, the connector monitors all non-system tables. A fully-qualified table name is in the form schemaName.tableName.

"snapshot.mode": Specifies the criteria for performing a database snapshot when the connector starts.

The default setting is initial. When selected, the connector takes a snapshot of the structure and data from captured tables. This is useful if you want the topics populated with a complete representation of captured table data when the connector starts.

Setting this to schema_only takes a snapshot of the structure of the captured tables only. This is useful if you want to capture only the changes to data that happen after the connector starts.

"output.data.format": Sets the output Kafka record value format (data coming from the connector). Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. You must have Confluent Cloud Schema Registry configured if using a schema-based record format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

"after.state.only": (Optional) Defaults to true, which results in the Kafka record having only the record state from change events applied. Enter false to maintain the prior record states after applying the change events.

"json.output.decimal.format": (Optional) Defaults to BASE64. Specify the JSON/JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals:

BASE64 to serialize DECIMAL logical types as base64 encoded binary data.

NUMERIC to serialize Connect DECIMAL logical type values in JSON or JSON_SR as a number representing the decimal value.

"column.exclude.list": (Optional) A comma-separated list of regular expressions that match the fully-qualified names of columns to exclude from change event record values. Fully-qualified names for columns are in the form databaseName.tableName.columnName.

"signal.data.collection": (Optional) Fully-qualified name of the data collection that is used to send signals to the connector. The collection name is of the form databaseName.schemaName.tableName. These signals can be used to perform incremental snapshotting.

"tasks.max": Enter the number of tasks in use by the connector. Organizations can run multiple connectors with a limit of one task per connector (that is, "tasks.max": "1").

Single Message Transforms: See the Single Message Transforms (SMT) documentation for details about adding SMTs using the CLI. For additional information about the Debezium SMTs ExtractNewRecordState and EventRouter (Debezium), see Debezium transformations.

See Configuration Properties for all property values and definitions.
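If you generate the configuration file from a script, the rules from this step can be checked before the file is written. The following is a minimal sketch, not an official tool: the function name and all sample values are placeholders, and it covers only the properties shown in the example configuration plus the include/exclude and name-length rules documented for this connector.

```python
import json


def build_connector_config(
    name: str,
    api_key: str,
    api_secret: str,
    hostname: str,
    user: str,
    password: str,
    dbname: str,
    server_name: str,
    include_tables: tuple[str, ...] = (),
    exclude_tables: tuple[str, ...] = (),
) -> dict[str, str]:
    """Assemble the connector properties from the example configuration."""
    if len(name) > 64:
        raise ValueError("connector name must be at most 64 characters")
    if include_tables and exclude_tables:
        raise ValueError("table.include.list and table.exclude.list cannot be combined")

    config = {
        "connector.class": "SqlServerCdcSource",
        "name": name,
        "kafka.auth.mode": "KAFKA_API_KEY",
        "kafka.api.key": api_key,
        "kafka.api.secret": api_secret,
        "database.hostname": hostname,
        "database.port": "1433",
        "database.user": user,
        "database.password": password,
        "database.dbname": dbname,
        "database.server.name": server_name,
        "snapshot.mode": "initial",
        "output.data.format": "JSON",
        "tasks.max": "1",  # the connector is limited to one task
    }
    if include_tables:
        config["table.include.list"] = ",".join(include_tables)
    if exclude_tables:
        config["table.exclude.list"] = ",".join(exclude_tables)
    return config


# Placeholder values only; substitute real credentials and host names.
example = build_connector_config(
    name="SqlServerCdcSourceConnector_0",
    api_key="<api-key>",
    api_secret="<api-secret>",
    hostname="<database-host>",
    user="admin",
    password="<password>",
    dbname="database-name",
    server_name="sql",
    include_tables=("dbo.passengers",),
)
print(json.dumps(example, indent=2))
```

Redirecting the printed JSON to a file gives a configuration file in the same shape as the example in this step.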

Step 4: Load the properties file and create the connector

Enter the following command to load the configuration and start the connector:

confluent connect cluster create --config-file <file-name>.json

For example:

confluent connect cluster create --config-file microsoft-sql-cdc-source.json

Example output:

Created connector SqlServerCdcSourceConnector_0 lcc-ix4dl

Step 5: Check the connector status

Enter the following command to check the connector status:

confluent connect cluster list

Example output:

    ID     |             Name              | Status  | Type
+-----------+-------------------------------+---------+--------+
  lcc-ix4dl | SqlServerCdcSourceConnector_0 | RUNNING | source

Step 6: Check the Kafka topic

After the connector is running, verify that messages are populating your Kafka topic.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Note

A topic named dbhistory.<database.server.name>.<connect-id> is automatically created for database.history.kafka.topic with one partition.

Configuration Properties

Use the following configuration properties with the fully-managed connector. For self-managed connector property definitions and other details, see the connector docs in Self-managed connectors for Confluent Platform.

How should we connect to your data?

name

Sets a name for your connector.

Type: string

Valid Values: A string at most 64 characters long

Importance: high

Kafka Cluster credentials

kafka.auth.mode

Kafka Authentication mode. It can be one of KAFKA_API_KEY or SERVICE_ACCOUNT. It defaults to KAFKA_API_KEY mode, whenever possible.

Type: string

Valid Values: SERVICE_ACCOUNT, KAFKA_API_KEY

Importance: high

kafka.api.key

Kafka API Key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high

kafka.service.account.id

The Service Account that will be used to generate the API keys to communicate with the Kafka cluster.

Type: string

Importance: high

kafka.api.secret

Secret associated with Kafka API key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high

Schema Config

schema.context.name

Add a schema context name. A schema context represents an independent scope in Schema Registry. It is a separate sub-schema tied to topics in different Kafka clusters that share the same Schema Registry instance. If not used, the connector uses the default schema configured for Schema Registry in your Confluent Cloud environment.

Type: string

Default: default

Importance: medium

How should we connect to your database?

database.hostname

The address of the Microsoft SQL Server.

Type: string

Importance: high

database.port

Port number of the Microsoft SQL Server.

Type: int

Valid Values: [0,…,65535]

Importance: high

database.user

The name of the Microsoft SQL Server user that has the required authorization.

Type: string

Importance: high

database.password

The password for the Microsoft SQL Server user that has the required authorization.

Type: password

Importance: high

database.dbname

The name of the Microsoft SQL Server database to connect to.

Type: string

Importance: high

database.server.name

The logical name of the Microsoft SQL Server cluster. This logical name forms a namespace and is used in all the names of the Kafka topics and the Kafka Connect schema names. The logical name is also used for the namespaces of the corresponding Avro schema, if Avro data format is used. Kafka topics are created with the value of database.server.name as their prefix. Only alphanumeric characters, underscores, hyphens and dots are allowed.

Type: string

Importance: high

database.instance

The name of the Microsoft SQL Server instance.

Type: string

Importance: medium

database.applicationIntent

You can specify the keyword ApplicationIntent in your connection string. The assignable values are ReadWrite (the default) or ReadOnly.

Type: string

Default: ReadWrite

Valid Values: ReadOnly, ReadWrite

Importance: medium

Database details

signal.data.collection

Fully-qualified name of the data collection that needs to be used to send signals to the connector. Use the following format to specify the fully-qualified collection name: databaseName.schemaName.tableName

Type: string

Importance: medium
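To show what a signal for an incremental snapshot might contain, the sketch below builds one signal row. The row layout (id, type, data columns, the execute-snapshot type, and the data-collections payload) follows the upstream Debezium signaling documentation and is an assumption for this managed connector. The table names are hypothetical.

```python
import json
import uuid
from typing import Optional, Sequence


def incremental_snapshot_signal(
    tables: Sequence[str], signal_id: Optional[str] = None
) -> dict[str, str]:
    """Build the column values for one row of the signaling table."""
    payload = {"data-collections": list(tables), "type": "incremental"}
    return {
        "id": signal_id or str(uuid.uuid4()),  # any unique string
        "type": "execute-snapshot",
        "data": json.dumps(payload),
    }


# Hypothetical table in the form databaseName.schemaName.tableName.
signal = incremental_snapshot_signal(["testDB.dbo.passengers"], signal_id="adhoc-1")
print(signal)
```

Inserting a row with these values into the table named by signal.data.collection is what asks the connector to start the snapshot. That table must also be captured by CDC so that the connector sees the insert.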

snapshot.isolation.mode

A mode to control which transaction isolation level is used and how long the connector locks tables that are designated for capture. The snapshot, read_committed and read_uncommitted modes do not prevent other transactions from updating table rows during initial snapshot. The exclusive and repeatable_read modes do prevent concurrent updates. Mode choice also affects data consistency. Only exclusive and snapshot modes guarantee full consistency, that is, initial snapshot and streaming logs constitute a linear history. In case of repeatable_read and read_committed modes, it might happen that, for instance, a record added appears twice: once in initial snapshot and once in streaming phase. Nonetheless, that consistency level should do for data mirroring. For read_uncommitted there are no data consistency guarantees at all (some data might be lost or corrupted).

Type: string

Default: repeatable_read

Valid Values: exclusive, read_committed, read_uncommitted, repeatable_read, snapshot

Importance: low
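The trade-offs described for snapshot.isolation.mode can be summarized as a small lookup table. This sketch only restates the description above in code form. The type and helper names are hypothetical.

```python
from typing import NamedTuple


class IsolationMode(NamedTuple):
    blocks_concurrent_updates: bool  # other transactions cannot update rows
    full_consistency: bool           # snapshot + streaming form a linear history


ISOLATION_MODES = {
    "exclusive": IsolationMode(True, True),
    "repeatable_read": IsolationMode(True, False),
    "snapshot": IsolationMode(False, True),
    "read_committed": IsolationMode(False, False),
    "read_uncommitted": IsolationMode(False, False),
}


def fully_consistent_modes() -> list[str]:
    """Return the modes that guarantee full consistency, sorted by name."""
    return sorted(
        name for name, mode in ISOLATION_MODES.items() if mode.full_consistency
    )


print(fully_consistent_modes())  # prints ['exclusive', 'snapshot']
```

Read this way, snapshot is the only mode that combines full consistency with no blocking of concurrent updates, while the default, repeatable_read, blocks updates but can still emit a record twice.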

table.include.list

An optional comma-separated list of strings that match fully-qualified table identifiers for tables to be monitored. Any table not included in this config property is excluded from monitoring. Each identifier is in the form schemaName.tableName. By default the connector monitors every non-system table in each monitored schema. May not be used with “Table excluded”.

Type: list

Importance: medium

table.exclude.list

An optional comma-separated list of strings that match fully-qualified table identifiers for tables to be excluded from monitoring. Any table not included in this config property is monitored. Each identifier is in the form schemaName.tableName. May not be used with “Table included”.

Type: list

Importance: medium
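Because the two table filters are mutually exclusive, it can help to check a configuration before submitting it. The following is a minimal Python sketch, not part of the connector: the helper name build_table_filter and the example table names are hypothetical, and the function only joins identifiers into the comma-separated form described above.

```python
def build_table_filter(include=None, exclude=None):
    """Return the table filter properties as a dict of config strings.

    Hypothetical helper: it only enforces the rule that
    table.include.list and table.exclude.list cannot be combined.
    """
    if include and exclude:
        raise ValueError(
            "table.include.list and table.exclude.list cannot be used together"
        )
    config = {}
    if include:
        config["table.include.list"] = ",".join(include)
    if exclude:
        config["table.exclude.list"] = ",".join(exclude)
    return config


# The table names below (dbo.customers, dbo.orders) are placeholders.
print(build_table_filter(include=["dbo.customers", "dbo.orders"]))
```

If neither list is passed, the helper returns an empty dict, which corresponds to the default behavior of monitoring every non-system table.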

snapshot.mode

A mode for taking an initial snapshot of the structure and, optionally, the data of captured tables. Once the snapshot is complete, the connector continues reading change events from the database.

initial - (Default) Takes a snapshot of the structure and data of captured tables. Useful if topics should be populated with a complete representation of the data from the captured tables.

schema_only - Takes a snapshot of the structure of captured tables only. Useful if only changes that happen from now onwards should be propagated to topics.

initial_only - Takes a snapshot of structure and data like initial, but does not transition into streaming changes once the snapshot has completed.

Type: string

Default: initial

Valid Values: initial, initial_only, schema_only

Importance: low

datatype.propagate.source.type

A comma-separated list of regular expressions that match database-specific data type names. For columns with a matching type, the connector adds the data type’s original type and original length as parameters to the corresponding field schemas in the emitted change records.

Type: list

Importance: low

tombstones.on.delete

Controls whether a tombstone event is generated after a delete event. When set to true, a delete operation is represented by a delete event and a subsequent tombstone event. When set to false, only a delete event is sent. Emitting the tombstone event (the default behavior) allows Kafka to completely delete all events pertaining to the given key once the source record is deleted.

Type: boolean

Default: true

Importance: high
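To illustrate why the tombstone matters, here is a rough Python sketch that mimics the end state of log compaction for a key: later records replace earlier ones, and a record with a null value (the tombstone) removes the key. The function name, keys, and payloads are made up for illustration; real delete events carry the connector’s full change event structure.

```python
def compact(records):
    """Simulate the end state of a compacted topic.

    records: iterable of (key, value) pairs in offset order.
    A value of None stands for a tombstone.
    """
    state = {}
    for key, value in records:
        if value is None:
            state.pop(key, None)  # tombstone: the key is removed entirely
        else:
            state[key] = value  # the latest value for a key wins
    return state


# Illustrative payloads only; not the real change event schema.
with_tombstone = [
    (1, {"op": "c"}),
    (1, {"op": "d"}),  # delete event
    (1, None),         # tombstone emitted when tombstones.on.delete is true
]
without_tombstone = with_tombstone[:2]

print(compact(with_tombstone))     # {}
print(compact(without_tombstone))  # {1: {'op': 'd'}}
```

Without the tombstone, the delete event itself remains as the latest record for the key. Compaction applies only to topics whose cleanup policy is compact.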

column.exclude.list

Regular expressions matching columns to exclude from change events.

Type: list

Importance: medium

Connection details

poll.interval.ms

Positive integer value that specifies the number of milliseconds the connector should wait during each iteration for new change events to appear. Defaults to 1000 milliseconds, or 1 second.

Type: int

Default: 1000 (1 second)

Valid Values: [1,…]

Importance: low

max.batch.size

Positive integer value that specifies the maximum size of each batch of events that should be processed during each iteration of this connector.

Type: int

Default: 1000

Valid Values: [1,…,5000]

Importance: low

event.processing.failure.handling.mode

Specifies how the connector should react to exceptions during processing of change events.

Type: string

Default: fail

Valid Values: fail, skip, warn

Importance: low

heartbeat.interval.ms

Controls how frequently, in milliseconds, the connector sends heartbeat messages to a Kafka topic. With the default value of 0, the connector does not send heartbeat messages.

Type: int

Default: 0

Valid Values: [0,…]

Importance: low

database.history.skip.unparseable.ddl

A Boolean value that specifies whether the connector should ignore malformed or unknown database statements (true), or stop processing so a human can fix the issue (false). Defaults to false. Consider setting this to true to ignore unparseable statements.

Type: boolean

Default: false

Importance: low
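The numeric properties in this group have documented ranges, so a quick local check can catch an out-of-range value before the connector is created. The sketch below is an illustration only: the RANGES table is copied from the “Valid Values” entries above, and the function name check_ranges is hypothetical.

```python
# Allowed ranges copied from the "Valid Values" entries in this section.
# None means no documented upper bound.
RANGES = {
    "poll.interval.ms": (1, None),
    "max.batch.size": (1, 5000),
    "heartbeat.interval.ms": (0, None),
}


def check_ranges(config):
    """Return a list of human-readable problems; empty if all values fit."""
    problems = []
    for name, (low, high) in RANGES.items():
        if name not in config:
            continue  # property omitted, so the default applies
        value = int(config[name])
        if value < low or (high is not None and value > high):
            problems.append(f"{name}={value} is out of range")
    return problems


print(check_ranges({"poll.interval.ms": "500", "max.batch.size": "10000"}))
# ['max.batch.size=10000 is out of range']
```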

Connector details

provide.transaction.metadata

Stores transaction metadata information in a dedicated topic and enables transaction metadata extraction together with event counting.

Type: boolean

Default: false

Importance: low

decimal.handling.mode

Specifies how DECIMAL and NUMERIC columns should be represented in change events:

precise - (Default) Uses java.math.BigDecimal to represent values, which are encoded in the change events using a binary representation and Kafka Connect’s org.apache.kafka.connect.data.Decimal type.

string - Uses a string to represent values.

double - Represents values using Java’s double, which may lose precision but is far easier to use in consumers.

Type: string

Default: precise

Valid Values: double, precise, string

Importance: medium
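With the precise mode, a consumer that receives the raw bytes has to rebuild the decimal value itself. As a minimal sketch, assuming the standard Kafka Connect Decimal encoding (the unscaled value as a big-endian two’s-complement integer, with the scale taken from the field schema), the decoding might look like this in Python. The function name is hypothetical.

```python
from decimal import Decimal


def decode_connect_decimal(raw: bytes, scale: int) -> Decimal:
    """Decode the bytes of a Kafka Connect Decimal logical type.

    raw is the unscaled value as a big-endian two's-complement integer;
    scale comes from the field schema's parameters.
    """
    unscaled = int.from_bytes(raw, byteorder="big", signed=True)
    # Build the Decimal from its parts so that no context rounding is
    # applied, even for values with more digits than the default precision.
    sign = 1 if unscaled < 0 else 0
    digits = tuple(int(d) for d in str(abs(unscaled)))
    return Decimal((sign, digits, -scale))


print(decode_connect_decimal(b"\x30\x39", scale=2))  # 123.45
print(decode_connect_decimal(b"\xff", scale=2))      # -0.01
```

Many schema-aware deserializers perform this conversion automatically; the sketch is mainly relevant when the bytes reach the application unconverted.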

binary.handling.mode

Specifies how binary (blob, binary, etc.) columns should be represented in change events:

bytes - (Default) Represents binary data as a byte array.

base64 - Represents binary data as a base64-encoded string.

hex - Represents binary data as a hex-encoded (base16) string.

Type: string

Default: bytes

Valid Values: base64, bytes, hex

Importance: low
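On the consuming side, each mode needs a different step to get back to the original bytes. The following Python sketch shows one way to do that; the function name decode_binary and the sample values are illustrative assumptions, not part of the connector.

```python
import base64


def decode_binary(value, mode="bytes"):
    """Turn a binary column value back into bytes for a given mode."""
    if mode == "bytes":
        return bytes(value)  # already a bytes-like object
    if mode == "base64":
        return base64.b64decode(value)
    if mode == "hex":
        return bytes.fromhex(value)
    raise ValueError(f"unsupported binary.handling.mode: {mode}")


raw = b"\x01\x02\xff"
print(decode_binary("AQL/", "base64") == raw)  # True
print(decode_binary("0102ff", "hex") == raw)   # True
```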

time.precision.mode

Time, date, and timestamp values can be represented with different kinds of precision:

adaptive - (Default) Bases the precision of time, date, and timestamp values on the database column’s precision.

adaptive_time_microseconds - Like adaptive, but TIME fields always use microsecond precision.

connect - Always represents time, date, and timestamp values using Kafka Connect’s built-in representations for Time, Date, and Timestamp, which use millisecond precision regardless of the database column’s precision.

Type: string

Default: adaptive

Valid Values: adaptive, adaptive_time_microseconds, connect

Importance: medium
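As a rough illustration of the connect mode, the Kafka Connect logical types are integers underneath: Timestamp is milliseconds since the Unix epoch, Date is days since the epoch, and Time is milliseconds since midnight. The Python sketch below converts such raw integers, assuming they arrive unconverted (for example, in schemaless JSON output). The function names are hypothetical.

```python
from datetime import date, datetime, time, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def from_connect_timestamp(millis: int) -> datetime:
    """Connect Timestamp: milliseconds since the Unix epoch (UTC)."""
    return EPOCH + timedelta(milliseconds=millis)


def from_connect_date(days: int) -> date:
    """Connect Date: days since the Unix epoch."""
    return date(1970, 1, 1) + timedelta(days=days)


def from_connect_time(millis: int) -> time:
    """Connect Time: milliseconds since midnight."""
    return (datetime.min + timedelta(milliseconds=millis)).time()


print(from_connect_timestamp(1000))   # 1970-01-01 00:00:01+00:00
print(from_connect_date(1))           # 1970-01-02
print(from_connect_time(3_600_000))   # 01:00:00
```

The adaptive modes can use other units (such as microseconds), so these helpers apply only when time.precision.mode is connect.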

cleanup.policy

Sets the topic cleanup policy.

Type: string

Default: delete

Valid Values: compact, delete

Importance: medium

database.history.store.only.captured.tables.ddl

A Boolean value that specifies whether the connector records schema structures from all tables in a schema or database, or only from tables that are designated for capture. Defaults to false.

false - During a database snapshot, the connector records the schema data for all non-system tables in the database, including tables that are not designated for capture. It’s best to retain the default setting. If you later decide to capture changes from tables that you did not originally designate for capture, the connector can easily begin to capture data from those tables, because their schema structure is already stored in the schema history topic.

true - During a database snapshot, the connector records the table schemas only for the tables from which Debezium captures change events. If you change the default value, and you later configure the connector to capture data from other tables in the database, the connector lacks the schema information that it requires to capture change events from the tables.

Type: boolean

Default: false

Importance: low

Output messages

output.data.format

Sets the output Kafka record value format. Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. You must have Confluent Cloud Schema Registry configured to use a schema-based message format such as AVRO, JSON_SR, or PROTOBUF.

Type: string

Default: JSON

Importance: high

output.key.format

Sets the output Kafka record key format. Valid entries are AVRO, JSON_SR, PROTOBUF, STRING, or JSON. You must have Confluent Cloud Schema Registry configured to use a schema-based message format such as AVRO, JSON_SR, or PROTOBUF.

Type: string

Default: JSON

Valid Values: AVRO, JSON, JSON_SR, PROTOBUF, STRING

Importance: high

after.state.only

Controls whether the generated Kafka record should contain only the state after applying change events.

Type: boolean

Default: true

Importance: low

Number of tasks for this connector

tasks.max

Maximum number of tasks for the connector.

Type: int

Valid Values: [1,…,1]

Importance: high

Additional Configs

value.converter.connect.meta.data

Allows the Connect converter to add its metadata to the output schema. Applicable for Avro converters.

Type: boolean

Importance: low

errors.tolerance

Configures the connector’s error handling behavior. Warning: Use this property with caution for source connectors, because it may lead to data loss. If you set this property to all, the connector does not fail on errant records; instead, it logs them (and sends them to the DLQ for sink connectors) and continues processing. If you set this property to none, the connector task fails on errant records.

Type: string

Default: none

Importance: low

key.converter.key.schema.id.serializer

The class name of the schema ID serializer for keys. This is used to serialize schema IDs in the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.PrefixSchemaIdSerializer

Importance: low

key.converter.key.subject.name.strategy

Determines how to construct the subject name for key schema registration.

Type: string

Default: TopicNameStrategy

Importance: low

value.converter.decimal.format

Specifies the JSON/JSON_SR serialization format for Connect DECIMAL logical type values. Two literals are allowed:

BASE64 - Serializes DECIMAL logical types as base64-encoded binary data.

NUMERIC - Serializes Connect DECIMAL logical type values in JSON/JSON_SR as a number representing the decimal value.

Type: string

Default: BASE64

Importance: low
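The difference between the two formats shows up when a consumer parses the JSON. The sketch below is a hedged illustration that assumes decimal.handling.mode is precise, that the BASE64 string holds the unscaled integer in big-endian two’s-complement form, and that the scale is known to the caller. The field name price and the function name read_decimal are hypothetical.

```python
import base64
import json
from decimal import Decimal


def read_decimal(json_value, scale=None):
    """Interpret one DECIMAL field taken from a parsed JSON record.

    NUMERIC: the value is already a JSON number.
    BASE64: the value is a base64 string holding the unscaled integer;
    the scale must be supplied by the caller.
    """
    if isinstance(json_value, str):
        raw = base64.b64decode(json_value)
        unscaled = int.from_bytes(raw, byteorder="big", signed=True)
        sign = 1 if unscaled < 0 else 0
        digits = tuple(int(d) for d in str(abs(unscaled)))
        return Decimal((sign, digits, -scale))
    return Decimal(str(json_value))


# parse_float=Decimal keeps NUMERIC values exact instead of going through float.
numeric_record = json.loads('{"price": 123.45}', parse_float=Decimal)
base64_record = json.loads('{"price": "MDk="}')

print(read_decimal(numeric_record["price"]))          # 123.45
print(read_decimal(base64_record["price"], scale=2))  # 123.45
```

NUMERIC is easier to read in consumers, while BASE64 requires the scale to be known out of band when the JSON carries no schema.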

value.converter.ignore.default.for.nullables

When set to true, this property ensures that the corresponding value in the Kafka record is NULL, instead of showing the default column value. Applicable for AVRO, PROTOBUF, and JSON_SR converters.

Type: boolean

Default: false

Importance: low

value.converter.reference.subject.name.strategy

Sets the subject reference name strategy for the value. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. The subject reference name strategy can be selected only for the PROTOBUF format, with DefaultReferenceSubjectNameStrategy as the default.

Type: string

Default: DefaultReferenceSubjectNameStrategy

Importance: low

value.converter.replace.null.with.default

Whether to replace null values with the default value for fields that have a default. When set to true, the default value is used; otherwise, null is used. Applicable for the JSON converter.

Type: boolean

Default: true

Importance: low

value.converter.schemas.enable

Includes schemas within each of the serialized values. Input messages must contain schema and payload fields and may not contain additional fields. For plain JSON data, set this to false. Applicable for the JSON converter.

Type: boolean

Default: false

Importance: low

value.converter.value.schema.id.serializer

The class name of the schema ID serializer for values. This is used to serialize schema IDs in the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.PrefixSchemaIdSerializer

Importance: low

value.converter.value.subject.name.strategy

Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Type: string

Default: TopicNameStrategy

Importance: low

Auto-restart policy

auto.restart.on.user.error

Enables the connector to automatically restart on user-actionable errors.

Type: boolean

Default: true

Importance: medium

Next Steps

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud for Apache Flink, see the Cloud ETL Demo. This example also shows how to use Confluent CLI to manage your resources in Confluent Cloud.