MongoDB Atlas Sink Connector for Confluent Cloud

The fully-managed MongoDB Atlas Sink connector for Confluent Cloud maps and persists events from Apache Kafka® topics directly to a MongoDB Atlas database collection. The connector supports Avro, JSON Schema, Protobuf, JSON (schemaless), String, or BSON data from Apache Kafka® topics. The connector ingests events from Kafka topics directly into a MongoDB Atlas database, exposing the data to services for querying, enrichment, and analytics.

Note

This Quick Start is for the fully-managed Confluent Cloud connector. If you are installing the connector locally for Confluent Platform, see MongoDB Kafka Connector documentation.

The fully-managed MongoDB Atlas Sink connector for Confluent Cloud connects to resources in the same region and cloud provider as your Confluent Cloud cluster. If your MongoDB Atlas instance is in a different region or cloud provider than your Confluent Cloud cluster, contact Confluent Support to enable cross-region or cross-cloud connectivity before you configure the connector.

If you require private networking for fully-managed connectors, make sure to set up the proper networking beforehand. For more information, see Manage Networking for Confluent Cloud Connectors.

Features

The MongoDB Atlas Sink connector supports both MongoDB Atlas and self-managed MongoDB databases.

The connector provides the following features:

Collections: Collections can be auto-created based on topic names.

Database authentication: The connector supports both username/password-based and X.509 certificate-based authentication. For more information on MONGODB-X.509-based authentication setup, see connector authentication.

Input data formats: The connector supports Avro, JSON Schema, Protobuf, JSON (schemaless), String, or BSON input data formats. Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

Select configuration properties:

"max.num.retries": How often retries should be attempted on write errors."max.batch.size": The maximum number of sink records to batch together for processing."delete.on.null.values": Whether the connector should delete documents with matching key values when the value is null."doc.id.strategy": The strategy to generate a unique document ID (_id)."write.strategy": Defines the behavior of bulk write operations made on a MongoDB collection.

See Configuration Properties for all property values and definitions.

Client-side encryption (CSFLE and CSPE) support: The connector supports CSFLE and CSPE for sensitive data. For more information about CSFLE or CSPE setup, see the connector configuration.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Limitations

Be sure to review the following information.

For connector limitations, see MongoDB Atlas Sink Connector limitations.

If you plan to use one or more Single Message Transforms (SMTs), see SMT Limitations.

Quick Start

Use this quick start to get up and running with the Confluent Cloud MongoDB Atlas sink connector. The quick start provides the basics of selecting the connector and configuring it to consume data from Kafka and persist the data to a MongoDB database.

- Prerequisites

Authorized access to a Confluent Cloud cluster on Amazon Web Services (AWS), Microsoft Azure (Azure), or Google Cloud.

The Confluent CLI installed and configured for the cluster. See Install the Confluent CLI.

Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

Access to a MongoDB database.

The MongoDB database service endpoint and the Kafka cluster must be in the same region.

The MongoDB hostname address must provide a service record (SRV) when connecting to MONGODB_ATLAS. For MONGODB_SELF_MANAGED, a standard connection string is required.

For networking considerations, see Networking and DNS. To use a set of public egress IP addresses, see Public Egress IP Addresses for Confluent Cloud Connectors.

If you have a VPC-peered cluster in Confluent Cloud, consider configuring a PrivateLink Connection between MongoDB Atlas and the VPC.

Kafka cluster credentials. The following lists the different ways you can provide credentials.

Enter an existing service account resource ID.

Create a Confluent Cloud service account for the connector. Make sure to review the ACL entries required in the service account documentation. Some connectors have specific ACL requirements.

Create a Confluent Cloud API key and secret. To create a key and secret, you can use confluent api-key create or you can autogenerate the API key and secret directly in the Cloud Console when setting up the connector.

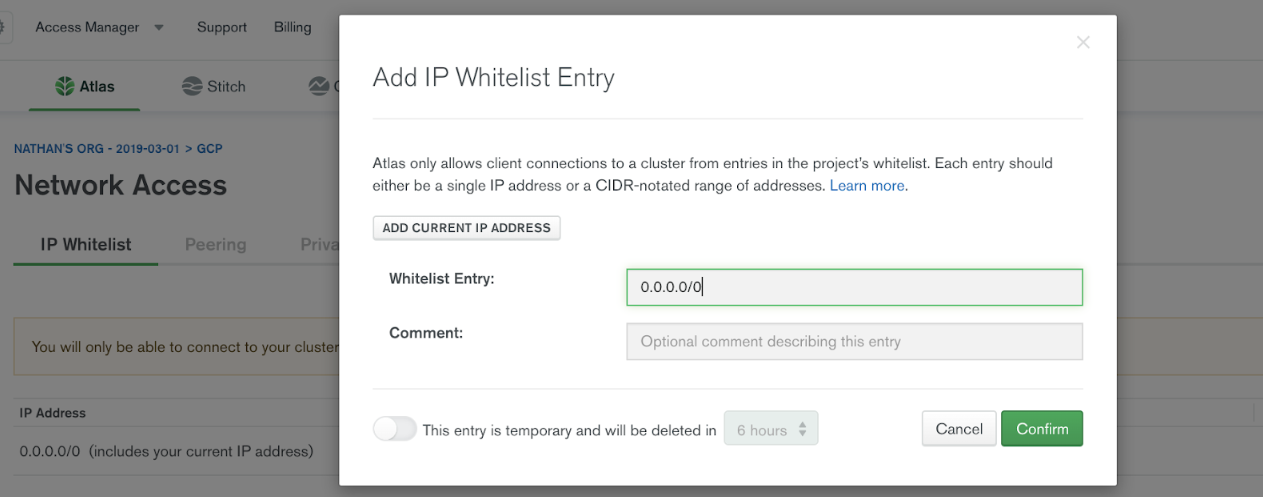

Adding an IP Whitelist Entry

Important

For networking considerations, see Networking and DNS.

To use a set of public egress IP addresses, see Public Egress IP Addresses for Confluent Cloud Connectors.

By default, MongoDB Atlas does not allow external network connections from the Internet. To allow external connections, you can add a specific IP or a CIDR IP range using the IP Whitelist entry dialog box under the Network Access menu in MongoDB.

In order for Confluent Cloud to connect to MongoDB Atlas, you need to specify the public IP address of your Confluent Cloud cluster. Add all of the Confluent Cloud egress IP addresses to the whitelist entry to your MongoDB Atlas cluster.

Using the Confluent Cloud Console

Step 1: Launch your Confluent Cloud cluster

To create and launch a Kafka cluster in Confluent Cloud, see Create a kafka cluster in Confluent Cloud.

Step 2: Add a connector

In the left navigation menu, click Connectors. If you already have connectors in your cluster, click + Add connector.

Step 3: Select your connector

Click the MongoDB Atlas Sink connector card.

Step 4: Enter the connector details

Note

Ensure you have all your prerequisites completed.

An asterisk ( * ) designates a required entry.

At the Add MongoDB Atlas Sink Connector screen, complete the following:

If you’ve already populated your Kafka topics, select the topics you want to connect from the Topics list.

To create a new topic, click +Add new topic.

Select the way you want to provide Kafka Cluster credentials. You can choose one of the following options:

My account: This setting allows your connector to globally access everything that you have access to. With a user account, the connector uses an API key and secret to access the Kafka cluster. This option is not recommended for production.

Service account: This setting limits the access for your connector by using a service account. This option is recommended for production.

Use an existing API key: This setting allows you to specify an API key and a secret pair. You can use an existing pair or create a new one. This method is not recommended for production environments.

Note

Freight clusters support only service accounts for Kafka authentication.

Click Continue.

Configure the authentication properties:

MongoDB instance type

MongoDB instance type: Specify the MongoDB deployment type. Use MONGODB_ATLAS for cloud hosted Atlas clusters or MONGODB_SELF_MANAGED for self hosted MongoDB instances.

MongoDB authentication mechanism

Authentication mechanism: Choose an authentication mechanism for MongoDB. Use SCRAM-SHA-256 for username or password authentication. Use MONGODB-X509 for certificate-based authentication. For MONGODB-X509, you must configure the SSL keystore properties.

MongoDB credentials

Connection host: The MongoDB host with connection string options. Use a hostname address and not a full URL. For example, use

cluster4-r5q3r7.gcp.mongodb.net/?readPreference=secondaryfor MONGODB_ATLAS and54.190.171.123:27017/?authSource=adminfor MONGODB_SELF_MANAGED.Note

You don’t need to manually add the connection string parameters

authMechanism=MONGODB-X509&authSource=$externalas part of the host. The connector automatically includes the parameter when you selectMONGODB-X.509as the authentication mechanism.Connection user: The MongoDB Atlas connection user.

Connection password: The MongoDB Atlas connection password. When entering the password, make sure that any special characters are URL encoded.

MongoDB Database Details

Database name: The MongoDB Atlas database name.

Collection name: The collection name to write to. If the connector is sinking data from multiple topics, this is the default collection the topics are mapped to.

SSL Configuration

SSL keystore file: Upload the SSL keystore file containing the server certificate and enter the SSL keystore password used to access the keystore.

SSL keystore password: Password used to access the keystore.

SSL truststore file: Upload the SSL truststore file containing a server CA certificate and enter the SSL truststore password used to access the truststore.

SSL truststore password: Password used to access the truststore.

Click Continue.

Note

See Configuration Properties for all property values and definitions.

Input Kafka record value format: Sets the input Kafka record value format. Valid entries are AVRO, JSON_SR, PROTOBUF, JSON, STRING or BSON. Note that you need to have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, and PROTOBUF.

Data decryption

Enable Client-Side Field Level Encryption for data decryption. Specify a Service Account to access the Schema Registry and associated encryption rules or keys with that schema. Select the connector behavior (

ERRORorNONE) on data decryption failure. If set toERROR, the connector fails and writes the encrypted data in the DLQ. If set toNONE, the connector writes the encrypted data in the target system without decryption. For more information on CSFLE or CSPE setup, see Manage encryption for connectors.

Show advanced configurations

Schema context: Select a schema context to use for this connector, if using a schema-based data format. This property defaults to the Default context, which configures the connector to use the default schema set up for Schema Registry in your Confluent Cloud environment. A schema context allows you to use separate schemas (like schema sub-registries) tied to topics in different Kafka clusters that share the same Schema Registry environment. For example, if you select a non-default context, a Source connector uses only that schema context to register a schema and a Sink connector uses only that schema context to read from. For more information about setting up a schema context, see What are schema contexts and when should you use them?.

Input Kafka record key format: Sets the input Kafka record key format. Valid entries are AVRO, BYTES, JSON, JSON_SR, PROTOBUF, or STRING. Note that you need to have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, and PROTOBUF.

Post Processor Chain: A comma separated list of post processor classes to process the data before saving to MongoDB.

Field Renamer Mapping: An inline JSON array with objects describing field name mappings. Example:

[{\"oldName\":\"key.fieldA\",\"newName\":\"field1\"},{\"oldName\":\"value.xyz\",\"newName\":\"abc\"}].Topic override map: A JSON map to override sink connector properties for specific topics. Specify the map as

{"<topicName>.<property>": "<value>"}. For example,{"orders.collection": "orders_collection", "orders.database": "orders_db"}routes the orders topic to the orders_collection in the orders_db database. Supported properties arecollection,database, and other topic-level sink configurations.

Additional Configs

Value Converter Replace Null With Default: Specifies whether to replace fields that have a default value and that are null to the default value. When set to

true, the connector uses the default value; otherwise, it usesnull. Applies to theJSONconverter.Value Converter Schema ID Deserializer: Sets the class name of the schema ID deserializer for values. The deserializer reads schema IDs from message headers.

Value Converter Reference Subject Name Strategy: Sets the subject reference name strategy for values. Valid entries are

DefaultReferenceSubjectNameStrategyorQualifiedReferenceSubjectNameStrategy. You can use this strategy only withPROTOBUFformat; the default strategy isDefaultReferenceSubjectNameStrategy.Schema ID For Value Converter: Sets the schema ID to use for deserialization when using

ConfigSchemaIdDeserializer. This lets you specify a fixed schema ID for deserializing message values. This property is applicable only whenvalue.converter.value.schema.id.deserializeris set toConfigSchemaIdDeserializer.Value Converter Schemas Enable: Includes schema within each of the serialized values. Input messages must contain

schemaandpayloadfields and must not contain additional fields. For plainJSONdata, set this tofalse. Applies to theJSONconverter.Errors Tolerance: Use this property to configure the connector’s error handling behavior.

Warning

Use this property with caution for sink connectors, as it can lead to data loss. If you set this property to

all, the connector does not fail on errant records, but logs them (and sends to DLQ for sink connectors) and continues processing. If you set this property tonone, the connector task fails on errant records.Value Converter Ignore Default For Nullables: When set to

true, this property ensures that the corresponding record in Kafka isnull, instead of showing the default column value. Applies to theAVRO,PROTOBUF, andJSON_SRconverters.Key Converter Schema ID Deserializer: Sets the class name of the schema ID deserializer for keys. The deserializer reads schema IDs from message headers.

Value Converter Decimal Format: Specifies the

JSONorJSON_SRserialization format for ConnectDECIMALlogical type values with two allowed literals:BASE64to serializeDECIMALlogical types as base64 encoded binary data, andNUMERICto serializeDECIMALlogical type values inJSONorJSON_SRas a number representing the decimal value.Schema GUID For Key Converter: Sets the schema GUID to use for deserialization when using

ConfigSchemaIdDeserializer. This lets you specify a fixed schema GUID for deserializing message keys. This property is applicable only whenkey.converter.key.schema.id.deserializeris set toConfigSchemaIdDeserializer.Schema GUID For Value Converter: Sets the schema GUID to use for deserialization when using

ConfigSchemaIdDeserializer. This lets you specify a fixed schema GUID for deserializing message values. This property is applicable only whenvalue.converter.value.schema.id.deserializeris set toConfigSchemaIdDeserializer.Value Converter Connect Meta Data: Enables the Connect converter to add its metadata to the output schema. Applies to Avro converters.

Value Converter Value Subject Name Strategy: Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Key Converter Key Subject Name Strategy: Determines how to construct the subject name for key schema registration.

Schema ID For Key Converter: Sets the schema ID to use for deserialization when using

ConfigSchemaIdDeserializer. This lets you specify a fixed schema ID for deserializing message keys. This property is applicable only whenkey.converter.key.schema.id.deserializeris set toConfigSchemaIdDeserializer.

Auto-restart policy

Enable Connector Auto-restart: Enables the auto-restart behavior of the connector and its task in the event of user-actionable errors. Defaults to

true, enabling the connector to automatically restart in case of user-actionable errors. Set this property tofalseto disable auto-restart for failed connectors. In such cases, you would need to manually restart the connector.

Consumer configuration

Max poll interval(ms): Sets the maximum delay between subsequent consume requests to Kafka. Use this property to improve connector performance in cases when the connector cannot send records to the sink system. The default is 300,000 milliseconds (5 minutes).

Max poll records: Sets the maximum number of records to consume from Kafka in a single request. Use this property to improve connector performance in cases when the connector cannot send records to the sink system. The default is 500 records.

Input messages

Change Data Capture handler: Sets the class name of the CDC handler to use for processing. You can capture CDC events with this connector and perform corresponding insert, update, and delete operations to a destination MongoDB cluster. Valid options are

None,MongoDbChangeStreamHandler,DebeziumMongoDbHandler,DebeziumMySqlHandler,DebeziumPostgresHandler, orQlikRdbmsHandler. If not used, this property defaults toNone. For more information, see mongodb-sink-cdc.

Writes

Delete on null values: Select whether or not the connector deletes documents with matching key values when the value is null. The default is false.

Write Model Strategy: The class that specifies the

WriteModelto use for bulk writes. Defaults toDefaultWriteModelStrategy. Valid entries areDefaultWriteModelStrategy,ReplaceOneDefaultStrategy,InsertOneDefaultStrategy,ReplaceOneBusinessKeyStrategy,DeleteOneDefaultStrategy,UpdateOneTimestampsStrategy,UpdateOneBusinessKeyTimestampStrategy, orUpdateOneDefaultStrategy. For time-series collections, theDefaultWriteModelStrategywill internally default toInsertOneDefaultStrategy. For normal collections, it defaults toReplaceOneDefaultStrategy. For detailed information about each write strategy, see Strategies.Max batch size: Enter the maximum number of records to batch together for processing. The default is

0(uses the server default setting).Use ordered bulk writes: When batch processing is used, this property sets whether the connector writes the batches using ordered bulk writes. Defaults to

true.Rate limiting timeout: After a rate limit is reached, this sets how long in milliseconds (ms) the connector waits before continuing to process data. Defaults to

0.Rate limiting batch number: The number of processed batches that trigger a rate limit. Defaults to

0(that is, no rate limiting).Delete Write Model Strategy: The class that handles how to build the delete write models for the sink documents.

Connection details

Max number of retries: Enter the maximum number of retries on a write error. The default value is 3 retries.

Retry defer timeout (ms): Enter value in milliseconds (ms) that a retry gets deferred. The default is 5000 ms (5 seconds).

ID strategies

Document ID strategy: Select the strategy to generate a unique document ID

_id. To delete a document when the value is null, this has to be set toFullKeyStrategy,PartialKeyStrategy, orProvidedInKeyStrategy. The default isBsonOidStrategy. For more information, see DocumentIdAdder.Document ID strategy overwrite existing: Whether the connector should overwrite existing values in the

_idfield when the strategy defined indoc.id.strategyis applied.Document ID strategy UUID format: The BSON output format when using

UuidStrategy. Options areStringorBinary.Document ID strategy key projection type: For use with the

PartialKeyStrategy. Allows custom key fields to be projected for the ID strategy. Use eitherAllowListorBlockList.Document ID strategy key projection list: For use with the

PartialKeyStrategy. Allows custom key fields to be projected for the ID strategy. A comma-separated list of fields names for key projection.Document ID strategy value projection type: For use with the

PartialValueStrategy. Allows custom value fields to be projected for the ID strategy. Use eitherAllowListorBlockList.Document ID strategy value projection list: For use with the

PartialValueStrategy. Allows custom value fields to be projected for the ID strategy. A comma-separated list of field names for value projection.

Time Series configuration

Timefield: The name of the top-level time field that contains the date in each time-series document. Setting this config will create a time-series collection where each document will have a BSON date value for the time field. Time-series collections were introduced in MongoDB v5.0, which is only available for dedicated clusters in MongoDB Atlas. For more information, see Time Series Properties.

Auto Conversion: Whether to convert the data in the time field to BSON date format. Supported formats include integer, long and string.

Auto Convert Date Format: The string pattern to convert the source data from format to convert the source data from. The setting expects the string representation to contain both date and time information and uses the Java

DateTimeFormatter.ofPattern(pattern, locale) API for the conversion. If the string only contains date information, then the time since epoch is from the start of that day. If a string representation does not contain time-zone offset, then the setting interprets the extracted date and time as UTC.Locale Language Tag: The

DateTimeFormatter’s locale language tag to use with the date pattern.Metafield: The name of the top-level field that contains metadata in each time-series document. The metadata in the specified field should be data that is used to label a unique series of documents. The field can be of any type except array.

Expire After Seconds: The amount of seconds the data remains in MongoDB before MongoDB deletes it. Omitting this field means data will not be deleted automatically.

Granularity: The expected interval between subsequent measurements for a time-series. Set this to

Noneor leave it blank to disable time-series collection creation.

Server API

Server API version: The server API version to use. Disabled by default.

Deprecation errors: Sets whether the connector requires use of deprecated server APIs to be reported as errors.

Strict: Sets whether the application requires strict server API version enforcement.

Namespace mapping

Namespace mapper class: The class that determines the namespace to write the sink data to. By default, this is based on the ‘database’ configuration and either the topic name or the ‘collection’ configuration.

Key field for destination database name: The key field to use as the destination database name.

Key field for destination collection name: The key field to use as the destination collection name.

Value field for destination database name: The value field to use as the destination database name.

Value field for destination collection name: The value field to use as the destination collection name.

Mapped field error: Whether to throw an error if the mapped field is missing or invalid. Defaults to false.

Error handling Mongo

Error tolerance: Use this property if you would like to configure the connector’s error handling behavior differently from the Connect framework’s.

Transforms

Single Message Transforms: To add a new SMT, see Add transforms. For more information about unsupported SMTs, see Unsupported transformations.

Processing position

Set offsets: Click Set offsets to define a specific offset for this connector to begin procession data from. For more information on managing offsets, see Manage offsets.

Click Continue.

Based on the number of topic partitions you select, you will be provided with a recommended number of tasks. One task can handle up to 100 partitions.

To change the number of recommended tasks, enter the number of tasks for the connector to use in the Tasks field.

Click Continue.

Step 5: Check MongoDB

After the connector is running, verify that messages are populating your MongoDB database.

Tip

When you launch a connector, a Dead Letter Queue topic is automatically created. See View Connector Dead Letter Queue Errors in Confluent Cloud for details.

Using the Confluent CLI

Complete the following steps to set up and run the connector using the Confluent CLI.

Note

Make sure you have all your prerequisites completed.

Step 1: List the available connectors

Enter the following command to list available connectors:

confluent connect plugin list

Step 2: List the connector configuration properties

Enter the following command to show the connector configuration properties:

confluent connect plugin describe <connector-plugin-name>

The command output shows the required and optional configuration properties.

Step 3: Create the connector configuration file

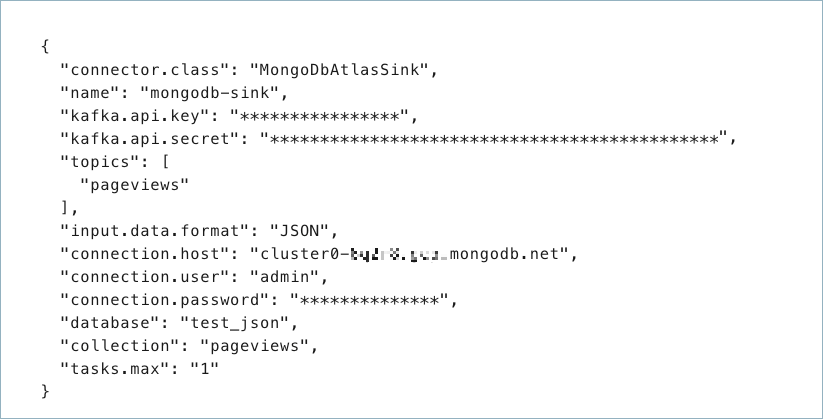

Create a JSON file that contains the connector configuration properties. The following example shows the required connector properties.

{

"connector.class": "MongoDbAtlasSink",

"name": "confluent-mongodb-sink",

"kafka.auth.mode": "KAFKA_API_KEY",

"kafka.api.key": "<my-kafka-api-key",

"kafka.api.secret": "<my-kafka-api-secret>",

"input.data.format" : "JSON",

"connection.host": "<database-host-address>",

"connection.user": "<my-username>",

"connection.password": "<my-password>",

"topics": "<kafka-topic-name>",

"max.num.retries": "3",

"retries.defer.timeout": "5000",

"max.batch.size": "0",

"database": "<database-name>",

"collection": "<collection-name>",

"tasks.max": "1"

}

Note the following property definitions:

"connector.class": Identifies the connector plugin name."name": Sets a name for your new connector.

"kafka.auth.mode": Identifies the connector authentication mode you want to use. There are two options:SERVICE_ACCOUNTorKAFKA_API_KEY(the default). To use an API key and secret, specify the configuration propertieskafka.api.keyandkafka.api.secret, as shown in the example configuration (above). To use a service account, specify the Resource ID in the propertykafka.service.account.id=<service-account-resource-ID>. To list the available service account resource IDs, use the following command:confluent iam service-account list

For example:

confluent iam service-account list Id | Resource ID | Name | Description +---------+-------------+-------------------+------------------- 123456 | sa-l1r23m | sa-1 | Service account 1 789101 | sa-l4d56p | sa-2 | Service account 2

"input.data.format": Sets the input Kafka record value format (data coming from the Kafka topic). Valid entries are AVRO, JSON_SR, PROTOBUF, JSON, STRING, or BSON. You must have Confluent Cloud Schema Registry configured if using a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf)."connection.host": The MongoDB host with connection string options. Use a hostname address and not a full URL. For example, usecluster4-r5q3r7.gcp.mongodb.net/?readPreference=secondaryfor MONGODB_ATLAS and54.190.171.123:27017/?authSource=adminfor MONGODB_SELF_MANAGED.

Note

The connector does not support following connection string options in

connection.hostconfig:tlsCertificateKeyFile,tlsCertificateKeyFilePassword,tlsCAFile,tlsAllowInvalidCertificates,tlsInsecure,tlsAllowInvalidHostnames,authMechanism,authMechanismProperties,gssapiServiceName.Other options like

readPreference,readConcernLevel, orwdefined in MongoDB Connection String Options can be configured inconnection.hostconfiguration. For example,cluster4-r5q3r7.gcp.mongodb.net/?readPreference=secondary&readConcernLevel=local&appName=test&w=majority.- The connector supports connecting to self-managed MongoDB database.

Use

mongodb.instance.typeas MONGODB_SELF_MANAGED and specify theconnection.hostaccordingly to connect to self-managed instance. For example,54.190.171.123:27017/?authSource=admin.

"collection": The MongoDB collection name. For multiple topics, this is the default collection the topics are mapped to.The following are optional (with the exception of the number of tasks).

"max.num.retries": How often retries should be attempted on write errors. If not used, this property defaults to 3."retries.defer.timeout": How long (in milliseconds) a retry should get deferred. If not used, the default is 5000 ms."max.batch.size": The maximum number of sink records to batch together for processing. If not used, this property defaults to 0."delete.on.null.values": Whether the connector should delete documents with matching key values, when the value is null. If not used, this property defaults tofalse."doc.id.strategy": Sets the strategy to generate a unique document ID(_id). Enter the strategy to generate a unique document ID (_id). Valid entries areBsonOidStrategy,KafkaMetaDataStrategy,FullKeyStrategy,PartialKeyStrategy,PartialValueStrategy,ProvidedInKeyStrategy,ProvidedInValueStrategy, orUuidStrategy. To delete the document when the value is null, you must set the strategy toFullKeyStrategy,PartialKeyStrategy, orProvidedInKeyStrategy. The default value isBsonOidStrategy. For more information, see DocumentIdAdder.Depending on the selected strategy, add the appropriate Document ID strategy projection list:

"key.projection.type": For use withPartialKeyStrategy. Use eitherallowlistorblocklistto allow or block the custom key fields to be projected for ID strategy. If not used, this property defaults tonone."key.projection.list": For use withPartialKeyStrategy. A comma-separated list of key fields to be projected for ID strategy."value.projection.type": For use withPartialValueStrategy. Use eitherallowlistorblocklistto allow or block the custom value fields to be projected for ID strategy. If not used, this property defaults tonone."value.projection.list": For use withPartialValueStrategy. A comma-separated list of value fields to be projected for ID strategy.

"write.strategy": Sets the write model for bulk write operations. Valid entries areDefaultWriteModelStrategy,ReplaceOneDefaultStrategy,InsertOneDefaultStrategy,ReplaceOneBusinessKeyStrategy,DeleteOneDefaultStrategy,UpdateOneTimestampsStrategy,UpdateOneBusinessKeyTimestampStrategy, orUpdateOneDefaultStrategy. If not used, this property defaults toDefaultWriteModelStrategy. For time-series collections, theDefaultWriteModelStrategywill internally default toInsertOneDefaultStrategy. For normal collections, it defaults toReplaceOneDefaultStrategy. For detailed information about each write strategy, see Strategies."cdc.handler": Sets the class name of CDC handler to use for processing. You can capture CDC events with the MongoDB Kafka Sink connector and perform corresponding insert, update, and delete operations to a destination MongoDB cluster. Valid entries areNone,MongoDbChangeStreamHandler,DebeziumMongoDbHandler,DebeziumMySqlHandler,DebeziumPostgresHandler, orQlikRdbmsHandler. If not used, this property defaults toNone. For more information, see mongodb-sink-cdc."timeseries.timefield": Sets the name of the top-level time field that contains the date in each time-series document. Setting this property will create a time-series collection where each document will have a BSON date as the value for the time field. Time-series collections were introduced in MongoDB v5.0, which is only available for dedicated clusters in MongoDB Atlas."timeseries.timefield.auto.convert": Whether to convert the data in the time field to BSON date format. Supported formats for data include integer, long and string. If not used, this property defaults tofalse."timeseries.timefield.auto.convert.date.format": Sets the DateTimeFormatter format to convert the source data from. The setting expects the string representation to contain both date and time information and uses the Java DateTimeFormatter.ofPattern(pattern, locale) API for the conversion. If the string only contains date information, then the time since epoch is from the start of that day. If a string representation does not contain time-zone offset, then the setting interprets the extracted date and time as UTC. If not used, this property defaults toyyyy-MM-dd[['T'][ ]][HH:mm:ss[[.][SSSSSS][SSS]][ ]VV[ ]'['VV']'][HH:mm:ss[[.][SSSSSS][SSS]][ ]X][HH:mm:ss[[.][SSSSSS][SSS]]]."timeseries.timefield.auto.convert.locale.language.tag": Sets the DateTimeFormatter locale language tag to use with the date pattern. See Language tags in HTML and XML for more information on constructing tags. If not used, this property defaults toen."timeseries.metafield": Sets the name of the top-level field that contains metadata in each time-series document. The metadata in the specified field should be data that is used to label a unique series of documents. The field can be of any type except array."timeseries.expire.after.seconds": Sets the number of seconds after which the document expires. MongoDB deletes expired documents automatically. If not used, this property default to0, which means data will not be deleted automatically."ts.granularity": Sets the interval granularity for subsequent measurements for a time-series. Valid entries areNone,seconds,minutes, orhours. If not used, this property defaults toNone. For normal collections,Noneis the only applicable value. For time-series collections, all entries are applicable andNoneinternally defaults toseconds.Enter the number of tasks for the connector. Refer to Confluent Cloud connector limitations for additional information.

Note

To enable CSFLE or CSPE for data encryption, specify the following properties:

csfle.enabled: Flag to indicate whether the connector honors CSFLE or CSPE rules.sr.service.account.id: A Service Account to access the Schema Registry and associated encryption rules or keys with that schema.csfle.onFailure: Configures the connector behavior (ERRORorNONE) on data decryption failure. If set toERROR, the connector fails and writes the encrypted data in the DLQ. If set toNONE, the connector writes the encrypted data in the target system without decryption.

When using CSFLE or CSPE with connectors that route failed messages to a Dead Letter Queue (DLQ), be aware that data sent to the DLQ is written in unencrypted plaintext. This poses a significant security risk as sensitive data that should be encrypted may be exposed in the DLQ.

Do not use DLQ with CSFLE or CSPE in the current version. If you need error handling for CSFLE- or CSPE-enabled data, use alternative approaches such as:

Setting the connector behavior to

ERRORto throw exceptions instead of routing to DLQImplementing custom error handling in your applications

Using

NONEto pass encrypted data through without decryption

For more information on CSFLE or CSPE setup, see Manage encryption for connectors.

Single Message Transforms: See the Single Message Transforms (SMT) documentation for details about adding SMTs using the CLI.

See Configuration Properties for all property values and definitions.

Step 4: Load the properties file and create the connector

Enter the following command to load the configuration and start the connector:

confluent connect cluster create --config-file <file-name>.json

For example:

confluent connect cluster create --config-file mongo-db-sink.json

Example output:

Created connector confluent-mongodb-sink lcc-ix4dl

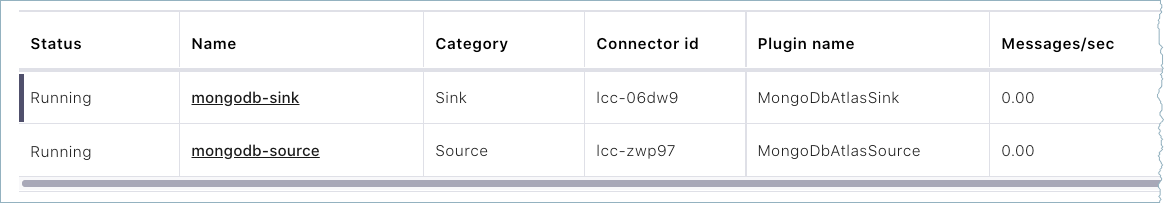

Step 5: Check the connector status

Enter the following command to check the connector status:

confluent connect cluster list

Example output:

ID | Name | Status | Type

+-----------+-------------------------+---------+------+

lcc-ix4dl | confluent-mongodb-sink | RUNNING | sink

Step 6: Check MongoDB

After the connector is running, verify that records are populating your MongoDB database.

Tip

When you launch a connector, a Dead Letter Queue topic is automatically created. See View Connector Dead Letter Queue Errors in Confluent Cloud for details.

Configuration Properties

Use the following configuration properties with the fully-managed connector. For self-managed connector property definitions and other details, see the connector docs in Self-managed connectors for Confluent Platform.

How should we connect to your data?

nameSets a name for your connector.

Type: string

Valid Values: A string at most 64 characters long

Importance: high

Schema Config

schema.context.nameAdd a schema context name. A schema context represents an independent scope in Schema Registry. It is a separate sub-schema tied to topics in different Kafka clusters that share the same Schema Registry instance. If not used, the connector uses the default schema configured for Schema Registry in your Confluent Cloud environment.

Type: string

Default: default

Importance: medium

Input messages

input.data.formatSets the input Kafka record value format. Valid entries are AVRO, JSON_SR, PROTOBUF, JSON, STRING or BSON. Note that you need to have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, and PROTOBUF.

Type: string

Default: JSON

Importance: high

input.key.formatSets the input Kafka record key format. Valid entries are AVRO, BYTES, JSON, JSON_SR, PROTOBUF, or STRING. Note that you need to have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, and PROTOBUF

Type: string

Default: STRING

Valid Values: AVRO, BYTES, JSON, JSON_SR, PROTOBUF, STRING

Importance: high

cdc.handlerThe class name of the CDC handler to use for processing. You can capture CDC events with the MongoDB Kafka sink connector and perform corresponding insert, update, and delete operations to a destination MongoDB cluster.

Type: string

Default: None

Importance: low

Writes

delete.on.null.valuesWhether or not the connector should try to delete documents based on key when value is null.

Type: boolean

Default: false

Importance: low

max.batch.sizeThe maximum number of sink records to possibly batch together for processing.

Type: int

Default: 0

Valid Values: [0,…]

Importance: low

bulk.write.orderedWhether the batches controlled by ‘max.batch.size’ must be written via ordered bulk writes.

Type: boolean

Default: true

Importance: low

rate.limiting.timeoutHow long in ms processing should wait before continuing after triggering a rate limit.

Type: int

Default: 0

Importance: low

rate.limiting.every.nThe number of processed batches that will trigger rate limiting. The default value of 0 sets no rate limiting.

Type: int

Default: 0

Importance: low

write.strategyThe class that specifies the WriteModel to use for bulk writes.

Type: string

Default: DefaultWriteModelStrategy

Importance: low

delete.write.strategyThe class that handles how to build the delete write models for the sink documents.

Type: string

Default: DeleteOneDefaultStrategy

Importance: low

topic.override.mapA JSON map to override sink connector properties for specific topics. Specify the map as

{"<topicName>.<property>": "<value>"}. For example,{"orders.collection": "orders_collection", "orders.database": "orders_db"}routes the orders topic to the orders_collection in the orders_db database. Supported properties arecollection,database, and other topic-level sink configurations.Type: string

Default: {}

Importance: low

Kafka Cluster credentials

kafka.auth.modeKafka Authentication mode. It can be one of KAFKA_API_KEY or SERVICE_ACCOUNT. It defaults to KAFKA_API_KEY mode, whenever possible.

Type: string

Valid Values: SERVICE_ACCOUNT, KAFKA_API_KEY

Importance: high

kafka.api.keyKafka API Key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high

kafka.service.account.idThe Service Account that will be used to generate the API keys to communicate with Kafka Cluster.

Type: string

Importance: high

kafka.api.secretSecret associated with Kafka API key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high

Which topics do you want to get data from?

topics.regexA regular expression that matches the names of the topics to consume from. This is useful when you want to consume from multiple topics that match a certain pattern without having to list them all individually.

Type: string

Importance: low

topicsIdentifies the topic name or a comma-separated list of topic names.

Type: list

Importance: high

errors.deadletterqueue.topic.nameThe name of the topic to be used as the dead letter queue (DLQ) for messages that result in an error when processed by this sink connector, or its transformations or converters. Defaults to ‘dlq-${connector}’ if not set. The DLQ topic will be created automatically if it does not exist. You can provide

${connector}in the value to use it as a placeholder for the logical cluster ID.Type: string

Default: dlq-${connector}

Importance: low

How should we connect to your MongoDB database?

mongodb.instance.typeSpecifies the type of MongoDB instance the connector will connect to.

Type: string

Default: MONGODB_ATLAS

Valid Values: MONGODB_ATLAS, MONGODB_SELF_MANAGED

Importance: high

mongodb.auth.mechanismChoose an authentication mechanism for MongoDB. Use SCRAM-SHA-256 for username/password authentication. Use MONGODB-X509 for certificate-based authentication. For MONGODB-X509, you must configure the SSL keystore properties.

Type: string

Default: SCRAM-SHA-256

Valid Values: MONGODB-X509, SCRAM-SHA-256

Importance: high

connection.hostFor MongoDB Atlas, provide the SRV connection host (e.g., mycluster.abc123.mongodb.net). For Self Managed MongoDB, provide the host and port in MongoDB URI format, e.g., host1:27017 or host1:27017/?replicaSet=myReplicaSet.

Type: string

Default: “”

Importance: high

connection.userMongoDB connection user.

Type: string

Importance: high

connection.passwordMongoDB connection password.

Type: password

Importance: high

connection.ssl.truststore.fileThe trust store file containing trusted certificates. Supported formats include JKS and PKCS12. If not set, the default Java trust store is used.

Type: password

Default: [hidden]

Importance: medium

connection.ssl.truststorePasswordThe password for the trust store file.

Type: password

Default: [hidden]

Importance: medium

connection.ssl.keystore.fileThe key store file containing the client certificate and private key for MONGODB-X509 authentication. Supported formats include JKS and PKCS12.

Type: password

Default: [hidden]

Importance: medium

connection.ssl.keystorePasswordThe password for the key store file. This is optional for the client and only needed if

connection.ssl.keystore.fileis configured.Type: password

Default: [hidden]

Importance: medium

databaseMongoDB database name.

Type: string

Importance: high

Database details

collectionMongoDB collection name.

Type: string

Importance: medium

ID strategies

doc.id.strategyThe IdStrategy class name to use for generating a unique document id (_id).

Type: string

Default: BsonOidStrategy

Importance: low

doc.id.strategy.overwrite.existingWhether the connector should overwrite existing values in the _id field when the strategy defined in doc.id.strategy is applied.

Type: boolean

Default: false

Importance: low

document.id.strategy.uuid.formatThe bson output format when using the UuidStrategy. Can be either String or Binary.

Type: string

Default: string

Importance: low

key.projection.typeFor use with the PartialKeyStrategy allows custom key fields to be projected for the ID strategy. Use either AllowList or BlockList.

Type: string

Default: none

Importance: low

key.projection.listFor use with the PartialKeyStrategy allows custom key fields to be projected for the ID strategy. A comma-separated list of field names for key projection.

Type: string

Importance: low

value.projection.typeFor use with the PartialValueStrategy allows custom value fields to be projected for the ID strategy. Use either AllowList or BlockList.

Type: string

Default: none

Importance: low

value.projection.listFor use with the PartialValueStrategy allows custom value fields to be projected for the ID strategy. A comma-separated list of field names for value projection.

Type: string

Importance: low

Namespace mapping

namespace.mapper.classThe class that determines the namespace to write the sink data to. By default this will be based on the ‘database’ configuration and either the topic name or the ‘collection’ configuration.

Type: string

Default: DefaultNamespaceMapper

Importance: low

namespace.mapper.key.database.fieldThe key field to use as the destination database name.

Type: string

Importance: low

namespace.mapper.key.collection.fieldThe key field to use as the destination collection name.

Type: string

Importance: low

namespace.mapper.value.database.fieldThe value field to use as the destination database name.

Type: string

Importance: low

namespace.mapper.value.collection.fieldThe value field to use as the destination collection name.

Type: string

Importance: low

namespace.mapper.error.if.invalidWhether to throw an error if the mapped field is missing or invalid. Defaults to false.

Type: boolean

Default: false

Importance: low

Server API

server.api.versionThe server API version to use. Disabled by default.

Type: string

Importance: low

server.api.deprecation.errorsSets whether the connector requires use of deprecated server APIs to be reported as errors.

Type: boolean

Default: false

Importance: low

server.api.strictSets whether the application requires strict server API version enforcement.

Type: boolean

Default: false

Importance: low

Connection details

max.num.retriesHow many retries should be attempted on write errors.

Type: int

Default: 3

Valid Values: [0,…]

Importance: low

retries.defer.timeoutHow long a retry should get deferred.

Type: int

Default: 5000

Valid Values: [0,…]

Importance: low

Time Series configuration

timeseries.timefieldThe name of the top-level field which contains the date in each time series document. Setting this config will create a time series collection where each document will have a BSON date as the value for the timefield.

Type: string

Default: “”

Importance: low

timeseries.timefield.auto.convertWhether to convert the data in the field into a BSON Date format. Supported formats include integer, long, and string.

Type: boolean

Default: false

Importance: low

timeseries.timefield.auto.convert.date.formatThe string pattern to convert the source data from. The setting expects the string representation to contain both date and time information and uses the Java DateTimeFormatter.ofPattern(pattern, locale) API for the conversion. If the string only contains date information, then the time since epoch is from the start of that day. If a string representation does not contain time-zone offset, then the setting interprets the extracted date and time as UTC.

Type: string

Default: yyyy-MM-dd[[‘T’][ ]][HH:mm:ss[[.][SSSSSS][SSS]][ ]VV[ ]’[‘VV’]’][HH:mm:ss[[.][SSSSSS][SSS]][ ]X][HH:mm:ss[[.][SSSSSS][SSS]]]

Importance: low

timeseries.timefield.auto.convert.locale.language.tagThe DateTimeFormatter locale language tag to use with the date pattern.

Type: string

Default: en

Importance: low

timeseries.metafieldThe name of the top-level field that contains metadata in each time series document. This field groups related data. It can be of any type except array.

Type: string

Default: “”

Importance: low

timeseries.expire.after.secondsThe amount of seconds the data remains in MongoDB before MongoDB deletes it. Omitting this field means data will not be deleted automatically.

Type: int

Default: 0

Valid Values: [0,…]

Importance: low

ts.granularityThe expected interval between subsequent measurements for a time-series. Set this to None or leave it empty if the data is not time-series

Type: string

Default: None

Importance: low

Error handling

mongo.errors.toleranceUse this property if you would like to configure the connector’s error handling behavior differently from the Connect framework’s.

Type: string

Default: NONE

Importance: medium

Consumer configuration

max.poll.interval.msThe maximum delay between subsequent consume requests to Kafka. This configuration property may be used to improve the performance of the connector, if the connector cannot send records to the sink system. Defaults to 300000 milliseconds (5 minutes).

Type: long

Default: 300000 (5 minutes)

Valid Values: [60000,…,1800000] for non-dedicated clusters and [60000,…] for dedicated clusters

Importance: low

max.poll.recordsThe maximum number of records to consume from Kafka in a single request. This configuration property may be used to improve the performance of the connector, if the connector cannot send records to the sink system. Defaults to 500 records.

Type: long

Default: 500

Valid Values: [1,…,500] for non-dedicated clusters and [1,…] for dedicated clusters

Importance: low

Number of tasks for this connector

tasks.maxMaximum number of tasks for the connector.

Type: int

Valid Values: [1,…]

Importance: high

Post Processing

post.processor.chainA comma separated list of post processor classes to process the data before saving to MongoDB.

Type: list

Default: com.mongodb.kafka.connect.sink.processor.DocumentIdAdder

Importance: low

field.renamer.mappingAn inline JSON array with objects describing field name mappings. Example:

[{"oldName":"key.fieldA","newName":"field1"},{"oldName":"value.xyz","newName":"abc"}]Type: string

Default: []

Importance: low

Additional Configs

consumer.override.auto.offset.resetDefines the behavior of the consumer when there is no committed position (which occurs when the group is first initialized) or when an offset is out of range. You can choose either to reset the position to the “earliest” offset (the default) or the “latest” offset. You can also select “none” if you would rather set the initial offset yourself and you are willing to handle out of range errors manually. More details: https://docs.confluent.io/platform/current/installation/configuration/consumer-configs.html#auto-offset-reset

Type: string

Importance: low

consumer.override.isolation.levelControls how to read messages written transactionally. If set to read_committed, consumer.poll() will only return transactional messages which have been committed. If set to read_uncommitted (the default), consumer.poll() will return all messages, even transactional messages which have been aborted. Non-transactional messages will be returned unconditionally in either mode. More details: https://docs.confluent.io/platform/current/installation/configuration/consumer-configs.html#isolation-level

Type: string

Importance: low

header.converterThe converter class for the headers. This is used to serialize and deserialize the headers of the messages.

Type: string

Importance: low

key.converter.use.schema.guidThe schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema GUID to be used for deserializing message keys. Only applicable when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: string

Importance: low

key.converter.use.schema.idThe schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema ID to be used for deserializing message keys. Only applicable when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: int

Importance: low

value.converter.allow.optional.map.keysAllow optional string map key when converting from Connect Schema to Avro Schema. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.auto.register.schemasSpecify if the Serializer should attempt to register the Schema.

Type: boolean

Importance: low

value.converter.connect.meta.dataAllow the Connect converter to add its metadata to the output schema. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.enhanced.avro.schema.supportEnable enhanced schema support to preserve package information and Enums. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.enhanced.protobuf.schema.supportEnable enhanced schema support to preserve package information. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.flatten.unionsWhether to flatten unions (oneofs). Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.generate.index.for.unionsWhether to generate an index suffix for unions. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.generate.struct.for.nullsWhether to generate a struct variable for null values. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.int.for.enumsWhether to represent enums as integers. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.latest.compatibility.strictVerify latest subject version is backward compatible when use.latest.version is true.

Type: boolean

Importance: low

value.converter.object.additional.propertiesWhether to allow additional properties for object schemas. Applicable for JSON_SR Converters.

Type: boolean

Importance: low

value.converter.optional.for.nullablesWhether nullable fields should be specified with an optional label. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.optional.for.proto2Whether proto2 optionals are supported. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.scrub.invalid.namesWhether to scrub invalid names by replacing invalid characters with valid characters. Applicable for Avro and Protobuf Converters.

Type: boolean

Importance: low

value.converter.use.latest.versionUse latest version of schema in subject for serialization when auto.register.schemas is false.

Type: boolean

Importance: low

value.converter.use.optional.for.nonrequiredWhether to set non-required properties to be optional. Applicable for JSON_SR Converters.

Type: boolean

Importance: low

value.converter.use.schema.guidThe schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema GUID to be used for deserializing message values. Only applicable when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: string

Importance: low

value.converter.use.schema.idThe schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema ID to be used for deserializing message values. Only applicable when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: int

Importance: low

value.converter.wrapper.for.nullablesWhether nullable fields should use primitive wrapper messages. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.wrapper.for.raw.primitivesWhether a wrapper message should be interpreted as a raw primitive at root level. Applicable for Protobuf Converters.

Type: boolean

Importance: low

errors.toleranceUse this property if you would like to configure the connector’s error handling behavior. WARNING: This property should be used with CAUTION for SOURCE CONNECTORS as it may lead to dataloss. If you set this property to ‘all’, the connector will not fail on errant records, but will instead log them (and send to DLQ for Sink Connectors) and continue processing. If you set this property to ‘none’, the connector task will fail on errant records.

Type: string

Default: all

Importance: low

key.converter.key.schema.id.deserializerThe class name of the schema ID deserializer for keys. This is used to deserialize schema IDs from the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.DualSchemaIdDeserializer

Importance: low

key.converter.key.subject.name.strategyHow to construct the subject name for key schema registration.

Type: string

Default: TopicNameStrategy

Importance: low

key.converter.replace.null.with.defaultWhether to replace fields that have a default value and that are null to the default value. When set to true, the default value is used, otherwise null is used. Applicable for JSON Key Converter.

Type: boolean

Default: true

Importance: low

key.converter.schemas.enableInclude schemas within each of the serialized keys. Input message keys must contain schema and payload fields and may not contain additional fields. For plain JSON data, set this to false. Applicable for JSON Key Converter.

Type: boolean

Default: false

Importance: low

value.converter.decimal.formatSpecify the JSON/JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals:

BASE64 to serialize DECIMAL logical types as base64 encoded binary data and

NUMERIC to serialize Connect DECIMAL logical type values in JSON/JSON_SR as a number representing the decimal value.

Type: string

Default: BASE64

Importance: low

value.converter.flatten.singleton.unionsWhether to flatten singleton unions. Applicable for Avro and JSON_SR Converters.

Type: boolean

Default: false

Importance: low

value.converter.ignore.default.for.nullablesWhen set to true, this property ensures that the corresponding record in Kafka is NULL, instead of showing the default column value. Applicable for AVRO,PROTOBUF and JSON_SR Converters.

Type: boolean

Default: false

Importance: low

value.converter.reference.subject.name.strategySet the subject reference name strategy for value. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. Note that the subject reference name strategy can be selected only for PROTOBUF format with the default strategy being DefaultReferenceSubjectNameStrategy.

Type: string

Default: DefaultReferenceSubjectNameStrategy

Importance: low

value.converter.replace.null.with.defaultWhether to replace fields that have a default value and that are null to the default value. When set to true, the default value is used, otherwise null is used. Applicable for JSON Converter.

Type: boolean

Default: true

Importance: low

value.converter.schemas.enableInclude schemas within each of the serialized values. Input messages must contain schema and payload fields and may not contain additional fields. For plain JSON data, set this to false. Applicable for JSON Converter.

Type: boolean

Default: false

Importance: low

value.converter.value.schema.id.deserializerThe class name of the schema ID deserializer for values. This is used to deserialize schema IDs from the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.DualSchemaIdDeserializer

Importance: low

value.converter.value.subject.name.strategyDetermines how to construct the subject name under which the value schema is registered with Schema Registry.

Type: string

Default: TopicNameStrategy

Importance: low

Auto-restart policy

auto.restart.on.user.errorEnable connector to automatically restart on user-actionable errors.

Type: boolean

Default: true

Importance: medium

Suggested Reading

Blog post: Announcing the MongoDB Atlas Sink and Source connectors in Confluent Cloud

Frequently asked questions

Find answers to frequently asked questions about the MongoDB Atlas Sink connector for Confluent Cloud.

Why is my connector stuck in PROVISIONING state?

If your connector remains in PROVISIONING state indefinitely without transitioning to RUNNING, this indicates the connector failed to complete the deployment process.

Common causes and solutions:

Invalid MongoDB credentials: Verify your MongoDB connection host, username, and password are correctly configured. Test your credentials using a MongoDB client before configuring the connector.

Connection host format: Use the correct connection host format. For

MONGODB_ATLAS, use a service record such ascluster4-r5q3r7.gcp.mongodb.net. ForMONGODB_SELF_MANAGED, use a standard connection string such as54.190.171.123:27017.Network connectivity: Ensure the connector can reach your MongoDB instance. If your MongoDB instance is not publicly accessible, verify that you have added all Confluent Cloud egress IP addresses to your MongoDB IP whitelist. For more information, see Adding an IP Whitelist Entry.

Insufficient privileges: Verify the database user has been granted either the

readWriterole, or both thereadAnyDatabaseandclusterMonitorroles. For more information, see Prerequisites.Invalid collection name: Verify the collection specified in the configuration exists or can be created by the connector.

If the connector remains stuck after verifying these settings, delete and recreate the connector with the corrected configuration.

Why does my connector fail with authentication errors?

Authentication errors occur when the connector cannot authenticate with your MongoDB instance.

Common causes and solutions:

Incorrect credentials: Verify your MongoDB username and password are correct. Test authentication using a MongoDB client to confirm credentials work.

X.509 certificate issues: If you use X.509 certificate authentication, ensure your certificate and private key are correctly formatted. The certificate should include the full chain and the private key should be in PEM format without a passphrase.

Authentication source: For

MONGODB_SELF_MANAGEDdeployments, you may need to specify theauthSourceparameter in the connection host. For example:54.190.171.123:27017/?authSource=admin.Connection string format: Do not include the username and password in the connection host string. Instead, provide them in the separate

connection.userandconnection.passwordfields.Insufficient database permissions: Verify the user has

readWritepermissions on the target database.

For X.509 authentication setup, see connector authentication.

How do I configure the connector to delete MongoDB documents when Kafka records have null values?

To delete MongoDB documents when receiving Kafka records with null values, you must configure both delete.on.null.values and the appropriate document ID strategy.

Required configuration:

"delete.on.null.values": "true",

"doc.id.strategy": "FullKeyStrategy"

Supported document ID strategies for deletes:

FullKeyStrategy: Uses the entire Kafka record key as the MongoDB document

_id.PartialKeyStrategy: Uses a subset of key fields as the

_id. Requireskey.projection.typeandkey.projection.listsettings.ProvidedInKeyStrategy: Expects the

_idto be provided in the key.

Important considerations:

Tombstone records: When

delete.on.null.valuesistrue, the connector treats tombstone records with null values as delete operations.Key requirement: The Kafka record must have a non-null key for deletes to work. Records without keys cannot be deleted.

Write strategy: The

write.strategymust be compatible with deletes.DeleteOneDefaultStrategyis designed for delete operations.

For more information, see the doc.id.strategy property in Configuration Properties.

Which write strategy should I use?

The write.strategy determines how the connector performs bulk write operations on MongoDB collections.

Common write strategies:

DefaultWriteModelStrategy: Internally uses

ReplaceOneDefaultStrategyfor normal collections andInsertOneDefaultStrategyfor time-series collections. Use this for most scenarios.ReplaceOneDefaultStrategy: Replaces entire documents matching the

_id. Best for upsert operations where you want to replace the full document.InsertOneDefaultStrategy: Only inserts new documents. Fails if a document with the same

_idalready exists. Use for append-only workloads.UpdateOneDefaultStrategy: Updates existing documents. Use when you want to modify specific fields without replacing the entire document.

UpdateOneBusinessKeyTimestampStrategy: Updates documents using a business key and timestamp. Useful for maintaining the latest version of a document based on timestamps.

DeleteOneDefaultStrategy: Deletes documents. Use with

delete.on.null.values=truefor CDC scenarios.

To configure the write strategy:

"write.strategy": "ReplaceOneDefaultStrategy"

For detailed information about each write strategy, see Write Strategies.

How do I handle connection string options for MongoDB?

Specify connection string options in the connection.host property.

For MONGODB_ATLAS, use the format:

cluster4-r5q3r7.gcp.mongodb.net/?readPreference=secondary&retryWrites=true

For MONGODB_SELF_MANAGED, use the format:

54.190.171.123:27017/?authSource=admin&writeConcern=majority

Important considerations:

Do not include protocol: Do not include

mongodb://ormongodb+srv://in the connection host.Do not include credentials: Do not include username and password in the connection string. Use the separate

connection.userandconnection.passwordfields.Supported options: Not all MongoDB connection string options are supported. For the list of unsupported options, see the connector documentation.

Write concern: For production workloads, consider setting

writeConcern=majorityto ensure writes are acknowledged by a majority of replica set members.

For more information about connection string options, see MongoDB Connection String Options.

What should I set for the max.batch.size and max.num.retries properties?

These properties control the connector’s write behavior and error handling.

max.batch.size: The maximum number of sink records to batch together for processing. Default is

0, which means no limit. A value of0allows the connector to batch all available records, which provides maximum throughput but may increase latency. Lower this value if you experience connector memory issues or need lower latency.max.num.retries: The number of times the connector retries on write errors. Default is

3. Increase this value for production workloads to handle transient network issues.retries.defer.timeout: The time in milliseconds to wait between retries. Default is

5000ms.

Adjust these values based on your specific workload requirements and monitor your connector’s error logs.

How do I configure time-series collections?

MongoDB time-series collections require specific configuration to properly store time-series data.

Required configuration:

timeseries.timefield: Sets the name of the top-level time field that contains the date in each time-series document. This field must contain a BSON date.

timeseries.timefield.auto.convert: Set to

trueto automatically convert the time field data to BSON date format. Supported source formats include integer, long, and string. Default isfalse.

Optional configuration:

timeseries.timefield.auto.convert.date.format: Sets the DateTimeFormatter format to convert the source data from. Default is

yyyy-MM-dd[['T'][ ]][HH:mm:ss[[.][SSSSSS][SSS]][ ]VV[ ]'['VV']'][HH:mm:ss[[.][SSSSSS][SSS]][ ]X][HH:mm:ss[[.][SSSSSS][SSS]]].timeseries.metafield: Sets the name of the top-level field that contains metadata in each time-series document.

timeseries.expire.after.seconds: Sets the number of seconds after which the document expires. MongoDB deletes expired documents automatically. Default is

0, which means data will not be deleted automatically.ts.granularity: Sets the interval granularity. Valid entries are

None,seconds,minutes, orhours. Default isNone.

Important considerations:

Time-series collections require MongoDB version 5.0 or later and are only available on dedicated clusters in MongoDB Atlas.

For time-series collections,

DefaultWriteModelStrategyinternally defaults toInsertOneDefaultStrategy.

For more information, see the time-series properties in Configuration Properties.

Next Steps

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud for Apache Flink, see the Cloud ETL Demo. This example also shows how to use Confluent CLI to manage your resources in Confluent Cloud.