Azure Event Hubs Source Connector for Confluent Cloud

The fully-managed Azure Event Hubs Source connector for Confluent Cloud polls data from Azure Event Hubs and persists the data to an Apache Kafka® topic. For additional information about Azure Event Hubs, see the Azure Event Hubs documentation. The connector fetches records from Azure Event Hubs through a subscription. The connector uses a ByteArrayConverter internally to transform data elements into byte records.

Confluent Cloud is available through Azure Marketplace or directly from Confluent.

Note

This Quick Start is for the fully-managed Confluent Cloud connector. If you are installing the connector locally for Confluent Platform, see Azure Event Hubs Source connector for Confluent Platform.

If you require private networking for fully-managed connectors, make sure to set up the proper networking beforehand. For more information, see Manage Networking for Confluent Cloud Connectors.

Features

The Azure Event Hubs Source connector provides the following features:

Topics created automatically: The connector can automatically create Kafka topics.

Select configuration properties:

azure.eventhubs.partition.starting.position

azure.eventhubs.consumer.group

azure.eventhubs.transport.type

azure.eventhubs.offset.type

max.events (defaults to 50 with a maximum of 499 events)

Provider integration support: The connector supports Microsoft’s native identity authorization using Confluent Provider Integration. For more information about provider integration setup, see the connector authentication.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Limitations

Be sure to review the following information.

For connector limitations, see Azure Event Hubs Source Connector limitations.

If you plan to use one or more Single Message Transforms (SMTs), see SMT Limitations.

Quick Start

Use this quick start to get up and running with the Confluent Cloud Azure Event Hubs Source connector.

Prerequisites

Authorized access to a Confluent Cloud cluster on Amazon Web Services (AWS), Microsoft Azure (Azure), or Google Cloud.

At least one topic must exist before creating the connector.

The Confluent CLI installed and configured for the cluster. See Install the Confluent CLI.

An Azure account with an existing Event Hubs Namespace, Event Hub, and Consumer Group.

An Azure Event Hubs Shared Access Policy with its policy name and key.

Kafka cluster credentials. The following lists the different ways you can provide credentials.

Enter an existing service account resource ID.

Create a Confluent Cloud service account for the connector. Make sure to review the ACL entries required in the service account documentation. Some connectors have specific ACL requirements.

Create a Confluent Cloud API key and secret. To create a key and secret, you can use confluent api-key create or you can autogenerate the API key and secret directly in the Cloud Console when setting up the connector.

Using the Confluent Cloud Console

Step 1: Launch your Confluent Cloud cluster

To create and launch a Kafka cluster in Confluent Cloud, see Create a Kafka cluster in Confluent Cloud.

Step 2: Add a connector

In the left navigation menu, click Connectors. If you already have connectors in your cluster, click + Add connector.

Step 3: Select your connector

Click the Azure Event Hubs Source connector card.

Step 4: Enter the connector details

Note

Make sure you have all your prerequisites completed.

An asterisk ( * ) designates a required entry.

At the Add Azure Event Hubs Source Connector screen, complete the following:

Select the topic you want to send data to from the Topics list. To create a new topic, click +Add new topic.

Select the way you want to provide Kafka Cluster credentials. You can choose one of the following options:

My account: This setting allows your connector to globally access everything that you have access to. With a user account, the connector uses an API key and secret to access the Kafka cluster. This option is not recommended for production.

Service account: This setting limits the access for your connector by using a service account. This option is recommended for production.

Use an existing API key: This setting allows you to specify an API key and a secret pair. You can use an existing pair or create a new one. This method is not recommended for production environments.

Note

Freight clusters support only service accounts for Kafka authentication.

Click Continue.

Configure the authentication properties:

Azure credentials

Authentication method: Under Azure credentials, select how you want to authenticate with Azure Event Hubs:

If you select SAS Key (default), enter the following Azure Event Hubs details:

Shared access policy name: Shared access policy name to use for access authentication.

Shared access key: Shared access key to use for access authentication.

If you select Microsoft Entra ID application, enter the following Azure Event Hubs details:

Under the Provider integration dropdown, select an existing integration name that has access to your Azure resource required to run this connector. For more information, see Manage an Microsoft Azure Provider Integration.

Provider Integration: Select an existing integration name that has access to your Azure resource required to run this connector. Required for Microsoft Entra ID application authentication.

How should we connect to your data?

Shared access policy name: Shared access policy name to use for access authentication. Required for SAS Key authentication.

Shared access key: Shared access key to use for access authentication. Required for SAS Key authentication.

Event Hubs namespace: Event Hubs namespace to connect to.

Event Hub name: Event Hub to read from.

Azure Event Hubs consumer group: Specific consumer group to read from.

Azure Event Hubs Domain: Domain of the Event Hub to connect to. Valid options are servicebus.windows.net (default), servicebus.usgovcloudapi.net, servicebus.cloudapi.de, or servicebus.chinacloudapi.cn.

Click Continue.

Connection details

Azure Event Hubs transport type: Event Hubs communication transport type.

Azure Event Hubs offset type: Offset type to use to keep track of events.

Show advanced configurations

Additional Configs

Value Converter Decimal Format: Specifies the JSON or JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals: BASE64 to serialize DECIMAL logical types as base64 encoded binary data, and NUMERIC to serialize DECIMAL logical type values in JSON or JSON_SR as a number representing the decimal value.

Key Converter Schema ID Serializer: The class name of the schema ID serializer for keys. This is used to serialize schema IDs in the message headers.

Value Converter Reference Subject Name Strategy: Sets the subject reference name strategy for values. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. You can use this strategy only with PROTOBUF format; the default strategy is DefaultReferenceSubjectNameStrategy.

Errors Tolerance: Use this property to configure the connector’s error handling behavior.

Warning

Use this property with caution for source connectors, as it can lead to data loss. If you set this property to all, the connector does not fail on errant records, but logs them (and sends to DLQ for sink connectors) and continues processing. If you set this property to none, the connector task fails on errant records.

Value Converter Connect Meta Data: Enables the Connect converter to add its metadata to the output schema. Applies to Avro converters.

Value Converter Value Subject Name Strategy: Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Key Converter Key Subject Name Strategy: Determines how to construct the subject name for key schema registration.

Value Converter Schema ID Serializer: The class name of the schema ID serializer for values. This is used to serialize schema IDs in the message headers.

Auto-restart policy

Enable Connector Auto-restart: Enables the auto-restart behavior of the connector and its task in the event of user-actionable errors. Defaults to true, enabling the connector to automatically restart in case of user-actionable errors. Set this property to false to disable auto-restart for failed connectors. In such cases, you would need to manually restart the connector.

Connection details

Default starting position: Default reset position if no offsets are stored.

Max events: Maximum number of events to read when polling an Event Hub partition.

Transforms

Single Message Transforms: To add a new SMT, see Add transforms. For more information about unsupported SMTs, see Unsupported transformations.

Click Continue.

Based on the number of topic partitions you select, you will be provided with a recommended number of tasks.

To change the number of tasks, use the Range Slider to select the desired number of tasks.

Click Continue.

Step 5: Check the Kafka topic

After the connector is running, verify that messages are populating your Kafka topic.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Using the Confluent CLI

Complete the following steps to set up and run the connector using the Confluent CLI.

Important

Make sure you have all your prerequisites completed.

You must create topic names before creating and launching this connector. Use the following command to create a topic using the Confluent CLI.

confluent kafka topic create <topic-name>

Step 1: List the available connectors

Enter the following command to list available connectors:

confluent connect plugin list

Step 2: List the connector configuration properties

Enter the following command to show the connector configuration properties:

confluent connect plugin describe <connector-plugin-name>

The command output shows the required and optional configuration properties.

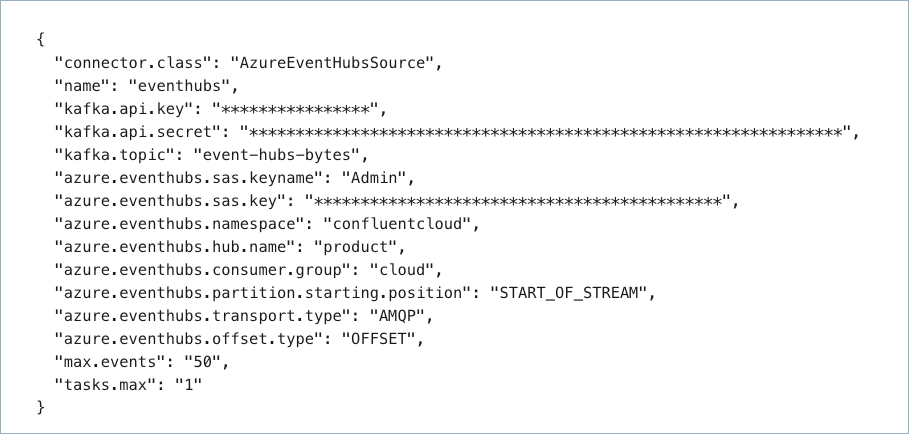

Step 3: Create the connector configuration file

Create a JSON file that contains the connector configuration properties. The following example shows required and optional connector properties.

{
  "connector.class": "AzureEventHubsSource",
  "name": "azure-eventhubs-source",
  "kafka.auth.mode": "KAFKA_API_KEY",
  "kafka.api.key": "<my-kafka-api-key>",
  "kafka.api.secret": "<my-kafka-api-secret>",
  "azure.eventhubs.sas.keyname": "<my-shared-access-policy-name>",
  "azure.eventhubs.sas.key": "<my-shared-access-key>",
  "azure.eventhubs.namespace": "<my-eventhubs-namespace>",
  "azure.eventhubs.hub.name": "<my-eventhub-name>",
  "azure.eventhubs.consumer.group": "<my-eventhub-consumer-group>",
  "kafka.topic": "<my-topic-name>",
  "azure.eventhubs.partition.starting.position": "START_OF_STREAM",
  "azure.eventhubs.transport.type": "AMQP",
  "azure.eventhubs.offset.type": "OFFSET",
  "max.events": "50",
  "tasks.max": "1"
}

Note the following property definitions:

"name": Sets a name for your new connector.

"connector.class": Identifies the connector plugin name.

"kafka.auth.mode": Identifies the connector authentication mode you want to use. There are two options: SERVICE_ACCOUNT or KAFKA_API_KEY (the default). To use an API key and secret, specify the configuration properties kafka.api.key and kafka.api.secret, as shown in the example configuration (above). To use a service account, specify the Resource ID in the property kafka.service.account.id=<service-account-resource-ID>. To list the available service account resource IDs, use the following command:

confluent iam service-account list

For example:

confluent iam service-account list

  Id     | Resource ID | Name              | Description
+---------+-------------+-------------------+-------------------
  123456 | sa-l1r23m   | sa-1              | Service account 1
  789101 | sa-l4d56p   | sa-2              | Service account 2

"azure.eventhubs.partition.starting.position": (Optional) Sets the starting position in the Event Hub if no offsets are stored and a reset occurs. The value can be START_OF_STREAM or END_OF_STREAM. If no property is entered, the configuration defaults to START_OF_STREAM.

Note

The Azure Event Hubs Source connector uses the x-opt-kafka-key key-value pair as the partition key when the Azure SDK EventData.PartitionKey property is null and x-opt-kafka-key is present.

"azure.eventhubs.transport.type": (Optional) Sets the transport type for communicating with Azure Event Hubs. The value can be AMQP or AMQP_WEB_SOCKETS. AMQP (over TCP) uses port 5671. AMQP over web sockets uses port 443. If no property is entered, the configuration defaults to AMQP.

"azure.eventhubs.offset.type": (Optional) Sets the offset type used to keep track of events. The value can be OFFSET (the Azure Event Hubs offset for the event) or SEQ_NUM (the sequence number of the event). If no property is entered, the configuration defaults to OFFSET.

"max.events": (Optional) The maximum number of events to read from an Event Hub partition when polling. If no property is entered, the configuration defaults to 50. The maximum is 499 events.

Single Message Transforms: See the Single Message Transforms (SMT) documentation for details about adding SMTs. See Unsupported transformations for a list of SMTs that are not supported with this connector.

See Configuration Properties for all property values and definitions.
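The partition-key fallback described in the note above can be sketched in a few lines of Python. This is only an illustration of the stated rule, not the connector's code: the function name `effective_partition_key` and the plain-dictionary representation of event properties are hypothetical, and returning `None` when neither value is available is an assumption.

```python
from typing import Mapping, Optional

# Property name taken from the note above.
KAFKA_KEY_PROPERTY = "x-opt-kafka-key"


def effective_partition_key(
    partition_key: Optional[str],
    properties: Mapping[str, str],
) -> Optional[str]:
    """Return the partition key the note describes (hypothetical helper).

    partition_key stands in for the Azure SDK EventData.PartitionKey value;
    properties stands in for the event's key-value pairs.
    """
    # A non-null PartitionKey is used as-is.
    if partition_key is not None:
        return partition_key
    # Otherwise fall back to x-opt-kafka-key when it is present.
    if KAFKA_KEY_PROPERTY in properties:
        return properties[KAFKA_KEY_PROPERTY]
    # Assumption: no key is available when both are missing.
    return None
```

For example, `effective_partition_key(None, {"x-opt-kafka-key": "order-17"})` falls back to the property value, while a non-null partition key always wins.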

Step 4: Load the properties file and create the connector

Enter the following command to load the configuration and start the connector:

confluent connect cluster create --config-file <file-name>.json

For example:

confluent connect cluster create --config-file az-event-hubs.json

Example output:

Created connector azure-eventhubs-source lcc-ix4dl

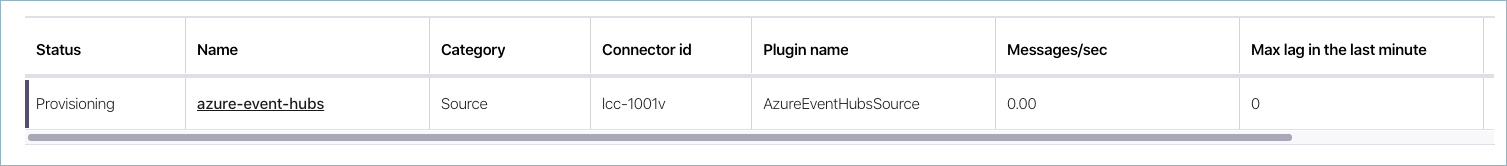

Step 5: Check the connector status

Enter the following command to check the connector status:

confluent connect cluster list

Example output:

ID          | Name                     | Status  | Type
+-----------+--------------------------+---------+--------+
  lcc-ix4dl | azure-eventhubs-source   | RUNNING | source
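If you want to script this check, the pipe-separated table can be parsed with a short Python helper. The sketch below assumes the human-readable layout shown in the example output; the function names and the sample constant are hypothetical, and the real CLI output may differ in spacing or columns.

```python
from typing import Dict, List, Optional

# Sample text mirroring the example output above.
SAMPLE_OUTPUT = """
ID | Name | Status | Type
+-----------+--------------------------+---------+--------+
lcc-ix4dl | azure-eventhubs-source | RUNNING | source
"""


def parse_connector_list(text: str) -> List[Dict[str, str]]:
    """Turn the pipe-separated table into a list of row dictionaries."""
    header: Optional[List[str]] = None
    rows: List[Dict[str, str]] = []
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and separator lines made of +, -, | and spaces.
        if not line or set(line) <= set("+-| "):
            continue
        cells = [cell.strip() for cell in line.split("|")]
        if header is None:
            header = cells  # the first data line is the header row
            continue
        rows.append(dict(zip(header, cells)))
    return rows


def connector_status(text: str, name: str) -> Optional[str]:
    """Return the Status column for the named connector, or None."""
    for row in parse_connector_list(text):
        if row.get("Name") == name:
            return row.get("Status")
    return None
```

Calling `connector_status(SAMPLE_OUTPUT, "azure-eventhubs-source")` returns the status string from the matching row.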

Step 6: Check the Kafka topic

After the connector is running, verify that messages are populating your Kafka topic.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Configuration Properties

Use the following configuration properties with the fully-managed connector. For self-managed connector property definitions and other details, see the connector docs in Self-managed connectors for Confluent Platform.

Note

These are properties for the fully-managed cloud connector. If you are installing the connector locally for Confluent Platform, see Azure Event Hubs Source Connector for Confluent Platform.

Azure credentials

provider.integration.id

Azure provider-integration to mint Microsoft Entra ID application tokens.

Type: string

Importance: high

authentication.method

How Confluent Cloud authenticates with Azure.

Type: string

Default: SAS Key

Valid Values: Microsoft Entra ID application, SAS Key

Importance: high

azure.eventhubs.sas.keyname

Shared access policy name to use for access authentication.

Type: string

Importance: high

azure.eventhubs.sas.key

Shared access key to use for access authentication.

Type: password

Importance: high

How should we connect to your data?

name

Sets a name for your connector.

Type: string

Valid Values: A string at most 64 characters long

Importance: high

azure.eventhubs.namespace

Event Hubs namespace to connect to.

Type: string

Importance: high

azure.eventhubs.hub.name

Event Hub to read from.

Type: string

Importance: high

azure.eventhubs.consumer.group

Specific consumer group to read from.

Type: string

Default: $Default

Importance: low

azure.eventhubs.domain

Specifies the domain of the Event Hub to connect to. Available options include: servicebus.windows.net, servicebus.usgovcloudapi.net, servicebus.cloudapi.de, servicebus.chinacloudapi.cn. Default is servicebus.windows.net.

Type: string

Default: servicebus.windows.net

Importance: low
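As a rough illustration of how the namespace and domain settings relate, the following Python sketch joins them into a host name of the form `<namespace>.<domain>`, which is the usual shape of an Event Hubs endpoint. How the connector combines these values internally is an assumption, and `event_hubs_host` is a hypothetical helper name.

```python
# Domains listed for azure.eventhubs.domain above.
VALID_DOMAINS = (
    "servicebus.windows.net",
    "servicebus.usgovcloudapi.net",
    "servicebus.cloudapi.de",
    "servicebus.chinacloudapi.cn",
)


def event_hubs_host(namespace: str, domain: str = "servicebus.windows.net") -> str:
    """Build the namespace host name (assumed form: <namespace>.<domain>)."""
    if domain not in VALID_DOMAINS:
        raise ValueError(f"unsupported domain: {domain!r}")
    return f"{namespace}.{domain}"
```

A check like this catches a mistyped domain before the configuration is submitted.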

Kafka Cluster credentials

kafka.auth.mode

Kafka Authentication mode. It can be one of KAFKA_API_KEY or SERVICE_ACCOUNT. It defaults to KAFKA_API_KEY mode, whenever possible.

Type: string

Valid Values: SERVICE_ACCOUNT, KAFKA_API_KEY

Importance: high

kafka.api.key

Kafka API Key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high

kafka.service.account.id

The Service Account that will be used to generate the API keys to communicate with Kafka Cluster.

Type: string

Importance: high

kafka.api.secret

Secret associated with Kafka API key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high
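To show how the two authentication modes differ in a configuration, here is a minimal Python sketch that converts an API-key configuration into a service-account one. The helper name `use_service_account` is hypothetical, and dropping `kafka.api.key` and `kafka.api.secret` is an assumption based on those properties being required only in KAFKA_API_KEY mode.

```python
from typing import Dict

API_KEY_PROPERTIES = ("kafka.api.key", "kafka.api.secret")


def use_service_account(config: Dict[str, str], service_account_id: str) -> Dict[str, str]:
    """Return a copy of config that authenticates with a service account."""
    # Assumption: the API key pair is not needed in SERVICE_ACCOUNT mode.
    updated = {k: v for k, v in config.items() if k not in API_KEY_PROPERTIES}
    updated["kafka.auth.mode"] = "SERVICE_ACCOUNT"
    updated["kafka.service.account.id"] = service_account_id
    return updated


api_key_config = {
    "name": "azure-eventhubs-source",
    "kafka.auth.mode": "KAFKA_API_KEY",
    "kafka.api.key": "<my-kafka-api-key>",
    "kafka.api.secret": "<my-kafka-api-secret>",
}
# "sa-l1r23m" is the sample resource ID from the quick start listing.
service_account_config = use_service_account(api_key_config, "sa-l1r23m")
```

The original dictionary is left untouched, so both variants can be kept side by side.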

Which topic do you want to send data to?

kafka.topic

Identifies the topic name to write the data to.

Type: string

Importance: high

Connection details

azure.eventhubs.partition.starting.position

Default reset position if no offsets are stored.

Type: string

Default: START_OF_STREAM

Importance: medium

azure.eventhubs.transport.type

Event Hubs communication transport type.

Type: string

Default: AMQP

Importance: low

azure.eventhubs.offset.type

Offset type to use to keep track of events.

Type: string

Default: OFFSET

Importance: medium

max.events

Maximum number of events to read when polling an Event Hub partition.

Type: int

Default: 50

Valid Values: [1,…,499]

Importance: low
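A pre-flight check of the connection-detail properties can be sketched in Python as follows. It assumes the allowed values and defaults given in the quick start property definitions and the 1 to 499 range stated there and in the FAQ for max.events; the function name `validate_connection_details` is hypothetical, and the service performs its own validation regardless.

```python
from typing import List, Mapping

ALLOWED_VALUES = {
    "azure.eventhubs.partition.starting.position": {"START_OF_STREAM", "END_OF_STREAM"},
    "azure.eventhubs.transport.type": {"AMQP", "AMQP_WEB_SOCKETS"},
    "azure.eventhubs.offset.type": {"OFFSET", "SEQ_NUM"},
}
DEFAULTS = {
    "azure.eventhubs.partition.starting.position": "START_OF_STREAM",
    "azure.eventhubs.transport.type": "AMQP",
    "azure.eventhubs.offset.type": "OFFSET",
    "max.events": "50",
}
MAX_EVENTS_MIN, MAX_EVENTS_MAX = 1, 499


def validate_connection_details(config: Mapping[str, str]) -> List[str]:
    """Return a list of problems; an empty list means the values look valid."""
    problems: List[str] = []
    for key, allowed in ALLOWED_VALUES.items():
        value = config.get(key, DEFAULTS[key])  # omitted properties use defaults
        if value not in allowed:
            problems.append(f"{key}: {value!r} is not one of {sorted(allowed)}")
    raw = config.get("max.events", DEFAULTS["max.events"])
    try:
        max_events = int(raw)
    except (TypeError, ValueError):
        problems.append(f"max.events: {raw!r} is not an integer")
    else:
        if not MAX_EVENTS_MIN <= max_events <= MAX_EVENTS_MAX:
            problems.append(
                f"max.events: {max_events} is outside {MAX_EVENTS_MIN}..{MAX_EVENTS_MAX}"
            )
    return problems
```

Running it against the quick start example configuration should return an empty list, since every optional property there uses a documented value.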

Number of tasks for this connector

tasks.max

Maximum number of tasks for the connector.

Type: int

Valid Values: [1,…]

Importance: high

Additional Configs

header.converter

The converter class for the headers. This is used to serialize and deserialize the headers of the messages.

Type: string

Importance: low

producer.override.compression.type

The compression type for all data generated by the producer. Valid values are none, gzip, snappy, lz4, and zstd.

Type: string

Importance: low

producer.override.linger.ms

The producer groups together any records that arrive in between request transmissions into a single batched request. More details can be found in the documentation: https://docs.confluent.io/platform/current/installation/configuration/producer-configs.html#linger-ms.

Type: long

Valid Values: [100,…,1000]

Importance: low

value.converter.allow.optional.map.keys

Allow optional string map key when converting from Connect Schema to Avro Schema. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.auto.register.schemas

Specify if the Serializer should attempt to register the Schema.

Type: boolean

Importance: low

value.converter.connect.meta.data

Allow the Connect converter to add its metadata to the output schema. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.enhanced.avro.schema.support

Enable enhanced schema support to preserve package information and Enums. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.enhanced.protobuf.schema.support

Enable enhanced schema support to preserve package information. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.flatten.unions

Whether to flatten unions (oneofs). Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.generate.index.for.unions

Whether to generate an index suffix for unions. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.generate.struct.for.nulls

Whether to generate a struct variable for null values. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.int.for.enums

Whether to represent enums as integers. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.latest.compatibility.strict

Verify latest subject version is backward compatible when use.latest.version is true.

Type: boolean

Importance: low

value.converter.object.additional.properties

Whether to allow additional properties for object schemas. Applicable for JSON_SR Converters.

Type: boolean

Importance: low

value.converter.optional.for.nullables

Whether nullable fields should be specified with an optional label. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.optional.for.proto2

Whether proto2 optionals are supported. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.use.latest.version

Use latest version of schema in subject for serialization when auto.register.schemas is false.

Type: boolean

Importance: low

value.converter.use.optional.for.nonrequired

Whether to set non-required properties to be optional. Applicable for JSON_SR Converters.

Type: boolean

Importance: low

value.converter.wrapper.for.nullables

Whether nullable fields should use primitive wrapper messages. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.wrapper.for.raw.primitives

Whether a wrapper message should be interpreted as a raw primitive at root level. Applicable for Protobuf Converters.

Type: boolean

Importance: low

errors.tolerance

Use this property if you would like to configure the connector’s error handling behavior. WARNING: This property should be used with CAUTION for SOURCE CONNECTORS as it may lead to data loss. If you set this property to ‘all’, the connector will not fail on errant records, but will instead log them (and send to DLQ for Sink Connectors) and continue processing. If you set this property to ‘none’, the connector task will fail on errant records.

Type: string

Default: none

Importance: low

key.converter.key.schema.id.serializer

The class name of the schema ID serializer for keys. This is used to serialize schema IDs in the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.PrefixSchemaIdSerializer

Importance: low

key.converter.key.subject.name.strategy

How to construct the subject name for key schema registration.

Type: string

Default: TopicNameStrategy

Importance: low

value.converter.decimal.format

Specify the JSON/JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals:

BASE64 to serialize DECIMAL logical types as base64 encoded binary data and

NUMERIC to serialize Connect DECIMAL logical type values in JSON/JSON_SR as a number representing the decimal value.

Type: string

Default: BASE64

Importance: low
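The difference between the two literals can be illustrated with a small Python sketch. It assumes the Connect DECIMAL logical type encoding, in which the unscaled value is stored as big-endian two's-complement bytes, and that BASE64 mode emits those bytes as a base64 JSON string. The function names are hypothetical, and this is not the converter's implementation.

```python
import base64
from decimal import Decimal


def connect_decimal_base64(value: Decimal, scale: int) -> str:
    """Base64 of the unscaled value as minimal big-endian two's complement."""
    # Shift the decimal point right by `scale` digits; extra fractional
    # digits beyond the scale are truncated by int().
    unscaled = int(value.scaleb(scale))
    # Minimal length that still leaves room for the sign bit.
    magnitude = unscaled if unscaled >= 0 else ~unscaled
    length = magnitude.bit_length() // 8 + 1
    raw = unscaled.to_bytes(length, byteorder="big", signed=True)
    return base64.b64encode(raw).decode("ascii")


def render_decimal(value: Decimal, scale: int, decimal_format: str) -> str:
    """Return the JSON text for the value under BASE64 or NUMERIC."""
    if decimal_format == "BASE64":
        return '"' + connect_decimal_base64(value, scale) + '"'
    if decimal_format == "NUMERIC":
        return format(value, "f")  # plain JSON number literal
    raise ValueError(f"unknown decimal format: {decimal_format!r}")
```

For the value 123.45 with scale 2, the unscaled integer is 12345 (bytes 0x30 0x39), so BASE64 yields the JSON string "MDk=" and NUMERIC yields the number 123.45.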

value.converter.flatten.singleton.unions

Whether to flatten singleton unions. Applicable for Avro and JSON_SR Converters.

Type: boolean

Default: false

Importance: low

value.converter.reference.subject.name.strategy

Set the subject reference name strategy for value. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. Note that the subject reference name strategy can be selected only for PROTOBUF format with the default strategy being DefaultReferenceSubjectNameStrategy.

Type: string

Default: DefaultReferenceSubjectNameStrategy

Importance: low

value.converter.value.schema.id.serializer

The class name of the schema ID serializer for values. This is used to serialize schema IDs in the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.PrefixSchemaIdSerializer

Importance: low

value.converter.value.subject.name.strategy

Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Type: string

Default: TopicNameStrategy

Importance: low

Auto-restart policy

auto.restart.on.user.error

Enable connector to automatically restart on user-actionable errors.

Type: boolean

Default: true

Importance: medium

Frequently asked questions

Find answers to frequently asked questions about the Azure Event Hubs Source connector for Confluent Cloud.

Why do I see unique configuration value or higher epoch errors?

These errors occur when multiple connectors or applications attempt to use the same Azure Event Hubs consumer group simultaneously. Azure uses an epoch value to prioritize receivers. When a new instance connects with the same group name, it triggers a conflict, causing existing tasks to disconnect or fail validation.

Solution:

Assign a unique consumer group name to every connector instance reading from the same Event Hub.

Create distinct consumer groups in your Azure Event Hubs namespace.

Ensure that connectors in different environments like development and production are not sharing the same group name.
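One way to spot this conflict ahead of time is to scan your connector configurations for repeated consumer groups. The Python sketch below groups configurations by namespace, Event Hub, and consumer group; the function name is hypothetical, and it assumes each configuration is a dictionary using the property names from this page, with the consumer group defaulting to $Default when omitted.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Mapping, Tuple

GroupKey = Tuple[str, str, str]


def find_shared_consumer_groups(
    configs: Iterable[Mapping[str, str]],
) -> Dict[GroupKey, List[str]]:
    """Map (namespace, hub, consumer group) to connectors that share it."""
    users: Dict[GroupKey, List[str]] = defaultdict(list)
    for config in configs:
        key = (
            config["azure.eventhubs.namespace"],
            config["azure.eventhubs.hub.name"],
            config.get("azure.eventhubs.consumer.group", "$Default"),
        )
        users[key].append(config["name"])
    # Keep only the groups used by more than one connector.
    return {key: names for key, names in users.items() if len(names) > 1}
```

Any entry in the result identifies connectors that should be moved to their own consumer group.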

Why does the connector fail during initialization with a transport exception error?

A TransportException error during initialization indicates that the connector cannot establish a connection to Azure Event Hubs.

Common causes and solutions:

Network connectivity: Verify that your Confluent Cloud environment can reach Azure Event Hubs. If you’re using private networking, ensure proper configuration of PrivateLink or VPC peering.

Firewall rules: Check that Azure Event Hubs firewall rules allow connections from Confluent Cloud.

Port: The transport type setting azure.eventhubs.transport.type affects which port is used. Ensure the appropriate port is open based on your transport type:

AMQP uses port 5671

AMQP_WEB_SOCKETS uses port 443

Connection string validity: Verify that your Event Hubs namespace, Event Hub name, and Shared Access Policy credentials are correct.

Transport type configuration: If port 5671 is blocked in your environment, try using AMQP_WEB_SOCKETS as the transport type.
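For a quick reachability test of the ports listed above, a plain TCP connection attempt is often enough. The Python sketch below is a hypothetical helper rather than a Confluent tool. It only checks the network path from the machine where you run it, which may differ from the path Confluent Cloud uses, and the host name you pass is your own namespace endpoint.

```python
import socket

# Ports from the transport type descriptions above.
TRANSPORT_PORTS = {"AMQP": 5671, "AMQP_WEB_SOCKETS": 443}


def port_for_transport(transport_type: str) -> int:
    """Return the TCP port used by the given transport type."""
    try:
        return TRANSPORT_PORTS[transport_type]
    except KeyError:
        raise ValueError(f"unknown transport type: {transport_type!r}") from None


def can_reach(host: str, transport_type: str = "AMQP", timeout: float = 5.0) -> bool:
    """Try to open a TCP connection to host on the transport's port."""
    port = port_for_transport(transport_type)
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS failures.
        return False
```

If `can_reach(host, "AMQP")` fails while `can_reach(host, "AMQP_WEB_SOCKETS")` succeeds, a blocked port 5671 is a likely cause.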

Why does Terraform return a 400 error with Azure OAuth?

This issue can occur when the OAuth flow configuration is incorrect or when there are issues with the Provider Integration setup in Confluent Cloud.

Solution:

Verify that Provider Integration is correctly configured in your Confluent Cloud environment. See connector authentication for setup instructions.

Check that the Azure service principal credentials like client ID, client secret, and tenant ID are accurate and properly formatted in your Terraform configuration.

Ensure the service principal has the necessary API permissions in Microsoft Entra ID to access Event Hubs.

When using Terraform, verify that you’re using the correct resource attributes and that the provider integration resource is successfully created before the connector.

How do I optimize the connector’s throughput?

You can adjust the following configuration properties to optimize the connector’s throughput:

max.events: Increase this value up to the maximum of 499 to fetch more events per poll. The default is 50.

tasks.max: Increase the number of tasks based on the number of partitions in your Event Hub. The connector can parallelize consumption across partitions.

Transport type: If you’re experiencing network latency or firewall issues with AMQP using port 5671, try using AMQP_WEB_SOCKETS with port 443.

Monitor your connector’s performance metrics in the Confluent Cloud Console to identify bottlenecks.
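As a back-of-the-envelope aid for these settings, the Python sketch below computes an upper bound on the events fetched in one polling round. It assumes every partition is polled once per round and returns a full batch, which real workloads rarely do, so treat the result as a ceiling rather than a throughput prediction. The function name is hypothetical.

```python
MAX_EVENTS_LIMIT = 499  # maximum stated for max.events


def events_per_poll_round(partition_count: int, max_events: int = 50) -> int:
    """Upper bound on events read when each partition is polled once."""
    if partition_count < 1:
        raise ValueError("partition_count must be at least 1")
    if not 1 <= max_events <= MAX_EVENTS_LIMIT:
        raise ValueError(f"max.events must be between 1 and {MAX_EVENTS_LIMIT}")
    return partition_count * max_events
```

With four partitions, the default of 50 gives a ceiling of 200 events per round, and raising max.events to 499 lifts it to 1996.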

Why is the connector RUNNING but not ingesting data?

If the connector appears to be running but no data flows into your Kafka topic:

Verify Event Hub has data: Check that your Azure Event Hub is receiving events using the Azure Portal metrics.

Check consumer group position: The connector may have already consumed all available events. Verify the offset/sequence number position in Azure Event Hubs.

Review partition count: Ensure your connector has enough tasks to cover all Event Hub partitions. If you have fewer tasks than partitions, some partitions won’t be consumed.

Examine connector logs: Look for warnings or errors in the connector logs that might indicate configuration issues.

Check topic existence: Verify that the target Kafka topic exists and the connector has permission to write to it.

Next Steps

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud for Apache Flink, see the Cloud ETL Demo. This example also shows how to use Confluent CLI to manage your resources in Confluent Cloud.