Google Cloud BigQuery Sink Connector [End of Life] for Confluent Cloud

Important

This connector reached its end of life (EOL) on March 31, 2026. Confluent recommends migrating to the Google BigQuery Sink V2 connector. For more information, see Deprecated and end of life connectors.

You can use the Kafka Connect Google BigQuery Sink connector for Confluent Cloud to export Avro, JSON Schema, Protobuf, or JSON (schemaless) data from Apache Kafka® topics to BigQuery. The BigQuery table schema is based upon information in the Apache Kafka® schema for the topic.

Note

This is a Quick Start for the fully-managed cloud connector. If you are installing the connector locally for Confluent Platform, see Google BigQuery Sink connector for Confluent Platform.

Features

The connector supports insert operations and attempts to detect duplicates. See BigQuery troubleshooting for additional information.

The connector uses the BigQuery insertAll streaming API. Records are available for querying in the table immediately.

The connector supports streaming from a list of topics into corresponding tables in BigQuery.

Note

Make sure to review BigQuery rate limits if you are planning to use multiple connectors with a high number of tasks.

Even though the connector streams records one at a time by default (as opposed to running in batch mode), the connector is scalable because it contains an internal thread pool that allows it to stream records in parallel. The internal thread pool defaults to 10 threads.

The connector supports several time-based table partitioning strategies using the property partitioning.type.

The connector supports routing invalid records to the DLQ. This includes any records for which BigQuery returns a 400 code (invalid error message).

Note

DLQ routing does not work if Auto update schemas (auto.update.schemas) is enabled and the connector detects that the failure is due to a schema mismatch.

The connector supports Avro, JSON Schema, Protobuf, or JSON (schemaless) input data formats. Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

For Avro, JSON_SR, and PROTOBUF, the connector provides the following configuration properties that support automated table creation and updates. You can select these properties in the UI or add them to the connector configuration, if using the Confluent CLI.

auto.create.tables: Automatically create BigQuery tables if they don’t already exist. The connector expects that the BigQuery table name is the same as the topic name. If you create the BigQuery tables manually, make sure the table name matches the topic name.

sanitize.topics: Automatically sanitize topic names before using them as BigQuery table names. If not enabled, topic names are used as table names. If enabled, the table names created may be different from the topic names.

auto.update.schemas: Automatically update BigQuery table schemas.

sanitize.field.names: Automatically sanitize field names before using them as column names in BigQuery. Note that Kafka field names become column names in BigQuery.

Note

New tables and schema updates may take a few minutes to be detected by the Google Client Library. For more information, see the Google Cloud BigQuery API guide.
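The sanitizing rules this document gives for sanitize.field.names (BigQuery field names can contain only letters, numbers, and underscores; invalid symbols are replaced with underscores; a name that starts with a digit gets a leading underscore) can be sketched in Python as follows. This is a minimal illustration, not the connector's code: it assumes "letters" means ASCII letters, and sanitize_field_name and find_collisions are hypothetical helpers. The second helper shows why fields named a.b and a_b collide after sanitizing.

```python
import re

# Assumption: "letters" means ASCII letters; anything else outside [A-Za-z0-9_] is invalid.
_INVALID = re.compile(r"[^A-Za-z0-9_]")


def sanitize_field_name(name: str) -> str:
    """Hypothetical helper applying the documented sanitizing rules."""
    sanitized = _INVALID.sub("_", name)   # replace invalid symbols with underscores
    if sanitized[:1].isdigit():           # a leading digit gets an underscore prefix
        sanitized = "_" + sanitized
    return sanitized


def find_collisions(names):
    """Group original field names that sanitize to the same column name."""
    groups = {}
    for name in names:
        groups.setdefault(sanitize_field_name(name), []).append(name)
    return {column: originals for column, originals in groups.items() if len(originals) > 1}


print(sanitize_field_name("user-id"))        # user_id
print(sanitize_field_name("1st.visit"))      # _1st_visit
print(find_collisions(["a.b", "a_b", "c"]))  # {'a_b': ['a.b', 'a_b']}
```

Running a check like find_collisions over a record schema before enabling the property is one way to spot key duplication errors early.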

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.
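The insertAll streaming API mentioned under Features corresponds to BigQuery's tabledata.insertAll REST method. Each request carries a list of rows, and each row may include an insertId that BigQuery uses to de-duplicate retried rows on a best-effort basis. The Python sketch below only builds such a request body and does not call BigQuery. How the connector itself assigns insertId values is not described here, so the "<topic>-<partition>-<offset>" scheme and the helper name build_insert_all_body are assumptions for illustration.

```python
import json


def build_insert_all_body(topic, partition, records, skip_invalid_rows=False):
    """Build a tabledata.insertAll request body (hypothetical helper).

    `records` is a list of (offset, row) pairs, where `row` is a dict of column values.
    The "<topic>-<partition>-<offset>" insertId scheme is an illustrative assumption.
    """
    return {
        "skipInvalidRows": skip_invalid_rows,  # False: any invalid row fails the whole request
        "ignoreUnknownValues": False,          # treat values that match no column as errors
        "rows": [
            # A stable insertId lets BigQuery drop a retried duplicate on a best-effort basis.
            {"insertId": f"{topic}-{partition}-{offset}", "json": row}
            for offset, row in records
        ],
    }


body = build_insert_all_body("pageviews", 0, [(42, {"userid": "User_1", "pageid": "Page_7"})])
print(json.dumps(body, indent=2))
```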

Limitations

Be sure to review the following information.

For connector limitations, see Google BigQuery Sink (Legacy) Connector limitations.

If you plan to use one or more Single Message Transforms (SMTs), see SMT Limitations.

Supported data types

The following list contains the supported BigQuery data types and the associated connector mapping. Note that this mapping applies when the connector creates a table automatically or updates schemas (that is, if either auto.create.tables or auto.update.schemas is set to true).

| BigQuery Data Type | Connector Mapping |
|---|---|
| STRING | String |
| INTEGER | INT8 |
| INTEGER | INT16 |
| INTEGER | INT32 |
| INTEGER | INT64 |
| FLOAT | FLOAT32 |
| FLOAT | FLOAT64 |
| BOOLEAN | Boolean |
| BYTES | Bytes |
| TIMESTAMP | Logical TIMESTAMP |
| TIME | Logical TIME |
| DATE | Logical DATE |
| FLOAT | Logical Decimal |
| DATE | Debezium Date |
| TIME | Debezium MicroTime |
| TIME | Debezium Time |
| TIMESTAMP | Debezium MicroTimestamp |
| TIMESTAMP | Debezium TIMESTAMP |
| TIMESTAMP | Debezium ZonedTimestamp |
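To check ahead of time which BigQuery column type an auto-created or auto-updated table will use, the mapping above can be held in a lookup table. This is a minimal Python sketch: the keys reuse the labels from the table (for example "Debezium MicroTime") rather than class names from any library, and bigquery_type_for is a hypothetical helper.

```python
# Connector schema type (labels as in the table above) -> BigQuery column type.
CONNECT_TO_BIGQUERY = {
    "String": "STRING",
    "INT8": "INTEGER",
    "INT16": "INTEGER",
    "INT32": "INTEGER",
    "INT64": "INTEGER",
    "FLOAT32": "FLOAT",
    "FLOAT64": "FLOAT",
    "Boolean": "BOOLEAN",
    "Bytes": "BYTES",
    "Logical TIMESTAMP": "TIMESTAMP",
    "Logical TIME": "TIME",
    "Logical DATE": "DATE",
    "Logical Decimal": "FLOAT",
    "Debezium Date": "DATE",
    "Debezium MicroTime": "TIME",
    "Debezium Time": "TIME",
    "Debezium MicroTimestamp": "TIMESTAMP",
    "Debezium TIMESTAMP": "TIMESTAMP",
    "Debezium ZonedTimestamp": "TIMESTAMP",
}


def bigquery_type_for(connect_type: str) -> str:
    """Return the BigQuery type, or raise ValueError if the table does not list the type."""
    try:
        return CONNECT_TO_BIGQUERY[connect_type]
    except KeyError:
        raise ValueError(f"no documented BigQuery mapping for {connect_type!r}") from None


print(bigquery_type_for("INT32"))            # INTEGER
print(bigquery_type_for("Logical Decimal"))  # FLOAT
```

Because Logical Decimal maps to FLOAT, a 64-bit floating-point type, decimal values with more significant digits than a double can hold are rounded when written.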

Quick Start

Use this quick start to get up and running with the Confluent Cloud Google BigQuery Sink connector. The quick start provides the basics of selecting the connector and configuring it to stream events to a BigQuery data warehouse.

Tip

Confluent recommends using Version 2 of this connector. For more information, see Google BigQuery Sink V2 Connector for Confluent Cloud.

Prerequisites

An active Google Cloud account with authorization to create resources.

A BigQuery project is required. The project can be created using the Google Cloud Console.

The data system the sink connector is connecting to should be in the same region as your Confluent Cloud cluster. If you use a different region or cloud platform, be aware that you may incur additional data transfer charges. Contact your Confluent account team or Confluent Support if you need to use Confluent Cloud and connect to a data system that is in a different region or on a different cloud platform.

A BigQuery dataset is required in the project.

A service account that can access the BigQuery project containing the dataset. You can create this service account in the Google Cloud Console.

The service account must have access to the BigQuery project containing the dataset. You create and download a key when creating a service account. The key must be downloaded as a JSON file. It resembles the example below:

{
  "type": "service_account",
  "project_id": "confluent-842583",
  "private_key_id": "...omitted...",
  "private_key": "-----BEGIN PRIVATE ...omitted... =\n-----END PRIVATE KEY-----\n",
  "client_email": "confluent2@confluent-842583.iam.gserviceaccount.com",
  "client_id": "...omitted...",
  "auth_uri": "https://accounts.google.com/o/oauth2/auth",
  "token_uri": "https://oauth2.googleapis.com/token",
  "auth_provider_x509_cert_url": "https://www.googleapis.com/oauth2/v1/certs",
  "client_x509_cert_url": "https://www.googleapis.com/robot/v1/metadata/x509/confluent2%40confluent-842583.iam.gserviceaccount.com"
}

According to Google Cloud specifications, the service account must have either the BigQueryEditor primitive IAM role or the bigquery.dataEditor predefined IAM role. The minimum permissions are:

bigquery.datasets.get
bigquery.tables.create
bigquery.tables.get
bigquery.tables.getData
bigquery.tables.list
bigquery.tables.update
bigquery.tables.updateData

Kafka cluster credentials. The following lists the different ways you can provide credentials.

Enter an existing service account resource ID.

Create a Confluent Cloud service account for the connector. Make sure to review the ACL entries required in the service account documentation. Some connectors have specific ACL requirements.

Create a Confluent Cloud API key and secret. To create a key and secret, you can use confluent api-key create or you can autogenerate the API key and secret directly in the Cloud Console when setting up the connector.

The Confluent CLI installed and configured for the cluster. See Install the Confluent CLI.

Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

You must create a BigQuery table before using the connector if you leave Auto create tables (auto.create.tables) set to false (the default).

You may need to create a schema in BigQuery, depending on how you set the Auto update schemas property (auto.update.schemas).
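The minimum permissions listed above can be compared against the permissions your Google Cloud service account actually holds. The sketch below is a plain set comparison in Python; how you collect the granted permissions (for example, from your IAM tooling) is outside its scope, and missing_permissions is a hypothetical helper.

```python
# Minimum permissions listed in the prerequisites above.
REQUIRED_PERMISSIONS = frozenset({
    "bigquery.datasets.get",
    "bigquery.tables.create",
    "bigquery.tables.get",
    "bigquery.tables.getData",
    "bigquery.tables.list",
    "bigquery.tables.update",
    "bigquery.tables.updateData",
})


def missing_permissions(granted):
    """Return the required permissions absent from `granted`, in sorted order."""
    return sorted(REQUIRED_PERMISSIONS - set(granted))


granted = ["bigquery.datasets.get", "bigquery.tables.get", "bigquery.tables.list"]
print(missing_permissions(granted))
```

An empty list means the account has at least the minimum permissions; any names printed are the ones still to grant.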

Using the Confluent Cloud Console

Step 1: Launch your Confluent Cloud cluster

To create and launch a Kafka cluster in Confluent Cloud, see Create a Kafka cluster in Confluent Cloud.

Step 2: Add a connector

In the left navigation menu, click Connectors. If you already have connectors in your cluster, click + Add connector.

Step 3: Select your connector

Click the Google BigQuery Sink (Legacy) connector card.

Step 4: Enter the connector details

Note

Ensure you have all your prerequisites completed.

An asterisk ( * ) designates a required entry.

At the Add Google BigQuery Sink (Legacy) Connector screen, complete the following:

If you’ve already populated your Kafka topics, select the topics you want to connect from the Topics list.

To create a new topic, click +Add new topic.

Select the way you want to provide Kafka Cluster credentials. You can choose one of the following options:

My account: This setting allows your connector to globally access everything that you have access to. With a user account, the connector uses an API key and secret to access the Kafka cluster. This option is not recommended for production.

Service account: This setting limits the access for your connector by using a service account. This option is recommended for production.

Use an existing API key: This setting allows you to specify an API key and a secret pair. You can use an existing pair or create a new one. This method is not recommended for production environments.

Note

Freight clusters support only service accounts for Kafka authentication.

Click Continue.

Configure the authentication properties:

GCP credentials file: Upload your GCP credentials file, the Google Cloud service account JSON file with write permissions for BigQuery.

GCP Project ID: In the Project ID field, enter the ID for the Google Cloud project where BigQuery is located.

Dataset: In the Dataset field, enter the name of the BigQuery dataset that Kafka topics write to.

Click Continue.

Note

Configuration properties that are not shown in the Cloud Console use the default values. See Configuration Properties for all property values and definitions.

Input Kafka record value format: Select the input Kafka record value format (data coming from the Kafka topic). Valid entries are AVRO, JSON_SR (JSON Schema), or PROTOBUF. A valid schema must be available in Schema Registry to use a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

Show advanced configurations

Schema context: Select a schema context to use for this connector, if using a schema-based data format. This property defaults to the Default context, which configures the connector to use the default schema set up for Schema Registry in your Confluent Cloud environment. A schema context allows you to use separate schemas (like schema sub-registries) tied to topics in different Kafka clusters that share the same Schema Registry environment. For example, if you select a non-default context, a Source connector uses only that schema context to register a schema and a Sink connector uses only that schema context to read from. For more information about setting up a schema context, see What are schema contexts and when should you use them?.

Input Kafka record key format: Sets the data format for incoming record keys. Valid entries are AVRO, BYTES, JSON, JSON_SR, PROTOBUF, or STRING. A valid schema must be available in Schema Registry to use a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

Partitioning type: The partitioning type to use.

INGESTION_TIME: To use this type, existing tables must be partitioned by ingestion time. The connector writes to the partition for the current wall clock time. When Auto create tables is enabled, the connector creates tables partitioned by ingestion time.

NONE: The connector relies only on how the existing tables are set up. When Auto create tables is enabled, the connector creates non-partitioned tables.

RECORD_TIME: To use this type, existing tables must be partitioned by ingestion time. The connector writes to the partition that corresponds to the Kafka record’s timestamp. When Auto create tables is enabled, the connector creates tables partitioned by ingestion time.

TIMESTAMP_COLUMN: The connector relies only on how existing tables are set up. When Auto create tables is enabled, the connector creates tables partitioned using a field in a Kafka record value.

Kafka Topic to BigQuery Table Map: Map of topics to tables (optional). The required format is comma-separated tuples.

Auto create tables: Designates whether to automatically create BigQuery tables. Supports AVRO, JSON_SR, and PROTOBUF message formats only.

Auto update schemas: Designates whether to automatically update BigQuery schemas. New fields in record schemas must be nullable. Supports AVRO, JSON_SR, and PROTOBUF message formats only.

Sanitize topics: Designates whether to automatically sanitize topic names before using them as table names in BigQuery. If not enabled, topic names are used as table names.

Sanitize field names: Designates whether to automatically sanitize field names before using them as column names in BigQuery. BigQuery specifies that field names can only contain letters, numbers, and underscores. The sanitizer replaces invalid symbols with underscores. If the field name starts with a digit, the sanitizer adds an underscore in front of the field name. Caution: Key duplication errors can occur if different fields are named, for instance, a.b and a_b. After sanitizing, field names a.b and a_b have the same value.

Time partitioning type: The time partitioning type to use when creating new tables for the partitioning.type values INGESTION_TIME, RECORD_TIME, or TIMESTAMP_COLUMN. Existing tables are not altered to use this partitioning type.

Timestamp partition field name: The name of the field in the value that contains the timestamp to partition by in BigQuery. This enables timestamp partitioning for tables. The connector ignores this property if partitioning.type is not TIMESTAMP_COLUMN or if auto.create.tables is false.

Allow schema unionization: If enabled, when performing schema updates, record schemas are combined with the current schema of the BigQuery table. This can be useful if there are Kafka records with schemas that are missing fields that correspond to columns already present in the BigQuery table schema. Use caution when enabling this property: if unrelated records are produced to a topic the connector consumes, the connector does not fail on the invalid data. Instead, it appends the fields from the unrelated record schema to the BigQuery table schema.

All BigQuery fields nullable: If true, no fields in any produced BigQuery schema are required. All non-nullable Avro fields are translated as NULLABLE (or REPEATED, if arrays).

Convert double special values: Designates whether +Infinity is converted to Double.MAX_VALUE and whether -Infinity and NaN are converted to Double.MIN_VALUE to ensure successful delivery to BigQuery.
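The substitution that Convert double special values describes can be sketched in Python. Python has no Double.MAX_VALUE or Double.MIN_VALUE constants, so the sketch assumes the property refers to the Java constants of those names: Double.MAX_VALUE is the largest finite double (sys.float_info.max in Python), and Double.MIN_VALUE is the smallest positive double, 2^-1074, not the most negative one. Under that assumption, -Infinity and NaN become a tiny positive number rather than a large negative one. The function name is hypothetical.

```python
import math
import sys

JAVA_DOUBLE_MAX_VALUE = sys.float_info.max  # 1.7976931348623157e308, equal to Java's Double.MAX_VALUE
JAVA_DOUBLE_MIN_VALUE = 2.0 ** -1074        # Java's Double.MIN_VALUE: the smallest *positive* double


def convert_double_special_value(value: float) -> float:
    """Replace +Infinity, -Infinity, and NaN as the documented rule describes."""
    if value == math.inf:
        return JAVA_DOUBLE_MAX_VALUE
    if value == -math.inf or math.isnan(value):
        return JAVA_DOUBLE_MIN_VALUE
    return value  # ordinary values pass through unchanged


for v in (math.inf, -math.inf, math.nan, 1.5):
    print(v, "->", convert_double_special_value(v))
```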

Additional Configs

Value Converter Decimal Format: Specifies the JSON or JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals: BASE64 to serialize DECIMAL logical types as base64-encoded binary data, and NUMERIC to serialize DECIMAL logical type values in JSON or JSON_SR as a number representing the decimal value.

Value Converter Replace Null With Default: Specifies whether to replace fields that have a default value and that are null with the default value. When set to true, the connector uses the default value; otherwise, it uses null. Applies to the JSON converter.

Schema GUID For Key Converter: Sets the schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This lets you specify a fixed schema GUID for deserializing message keys. This property is applicable only when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Value Converter Schema ID Deserializer: Sets the class name of the schema ID deserializer for values. The deserializer reads schema IDs from message headers.

Schema GUID For Value Converter: Sets the schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This lets you specify a fixed schema GUID for deserializing message values. This property is applicable only when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Value Converter Reference Subject Name Strategy: Sets the subject reference name strategy for values. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. You can use this strategy only with PROTOBUF format; the default strategy is DefaultReferenceSubjectNameStrategy.

Schema ID For Value Converter: Sets the schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This lets you specify a fixed schema ID for deserializing message values. This property is applicable only when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Value Converter Schemas Enable: Includes the schema within each of the serialized values. Input messages must contain schema and payload fields and must not contain additional fields. For plain JSON data, set this to false. Applies to the JSON converter.

Errors Tolerance: Use this property to configure the connector’s error handling behavior.

Warning

Use this property with caution for sink connectors, as it can lead to data loss. If you set this property to all, the connector does not fail on errant records, but logs them (and sends them to the DLQ for sink connectors) and continues processing. If you set this property to none, the connector task fails on errant records.

Value Converter Connect Meta Data: Enables the Connect converter to add its metadata to the output schema. Applies to Avro converters.

Value Converter Value Subject Name Strategy: Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Key Converter Key Subject Name Strategy: Determines how to construct the subject name for key schema registration.

Value Converter Ignore Default For Nullables: When set to true, this property ensures that the corresponding record in Kafka is null, instead of showing the default column value. Applies to the AVRO, PROTOBUF, and JSON_SR converters.

Key Converter Schema ID Deserializer: Sets the class name of the schema ID deserializer for keys. The deserializer reads schema IDs from message headers.

Schema ID For Key Converter: Sets the schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This lets you specify a fixed schema ID for deserializing message keys. This property is applicable only when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Auto-restart policy

Enable Connector Auto-restart: Enables the auto-restart behavior of the connector and its tasks in the event of user-actionable errors. Defaults to true, enabling the connector to automatically restart in case of user-actionable errors. Set this property to false to disable auto-restart for failed connectors. In such cases, you would need to manually restart the connector.

Consumer configuration

Max poll interval (ms): Sets the maximum delay between subsequent consume requests to Kafka. Use this property to improve connector performance in cases when the connector cannot send records to the sink system. The default is 300,000 milliseconds (5 minutes).

Max poll records: Sets the maximum number of records to consume from Kafka in a single request. Use this property to improve connector performance in cases when the connector cannot send records to the sink system. The default is 500 records.
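As a rough back-of-the-envelope check on the two consumer settings above: if handling one poll's worth of records takes longer than the poll interval, the Kafka consumer is considered failed and its partitions are rebalanced. Dividing the interval by the maximum number of records gives the average time available per record; with the defaults, 300,000 ms / 500 records = 600 ms per record. This Python sketch treats all time between polls as record handling, which is a simplifying assumption, and per_record_budget_ms is a hypothetical helper.

```python
def per_record_budget_ms(max_poll_interval_ms: int = 300_000, max_poll_records: int = 500) -> float:
    """Average time each record may take before the poll interval is exceeded."""
    if max_poll_records <= 0:
        raise ValueError("max_poll_records must be positive")
    return max_poll_interval_ms / max_poll_records


print(per_record_budget_ms())                      # 600.0 (defaults)
print(per_record_budget_ms(max_poll_records=100))  # 3000.0
```

Lowering Max poll records or raising Max poll interval both increase the per-record budget, which is why either setting can help when the connector cannot send records to BigQuery quickly enough.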

Transforms

Single Message Transforms: To add a new SMT, see Add transforms. For more information about unsupported SMTs, see Unsupported transformations.

Processing position

Set offsets: Click Set offsets to define a specific offset for this connector to begin processing data from. For more information on managing offsets, see Manage offsets.

See Configuration Properties for all property values and definitions.

Click Continue.

Based on the number of topic partitions you select, you will be provided with a recommended number of tasks.

To change the number of recommended tasks, enter the number of tasks for the connector to use in the Tasks field.

Click Continue.

Step 5: Check the results in BigQuery

From the Google Cloud Console, go to your BigQuery project.

Query your datasets and verify that new records are being added.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Tip

When you launch a connector, a Dead Letter Queue topic is automatically created. See View Connector Dead Letter Queue Errors in Confluent Cloud for details.

Note

Make sure to review BigQuery rate limits if you are planning to use multiple connectors with a high number of tasks.

Using the Confluent CLI

Complete the following steps to set up and run the connector using the Confluent CLI.

Note

Make sure you have all your prerequisites completed.

Step 1: List the available connectors

Enter the following command to list available connectors:

confluent connect plugin list

Step 2: List the connector configuration properties

Enter the following command to show the connector configuration properties:

confluent connect plugin describe <connector-plugin-name>

The command output shows the required and optional configuration properties.

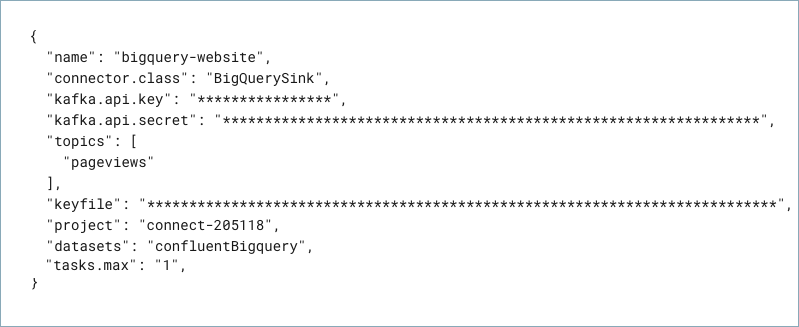

Step 3: Create the connector configuration file

Tip

Confluent recommends using Version 2 of this connector. For more information, see Google BigQuery Sink V2 Connector for Confluent Cloud.

Create a JSON file that contains the connector configuration properties. The following example shows the required connector properties.

{
  "name" : "confluent-bigquery-sink",
  "connector.class" : "BigQuerySink",
  "kafka.auth.mode": "KAFKA_API_KEY",
  "kafka.api.key" : "<my-kafka-api-key>",
  "kafka.api.secret" : "<my-kafka-api-secret>",
  "keyfile" : "omitted",
  "project" : "<my-BigQuery-project>",
  "datasets" : "<my-BigQuery-dataset>",
  "input.data.format" : "AVRO",
  "auto.create.tables" : "true",
  "sanitize.topics" : "true",
  "auto.update.schemas" : "true",
  "sanitize.field.names" : "true",
  "tasks.max" : "1",
  "topics" : "pageviews"
}
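Before loading a file like the one above with confluent connect cluster create, a small script can confirm that the file parses as JSON (a missing comma is a common mistake in hand-edited files) and that the authentication properties match the chosen mode: kafka.api.key and kafka.api.secret for KAFKA_API_KEY, the default, or kafka.service.account.id for SERVICE_ACCOUNT. In this Python sketch, check_config and check_config_file are hypothetical helpers, and treating name, connector.class, topics, keyfile, project, and datasets as always required is an assumption based on the Configuration Properties list, where they are high importance with no default.

```python
import json

# Assumed always-required properties (high importance, no default in Configuration Properties).
BASE_REQUIRED = ["name", "connector.class", "topics", "keyfile", "project", "datasets"]
AUTH_REQUIRED = {
    "KAFKA_API_KEY": ["kafka.api.key", "kafka.api.secret"],
    "SERVICE_ACCOUNT": ["kafka.service.account.id"],
}


def check_config(config: dict) -> list:
    """Return a list of problems found in a connector configuration dict."""
    problems = [f"missing {key}" for key in BASE_REQUIRED if not config.get(key)]
    mode = config.get("kafka.auth.mode", "KAFKA_API_KEY")  # documented default mode
    if mode not in AUTH_REQUIRED:
        problems.append(f"unknown kafka.auth.mode {mode!r}")
    else:
        problems += [f"missing {key} for {mode}" for key in AUTH_REQUIRED[mode] if not config.get(key)]
    return problems


def check_config_file(path: str) -> list:
    """Parse the file (a syntax error raises json.JSONDecodeError), then check it."""
    with open(path, encoding="utf-8") as handle:
        return check_config(json.load(handle))


example = {
    "name": "confluent-bigquery-sink", "connector.class": "BigQuerySink",
    "kafka.auth.mode": "SERVICE_ACCOUNT", "topics": "pageviews", "keyfile": "omitted",
    "project": "<my-BigQuery-project>", "datasets": "<my-BigQuery-dataset>",
}
print(check_config(example))  # ['missing kafka.service.account.id for SERVICE_ACCOUNT']
```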

Note the following property definitions:

"name": Sets a name for your new connector.

"connector.class": Identifies the connector plugin name.

"kafka.auth.mode": Identifies the connector authentication mode you want to use. There are two options: SERVICE_ACCOUNT or KAFKA_API_KEY (the default). To use an API key and secret, specify the configuration properties kafka.api.key and kafka.api.secret, as shown in the example configuration (above). To use a service account, specify the Resource ID in the property kafka.service.account.id=<service-account-resource-ID>. To list the available service account resource IDs, use the following command:

confluent iam service-account list

For example:

confluent iam service-account list

    Id   | Resource ID |  Name  |    Description
+--------+-------------+--------+--------------------
  123456 | sa-l1r23m   | sa-1   | Service account 1
  789101 | sa-l4d56p   | sa-2   | Service account 2

"topics": Identifies the topic name or a comma-separated list of topic names.

"keyfile": This contains the contents of the downloaded JSON file. See Formatting keyfile credentials for details about how to format and use the contents of the downloaded credentials file.

"input.data.format": Sets the input Kafka record value format (data coming from the Kafka topic). Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. You must have Confluent Cloud Schema Registry configured if using a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

The following are additional properties you can use. See Configuration Properties for all property values and definitions.

"auto.create.tables": Designates whether to automatically create BigQuery tables. Defaults to false. The connector expects that the BigQuery table name is the same as the topic name. If you create the BigQuery tables manually, make sure the table name matches the topic name. Note that this property is available for AVRO, JSON_SR, and PROTOBUF only.

Note

New tables and schema updates may take a few minutes to be detected by the Google Client Library. For more information, see the Google Cloud BigQuery API guide.

"auto.update.schemas": Designates whether to automatically update BigQuery schemas. Defaults to false. If true, new fields are added with mode NULLABLE in the BigQuery schema. Note that this property is available for AVRO, JSON_SR, and PROTOBUF only.

"sanitize.topics": Designates whether to automatically sanitize topic names before using them as table names. If not enabled, topic names are used as table names. If enabled, the table names created may be different from the topic names. Source topic names must comply with BigQuery naming conventions even if sanitize.topics is set to true.

"sanitize.field.names": Designates whether to automatically sanitize field names before using them as column names in BigQuery. BigQuery specifies that field names can only contain letters, numbers, and underscores. The sanitizer replaces invalid symbols with underscores. If the field name starts with a digit, the sanitizer adds an underscore in front of the field name. If not used, field names are used as column names.

Caution

Fields a.b and a_b will have the same value after sanitizing, which could cause a key duplication error.

"partitioning.type": Select a partitioning type to use:

"INGESTION_TIME": To use this type, existing tables must be partitioned by ingestion time. The connector writes to the partition for the current wall clock time. When "auto.create.tables" is true, the connector creates tables partitioned by ingestion time.

"NONE": The connector relies only on how the existing tables are set up. When "auto.create.tables" is true, the connector creates non-partitioned tables.

"RECORD_TIME": To use this type, existing tables must be partitioned by ingestion time. The connector writes to the partition that corresponds to the Kafka record’s timestamp. When "auto.create.tables" is true, the connector creates tables partitioned by ingestion time.

"TIMESTAMP_COLUMN": The connector relies only on how existing tables are set up. When "auto.create.tables" is true, the connector creates tables partitioned using a field in a Kafka record value.

"time.partitioning.type": When using INGESTION_TIME, RECORD_TIME, or TIMESTAMP_COLUMN, enter a time span for time partitioning. If you enter NONE, the connector honors the existing BigQuery table partitioning, and when "auto.create.tables" is true, the connector creates tables without a specific partitioning strategy.

"timestamp.partition.field.name": To use this property, "partitioning.type" must be TIMESTAMP_COLUMN and "auto.create.tables" must be set to true. Enter the name of the value field that contains the timestamp to partition by in BigQuery. This enables timestamp partitioning for each table.

Single Message Transforms: See the Single Message Transforms (SMT) documentation for details about adding SMTs using the CLI. See Unsupported transformations for a list of SMTs that are not supported with this connector.

See Configuration Properties for all property values and definitions.
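For RECORD_TIME, the connector writes to the partition that corresponds to each Kafka record's timestamp. BigQuery addresses a single day partition of a partitioned table with a partition decorator of the form table$YYYYMMDD. The Python sketch below shows how a Kafka record timestamp (milliseconds since the epoch) maps to such a daily decorator, assuming UTC and daily partitioning. It illustrates the concept only and is not taken from the connector's source; day_partition_decorator is a hypothetical helper.

```python
from datetime import datetime, timezone


def day_partition_decorator(table: str, record_timestamp_ms: int) -> str:
    """Return "<table>$YYYYMMDD" for the UTC day that contains the record timestamp."""
    day = datetime.fromtimestamp(record_timestamp_ms / 1000, tz=timezone.utc)
    return f"{table}${day:%Y%m%d}"


# 1,700,000,000,000 ms is 2023-11-14T22:13:20Z.
print(day_partition_decorator("pageviews", 1_700_000_000_000))  # pageviews$20231114
```

With INGESTION_TIME, by contrast, the partition is chosen from the current wall clock time, so the record timestamp plays no part.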

Formatting keyfile credentials

The contents of the downloaded credentials file must be converted to string format before it can be used in the connector configuration.

Convert the JSON file contents into string format.

Add the escape character \ before all \n entries in the Private Key section so that each section begins with \\n (see the private_key value in the example configuration below). The example below has been formatted so that the \\n entries are easier to see. Most of the credentials key has been omitted.

Tip

A script is available that converts the credentials to a string and also adds the additional escape characters where needed. See Stringify Google Cloud Credentials.
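The same conversion can also be sketched in Python. Converting the key file to a string and adding the extra escape characters amounts to encoding the key as JSON once, then storing that string in the connector configuration, which is encoded as JSON a second time. The second encoding escapes every quote as \" and every backslash as \\, so each \n inside private_key is written as \\n. This is an alternative to the Stringify Google Cloud Credentials script, not a copy of it; keyfile_property, write_config, and the file names are hypothetical.

```python
import json


def keyfile_property(key: dict) -> str:
    """First encoding: turn the parsed key file into a single-line JSON string."""
    return json.dumps(key, separators=(",", ":"))


def write_config(key_path: str, base_config: dict, out_path: str) -> None:
    """Write a connector config whose "keyfile" property holds the stringified key."""
    with open(key_path, encoding="utf-8") as handle:
        key = json.load(handle)  # fails early if the downloaded key is not valid JSON
    config = {**base_config, "keyfile": keyfile_property(key)}
    with open(out_path, "w", encoding="utf-8") as handle:
        # Second encoding: quotes become \" and each \n inside private_key becomes \\n.
        json.dump(config, handle, indent=2)


# Hypothetical usage; base_config would hold the other properties from Step 3.
# write_config("service-account-key.json",
#              {"name": "confluent-bigquery-sink", "connector.class": "BigQuerySink"},
#              "bigquery-sink-config.json")
```

Letting a JSON library do both encodings avoids hand-escaping mistakes, and reading the written file back with json.load returns the original key once the keyfile string is parsed again.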

{
  "name" : "confluent-bigquery-sink",
  "connector.class" : "BigQuerySink",
  "kafka.api.key" : "<my-kafka-api-key>",
  "kafka.api.secret" : "<my-kafka-api-secret>",
  "topics" : "pageviews",
  "keyfile" : "{\"type\":\"service_account\",\"project_id\":\"connect-1234567\",\"private_key_id\":\"omitted\",\"private_key\":\"-----BEGIN PRIVATE KEY-----\\nMIIEvAIBADANBgkqhkiG9w0BA\\n6MhBA9TIXB4dPiYYNOYwbfy0Lki8zGn7T6wovGS5opzsIh\\nOAQ8oRolFprdwc2cC5wyZ2+E+bhwn\\nPdCTW+oZoodY\\nOGB18cCKn5mJRzpiYsb5eGv2fN\/J\\n...rest of key omitted...\\n-----END PRIVATE KEY-----\\n\",\"client_email\":\"pub-sub@connect-123456789.iam.gserviceaccount.com\",\"client_id\":\"123456789\",\"auth_uri\":\"https:\/\/accounts.google.com\/o\/oauth2\/auth\",\"token_uri\":\"https:\/\/oauth2.googleapis.com\/token\",\"auth_provider_x509_cert_url\":\"https:\/\/www.googleapis.com\/oauth2\/v1\/certs\",\"client_x509_cert_url\":\"https:\/\/www.googleapis.com\/robot\/v1\/metadata\/x509\/pub-sub%40connect-123456789.iam.gserviceaccount.com\"}",
  "project": "<my-BigQuery-project>",
  "datasets": "<my-BigQuery-dataset>",
  "input.data.format": "AVRO",
  "tasks.max" : "1"
}

Add all the converted string content to the "keyfile" credentials section of your configuration file as shown in the example above.

Step 4: Load the configuration file and create the connector

Enter the following command to load the configuration and start the connector:

confluent connect cluster create --config-file <file-name>.json

For example:

confluent connect cluster create --config-file bigquery-sink-config.json

Example output:

Created connector confluent-bigquery-sink lcc-ix4dl

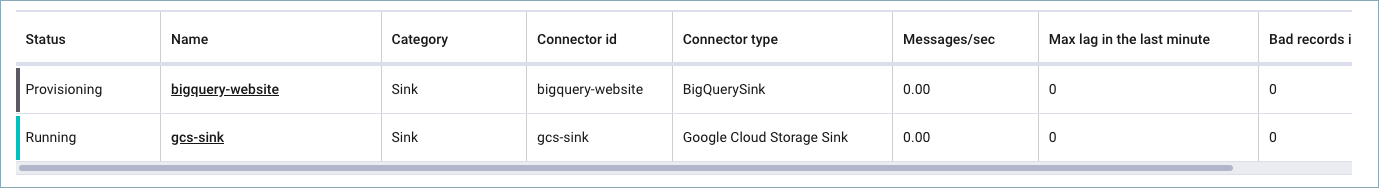

Step 5: Check the connector status

Enter the following command to check the connector status:

confluent connect cluster list

Example output:

     ID     |          Name           | Status  | Type
+-----------+-------------------------+---------+------+
  lcc-ix4dl | confluent-bigquery-sink | RUNNING | sink
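If you script these steps, you can read the Status column from the human-readable table shown above. The parser below is a minimal Python sketch that assumes the column layout of this example output (ID, Name, Status, and Type separated by |); that layout is not a documented output contract, so a machine-readable output format, if your CLI version offers one, is a sturdier choice. connector_statuses is a hypothetical helper.

```python
def connector_statuses(table_text: str) -> dict:
    """Map connector name -> status from the table printed by `confluent connect cluster list`."""
    statuses = {}
    header = None
    for line in table_text.splitlines():
        line = line.strip()
        if not line or line.startswith("+"):  # skip blank lines and the separator row
            continue
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if header is None:
            header = cells  # the first content row holds the column names
            continue
        row = dict(zip(header, cells))
        statuses[row["Name"]] = row["Status"]
    return statuses


output = """
     ID     |          Name           | Status  | Type
+-----------+-------------------------+---------+------+
  lcc-ix4dl | confluent-bigquery-sink | RUNNING | sink
"""
print(connector_statuses(output))  # {'confluent-bigquery-sink': 'RUNNING'}
```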

Step 6: Check the results in BigQuery

From the Google Cloud Console, go to your BigQuery project.

Query your datasets and verify that new records are being added.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Tip

When you launch a connector, a Dead Letter Queue topic is automatically created. See View Connector Dead Letter Queue Errors in Confluent Cloud for details.

Note

Make sure to review BigQuery rate limits if you are planning to use multiple connectors with a high number of tasks.

Configuration Properties

Use the following configuration properties with this connector.

Which topics do you want to get data from?

topics

Identifies the topic name or a comma-separated list of topic names.

Type: list

Importance: high

errors.deadletterqueue.topic.name

The name of the topic to be used as the dead letter queue (DLQ) for messages that result in an error when processed by this sink connector, or its transformations or converters. Defaults to ‘dlq-${connector}’ if not set. The DLQ topic will be created automatically if it does not exist. You can provide ${connector} in the value to use it as a placeholder for the logical cluster ID.

Type: string

Default: dlq-${connector}

Importance: low
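The ${connector} placeholder behaves like a template variable. The Python sketch below uses string.Template, which happens to use the same ${name} syntax, to show how the default dlq-${connector} and a custom pattern would expand. The connector performs its own substitution, so this only illustrates the idea; dlq_topic_name and the ID value lcc-abc123 are hypothetical.

```python
from string import Template

DEFAULT_DLQ_TOPIC = "dlq-${connector}"


def dlq_topic_name(pattern: str, value: str) -> str:
    """Expand the ${connector} placeholder in a DLQ topic name pattern."""
    return Template(pattern).substitute(connector=value)


print(dlq_topic_name(DEFAULT_DLQ_TOPIC, "lcc-abc123"))         # dlq-lcc-abc123
print(dlq_topic_name("bq-errors-${connector}", "lcc-abc123"))  # bq-errors-lcc-abc123
```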

Schema Config

schema.context.name

Add a schema context name. A schema context represents an independent scope in Schema Registry. It is a separate sub-schema tied to topics in different Kafka clusters that share the same Schema Registry instance. If not used, the connector uses the default schema configured for Schema Registry in your Confluent Cloud environment.

Type: string

Default: default

Importance: medium

Input messages

input.key.format

Sets the input Kafka record key format. Valid entries are AVRO, BYTES, JSON, JSON_SR, PROTOBUF, or STRING. Note that you must have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, or PROTOBUF.

Type: string

Default: BYTES

Valid Values: AVRO, BYTES, JSON, JSON_SR, PROTOBUF, STRING

Importance: high

input.data.format

Sets the input Kafka record value format. Valid entries are AVRO, JSON_SR, PROTOBUF, and JSON. Note that you must have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, or PROTOBUF.

Type: string

Default: JSON

Importance: high
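The Schema Registry requirement for the two format properties can be checked up front. This is a minimal sketch using the valid values and defaults listed above; needs_schema_registry is a hypothetical helper, not part of the connector.

```python
# Values listed on this page for input.key.format and input.data.format.
VALID_KEY_FORMATS = {"AVRO", "BYTES", "JSON", "JSON_SR", "PROTOBUF", "STRING"}
VALID_VALUE_FORMATS = {"AVRO", "JSON_SR", "PROTOBUF", "JSON"}
SCHEMA_BASED_FORMATS = {"AVRO", "JSON_SR", "PROTOBUF"}


def needs_schema_registry(config: dict) -> bool:
    """Return True if either format requires Confluent Cloud Schema Registry."""
    # Fall back to the documented defaults: BYTES for keys, JSON for values.
    key_fmt = config.get("input.key.format", "BYTES")
    value_fmt = config.get("input.data.format", "JSON")
    if key_fmt not in VALID_KEY_FORMATS:
        raise ValueError(f"invalid input.key.format: {key_fmt}")
    if value_fmt not in VALID_VALUE_FORMATS:
        raise ValueError(f"invalid input.data.format: {value_fmt}")
    return key_fmt in SCHEMA_BASED_FORMATS or value_fmt in SCHEMA_BASED_FORMATS


print(needs_schema_registry({"input.data.format": "AVRO"}))
```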

How should we connect to your data?

name

Sets a name for your connector.

Type: string

Valid Values: A string at most 64 characters long

Importance: high

Kafka Cluster credentials

kafka.auth.mode

Kafka authentication mode. It can be either KAFKA_API_KEY or SERVICE_ACCOUNT. Defaults to KAFKA_API_KEY mode whenever possible.

Type: string

Valid Values: SERVICE_ACCOUNT, KAFKA_API_KEY

Importance: high

kafka.api.key

Kafka API key. Required when kafka.auth.mode is set to KAFKA_API_KEY.

Type: password

Importance: high

kafka.service.account.id

The service account that is used to generate the API keys for communicating with the Kafka cluster.

Type: string

Importance: high

kafka.api.secret

Secret associated with the Kafka API key. Required when kafka.auth.mode is set to KAFKA_API_KEY.

Type: password

Importance: high
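The credential rules above can be expressed as a quick pre-flight check. This is a sketch with a hypothetical helper; the page states that the API key and secret are required in KAFKA_API_KEY mode, while requiring kafka.service.account.id in SERVICE_ACCOUNT mode is an assumption based on its description.

```python
from typing import List


def check_kafka_auth(config: dict) -> List[str]:
    """Return a list of problems with the Kafka credential properties."""
    mode = config.get("kafka.auth.mode", "KAFKA_API_KEY")
    problems: List[str] = []
    if mode == "KAFKA_API_KEY":
        # Both properties are documented as required in this mode.
        for prop in ("kafka.api.key", "kafka.api.secret"):
            if not config.get(prop):
                problems.append(f"{prop} is required when kafka.auth.mode is KAFKA_API_KEY")
    elif mode == "SERVICE_ACCOUNT":
        # Assumption: a service account ID is needed to generate the API keys.
        if not config.get("kafka.service.account.id"):
            problems.append("kafka.service.account.id is needed in SERVICE_ACCOUNT mode")
    else:
        problems.append(f"unsupported kafka.auth.mode: {mode}")
    return problems


print(check_kafka_auth({"kafka.api.key": "<key>"}))
```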

GCP credentials

keyfile

GCP service account JSON file with write permissions for BigQuery.

Type: password

Importance: high

BigQuery details

project

ID of the GCP project where BigQuery is located.

Type: string

Importance: high

datasets

Name of the BigQuery dataset that Kafka topics write to.

Type: string

Importance: high
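The properties covered so far can be assembled into a connector configuration and serialized as JSON. This is a sketch only: the helper and all values are placeholders, connector.class and other Quick Start settings are deliberately omitted, and passing the key file's contents as a single string in keyfile is an assumption.

```python
import json
from typing import Dict, List


def build_bigquery_sink_config(name: str, topics: List[str], keyfile_contents: str,
                               project: str, dataset: str,
                               api_key: str, api_secret: str) -> Dict[str, str]:
    """Assemble a partial config from properties on this page (hypothetical helper)."""
    if len(name) > 64:
        raise ValueError("name must be at most 64 characters long")
    json.loads(keyfile_contents)  # fail early if the key file is not valid JSON
    return {
        "name": name,
        "topics": ",".join(topics),
        "input.data.format": "AVRO",  # assumes Schema Registry is enabled
        "kafka.auth.mode": "KAFKA_API_KEY",
        "kafka.api.key": api_key,
        "kafka.api.secret": api_secret,
        "keyfile": keyfile_contents,
        "project": project,
        "datasets": dataset,
        "tasks.max": "1",
    }


keyfile = json.dumps({"type": "service_account", "project_id": "my-gcp-project"})
cfg = build_bigquery_sink_config("bq-sink", ["orders", "payments"], keyfile,
                                 "my-gcp-project", "kafka_data", "<api-key>", "<api-secret>")
print(json.dumps(cfg, indent=2))
```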

SQL/DDL Support

partitioning.type

The partitioning type to use.

NONE: The connector relies only on how the existing tables are set up. If the Auto create tables property is enabled, the connector creates non-partitioned tables.

INGESTION_TIME: Existing tables must be partitioned by ingestion time. The connector writes to the partition for the current wall clock time. If the Auto create tables property is enabled, the connector creates tables partitioned by ingestion time.

RECORD_TIME: Existing tables must be partitioned by ingestion time. The connector writes to the partition based on the Kafka record timestamp. If the Auto create tables property is enabled, the connector creates tables partitioned by ingestion time. The only supported time.partitioning.type value for RECORD_TIME is DAY.

TIMESTAMP_COLUMN: The connector relies only on how the existing tables are set up. If the Auto create tables property is enabled, the connector creates tables partitioned by the field set in timestamp.partition.field.name.

Type: string

Default: INGESTION_TIME

Importance: high
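The difference between INGESTION_TIME and RECORD_TIME comes down to which clock picks the partition. The sketch below models that choice for DAY partitioning, assuming partitions are keyed by UTC date; target_day_partition is a hypothetical helper, not connector code.

```python
from datetime import datetime, timezone
from typing import Optional


def target_day_partition(partitioning_type: str, record_timestamp_ms: int,
                         now: Optional[datetime] = None) -> Optional[str]:
    """Return the YYYYMMDD day partition a record would be written to.

    Simplified model assuming time.partitioning.type=DAY and UTC dates.
    Returns None when the connector does not choose the partition itself.
    """
    if partitioning_type == "INGESTION_TIME":
        # Partition for the current wall clock time.
        moment = now if now is not None else datetime.now(timezone.utc)
    elif partitioning_type == "RECORD_TIME":
        # Partition derived from the Kafka record timestamp (epoch milliseconds).
        moment = datetime.fromtimestamp(record_timestamp_ms / 1000, tz=timezone.utc)
    elif partitioning_type in ("NONE", "TIMESTAMP_COLUMN"):
        # The existing table setup, or the timestamp column value, decides.
        return None
    else:
        raise ValueError(f"unknown partitioning.type: {partitioning_type}")
    return moment.strftime("%Y%m%d")


print(target_day_partition("RECORD_TIME", 1704067200000))
```

With RECORD_TIME, a record produced just before midnight UTC lands in the previous day's partition even if it is written later.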

topic2table.map

Map of topics to tables (optional). The required format is comma-separated tuples, for example <topic-1>:<table-1>,<topic-2>:<table-2>,… Do not modify a topic name with a regex SMT while using this option. If this property is used, sanitize.topics is ignored. Also, if the topic-to-table map doesn’t contain the topic for a record, the connector creates a table with the same name as the topic.

Type: string

Default: “”

Importance: medium
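The tuple format and the fallback to the topic name can be sketched as follows. Both helpers are hypothetical; splitting each tuple on its first colon is an assumption about how the value is parsed.

```python
from typing import Dict


def parse_topic2table_map(value: str) -> Dict[str, str]:
    """Parse '<topic-1>:<table-1>,<topic-2>:<table-2>' into a dict (sketch)."""
    mapping: Dict[str, str] = {}
    for entry in (part.strip() for part in value.split(",")):
        if not entry:
            continue  # tolerate the empty default and stray commas
        topic, sep, table = entry.partition(":")
        if not sep or not topic or not table:
            raise ValueError(f"expected <topic>:<table>, got {entry!r}")
        mapping[topic] = table
    return mapping


def table_for(topic: str, mapping: Dict[str, str]) -> str:
    # Topics missing from the map get a table named after the topic.
    return mapping.get(topic, topic)


mapping = parse_topic2table_map("orders:orders_v2,payments:payments_raw")
print(table_for("orders", mapping), table_for("clicks", mapping))
```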

auto.create.tables

Designates whether or not to automatically create BigQuery tables. Note: Supports AVRO, JSON_SR, and PROTOBUF message formats only.

Type: boolean

Default: false

Importance: high

auto.update.schemas

Designates whether or not to automatically update BigQuery schemas. New fields in record schemas must be nullable. Note: Supports AVRO, JSON_SR, and PROTOBUF message formats only.

Type: boolean

Default: false

Importance: high

sanitize.topics

Designates whether to automatically sanitize topic names before using them as table names in BigQuery. If not enabled, topic names are used as table names.

Type: boolean

Default: true

Importance: high

sanitize.field.names

Whether to automatically sanitize field names before using them as field names in BigQuery. BigQuery specifies that field names can only contain letters, numbers, and underscores. The sanitizer replaces invalid symbols with underscores, and if a field name starts with a digit, it adds an underscore in front of the field name. Caution: Key duplication errors can occur if different fields are named, for instance, a.b and a_b, because after sanitization both become a_b.

Type: boolean

Default: false

Importance: high
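The sanitization rules above, and the collision they can cause, can be approximated in a few lines. This sketch assumes ASCII letters only and is not the connector's actual sanitizer; both helpers are hypothetical.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable, List

# Anything other than an ASCII letter, digit, or underscore is invalid (assumption).
_INVALID = re.compile(r"[^A-Za-z0-9_]")


def sanitize_field_name(name: str) -> str:
    sanitized = _INVALID.sub("_", name)  # replace invalid symbols with underscores
    if sanitized[:1].isdigit():
        sanitized = "_" + sanitized  # a leading digit gets an underscore prefix
    return sanitized


def find_collisions(names: Iterable[str]) -> Dict[str, List[str]]:
    """Group original field names that sanitize to the same BigQuery name."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for name in names:
        groups[sanitize_field_name(name)].append(name)
    return {key: originals for key, originals in groups.items() if len(originals) > 1}


print(find_collisions(["a.b", "a_b", "1st-place"]))
```

Running a check like this over a record schema before enabling sanitize.field.names can surface the key duplication problem described above.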

time.partitioning.type

The time partitioning type to use when creating new tables with partitioning.type set to INGESTION_TIME, RECORD_TIME, or TIMESTAMP_COLUMN. Existing tables are not altered to use this partitioning type.

Type: string

Default: DAY

Importance: low

timestamp.partition.field.name

The name of the field in the value that contains the timestamp to partition by in BigQuery. This enables timestamp partitioning for tables. The connector ignores this property if partitioning.type is not TIMESTAMP_COLUMN or if auto.create.tables is false.

Type: string

Importance: low
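Because this property is silently ignored unless two other settings line up, a small check can make the dependency explicit. effective_timestamp_field is a hypothetical helper; accepting boolean values as strings like "true" is an assumption about how the config is supplied.

```python
from typing import Optional


def _is_true(value) -> bool:
    # Connector configs often carry booleans as strings such as "true".
    return str(value).strip().lower() == "true"


def effective_timestamp_field(config: dict) -> Optional[str]:
    """Return timestamp.partition.field.name only when the connector would use it."""
    if config.get("partitioning.type", "INGESTION_TIME") != "TIMESTAMP_COLUMN":
        return None
    if not _is_true(config.get("auto.create.tables", "false")):
        return None
    return config.get("timestamp.partition.field.name")


print(effective_timestamp_field({
    "partitioning.type": "TIMESTAMP_COLUMN",
    "auto.create.tables": "true",
    "timestamp.partition.field.name": "event_time",
}))
```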

allow.schema.unionization

If enabled, when performing schema updates, the connector combines record schemas with the current schema of the BigQuery table. This can be useful if there are Kafka records with schemas that are missing fields that correspond to columns already present in the BigQuery table schema. Use caution when enabling this: if unrelated records are produced to a topic consumed by the connector, the connector does not fail on the invalid data but instead appends the fields of the unrelated record schema to the BigQuery table schema.

Type: boolean

Default: false

Importance: low
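Schema unionization can be pictured as a merge of two field lists. In this simplified sketch a schema is a mapping of field name to BigQuery mode; real schemas also carry types and nesting, and the unionize helper is hypothetical rather than the connector's implementation.

```python
from typing import Dict


def unionize(table_schema: Dict[str, str], record_schema: Dict[str, str]) -> Dict[str, str]:
    """Combine a table schema with a record schema (field name -> BigQuery mode)."""
    merged = dict(table_schema)  # existing columns are kept, in their original order
    for field, mode in record_schema.items():
        merged.setdefault(field, mode)  # fields the table lacks are appended
    return merged


table = {"id": "REQUIRED", "name": "NULLABLE"}
# A record missing "name" no longer drops that column from the update.
print(unionize(table, {"id": "REQUIRED", "email": "NULLABLE"}))
# An unrelated record also succeeds, and its field is appended (the caution above).
print(unionize(table, {"sku": "NULLABLE"}))
```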

all.bq.fields.nullable

If true, no fields in any produced BigQuery schema are required. All non-nullable Avro fields are translated as NULLABLE (or REPEATED, if arrays).

Type: boolean

Default: false

Importance: low
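The effect of all.bq.fields.nullable on field modes can be sketched as a simple mapping. relax_modes is a hypothetical helper operating on a field-name to BigQuery-mode dictionary.

```python
from typing import Dict


def relax_modes(schema: Dict[str, str], all_fields_nullable: bool) -> Dict[str, str]:
    """Apply all.bq.fields.nullable to a field-name -> BigQuery-mode mapping (sketch)."""
    if not all_fields_nullable:
        return dict(schema)
    # REQUIRED becomes NULLABLE; REPEATED (arrays) and NULLABLE are left unchanged.
    return {name: "NULLABLE" if mode == "REQUIRED" else mode for name, mode in schema.items()}


print(relax_modes({"id": "REQUIRED", "tags": "REPEATED", "note": "NULLABLE"}, True))
```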

convert.double.special.values

Designates whether +Infinity is converted to Double.MAX_VALUE and whether -Infinity and NaN are converted to Double.MIN_VALUE to ensure successful delivery to BigQuery.

Type: boolean

Default: false

Importance: low
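The conversion can be mirrored in Python to see the resulting values. Note that Java's Double.MIN_VALUE is the smallest positive double (about 4.9e-324), not the most negative one, so -Infinity and NaN become a tiny positive number. This is a sketch of the documented behavior, not the connector's code.

```python
import math
import sys

JAVA_DOUBLE_MAX_VALUE = sys.float_info.max  # same value as Java's Double.MAX_VALUE
JAVA_DOUBLE_MIN_VALUE = 5e-324  # Java's Double.MIN_VALUE: smallest positive double


def convert_double_special_value(value: float) -> float:
    """Replace values BigQuery cannot accept, as described for this property."""
    if value == math.inf:
        return JAVA_DOUBLE_MAX_VALUE
    if value == -math.inf or math.isnan(value):
        return JAVA_DOUBLE_MIN_VALUE
    return value  # ordinary values pass through unchanged


print(convert_double_special_value(float("-inf")))
```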

Consumer configuration

max.poll.interval.ms

The maximum delay between subsequent consume requests to Kafka. You can use this property to improve the performance of the connector if the connector cannot send records to the sink system. Defaults to 300000 milliseconds (5 minutes).

Type: long

Default: 300000 (5 minutes)

Valid Values: [60000,…,1800000] for non-dedicated clusters and [60000,…] for dedicated clusters

Importance: low

max.poll.records

The maximum number of records to consume from Kafka in a single request. You can use this property to improve the performance of the connector if the connector cannot send records to the sink system. Defaults to 500 records.

Type: long

Default: 500

Valid Values: [1,…,500] for non-dedicated clusters and [1,…] for dedicated clusters

Importance: low
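The valid ranges for the two consumer properties depend on the cluster type. This sketch checks overrides against the ranges listed above; check_consumer_overrides is a hypothetical helper.

```python
from typing import List


def check_consumer_overrides(config: dict, dedicated_cluster: bool) -> List[str]:
    """Check max.poll.* against the ranges listed on this page (sketch)."""
    problems: List[str] = []
    interval = int(config.get("max.poll.interval.ms", 300000))
    records = int(config.get("max.poll.records", 500))
    # Lower bounds apply to every cluster; upper bounds only to non-dedicated ones.
    if interval < 60000 or (not dedicated_cluster and interval > 1800000):
        problems.append(f"max.poll.interval.ms out of range: {interval}")
    if records < 1 or (not dedicated_cluster and records > 500):
        problems.append(f"max.poll.records out of range: {records}")
    return problems


print(check_consumer_overrides({"max.poll.records": "1000"}, dedicated_cluster=False))
```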

Additional Configs

key.converter.use.schema.guid

The schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema GUID to be used for deserializing message keys. Only applicable when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: string

Importance: low

key.converter.use.schema.id

The schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema ID to be used for deserializing message keys. Only applicable when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: int

Importance: low

value.converter.connect.meta.data

Allow the Connect converter to add its metadata to the output schema. Applicable for Avro converters.

Type: boolean

Importance: low

value.converter.use.schema.guid

The schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema GUID to be used for deserializing message values. Only applicable when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: string

Importance: low

value.converter.use.schema.id

The schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema ID to be used for deserializing message values. Only applicable when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: int

Importance: low

errors.tolerance

Use this property to configure the connector’s error handling behavior. Warning: Use this property with caution for source connectors, as it may lead to data loss. If you set this property to ‘all’, the connector does not fail on errant records; instead, it logs them (and, for sink connectors, sends them to the DLQ) and continues processing. If you set this property to ‘none’, the connector task fails on errant records.

Type: string

Default: all

Importance: low
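The two settings can be contrasted with a toy sink loop. In this sketch, deliver and write_to_bigquery are hypothetical, ValueError stands in for any per-record failure, and the returned list plays the role of the DLQ.

```python
import logging
from typing import Callable, Iterable, List, Tuple


def deliver(records: Iterable[dict], write: Callable[[dict], None],
            tolerance: str = "all") -> Tuple[int, List[dict]]:
    """Simplified model of errors.tolerance in a sink task (hypothetical helper)."""
    if tolerance not in ("all", "none"):
        raise ValueError(f"unsupported errors.tolerance: {tolerance}")
    written, dlq = 0, []
    for record in records:
        try:
            write(record)
            written += 1
        except ValueError as err:  # stand-in for any per-record failure
            if tolerance == "none":
                raise  # the task fails on the first errant record
            logging.warning("errant record %r: %s", record, err)
            dlq.append({"record": record, "error": str(err)})
    return written, dlq


def write_to_bigquery(record: dict) -> None:
    # Hypothetical writer that rejects records without an "id" field.
    if "id" not in record:
        raise ValueError("missing id")


print(deliver([{"id": 1}, {"x": 2}, {"id": 3}], write_to_bigquery))
```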

key.converter.key.schema.id.deserializer

The class name of the schema ID deserializer for keys. This is used to deserialize schema IDs from the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.DualSchemaIdDeserializer

Importance: low

key.converter.key.subject.name.strategy

How to construct the subject name for key schema registration.

Type: string

Default: TopicNameStrategy

Importance: low

value.converter.decimal.format

Specify the JSON/JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals:

BASE64 to serialize DECIMAL logical types as base64 encoded binary data and

NUMERIC to serialize Connect DECIMAL logical type values in JSON/JSON_SR as a number representing the decimal value.

Type: string

Default: BASE64

Importance: low
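To see what the two literals produce, the sketch below encodes the same value both ways. It relies on Kafka Connect representing DECIMAL as the big-endian two's-complement bytes of the unscaled integer; encode_decimal is a hypothetical helper, not the converter's code.

```python
import base64
from decimal import Decimal


def encode_decimal(value: Decimal, scale: int, decimal_format: str = "BASE64") -> str:
    """Return the JSON text for a Connect DECIMAL value (simplified sketch)."""
    if decimal_format == "NUMERIC":
        # A JSON number representing the decimal value.
        return str(value)
    if decimal_format == "BASE64":
        # The unscaled integer is value * 10**scale.
        unscaled = int(value.scaleb(scale))
        # Minimal signed length, matching Java's BigInteger.toByteArray().
        bits = (~unscaled if unscaled < 0 else unscaled).bit_length()
        raw = unscaled.to_bytes(bits // 8 + 1, byteorder="big", signed=True)
        # The bytes are emitted as a base64-encoded JSON string.
        return '"' + base64.b64encode(raw).decode("ascii") + '"'
    raise ValueError(f"unknown decimal.format: {decimal_format}")


print(encode_decimal(Decimal("12.34"), 2), encode_decimal(Decimal("12.34"), 2, "NUMERIC"))
```

NUMERIC output is readable by downstream JSON consumers without knowing the scale, whereas BASE64 output requires the schema's scale to decode.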

value.converter.ignore.default.for.nullables

When set to true, this property ensures that the corresponding record in Kafka is NULL, instead of showing the default column value. Applicable for AVRO, PROTOBUF, and JSON_SR converters.

Type: boolean

Default: false

Importance: low

value.converter.reference.subject.name.strategy

Set the subject reference name strategy for value. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. Note that the subject reference name strategy can be selected only for PROTOBUF format with the default strategy being DefaultReferenceSubjectNameStrategy.

Type: string

Default: DefaultReferenceSubjectNameStrategy

Importance: low

value.converter.replace.null.with.default

Whether to replace null fields that have a default value with that default value. When set to true, the default value is used; otherwise, null is used. Applicable for JSON converter.

Type: boolean

Default: true

Importance: low
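The effect of this flag on a single record can be sketched as follows. Field defaults are modeled as a plain dictionary, which is an assumption for illustration; in practice they come from the record schema. apply_defaults is a hypothetical helper.

```python
from typing import Any, Dict


def apply_defaults(payload: Dict[str, Any], defaults: Dict[str, Any],
                   replace_null_with_default: bool = True) -> Dict[str, Any]:
    """Model value.converter.replace.null.with.default for one record (sketch)."""
    if not replace_null_with_default:
        return dict(payload)  # nulls are kept as null
    # Only null fields that actually declare a default are replaced.
    return {
        field: defaults[field] if value is None and field in defaults else value
        for field, value in payload.items()
    }


print(apply_defaults({"id": 1, "status": None}, {"status": "NEW"}))
```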

value.converter.schemas.enable

Include schemas within each of the serialized values. Input messages must contain schema and payload fields and may not contain additional fields. For plain JSON data, set this to false. Applicable for JSON converter.

Type: boolean

Default: false

Importance: low
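With schemas enabled, each message is an envelope with exactly a schema field and a payload field, following Kafka Connect's JsonConverter convention. The sketch below shows both message shapes; extract_value is a hypothetical helper, and the schema shown is a minimal example.

```python
import json
from typing import Any


def extract_value(message: str, schemas_enable: bool) -> Any:
    """Return the record value from a JSON message (sketch of schemas.enable)."""
    data = json.loads(message)
    if not schemas_enable:
        return data  # plain JSON: the whole message is the value
    # With schemas enabled, exactly two top-level fields are allowed.
    if not isinstance(data, dict) or set(data) != {"schema", "payload"}:
        raise ValueError("expected exactly the 'schema' and 'payload' fields")
    return data["payload"]


enveloped = json.dumps({
    "schema": {
        "type": "struct",
        "optional": False,
        "fields": [{"field": "name", "type": "string", "optional": False}],
    },
    "payload": {"name": "alice"},
})
print(extract_value(enveloped, schemas_enable=True))
```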

value.converter.value.schema.id.deserializer

The class name of the schema ID deserializer for values. This is used to deserialize schema IDs from the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.DualSchemaIdDeserializer

Importance: low

value.converter.value.subject.name.strategy

Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Type: string

Default: TopicNameStrategy

Importance: low
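The default TopicNameStrategy for both key.converter.key.subject.name.strategy and value.converter.value.subject.name.strategy derives the subject from the topic name. The sketch also covers Schema Registry's RecordNameStrategy and TopicRecordNameStrategy for comparison; whether this connector accepts those two values is an assumption, since this page names only the default. subject_name is a hypothetical helper.

```python
def subject_name(strategy: str, topic: str, is_key: bool, record_name: str = "") -> str:
    """Build a Schema Registry subject name for a key or value schema (sketch)."""
    if strategy == "TopicNameStrategy":
        # Default on this page: <topic>-key or <topic>-value.
        return f"{topic}-{'key' if is_key else 'value'}"
    if strategy == "RecordNameStrategy":
        # Fully qualified record name, independent of the topic.
        return record_name
    if strategy == "TopicRecordNameStrategy":
        return f"{topic}-{record_name}"
    raise ValueError(f"unknown subject name strategy: {strategy}")


print(subject_name("TopicNameStrategy", "orders", is_key=False))
```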

Number of tasks for this connector

tasks.max

Maximum number of tasks for the connector.

Type: int

Valid Values: [1,…]

Importance: high

Auto-restart policy

auto.restart.on.user.error

Enable connector to automatically restart on user-actionable errors.

Type: boolean

Default: true

Importance: medium

Next Steps

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud for Apache Flink, see the Cloud ETL Demo. This example also shows how to use Confluent CLI to manage your resources in Confluent Cloud.