Azure Cognitive Search Sink Connector for Confluent Cloud

The fully-managed Azure Cognitive Search Sink connector for Confluent Cloud can move data from Apache Kafka® to Azure Cognitive Search. The connector writes each event from a Kafka topic (as a document) to an index in Azure Cognitive Search. The connector uses the Azure Cognitive Search REST API to send records as documents.

Confluent Cloud is available through Azure Marketplace or directly from Confluent.

Note

This Quick Start is for the fully-managed Confluent Cloud connector. If you are installing the connector locally for Confluent Platform, see Azure Cognitive Search Sink connector for Confluent Platform.

If you require private networking for fully-managed connectors, make sure to set up the proper networking beforehand. For more information, see Manage Networking for Confluent Cloud Connectors.

Features

The Azure Cognitive Search Sink connector supports the following features:

At least once delivery: This connector guarantees that records from the Kafka topic are delivered at least once.

Supports multiple tasks: The connector supports running one or more tasks. More tasks may improve performance.

Ordered writes: The connector writes records in exactly the same order that it receives them. For uniqueness, you can use the Kafka coordinates (topic, partition, and offset) as the document key. Otherwise, the connector uses the record key as the document key.

Automatically creates topics: The following three topics are automatically created when the connector starts:

Success topic

Error topic

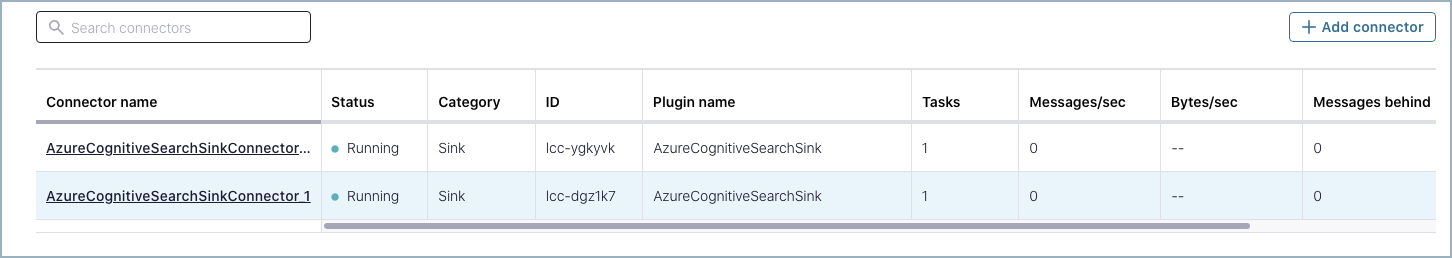

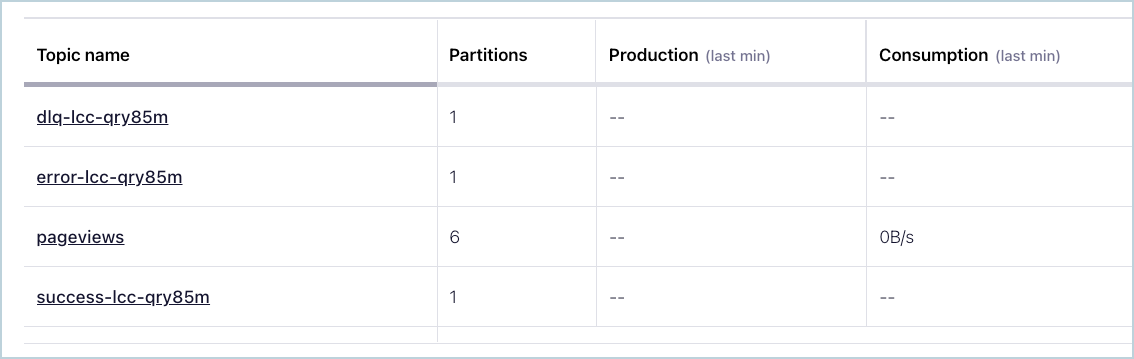

The suffix for each topic name is the connector’s logical ID. In the example below, there are the three connector topics and one pre-existing Kafka topic named pageviews.

Connector Topics

If the records sent to the topic are not in the correct format, or if important fields are missing in the record, the errors are recorded in the error topic, and the connector continues to run.

Automatic retries: The connector will retry all requests (that can be retried) when the Azure Cognitive Search service is unavailable. The maximum amount of time that the connector spends retrying can be specified by the max.retry.ms configuration property.

Supported data formats: The connector supports Avro, JSON Schema (JSON-SR), and Protobuf input formats. Schema Registry must be enabled to use these Schema Registry-based formats.
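The time-bounded retry behavior listed under Automatic retries can be pictured with a minimal sketch. This is not the connector's implementation: the `ServiceUnavailable` exception, the `send_with_retries` helper, and the doubling backoff are all assumptions made for illustration. Only the idea of a total retry budget in milliseconds (max.retry.ms) comes from this page.

```python
import time


class ServiceUnavailable(Exception):
    """Hypothetical error standing in for a retriable 'service unavailable' response."""


def send_with_retries(send, max_retry_ms, base_delay_ms=100,
                      sleep=time.sleep, clock=time.monotonic):
    """Call send() until it succeeds or the max_retry_ms budget is used up.

    The doubling delay is an assumption; the page does not state how the
    connector spaces its retries.
    """
    deadline = clock() + max_retry_ms / 1000.0
    delay_ms = base_delay_ms
    while True:
        try:
            return send()
        except ServiceUnavailable:
            remaining = deadline - clock()
            if remaining <= 0:
                # Retry budget exhausted: give up and surface the error.
                raise
            sleep(min(delay_ms / 1000.0, remaining))
            delay_ms *= 2


if __name__ == "__main__":
    attempts = {"count": 0}

    def flaky_request():
        # Fails twice, then succeeds.
        attempts["count"] += 1
        if attempts["count"] < 3:
            raise ServiceUnavailable()
        return "indexed"

    print(send_with_retries(flaky_request, max_retry_ms=300000, base_delay_ms=1))
```

With a budget of 0 the first failure is raised immediately, which mirrors setting the retry time to its minimum valid value.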

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Limitations

Be sure to review the following information.

For connector limitations, see Azure Cognitive Search Sink Connector limitations.

If you plan to use one or more Single Message Transforms (SMTs), see SMT Limitations.

Azure service principal

You need an Azure RBAC service principal to run the connector. When you create the service principal, the output from the Azure CLI command provides the necessary authentication and authorization details that you add to the connector configuration.

Note

If you want to assign roles to an existing service principal using the Azure portal instead of the CLI, see Assign Azure roles using the Azure portal.

Complete the following steps to create the service principal using the Azure CLI.

Log in to the Azure CLI.

az login

Enter the following command to create the service principal:

az ad sp create-for-rbac --name <Name of service principal> --scopes \
/subscriptions/<SubscriptionID>/resourceGroups/<Resource_Group>

For example:

az ad sp create-for-rbac --name azure_search --scopes \
/subscriptions/d92eeba4-...omitted...-37c2bd9259d0/resourceGroups/connect-azure

Creating 'Contributor' role assignment under scope '/subscriptions/d92eeba4-...omitted...-37c2bd9259d0/resourceGroups/connect-azure'
The output includes credentials that you must protect. Be sure that you do not include these credentials in your code or check the credentials into your source control.
{
  "appId": "8ec186f9-...omitted...-e575b928b00a",
  "displayName": "azure_search",
  "name": "8ec186f9-...omitted...-e575b928b00a",
  "password": "jdGzGTwCKQ...omitted...QwE3hx",
  "tenant": "0893715b-...omitted...-2789e1ead045"
}

Save the following details to use in the connector configuration:

Use the "appId" output for the connector UI field named Azure Client ID (CLI property azure.search.client.id).

Use the "password" output for the connector UI field named Azure Client Secret (CLI property azure.search.client.secret).

Use the "tenant" output for the connector UI field named Azure Tenant ID (CLI property azure.search.tenant.id).

Tip

You can create a more granular service principal if needed. For example, the following command scopes the role assignment to a specific Azure search service and sets the assigned role with the --role option.

az ad sp create-for-rbac --name <Name of service principal> --scopes \
/subscriptions/<SubscriptionID>/resourceGroups/<Resource Group>/providers/Microsoft.Search/searchServices/<Search Service Name> --role Reader
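The mapping from the service principal output to connector properties described in this section can be sketched in a few lines of Python. The helper name `service_principal_to_properties` is hypothetical, and the sample values are placeholders. The three field-to-property pairs are the ones listed above.

```python
import json

# Output field of `az ad sp create-for-rbac` -> connector CLI property,
# as listed in this section.
SP_FIELD_TO_PROPERTY = {
    "appId": "azure.search.client.id",
    "password": "azure.search.client.secret",
    "tenant": "azure.search.tenant.id",
}


def service_principal_to_properties(cli_output: str) -> dict:
    """Turn the JSON printed by the Azure CLI into connector properties."""
    principal = json.loads(cli_output)
    missing = [field for field in SP_FIELD_TO_PROPERTY if field not in principal]
    if missing:
        raise ValueError(f"service principal output is missing: {', '.join(missing)}")
    return {prop: principal[field] for field, prop in SP_FIELD_TO_PROPERTY.items()}


if __name__ == "__main__":
    # Placeholder values only; never hard-code real credentials.
    sample = json.dumps({
        "appId": "<client_id>",
        "displayName": "azure_search",
        "password": "<client_secret>",
        "tenant": "<tenant_id>",
    })
    for name, value in service_principal_to_properties(sample).items():
        print(f"{name} = {value}")
```

Because the password field is a credential, a real script would read the CLI output from a protected location rather than embed it in source.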

Quick Start

Use this quick start to get up and running with the Confluent Cloud Azure Cognitive Search Sink connector. The quick start provides the basics of selecting the connector and configuring it to stream events.

Prerequisites

Authorized access to a Confluent Cloud cluster on Microsoft Azure (Azure).

An Azure service principal, an Azure Cognitive Search API key, and subscription details for the connector configuration.

The Confluent CLI installed and configured for the cluster. See Install the Confluent CLI.

Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

At least one index must exist in Azure Cognitive Search.

All record schema fields must be present as Azure search service index fields.

At least one source Kafka topic must exist in your Confluent Cloud cluster before creating the sink connector.
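The prerequisite that every record schema field must exist as an index field can be checked ahead of time with a simple set comparison. The following is a minimal sketch: the function name and the field names are hypothetical, and it assumes field names must match exactly, including case.

```python
def missing_index_fields(record_fields, index_fields):
    """Return the record schema fields that have no matching index field."""
    return sorted(set(record_fields) - set(index_fields))


if __name__ == "__main__":
    # Hypothetical field names for illustration.
    record_schema_fields = ["HotelId", "HotelName", "Rating"]
    search_index_fields = ["HotelId", "HotelName"]
    print(missing_index_fields(record_schema_fields, search_index_fields))
```

An empty result means the record schema is covered by the index. Any names returned would need to be added to the index or dropped from the record before the connector runs.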

Using the Confluent Cloud Console

Step 1: Launch your Confluent Cloud cluster

To create and launch a Kafka cluster in Confluent Cloud, see Create a Kafka cluster in Confluent Cloud.

Step 2: Add a connector

In the left navigation menu, click Connectors. If you already have connectors in your cluster, click + Add connector.

Step 3: Select your connector

Click the Azure Cognitive Search Sink connector card.

Step 4: Enter the connector details

Note

Ensure you have all your prerequisites completed.

An asterisk ( * ) designates a required entry.

At the Add Azure Cognitive Search Sink Connector screen, complete the following:

If you’ve already populated your Kafka topics, select the topics you want to connect from the Topics list.

To create a new topic, click +Add new topic.

Select the way you want to provide Kafka Cluster credentials. You can choose one of the following options:

My account: This setting allows your connector to globally access everything that you have access to. With a user account, the connector uses an API key and secret to access the Kafka cluster. This option is not recommended for production.

Service account: This setting limits the access for your connector by using a service account. This option is recommended for production.

Use an existing API key: This setting allows you to specify an API key and a secret pair. You can use an existing pair or create a new one. This method is not recommended for production environments.

Note

Freight clusters support only service accounts for Kafka authentication.

Click Continue.

Configure the authentication properties:

Azure Search Service Name: The name of the Azure Search service.

Azure Search Api Key: The API key for the Azure Search service.

Azure Client ID: Client ID of service principal of your subscription.

Azure Client Secret: Client secret of service principal of your subscription.

Azure Tenant ID: Tenant ID of service principal of your subscription.

Azure Subscription ID: Azure subscription ID for your Azure account.

ResourceGroup Name: The ResourceGroup in which the Azure Search service exists.

Click Continue.

Note

Configuration properties that are not shown in the Cloud Console use the default values. See Configuration Properties for all property values and descriptions.

Input Kafka record value format: Select an input Kafka record value format (data coming from the Kafka topic). Valid entries are AVRO, JSON_SR (JSON Schema), or PROTOBUF. A valid schema must be available in Schema Registry to use a schema-based message format (for example, AVRO, JSON_SR, or PROTOBUF).

Index Name Pattern: Enter the index name pattern, which is the name of the index to write records to as documents. Use ${topic} within the pattern to specify the topic of the record.

Show advanced configurations

Write Method: The method used to write Kafka records to an index. Available methods are Upload, which functions like upsert, and MergeOrUpload, which updates an existing document with the specified fields. If the document doesn’t exist, MergeOrUpload behaves like Upload.

Delete Enabled: Whether documents will be deleted if the record value is null.

Key Mode: Determines what will be used for the document key id. The available modes are:

KEY: The Kafka record key is used as the document key.

COORDINATES: The Kafka coordinates (topic, partition, and offset) are concatenated to form the document key. This allows for unique document keys.

Max Batch Size: The maximum number of Kafka records that will be sent per request. To disable batching of records, set this value to 1.

Maximum Retry Time (ms): The maximum amount of time in milliseconds that the connector will attempt its request before aborting it.
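The Index Name Pattern and Key Mode settings above can be illustrated with a short sketch. Both helper functions are hypothetical. The separator used to join the Kafka coordinates is also an assumption, since this page says only that they are concatenated.

```python
def resolve_index_name(pattern: str, topic: str) -> str:
    """Substitute the record's topic for ${topic} in the index name pattern."""
    return pattern.replace("${topic}", topic)


def document_key(key_mode: str, topic: str, partition: int, offset: int,
                 record_key=None, separator: str = "_") -> str:
    """Derive a document key for one record.

    separator is an assumption: the page does not state how the
    coordinates are joined.
    """
    if key_mode == "KEY":
        if record_key is None:
            raise ValueError("KEY mode needs a non-null record key")
        return str(record_key)
    if key_mode == "COORDINATES":
        return separator.join([topic, str(partition), str(offset)])
    raise ValueError(f"unknown key mode: {key_mode}")


if __name__ == "__main__":
    print(resolve_index_name("${topic}-index", "pageviews"))
    print(document_key("COORDINATES", "pageviews", 0, 42))
    print(document_key("KEY", "pageviews", 0, 42, record_key="user-17"))
```

COORDINATES yields a distinct key for every record, so each record becomes a new document. KEY lets later records with the same key overwrite or merge into an existing document, depending on the write method.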

Auto-restart policy

Enable Connector Auto-restart: Enables the auto-restart behavior of the connector and its task in the event of user-actionable errors. Defaults to true, enabling the connector to automatically restart in case of user-actionable errors. Set this property to false to disable auto-restart for failed connectors. In such cases, you would need to manually restart the connector.

Additional Configs

Value Converter Decimal Format: Specifies the JSON or JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals: BASE64 to serialize DECIMAL logical types as base64 encoded binary data, and NUMERIC to serialize DECIMAL logical type values in JSON or JSON_SR as a number representing the decimal value.

Schema GUID For Key Converter: Sets the schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This lets you specify a fixed schema GUID for deserializing message keys. This property is applicable only when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Value Converter Schema ID Deserializer: Sets the class name of the schema ID deserializer for values. The deserializer reads schema IDs from message headers.

Schema GUID For Value Converter: Sets the schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This lets you specify a fixed schema GUID for deserializing message values. This property is applicable only when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Value Converter Reference Subject Name Strategy: Sets the subject reference name strategy for values. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. You can use this strategy only with PROTOBUF format; the default strategy is DefaultReferenceSubjectNameStrategy.

Schema ID For Value Converter: Sets the schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This lets you specify a fixed schema ID for deserializing message values. This property is applicable only when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Value Converter Connect Meta Data: Enables the Connect converter to add its metadata to the output schema. Applies to Avro converters.

Value Converter Value Subject Name Strategy: Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Key Converter Key Subject Name Strategy: Determines how to construct the subject name for key schema registration.

Key Converter Schema ID Deserializer: Sets the class name of the schema ID deserializer for keys. The deserializer reads schema IDs from message headers.

Schema ID For Key Converter: Sets the schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This lets you specify a fixed schema ID for deserializing message keys. This property is applicable only when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Schema Config

Schema context: Select a schema context to use for this connector, if using a schema-based data format. This property defaults to the Default context, which configures the connector to use the default schema set up for Schema Registry in your Confluent Cloud environment. A schema context allows you to use separate schemas (like schema sub-registries) tied to topics in different Kafka clusters that share the same Schema Registry environment. For example, if you select a non-default context, a Source connector uses only that schema context to register a schema and a Sink connector uses only that schema context to read from. For more information about setting up a schema context, see What are schema contexts and when should you use them?.

Consumer configuration

Max poll interval(ms): Sets the maximum delay between subsequent consume requests to Kafka. Use this property to improve connector performance in cases when the connector cannot send records to the sink system. The default is 300,000 milliseconds (5 minutes).

Max poll records: Sets the maximum number of records to consume from Kafka in a single request. Use this property to improve connector performance in cases when the connector cannot send records to the sink system. The default is 500 records.

Transforms

Single Message Transforms: To add a new SMT, see Add transforms. For more information about unsupported SMTs, see Unsupported transformations.

Processing position

Set offsets: Click Set offsets to define a specific offset for this connector to begin processing data from. For more information on managing offsets, see Manage offsets.

Click Continue.

Based on the number of topic partitions you select, you will be provided with a recommended number of tasks.

To change the number of recommended tasks, enter the number of tasks for the connector to use in the Tasks field.

Click Continue.

Step 5: Check for documents.

Verify that documents are populating the search index.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Tip

When you launch a connector, a Dead Letter Queue topic is automatically created. See View Connector Dead Letter Queue Errors in Confluent Cloud for details.

Using the Confluent CLI

Complete the following steps to set up and run the connector using the Confluent CLI.

Note

Make sure you have all your prerequisites completed.

Step 1: List the available connectors

Enter the following command to list available connectors:

confluent connect plugin list

Step 2: List the connector configuration properties

Enter the following command to show the connector configuration properties:

confluent connect plugin describe <connector-plugin-name>

The command output shows the required and optional configuration properties.

Step 3: Create the connector configuration file

Create a JSON file that contains the connector configuration properties. The following example shows the required connector properties.

{
  "connector.class": "AzureCognitiveSearchSink",
  "input.data.format": "AVRO",
  "name": "AzureCognitiveSearchSink_0",
  "kafka.api.key": "****************",
  "kafka.api.secret": "************************************************",
  "azure.search.service.name": "<service_name>",
  "azure.search.api.key": "<api_key>",
  "azure.search.client.id": "<client_id>",
  "azure.search.client.secret": "<client_secret>",
  "azure.search.tenant.id": "<tenant_id>",
  "azure.search.subscription.id": "<subscription_id>",
  "azure.search.resourcegroup.name": "<resource_group>",
  "index.name": "<index_name>",
  "tasks.max": "1",
  "topics": "<topic_name>"
}

Note the following property definitions:

"connector.class": Identifies the connector plugin name.

"input.data.format": Sets the input Kafka record value format (data coming from the Kafka topic). Valid entries are AVRO, JSON_SR, and PROTOBUF. You must have Confluent Cloud Schema Registry configured if using a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

"name": Sets a name for your new connector.

"kafka.api.key" and "kafka.api.secret": These credentials are either the cluster API key and secret or the service account API key and secret.

azure.search.<...>: Required Azure and Azure search connection details. See Azure service principal and Azure Cognitive Search API key for property details.

"index.name": The name of the search index to write records to (as documents).

"tasks.max": Enter the maximum number of tasks for the connector to use. More tasks may improve performance.

"topics": Enter the topic name or a comma-separated list of topic names.

Single Message Transforms: See the Single Message Transforms (SMT) documentation for details about adding SMTs using the CLI. See Unsupported transformations for a list of SMTs that are not supported with this connector.

See Configuration Properties for all property values and descriptions.
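Before loading the file, it can help to confirm that every property from the example above is present. The following is a minimal sketch of such a check. The function name `check_config` is hypothetical, and the rules it applies are only those stated on this page: the property names in the example, the three valid input formats, and the 64-character limit on the connector name.

```python
import json

# Property names taken from the example configuration above.
REQUIRED_PROPERTIES = (
    "connector.class", "input.data.format", "name",
    "kafka.api.key", "kafka.api.secret",
    "azure.search.service.name", "azure.search.api.key",
    "azure.search.client.id", "azure.search.client.secret",
    "azure.search.tenant.id", "azure.search.subscription.id",
    "azure.search.resourcegroup.name",
    "index.name", "tasks.max", "topics",
)
VALID_FORMATS = {"AVRO", "JSON_SR", "PROTOBUF"}


def check_config(config: dict) -> list:
    """Return a list of problems found; an empty list means none were found."""
    problems = [f"missing property: {name}"
                for name in REQUIRED_PROPERTIES if not config.get(name)]
    data_format = config.get("input.data.format")
    if data_format and data_format not in VALID_FORMATS:
        problems.append(f"unsupported input.data.format: {data_format}")
    if len(config.get("name", "")) > 64:
        problems.append("name is longer than 64 characters")
    return problems


if __name__ == "__main__":
    # Placeholder values; replace them with your own details.
    config = {name: "<value>" for name in REQUIRED_PROPERTIES}
    config.update({
        "connector.class": "AzureCognitiveSearchSink",
        "input.data.format": "AVRO",
        "name": "AzureCognitiveSearchSink_0",
        "tasks.max": "1",
    })
    print(check_config(config) or "no problems found")
    print(json.dumps(config, indent=2))
```

The check is local only. Confluent Cloud still validates the Azure details when the connector is created.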

Step 4: Load the properties file and create the connector

Enter the following command to load the configuration and start the connector:

confluent connect cluster create --config-file <file-name>.json

For example:

confluent connect cluster create --config-file azure-search-sink-config.json

Example output:

Created connector AzureCognitiveSearchSink_0 lcc-do6vzd

Step 5: Check the connector status

Enter the following command to check the connector status:

confluent connect cluster list

Example output:

     ID      |             Name             | Status  | Type | Trace
+------------+------------------------------+---------+------+-------+
  lcc-do6vzd | AzureCognitiveSearchSink_0   | RUNNING | sink |       |
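If you want to check the status from a script, the table above can be parsed with a few lines of Python. This sketch assumes the pipe-delimited layout shown in the example output, which may differ between CLI versions. The `parse_connector_list` helper is hypothetical.

```python
def parse_connector_list(output: str) -> list:
    """Parse the pipe-delimited table into one dict per connector row."""
    header = None
    rows = []
    for line in output.splitlines():
        line = line.strip()
        # Skip blank lines and the +----+ separator row.
        if not line or set(line) <= set("+-"):
            continue
        cells = [cell.strip() for cell in line.split("|")]
        if header is None:
            header = cells
            continue
        # zip() drops the empty cell produced by the trailing "|".
        rows.append(dict(zip(header, cells)))
    return rows


if __name__ == "__main__":
    example_output = """
    ID | Name | Status | Type | Trace
    +------------+------------------------------+---------+------+-------+
    lcc-do6vzd | AzureCognitiveSearchSink_0 | RUNNING | sink | |
    """
    for connector in parse_connector_list(example_output):
        print(connector["Name"], connector["Status"])
```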

Step 6: Check for documents.

Verify that the Azure search index is being populated.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Tip

When you launch a connector, a Dead Letter Queue topic is automatically created. See View Connector Dead Letter Queue Errors in Confluent Cloud for details.

Configuration Properties

Use the following configuration properties with the fully-managed connector. For self-managed connector property definitions and other details, see the connector docs in Self-managed connectors for Confluent Platform.

Which topics do you want to get data from?

topics.regex: A regular expression that matches the names of the topics to consume from. This is useful when you want to consume from multiple topics that match a certain pattern without having to list them all individually.

Type: string

Importance: low

topics: Identifies the topic name or a comma-separated list of topic names.

Type: list

Importance: high

Schema Config

schema.context.name: Add a schema context name. A schema context represents an independent scope in Schema Registry. It is a separate sub-schema tied to topics in different Kafka clusters that share the same Schema Registry instance. If not used, the connector uses the default schema configured for Schema Registry in your Confluent Cloud environment.

Type: string

Default: default

Importance: medium

Input messages

input.data.format: Sets the input Kafka record value format. Valid entries are AVRO, JSON_SR, and PROTOBUF. Note that you need to have Confluent Cloud Schema Registry configured to use a schema-based message format.

Type: string

Importance: high

How should we connect to your data?

name: Sets a name for your connector.

Type: string

Valid Values: A string at most 64 characters long

Importance: high

Kafka Cluster credentials

kafka.auth.mode: Kafka Authentication mode. It can be one of KAFKA_API_KEY or SERVICE_ACCOUNT. It defaults to KAFKA_API_KEY mode, whenever possible.

Type: string

Valid Values: SERVICE_ACCOUNT, KAFKA_API_KEY

Importance: high

kafka.api.key: Kafka API Key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high

kafka.service.account.id: The Service Account that will be used to generate the API keys to communicate with Kafka Cluster.

Type: string

Importance: high

kafka.api.secret: Secret associated with Kafka API key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high

How should we connect to your Azure Search Service

azure.search.service.name: The name of the Azure Search service.

Type: string

Importance: high

azure.search.api.key: The API key for the Azure Search service.

Type: password

Importance: high

azure.search.client.id: Client ID of service principal of your subscription.

Type: password

Importance: high

azure.search.client.secret: Client Secret of service principal of your subscription.

Type: password

Importance: high

azure.search.tenant.id: Tenant ID of service principal of your subscription.

Type: password

Importance: high

azure.search.subscription.id: Azure Subscription ID for your Azure Account.

Type: password

Importance: high

azure.search.resourcegroup.name: ResourceGroup in which Azure Search Service exists.

Type: string

Importance: high

Search Service Write Details

index.name: The name of the index to write records as documents to. Use ${topic} within the pattern to specify the topic of the record.

Type: string

Importance: high

write.method: The method used to write Kafka records to an index. Available methods are:

Upload - Functions like upsert. A document is inserted if it does not exist and updated or replaced if it does.

MergeOrUpload - Updates an existing document with the specified fields. If the document doesn’t exist, behaves like Upload.

Type: string

Default: Upload

Importance: high

delete.enabled: Whether documents will be deleted if the record value is null.

Type: boolean

Default: false

Importance: high

key.mode: Determines what will be used for the document key id. The available modes are:

KEY - The Kafka record key is used as the document key.

COORDINATES - The Kafka coordinates (topic, partition, and offset) are concatenated to form the document key. This allows for unique document keys.

Type: string

Default: KEY

Importance: medium

max.batch.size: The maximum number of Kafka records that will be sent per request. To disable batching of records, set this value to 1.

Type: int

Default: 1

Valid Values: [1,…,1000]

Importance: high

max.retry.ms: The maximum amount of time in ms that the connector will attempt its request before aborting it.

Type: int

Default: 300000 (5 minutes)

Valid Values: [0,…]

Importance: low
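To see how the write settings in this group could combine, the following sketch builds request bodies in the shape accepted by the Azure Cognitive Search document indexing REST operation: a JSON object with a "value" array whose entries carry an "@search.action". How the connector builds its requests internally is not described on this page, so the two helper functions are hypothetical. Skipping null values when deletes are disabled is also an assumption.

```python
WRITE_METHOD_TO_ACTION = {"Upload": "upload", "MergeOrUpload": "mergeOrUpload"}


def to_index_action(value, doc_key, key_field,
                    write_method="Upload", delete_enabled=False):
    """Turn one record into an index action, or None if the record is skipped."""
    if value is None:
        if delete_enabled:
            return {"@search.action": "delete", key_field: doc_key}
        # Assumption: with deletes disabled, a null value is simply skipped here.
        return None
    action = WRITE_METHOD_TO_ACTION[write_method]
    # The key field is written last so it appears exactly once in the document.
    return {"@search.action": action, **value, key_field: doc_key}


def build_batches(actions, max_batch_size=1):
    """Group actions into request bodies of at most max_batch_size documents."""
    if not 1 <= max_batch_size <= 1000:
        raise ValueError("max.batch.size must be between 1 and 1000")
    kept = [action for action in actions if action is not None]
    return [{"value": kept[i:i + max_batch_size]}
            for i in range(0, len(kept), max_batch_size)]


if __name__ == "__main__":
    # Hypothetical records: (document key, record value).
    records = [("1", {"HotelName": "Alpha"}), ("2", None), ("3", {"HotelName": "Gamma"})]
    actions = [to_index_action(value, key, "HotelId", delete_enabled=True)
               for key, value in records]
    for body in build_batches(actions, max_batch_size=2):
        print(body)
```

With the default max.batch.size of 1, every record becomes its own request. Larger values trade fewer requests for larger request bodies.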

Consumer configuration

max.poll.interval.ms: The maximum delay between subsequent consume requests to Kafka. This configuration property may be used to improve the performance of the connector, if the connector cannot send records to the sink system. Defaults to 300000 milliseconds (5 minutes).

Type: long

Default: 300000 (5 minutes)

Valid Values: [60000,…,1800000] for non-dedicated clusters and [60000,…] for dedicated clusters

Importance: low

max.poll.records: The maximum number of records to consume from Kafka in a single request. This configuration property may be used to improve the performance of the connector, if the connector cannot send records to the sink system. Defaults to 500 records.

Type: long

Default: 500

Valid Values: [1,…,500] for non-dedicated clusters and [1,…] for dedicated clusters

Importance: low
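The valid ranges for the two consumer properties depend on the cluster type, which is easy to get wrong. The following is a minimal sketch of a range check using only the limits listed above. The function name and the `dedicated` flag are hypothetical.

```python
def check_consumer_settings(max_poll_interval_ms=300000, max_poll_records=500,
                            dedicated=False):
    """Return a list of range violations; an empty list means the values fit."""
    problems = []
    if max_poll_interval_ms < 60000:
        problems.append("max.poll.interval.ms must be at least 60000")
    elif not dedicated and max_poll_interval_ms > 1800000:
        problems.append(
            "max.poll.interval.ms must be at most 1800000 on non-dedicated clusters")
    if max_poll_records < 1:
        problems.append("max.poll.records must be at least 1")
    elif not dedicated and max_poll_records > 500:
        problems.append(
            "max.poll.records must be at most 500 on non-dedicated clusters")
    return problems


if __name__ == "__main__":
    print(check_consumer_settings())                        # defaults
    print(check_consumer_settings(max_poll_records=1000))   # non-dedicated cluster
    print(check_consumer_settings(max_poll_records=1000, dedicated=True))
```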

Number of tasks for this connector

tasks.max: Maximum number of tasks for the connector.

Type: int

Valid Values: [1,…]

Importance: high

Auto-restart policy

auto.restart.on.user.error: Enable connector to automatically restart on user-actionable errors.

Type: boolean

Default: true

Importance: medium

Additional Configs

consumer.override.auto.offset.reset: Defines the behavior of the consumer when there is no committed position (which occurs when the group is first initialized) or when an offset is out of range. You can choose either to reset the position to the “earliest” offset (the default) or the “latest” offset. You can also select “none” if you would rather set the initial offset yourself and you are willing to handle out of range errors manually. More details: https://docs.confluent.io/platform/current/installation/configuration/consumer-configs.html#auto-offset-reset

Type: string

Importance: low

consumer.override.isolation.level: Controls how to read messages written transactionally. If set to read_committed, consumer.poll() will only return transactional messages which have been committed. If set to read_uncommitted (the default), consumer.poll() will return all messages, even transactional messages which have been aborted. Non-transactional messages will be returned unconditionally in either mode. More details: https://docs.confluent.io/platform/current/installation/configuration/consumer-configs.html#isolation-level

Type: string

Importance: low

header.converter: The converter class for the headers. This is used to serialize and deserialize the headers of the messages.

Type: string

Importance: low

key.converter.use.schema.guid: The schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema GUID to be used for deserializing message keys. Only applicable when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: string

Importance: low

key.converter.use.schema.id: The schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema ID to be used for deserializing message keys. Only applicable when key.converter.key.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: int

Importance: low

value.converter.allow.optional.map.keys: Allow optional string map key when converting from Connect Schema to Avro Schema. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.auto.register.schemas: Specify if the Serializer should attempt to register the Schema.

Type: boolean

Importance: low

value.converter.connect.meta.data: Allow the Connect converter to add its metadata to the output schema. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.enhanced.avro.schema.support: Enable enhanced schema support to preserve package information and Enums. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.enhanced.protobuf.schema.support: Enable enhanced schema support to preserve package information. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.flatten.unions: Whether to flatten unions (oneofs). Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.generate.index.for.unions: Whether to generate an index suffix for unions. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.generate.struct.for.nulls: Whether to generate a struct variable for null values. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.int.for.enums: Whether to represent enums as integers. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.latest.compatibility.strict: Verify latest subject version is backward compatible when use.latest.version is true.

Type: boolean

Importance: low

value.converter.object.additional.properties: Whether to allow additional properties for object schemas. Applicable for JSON_SR Converters.

Type: boolean

Importance: low

value.converter.optional.for.nullables: Whether nullable fields should be specified with an optional label. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.optional.for.proto2: Whether proto2 optionals are supported. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.use.latest.version: Use latest version of schema in subject for serialization when auto.register.schemas is false.

Type: boolean

Importance: low

value.converter.use.optional.for.nonrequired: Whether to set non-required properties to be optional. Applicable for JSON_SR Converters.

Type: boolean

Importance: low

value.converter.use.schema.guid: The schema GUID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema GUID to be used for deserializing message values. Only applicable when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: string

Importance: low

value.converter.use.schema.id: The schema ID to use for deserialization when using ConfigSchemaIdDeserializer. This allows you to specify a fixed schema ID to be used for deserializing message values. Only applicable when value.converter.value.schema.id.deserializer is set to ConfigSchemaIdDeserializer.

Type: int

Importance: low

value.converter.wrapper.for.nullables: Whether nullable fields should use primitive wrapper messages. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.wrapper.for.raw.primitives: Whether a wrapper message should be interpreted as a raw primitive at root level. Applicable for Protobuf Converters.

Type: boolean

Importance: low

key.converter.key.schema.id.deserializer: The class name of the schema ID deserializer for keys. This is used to deserialize schema IDs from the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.DualSchemaIdDeserializer

Importance: low

key.converter.key.subject.name.strategy: How to construct the subject name for key schema registration.

Type: string

Default: TopicNameStrategy

Importance: low

value.converter.decimal.format: Specify the JSON/JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals:

BASE64 to serialize DECIMAL logical types as base64 encoded binary data and

NUMERIC to serialize Connect DECIMAL logical type values in JSON/JSON_SR as a number representing the decimal value.

Type: string

Default: BASE64

Importance: low

value.converter.flatten.singleton.unions: Whether to flatten singleton unions. Applicable for Avro and JSON_SR Converters.

Type: boolean

Default: false

Importance: low

value.converter.reference.subject.name.strategy: Set the subject reference name strategy for value. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. Note that the subject reference name strategy can be selected only for PROTOBUF format with the default strategy being DefaultReferenceSubjectNameStrategy.

Type: string

Default: DefaultReferenceSubjectNameStrategy

Importance: low

value.converter.value.schema.id.deserializer: The class name of the schema ID deserializer for values. This is used to deserialize schema IDs from the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.DualSchemaIdDeserializer

Importance: low

value.converter.value.subject.name.strategy: Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Type: string

Default: TopicNameStrategy

Importance: low

Frequently asked questions

Find answers to frequently asked questions about the Azure Cognitive Search Sink connector.

Why does the connector fail with a duplicate properties error from Azure Search?

You see an error similar to:

The connector failed because duplicate properties were detected and Azure Search does not support that. Please make sure that your data does not contain properties with the same name.

This error occurs when:

The Azure index defines a key field (for example, HotelId).

The connector is configured with key.mode (KEY or COORDINATES), which automatically populates the key field from the record key or the Kafka coordinates.

The same field is also present in the record value, causing Azure Search to receive the key property twice.

To avoid duplicate properties, use one of the following methods:

Use Single Message Transforms (SMTs) to move the key field out of the value. For example, to move HotelId from the value to the key only, add these properties to your connector configuration:

{
  "transforms": "valToKey,extract,removeField",
  "transforms.valToKey.type": "org.apache.kafka.connect.transforms.ValueToKey",
  "transforms.valToKey.fields": "HotelId",
  "transforms.extract.type": "org.apache.kafka.connect.transforms.ExtractField$Key",
  "transforms.extract.field": "HotelId",
  "transforms.removeField.type": "org.apache.kafka.connect.transforms.ReplaceField$Value",
  "transforms.removeField.exclude": "HotelId"
}

This transform chain does the following:

Copies HotelId from the record value into the key structure.

Extracts HotelId as the key value.

Removes HotelId from the record value to prevent duplication.

Change the Azure index to use a different key field and adjust key.mode accordingly.
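To see what the SMT chain in the first method does to a single record, the following Python sketch simulates the three transforms on a (key, value) pair. It is a simplified model for illustration, not Kafka Connect code. The function names and the sample record are hypothetical.

```python
from typing import Any, Dict, List, Tuple

Record = Tuple[Any, Dict[str, Any]]  # (key, value)


def value_to_key(record: Record, fields: List[str]) -> Record:
    """Model of ValueToKey: build a new key from a subset of value fields."""
    _, value = record
    return ({name: value[name] for name in fields}, value)


def extract_field_key(record: Record, field: str) -> Record:
    """Model of ExtractField$Key: replace the key struct with one of its fields."""
    key, value = record
    return (key[field], value)


def replace_field_value(record: Record, exclude: List[str]) -> Record:
    """Model of ReplaceField$Value with 'exclude': drop fields from the value."""
    key, value = record
    return (key, {k: v for k, v in value.items() if k not in exclude})


def apply_chain(record: Record) -> Record:
    record = value_to_key(record, ["HotelId"])         # valToKey
    record = extract_field_key(record, "HotelId")      # extract
    return replace_field_value(record, ["HotelId"])    # removeField


before = (None, {"HotelId": "42", "HotelName": "Sample Inn"})
print(apply_chain(before))
```

After the chain runs, HotelId exists only in the record key, so the document sent to Azure Search carries the key property once.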

Why does validation fail with errors such as Invalid Azure Search Service values or azure.search.service.name: Could not validate region?

During connector validation, Confluent Cloud verifies your Azure Search configuration. Validation fails with messages such as:

Invalid Azure Search Service values

or

azure.search.service.name: Could not validate region. Please check your credentials and try again

This error commonly occurs because:

azure.search.service.name does not match the actual Azure Cognitive Search service name.

The service is not deployed in a region that is compatible with, or properly mapped to, your Confluent Cloud cluster region.

The Azure credentials used by the connector (API key or service principal) lack sufficient permissions on:

The search service

Its resource group

The associated subscription

To troubleshoot this error, complete the following steps:

Verify all the Azure identifiers:

azure.search.service.name

azure.search.subscription.id

azure.search.resourcegroup.name

azure.search.tenant.id (for service principal auth)

Verify that the Azure Cognitive Search service is reachable and in the expected region.

Verify that the principal or API key has appropriate roles (for example, Contributor on the search service and Reader or Contributor on the resource group or subscription, as required by your security model).

Re-run connector validation after you fix configuration or permissions. If the error persists, contact Confluent Support with the full validation output.
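Before you re-run validation, a quick local check can catch blank or missing identifiers. The Python sketch below is a hypothetical pre-flight helper, not a Confluent tool. It only checks that the properties listed above are present and non-empty, and it cannot verify region or permissions. The sample values are placeholders.

```python
from typing import Dict, List

BASE_IDENTIFIERS = [
    "azure.search.service.name",
    "azure.search.subscription.id",
    "azure.search.resourcegroup.name",
]
SERVICE_PRINCIPAL_IDENTIFIERS = ["azure.search.tenant.id"]


def missing_identifiers(config: Dict[str, str],
                        service_principal_auth: bool = False) -> List[str]:
    """Return the identifier properties that are absent or blank in config."""
    required = list(BASE_IDENTIFIERS)
    if service_principal_auth:
        required += SERVICE_PRINCIPAL_IDENTIFIERS
    return [name for name in required if not str(config.get(name, "")).strip()]


config = {
    "azure.search.service.name": "my-search-service",   # placeholder value
    "azure.search.subscription.id": "",                 # blank on purpose
    "azure.search.resourcegroup.name": "my-resource-group",
}
print(missing_identifiers(config, service_principal_auth=True))
```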

Does the Azure Cognitive Search Sink connector support client-side field level encryption (CSFLE)?

Check the latest Client-Side Field Level Encryption documentation for Confluent Cloud in Manage CSFLE for connectors, or contact your Confluent account team to confirm current availability and limitations for the Azure Cognitive Search Sink connector.

Why does the connector fail with schema or field mismatch errors?

The connector fails when your Kafka record schema does not match the Azure Cognitive Search index definition. Common issues include:

Missing fields in the Azure index: All fields in your Kafka record schema must exist in the Azure Search index. The connector fails when a record contains a field not defined in the index.

Data type mismatches: Field types in your schema must be compatible with the corresponding Azure Search field types (for example, Edm.String, Edm.Int32).

Required fields not populated: When the Azure index defines required fields, ensure all records include those fields.

To resolve field mismatch errors, complete the following steps:

Review the Azure Search index schema and compare it with your Kafka record schema.

Add any missing fields to the Azure index, or use Single Message Transforms (SMTs) to filter out fields not needed in the index.

Verify that field data types are compatible between your records and the Azure index.
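The comparison in these steps can be sketched in Python as follows. This is an illustrative check under stated assumptions: the index is represented as a hypothetical mapping of field name to Edm type, and the Python-to-Edm type table is a small example rather than the connector's actual conversion rules.

```python
from typing import Any, Dict, List

# Illustrative mapping only; the connector's real type handling may differ.
ACCEPTED_EDM_TYPES = {
    str: {"Edm.String"},
    bool: {"Edm.Boolean"},
    int: {"Edm.Int32", "Edm.Int64"},
    float: {"Edm.Double"},
}


def find_mismatches(record: Dict[str, Any],
                    index_fields: Dict[str, str]) -> List[str]:
    """List record fields that are missing from the index or have an unfit type."""
    problems = []
    for name, value in record.items():
        if name not in index_fields:
            problems.append(f"{name}: not defined in the index")
            continue
        accepted = ACCEPTED_EDM_TYPES.get(type(value), set())
        if index_fields[name] not in accepted:
            problems.append(
                f"{name}: {type(value).__name__} does not fit {index_fields[name]}"
            )
    return problems


index_fields = {"HotelId": "Edm.String", "Rating": "Edm.Int32"}
record = {"HotelId": "42", "Rating": "five", "Pool": True}
for problem in find_mismatches(record, index_fields):
    print(problem)
```

Running a check like this against a sample of records before you start the connector can surface mismatches earlier than the error topic does.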

How do I monitor the connector performance and throughput?

Monitor the Azure Cognitive Search Sink connector using Confluent Cloud metrics and observability features:

Connector metrics in the Cloud Console: View real-time metrics including throughput (records per second), lag, and error rates on the connector’s detail page.

Success and error topics: The connector automatically creates success and error topics. Monitor these topics to track successfully processed records and records that failed to write.

Dead letter queue (DLQ): Check the DLQ topic for records that could not be processed due to errors.

Azure Search service metrics: Use the Azure Portal to monitor search service health, indexing operations, throttling, and storage utilization.

To improve throughput on high-volume topics, increase tasks.max so that more tasks run in parallel.

What happens when the connector fails to write to Azure Search?

The connector includes automatic retry logic for transient failures:

Automatic retries: When Azure Search is temporarily unavailable or returns retryable errors (for example, timeouts, HTTP 429 throttling), the connector retries the request.

Maximum retry duration: Configure max.retry.ms to control the maximum time the connector spends retrying failed requests. By default, the connector retries until the request succeeds or this duration is exceeded.

Non-retryable errors: For permanent failures (for example, HTTP 502 Bad Gateway, HTTP 504 Gateway Timeout, invalid data, schema mismatches, authentication errors), the connector behavior depends on the behavior.on.error setting. When set to FAIL, the connector throws a ConnectException and stops the task. Otherwise, the connector writes the failed record to the error topic and continues processing.

Error topic and DLQ: Failed records are sent to the connector’s error topic or dead letter queue, depending on the failure type.
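The retry behavior described above can be pictured with a minimal Python sketch of a time-bounded retry loop. It is a conceptual model, not the connector's implementation. The exponential backoff, the initial_backoff_ms parameter, and the RetryableError class are assumptions made for illustration. Only the idea of a maximum retry duration (max.retry.ms) comes from this page.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RetryableError(Exception):
    """Stand-in for a transient failure such as a timeout or HTTP 429."""


def send_with_retry(send: Callable[[], T],
                    max_retry_ms: int,
                    initial_backoff_ms: int = 100,
                    clock: Callable[[], float] = time.monotonic,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Call send() until it succeeds or the retry budget is used up."""
    deadline = clock() + max_retry_ms / 1000.0
    delay = initial_backoff_ms / 1000.0
    while True:
        try:
            return send()
        except RetryableError:
            # Give up if waiting again would exceed the retry budget.
            if clock() + delay > deadline:
                raise
            sleep(delay)
            delay *= 2  # assumed exponential backoff
        # Any other exception is treated as permanent and propagates at once.


attempts = []


def flaky_send() -> str:
    attempts.append(1)
    if len(attempts) < 3:
        raise RetryableError("throttled")
    return "indexed"


print(send_with_retry(flaky_send, max_retry_ms=5000, sleep=lambda s: None))
```

In this model, a larger retry budget lets the loop ride out longer outages, while a smaller one surfaces failures sooner.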

To handle failures effectively, complete the following steps:

Monitor the error topic and DLQ for failed records.

Review Azure Search service quotas and ensure adequate capacity to handle your indexing load.

Verify that your Azure credentials and permissions remain valid.

Next Steps

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud for Apache Flink, see the Cloud ETL Demo. This example also shows how to use Confluent CLI to manage your resources in Confluent Cloud.