Salesforce Bulk API Source Connector for Confluent Cloud

The fully-managed Salesforce Bulk API Source connector for Confluent Cloud integrates Salesforce.com with Apache Kafka®. This connector pulls records and captures changes from Salesforce.com using the Salesforce Bulk Query API.

Note

This Quick Start is for the fully-managed Confluent Cloud connector. If you are installing the connector locally for Confluent Platform, see Salesforce Bulk API Source Connector for Confluent Platform.

If you are using Salesforce Bulk API 2.0, see the Salesforce Bulk API 2.0 Source Connector for Confluent Cloud.

If you require private networking for fully-managed connectors, make sure to set up the proper networking beforehand. For more information, see Manage Networking for Confluent Cloud Connectors.

The connector supports Salesforce up to API version 64.0.

Features

The Salesforce Bulk API Source connector provides the following features:

At-least-once delivery: The connector guarantees that records are delivered at least once to the Kafka topic. If the connector restarts, the Kafka topic may contain duplicate records.

Supported data formats: The connector supports Avro, JSON Schema, Protobuf, or JSON (schemaless) output data. Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

Tasks per connector: Organizations can run multiple connectors with a limit of one task per connector (that is, "tasks.max": "1").

Supported SObjects: See the following lists for supported and unsupported Salesforce objects. See Confluent Cloud connector limitations for additional information.

The following Salesforce objects are supported by this connector:

Account

Campaign

CampaignMember

Case

Contact

Contract

Event

Group

Lead

Opportunity

OpportunityContactRole

OpportunityLineItem

Period

PricebookEntry

Product2

Task

TaskFeed

TaskRelation

User

UserRole

The following Salesforce objects are not supported by this connector:

Feed objects (for example, AccountFeed and AssetFeed)

Share objects (for example, AccountBrandShare and ChannelProgramLevelShare)

History objects (for example, AccountHistory and ActivityHistory)

EventRelation objects (for example, AcceptedEventRelation and DeclinedEventRelation)

AggregateResult

AttachedContentDocument

CaseStatus

CaseTeamMember

CaseTeamRole

CaseTeamTemplate

CaseTeamTemplateMember

CaseTeamTemplateRecord

CombinedAttachment

ContentFolderItem

ContractStatus

EventWhoRelation

FolderedContentDocument

KnowledgeArticleViewStat

KnowledgeArticleVoteStat

LookedUpFromActivity

Name

NoteAndAttachment

OpenActivity

OwnedContentDocument

PartnerRole

RecentlyViewed

ServiceAppointmentStatus

SolutionStatus

TaskPriority

TaskStatus

TaskWhoRelation

UserRecordAccess

WorkOrderLineItemStatus

WorkOrderStatus

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Limitations

Be sure to review the following information.

For connector limitations, see Salesforce Bulk API Source Connector limitations.

If you plan to use one or more Single Message Transforms (SMTs), see SMT Limitations.

Quick Start

Use this quick start to get up and running with the Salesforce Bulk API Source connector. The quick start provides the basics of selecting the connector and configuring it to capture records and record changes from Salesforce.

Prerequisites

Authorized access to a Confluent Cloud cluster on Amazon Web Services (AWS), Microsoft Azure (Azure), or Google Cloud.

Salesforce account credentials.

The Confluent CLI installed and configured for the cluster. See Install the Confluent CLI.

Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

At least one topic must exist in your Confluent Cloud cluster before creating the connector.

For networking considerations, see Networking and DNS. To use a set of public egress IP addresses, see Public Egress IP Addresses for Confluent Cloud Connectors.

Kafka cluster credentials. The following lists the different ways you can provide credentials.

Enter an existing service account resource ID.

Create a Confluent Cloud service account for the connector. Make sure to review the ACL entries required in the service account documentation. Some connectors have specific ACL requirements.

Create a Confluent Cloud API key and secret. To create a key and secret, you can use confluent api-key create or you can autogenerate the API key and secret directly in the Cloud Console when setting up the connector.

Using the Confluent Cloud Console

Step 1: Launch your Confluent Cloud cluster

To create and launch a Kafka cluster in Confluent Cloud, see Create a Kafka Cluster in Confluent Cloud.

Step 2: Add a connector

In the left navigation menu, click Connectors. If you already have connectors in your cluster, click + Add connector.

Step 3: Select your connector

Click the Salesforce Bulk API Source connector card.

Important

At least one topic must exist in your Confluent Cloud cluster before creating the connector.

Step 4: Enter the connector details

Note

Make sure you have all your prerequisites completed.

An asterisk ( * ) designates a required entry.

At the Add Salesforce Bulk API Source Connector screen, complete the following:

Select the topic you want to send data to from the Topics list. To create a new topic, click +Add new topic.

Select the way you want to provide Kafka Cluster credentials. You can choose one of the following options:

My account: This setting allows your connector to globally access everything that you have access to. With a user account, the connector uses an API key and secret to access the Kafka cluster. This option is not recommended for production.

Service account: This setting limits the access for your connector by using a service account. This option is recommended for production.

Use an existing API key: This setting allows you to specify an API key and a secret pair. You can use an existing pair or create a new one. This method is not recommended for production environments.

Note

Freight clusters support only service accounts for Kafka authentication.

Click Continue.

Configure the authentication properties:

Salesforce details

Salesforce instance: The URL of the Salesforce endpoint to use. The default is https://login.salesforce.com. This directs the connector to use the endpoint specified in the authentication response.

Salesforce username: The Salesforce username for the connector to use.

Salesforce password: The Salesforce password for the connector to use.

Salesforce password token: The Salesforce security token associated with the username.

Click Continue.

Salesforce Object: The Salesforce object to create a topic for.

Salesforce since: The CreatedDate after which records are pulled. The time is in UTC and must use the format yyyy-MM-dd.

Output messages

Select output record value format: Select the output record value format (data going to the Kafka topic). Valid values are AVRO, JSON, JSON_SR (JSON Schema), or PROTOBUF. Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON Schema, or Protobuf).

Show advanced configurations

Schema context: Select a schema context to use for this connector, if using a schema-based data format. This property defaults to the Default context, which configures the connector to use the default schema set up for Schema Registry in your Confluent Cloud environment. A schema context allows you to use separate schemas (like schema sub-registries) tied to topics in different Kafka clusters that share the same Schema Registry environment. For example, if you select a non-default context, a Source connector uses only that schema context to register a schema and a Sink connector uses only that schema context to read from. For more information about setting up a schema context, see What are schema contexts and when should you use them?.

Poll interval (ms): Set the time in milliseconds to wait for new change events when no data is returned. The default is 30000 ms (30 seconds); valid values range from 8700 to 300000 ms.

Enable Batching: Enables batching by applying PK-chunking. The default value is TRUE.

Additional Configs

Value Converter Decimal Format: Specifies the JSON or JSON_SR serialization format for Connect DECIMAL logical type values, with two allowed literals: BASE64 to serialize DECIMAL logical types as base64-encoded binary data, and NUMERIC to serialize DECIMAL logical type values in JSON or JSON_SR as a number representing the decimal value.

value.converter.replace.null.with.default: Whether to replace null fields that have a default value with that default value. When set to true, the default value is used; otherwise, null is used. Applicable for JSON Converter.

Key Converter Schema ID Serializer: The class name of the schema ID serializer for keys. This is used to serialize schema IDs in the message headers.

Value Converter Reference Subject Name Strategy: Sets the subject reference name strategy for values. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. You can use this strategy only with PROTOBUF format; the default strategy is DefaultReferenceSubjectNameStrategy.

value.converter.schemas.enable: Include schemas within each of the serialized values. Input messages must contain schema and payload fields and may not contain additional fields. For plain JSON data, set this to false. Applicable for JSON Converter.

errors.tolerance: Use this property to configure the connector’s error handling behavior. WARNING: Use this property with caution for source connectors, because it may lead to data loss. If you set this property to ‘all’, the connector does not fail on errant records; instead, it logs them (and sends them to the DLQ for sink connectors) and continues processing. If you set this property to ‘none’, the connector task fails on errant records.

Value Converter Connect Meta Data: Enables the Connect converter to add its metadata to the output schema. Applies to Avro converters.

Value Converter Value Subject Name Strategy: Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Key Converter Key Subject Name Strategy: Determines how to construct the subject name for key schema registration.

value.converter.ignore.default.for.nullables: When set to true, this property ensures that the corresponding record in Kafka is NULL, instead of showing the default column value. Applicable for AVRO, PROTOBUF, and JSON_SR Converters.

Value Converter Schema ID Serializer: The class name of the schema ID serializer for values. This is used to serialize schema IDs in the message headers.

Auto-restart policy

Enable Connector Auto-restart: Enables the auto-restart behavior of the connector and its task in the event of user-actionable errors. Defaults to true, enabling the connector to automatically restart in case of user-actionable errors. Set this property to false to disable auto-restart for failed connectors. In such cases, you need to manually restart the connector.

Connection details

Max Retry Time in Milliseconds: In case of error when making a request to Salesforce, the connector retries until this time (in milliseconds) elapses.

Transforms

Single Message Transforms: To add a new SMT, see Add transforms. For more information about unsupported SMTs, see Unsupported transformations.

Processing position

Set offsets: Click Set offsets to define a specific offset for this connector to begin processing data from. For more information on managing offsets, see Manage offsets.

For all property values and definitions, see Configuration Properties.

Click Continue.

Based on the number of topic partitions you select, you will be provided with a recommended number of tasks. Note that this connector supports a maximum of one task.

To change the number of tasks, use the Range Slider to select the desired number of tasks.

Click Continue.

Verify the connection details by previewing the running configuration.

Tip

For information about previewing your connector output, see Data Previews for Confluent Cloud Connectors.

After you’ve validated that the properties are configured to your satisfaction, click Launch.

The status for the connector should go from Provisioning to Running.

Step 5: Check the Kafka topic

After the connector is running, verify that messages are populating your Kafka topic.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Using the Confluent CLI

Complete the following steps to set up and run the connector using the Confluent CLI.

Important

Make sure you have all your prerequisites completed.

At least one topic must exist in your Confluent Cloud cluster before creating the connector.

Step 1: List the available connectors

Enter the following command to list available connectors:

confluent connect plugin list

Step 2: List the connector configuration properties

Enter the following command to show the connector configuration properties:

confluent connect plugin describe <connector-plugin-name>

The command output shows the required and optional configuration properties.

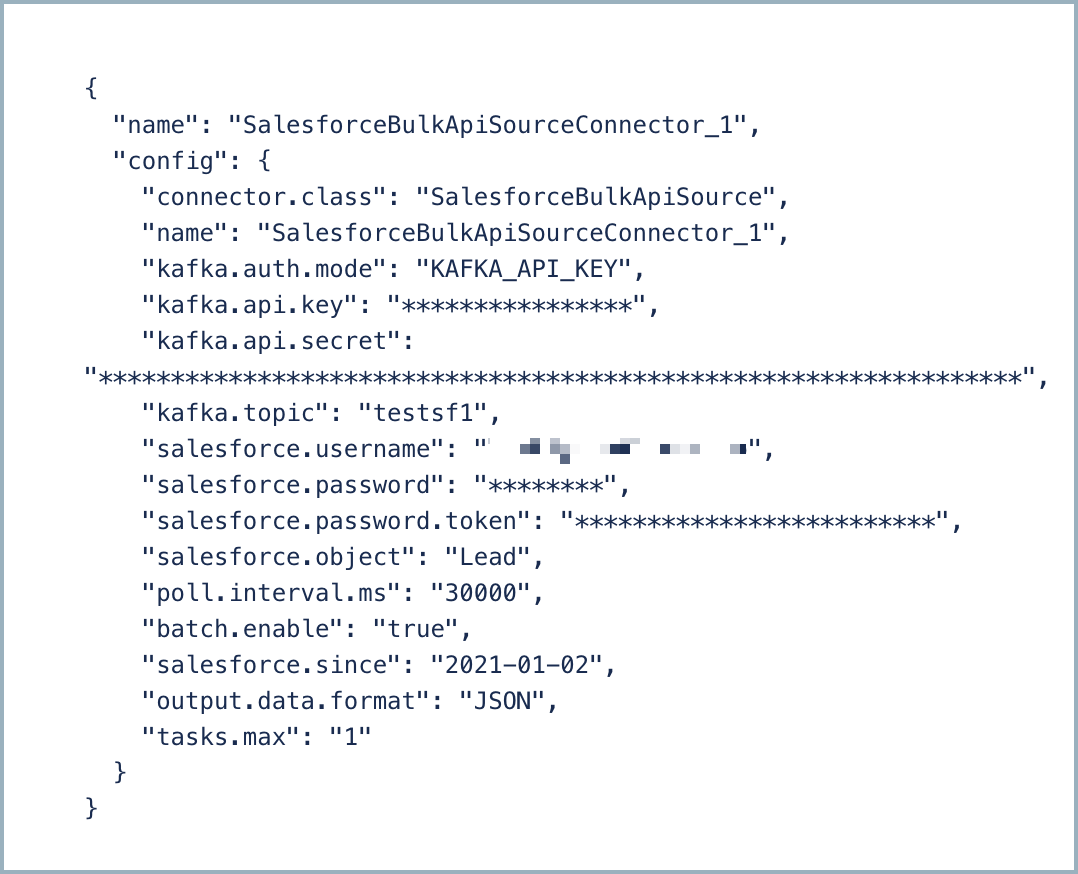

Step 3: Create the connector configuration file

Create a JSON file that contains the connector configuration properties. The following example shows the required connector properties.

{
  "connector.class": "SalesforceBulkApiSource",
  "name": "SalesforceBulkApiSource_0",
  "kafka.auth.mode": "KAFKA_API_KEY",
  "kafka.api.key": "<my-kafka-api-key>",
  "kafka.api.secret": "<my-kafka-api-secret>",
  "kafka.topic": "TestBulkAPI",
  "salesforce.instance": "https://login.salesforce.com",
  "salesforce.username": "<my-username>",
  "salesforce.password": "**************",
  "salesforce.password.token": "************************",
  "salesforce.object": "<SObject-name>",
  "output.data.format": "JSON",
  "tasks.max": "1"
}

Note the following property definitions:

"connector.class": Identifies the connector plugin name.

"name": Sets a name for your new connector.

"kafka.auth.mode": Identifies the connector authentication mode you want to use. There are two options: SERVICE_ACCOUNT or KAFKA_API_KEY (the default). To use an API key and secret, specify the configuration properties kafka.api.key and kafka.api.secret, as shown in the example configuration (above). To use a service account, specify the Resource ID in the property kafka.service.account.id=<service-account-resource-ID>. To list the available service account resource IDs, use the following command:

confluent iam service-account list

For example:

confluent iam service-account list

     Id    | Resource ID |       Name        |    Description
+---------+-------------+-------------------+-------------------
    123456 | sa-l1r23m   | sa-1              | Service account 1
    789101 | sa-l4d56p   | sa-2              | Service account 2

"kafka.topic": Enter a Kafka topic name. A topic must exist before launching the connector.

"salesforce.<...>": Enter the required Salesforce connection details.

"salesforce.object": The SObject that the connector polls for new and changed records.

"output.data.format": Sets the output Kafka record value format (data coming from the connector). Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. You must have Confluent Cloud Schema Registry configured if using a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

"tasks.max": Enter the number of tasks in use by the connector. Organizations can run multiple connectors with a limit of one task per connector (that is, "tasks.max": "1").

Single Message Transforms: See the Single Message Transforms (SMT) documentation for details about adding SMTs using the CLI.

See Configuration Properties for all property values and descriptions.
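If you generate connector configurations from a script, the following Python sketch builds the required properties from the example configuration and writes them to a JSON file for the --config-file flag. The helper names build_config and write_config are hypothetical; only the property keys and the 64-character name limit come from this page.

```python
import json
from pathlib import Path
from typing import Dict


def build_config(api_key: str, api_secret: str, username: str, password: str,
                 token: str, sobject: str, topic: str) -> Dict[str, str]:
    """Return the required connector properties as a dict.

    Hypothetical helper: the keys mirror the example configuration file,
    but the function name and parameters are illustrative only.
    """
    name = f"SalesforceBulkApiSource_{sobject}"
    if len(name) > 64:
        raise ValueError("connector name must be at most 64 characters")
    return {
        "connector.class": "SalesforceBulkApiSource",
        "name": name,
        "kafka.auth.mode": "KAFKA_API_KEY",
        "kafka.api.key": api_key,
        "kafka.api.secret": api_secret,
        "kafka.topic": topic,  # the topic must already exist
        "salesforce.instance": "https://login.salesforce.com",
        "salesforce.username": username,
        "salesforce.password": password,
        "salesforce.password.token": token,
        "salesforce.object": sobject,
        "output.data.format": "JSON",
        "tasks.max": "1",  # this connector is limited to one task
    }


def write_config(config: Dict[str, str], path: str) -> None:
    """Write the config as pretty-printed JSON for --config-file."""
    Path(path).write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
```

For example, passing the result of build_config to write_config with the path salesforce-bulk-api-source.json produces a file you can load with confluent connect cluster create. The generated file contains credentials, so keep it out of version control.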

Step 4: Load the properties file and create the connector

Enter the following command to load the configuration and start the connector:

confluent connect cluster create --config-file <file-name>.json

For example:

confluent connect cluster create --config-file salesforce-bulk-api-source.json

Example output:

Created connector SalesforceBulkApiSource_0 lcc-aj3qr

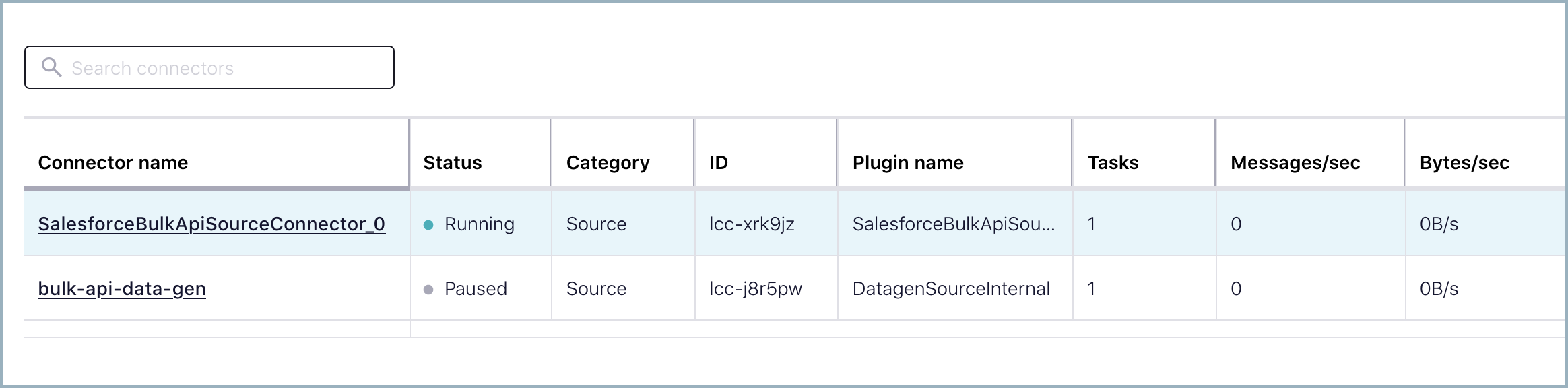

Step 5: Check the connector status

Enter the following command to check the connector status:

confluent connect cluster list

Example output:

     ID    |             Name             | Status  |  Type
+-----------+------------------------------+---------+-------+
  lcc-aj3qr | SalesforceBulkApiSource_0    | RUNNING | source
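To check the status from a script, you could parse the table printed by confluent connect cluster list. The following Python sketch assumes the pipe-delimited layout of the example output; that human-readable layout is not guaranteed to be stable, so if your CLI version offers a machine-readable output format, prefer it. The function name is hypothetical.

```python
from typing import Dict


def parse_connector_list(output: str) -> Dict[str, str]:
    """Map connector ID to status from the human-readable table.

    Assumes the layout of the example output: a header row, a separator
    row starting with '+', then one pipe-delimited row per connector.
    """
    statuses: Dict[str, str] = {}
    for raw in output.splitlines():
        line = raw.strip()
        # Skip blank lines, the separator row, and the header row.
        if not line or line.startswith("+") or line.startswith("ID"):
            continue
        cells = [cell.strip() for cell in line.split("|")]
        if len(cells) >= 3:
            statuses[cells[0]] = cells[2]
    return statuses


example = """
     ID    |             Name             | Status  |  Type
+-----------+------------------------------+---------+-------+
  lcc-aj3qr | SalesforceBulkApiSource_0    | RUNNING | source
"""
print(parse_connector_list(example))  # {'lcc-aj3qr': 'RUNNING'}
```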

Step 6: Check the Kafka topic

After the connector is running, verify that messages are populating your Kafka topic.

For more information and examples to use with the Confluent Cloud API for Connect, see the Confluent Cloud API for Connect Usage Examples section.

Configuration Properties

Use the following configuration properties with the fully-managed connector. For self-managed connector property definitions and other details, see the connector docs in Self-managed connectors for Confluent Platform.

How should we connect to your data?

name

Sets a name for your connector.

Type: string

Valid Values: A string at most 64 characters long

Importance: high

Kafka Cluster credentials

kafka.auth.mode

Kafka Authentication mode. It can be one of KAFKA_API_KEY or SERVICE_ACCOUNT. It defaults to KAFKA_API_KEY mode, whenever possible.

Type: string

Valid Values: SERVICE_ACCOUNT, KAFKA_API_KEY

Importance: high

kafka.api.key

Kafka API Key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high

kafka.service.account.id

The Service Account that will be used to generate the API keys to communicate with the Kafka cluster.

Type: string

Importance: high

kafka.api.secret

Secret associated with Kafka API key. Required when kafka.auth.mode==KAFKA_API_KEY.

Type: password

Importance: high
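The credential properties depend on the authentication mode. A minimal Python sketch of that dependency, assuming KAFKA_API_KEY when kafka.auth.mode is unset (as the description above states) and that SERVICE_ACCOUNT needs kafka.service.account.id; the function name is hypothetical.

```python
from typing import Dict, List


def missing_auth_properties(config: Dict[str, str]) -> List[str]:
    """Return credential properties the selected auth mode needs but lacks."""
    mode = config.get("kafka.auth.mode", "KAFKA_API_KEY")  # documented default
    if mode == "KAFKA_API_KEY":
        required = ["kafka.api.key", "kafka.api.secret"]
    elif mode == "SERVICE_ACCOUNT":
        required = ["kafka.service.account.id"]
    else:
        raise ValueError(f"unsupported kafka.auth.mode: {mode}")
    # Treat empty strings the same as missing values.
    return [key for key in required if not config.get(key)]
```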

Which topic do you want to send data to?

kafka.topic

Identifies the topic name to write the data to.

Type: string

Importance: high

Schema Config

schema.context.name

Add a schema context name. A schema context represents an independent scope in Schema Registry. It is a separate sub-schema tied to topics in different Kafka clusters that share the same Schema Registry instance. If not used, the connector uses the default schema configured for Schema Registry in your Confluent Cloud environment.

Type: string

Default: default

Importance: medium

How should we connect to Salesforce?

salesforce.instance

The URL of the Salesforce endpoint to use. The default is https://login.salesforce.com. This directs the connector to use the endpoint specified in the authentication response.

Type: string

Default: https://login.salesforce.com

Importance: high

salesforce.username

The Salesforce username the connector should use.

Type: string

Importance: high

salesforce.password

The Salesforce password the connector should use.

Type: password

Importance: high

salesforce.password.token

The Salesforce security token associated with the username.

Type: password

Importance: high

salesforce.object

The Salesforce object to create a topic for.

Type: string

Importance: high

poll.interval.ms

How often to query Salesforce for new records.

Type: int

Default: 30000 (30 seconds)

Valid Values: [8700,…,300000]

Importance: medium

salesforce.since

CreatedDate after which the records should be pulled. Note that the time is in UTC and has the required format: yyyy-MM-dd.

Type: string

Importance: medium

batch.enable

Enable batching by applying PK-chunking. The default value is TRUE.

Type: boolean

Default: true

Importance: low
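Two of the properties above have strict input rules: salesforce.since must use yyyy-MM-dd, and poll.interval.ms must fall within [8700, 300000]. The following Python sketch checks both before you submit a configuration; the function and constant names are hypothetical.

```python
import re
from datetime import datetime
from typing import List

POLL_INTERVAL_MIN_MS = 8700    # lower bound listed under Valid Values
POLL_INTERVAL_MAX_MS = 300000  # upper bound listed under Valid Values


def check_salesforce_settings(since: str, poll_interval_ms: int) -> List[str]:
    """Return a list of problems with salesforce.since and poll.interval.ms."""
    problems = []
    # yyyy-MM-dd: exactly a four-digit year, two-digit month, two-digit day.
    if not re.fullmatch(r"\d{4}-\d{2}-\d{2}", since):
        problems.append(f"salesforce.since {since!r} is not in yyyy-MM-dd format")
    else:
        try:
            # Rejects impossible dates such as 2024-02-30.
            datetime.strptime(since, "%Y-%m-%d")
        except ValueError:
            problems.append(f"salesforce.since {since!r} is not a valid date")
    if not POLL_INTERVAL_MIN_MS <= poll_interval_ms <= POLL_INTERVAL_MAX_MS:
        problems.append(
            f"poll.interval.ms {poll_interval_ms} is outside "
            f"[{POLL_INTERVAL_MIN_MS}, {POLL_INTERVAL_MAX_MS}]"
        )
    return problems
```

The regular expression is there because strptime alone also accepts single-digit months and days (for example, 2024-1-5), which would not match the documented format.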

Connection details

request.max.retries.time.ms

In case of an error when making a request to Salesforce, the connector retries until this time (in ms) elapses. The default value is 30000 (30 seconds). The minimum value is 1000 (1 second).

Type: long

Default: 30000 (30 seconds)

Valid Values: [1000,…,250000]

Importance: low
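This property bounds retries by elapsed time rather than by a retry count. The following Python sketch illustrates that idea with a hypothetical send_request callable and a fixed backoff. It is not the connector's actual retry code; the connector's real backoff policy and the error types it retries are not documented here.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_deadline(send_request: Callable[[], T],
                       max_retry_time_ms: int = 30000,
                       backoff_ms: int = 1000) -> T:
    """Retry send_request on I/O errors until max_retry_time_ms has elapsed.

    Hypothetical illustration of time-bounded retries; the fixed backoff
    and the choice of OSError as the retryable error are assumptions.
    """
    if not 1000 <= max_retry_time_ms <= 250000:
        raise ValueError("request.max.retries.time.ms must be in [1000, 250000]")
    deadline = time.monotonic() + max_retry_time_ms / 1000
    while True:
        try:
            return send_request()
        except OSError:
            # Re-raise the last error if waiting again would pass the deadline.
            if time.monotonic() + backoff_ms / 1000 > deadline:
                raise
            time.sleep(backoff_ms / 1000)
```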

Output messages

output.data.format

Sets the output Kafka record value format. Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. Note that you need to have Confluent Cloud Schema Registry configured if using a schema-based message format like AVRO, JSON_SR, and PROTOBUF.

Type: string

Default: JSON

Importance: high

Number of tasks for this connector

tasks.max

Maximum number of tasks for the connector.

Type: int

Valid Values: [1,…]

Importance: high
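A short Python sketch that encodes two rules from this page: AVRO, JSON_SR, and PROTOBUF need Schema Registry while JSON does not, and although tasks.max accepts [1,…], this connector is limited to one task. The function names are hypothetical, and the exact-case matching of format names is an assumption.

```python
SCHEMA_REGISTRY_FORMATS = {"AVRO", "JSON_SR", "PROTOBUF"}
VALID_FORMATS = SCHEMA_REGISTRY_FORMATS | {"JSON"}


def needs_schema_registry(output_data_format: str = "JSON") -> bool:
    """True if the chosen output.data.format requires Schema Registry."""
    if output_data_format not in VALID_FORMATS:
        raise ValueError(f"unsupported output.data.format: {output_data_format}")
    return output_data_format in SCHEMA_REGISTRY_FORMATS


def check_tasks_max(tasks_max: str) -> None:
    """Reject any task count other than 1 for this connector."""
    if int(tasks_max) != 1:
        raise ValueError('this connector supports only "tasks.max": "1"')
```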

Additional Configs

header.converter

The converter class for the headers. This is used to serialize and deserialize the headers of the messages.

Type: string

Importance: low

producer.override.compression.type

The compression type for all data generated by the producer. Valid values are none, gzip, snappy, lz4, and zstd.

Type: string

Importance: low

producer.override.linger.ms

The producer groups together any records that arrive in between request transmissions into a single batched request. More details can be found in the documentation: https://docs.confluent.io/platform/current/installation/configuration/producer-configs.html#linger-ms.

Type: long

Valid Values: [100,…,1000]

Importance: low
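Both producer overrides are optional. The following Python sketch validates them against the values listed above before they are merged into a connector configuration. The helper is hypothetical, and storing values as strings simply follows the quoted style of the example configuration file.

```python
from typing import Dict, Optional

COMPRESSION_TYPES = {"none", "gzip", "snappy", "lz4", "zstd"}
LINGER_MS_MIN, LINGER_MS_MAX = 100, 1000  # from Valid Values


def producer_overrides(compression_type: Optional[str] = None,
                       linger_ms: Optional[int] = None) -> Dict[str, str]:
    """Build optional producer override properties, validating each value."""
    overrides: Dict[str, str] = {}
    if compression_type is not None:
        if compression_type not in COMPRESSION_TYPES:
            raise ValueError(f"unknown compression type: {compression_type}")
        overrides["producer.override.compression.type"] = compression_type
    if linger_ms is not None:
        if not LINGER_MS_MIN <= linger_ms <= LINGER_MS_MAX:
            raise ValueError(f"linger.ms {linger_ms} is outside [100, 1000]")
        # Store as a string to match the quoted values in the config file.
        overrides["producer.override.linger.ms"] = str(linger_ms)
    return overrides
```

A larger linger.ms lets the producer fill bigger batches at the cost of added latency, which often suits a source that emits records in bulk after each poll.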

value.converter.allow.optional.map.keys

Allow optional string map key when converting from Connect Schema to Avro Schema. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.auto.register.schemas

Specify if the Serializer should attempt to register the Schema.

Type: boolean

Importance: low

value.converter.connect.meta.data

Allow the Connect converter to add its metadata to the output schema. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.enhanced.avro.schema.support

Enable enhanced schema support to preserve package information and Enums. Applicable for Avro Converters.

Type: boolean

Importance: low

value.converter.enhanced.protobuf.schema.support

Enable enhanced schema support to preserve package information. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.flatten.unions

Whether to flatten unions (oneofs). Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.generate.index.for.unions

Whether to generate an index suffix for unions. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.generate.struct.for.nulls

Whether to generate a struct variable for null values. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.int.for.enums

Whether to represent enums as integers. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.latest.compatibility.strict

Verify latest subject version is backward compatible when use.latest.version is true.

Type: boolean

Importance: low

value.converter.object.additional.properties

Whether to allow additional properties for object schemas. Applicable for JSON_SR Converters.

Type: boolean

Importance: low

value.converter.optional.for.nullables

Whether nullable fields should be specified with an optional label. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.optional.for.proto2

Whether proto2 optionals are supported. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.scrub.invalid.names

Whether to scrub invalid names by replacing invalid characters with valid characters. Applicable for Avro and Protobuf Converters.

Type: boolean

Importance: low

value.converter.use.latest.version

Use latest version of schema in subject for serialization when auto.register.schemas is false.

Type: boolean

Importance: low

value.converter.use.optional.for.nonrequired

Whether to set non-required properties to be optional. Applicable for JSON_SR Converters.

Type: boolean

Importance: low

value.converter.wrapper.for.nullables

Whether nullable fields should use primitive wrapper messages. Applicable for Protobuf Converters.

Type: boolean

Importance: low

value.converter.wrapper.for.raw.primitives

Whether a wrapper message should be interpreted as a raw primitive at root level. Applicable for Protobuf Converters.

Type: boolean

Importance: low

errors.tolerance

Use this property to configure the connector’s error handling behavior. WARNING: Use this property with caution for source connectors, because it may lead to data loss. If you set this property to ‘all’, the connector does not fail on errant records; instead, it logs them (and sends them to the DLQ for sink connectors) and continues processing. If you set this property to ‘none’, the connector task fails on errant records.

Type: string

Default: none

Importance: low

key.converter.key.schema.id.serializer

The class name of the schema ID serializer for keys. This is used to serialize schema IDs in the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.PrefixSchemaIdSerializer

Importance: low

key.converter.key.subject.name.strategy

How to construct the subject name for key schema registration.

Type: string

Default: TopicNameStrategy

Importance: low

value.converter.decimal.format

Specify the JSON/JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals:

BASE64 to serialize DECIMAL logical types as base64 encoded binary data and

NUMERIC to serialize Connect DECIMAL logical type values in JSON/JSON_SR as a number representing the decimal value.

Type: string

Default: BASE64

Importance: low
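To see how the two literals differ, the following Python sketch mimics both encodings for one value. It relies on the fact that Kafka Connect's DECIMAL logical type stores the unscaled value as big-endian two's-complement bytes (what Java's BigInteger.toByteArray() returns); BASE64 emits those bytes as a base64 string, and NUMERIC emits the decimal as a plain JSON number. The function names are hypothetical, and this is an illustration, not the converter's code.

```python
import base64
from decimal import Decimal


def unscaled_bytes(value: Decimal, scale: int) -> bytes:
    """Minimal big-endian two's-complement bytes of the unscaled value."""
    unscaled = int(value.scaleb(scale))
    if Decimal(unscaled).scaleb(-scale) != value:
        raise ValueError(f"{value} does not fit scale {scale}")
    # Same byte length rule as Java's BigInteger.toByteArray().
    bits = unscaled.bit_length() if unscaled >= 0 else (~unscaled).bit_length()
    return unscaled.to_bytes(bits // 8 + 1, "big", signed=True)


def encode_base64(value: Decimal, scale: int) -> str:
    # BASE64: the unscaled bytes, base64-encoded, written as a JSON string.
    return base64.b64encode(unscaled_bytes(value, scale)).decode("ascii")


def encode_numeric(value: Decimal, scale: int) -> str:
    # NUMERIC: the decimal value written as a JSON number at the given scale.
    return str(value.quantize(Decimal(1).scaleb(-scale)))


print(encode_base64(Decimal("12.34"), 2))   # BNI=
print(encode_numeric(Decimal("12.34"), 2))  # 12.34
```

The BASE64 form is opaque to consumers that do not know the scale, which is why NUMERIC is often easier to read downstream.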

value.converter.flatten.singleton.unions

Whether to flatten singleton unions. Applicable for Avro and JSON_SR Converters.

Type: boolean

Default: false

Importance: low

value.converter.ignore.default.for.nullables

When set to true, this property ensures that the corresponding record in Kafka is NULL, instead of showing the default column value. Applicable for AVRO, PROTOBUF, and JSON_SR Converters.

Type: boolean

Default: false

Importance: low

value.converter.reference.subject.name.strategy

Set the subject reference name strategy for value. Valid entries are DefaultReferenceSubjectNameStrategy or QualifiedReferenceSubjectNameStrategy. Note that the subject reference name strategy can be selected only for PROTOBUF format with the default strategy being DefaultReferenceSubjectNameStrategy.

Type: string

Default: DefaultReferenceSubjectNameStrategy

Importance: low

value.converter.replace.null.with.default

Whether to replace null fields that have a default value with that default value. When set to true, the default value is used; otherwise, null is used. Applicable for JSON Converter.

Type: boolean

Default: true

Importance: low

value.converter.schemas.enable

Include schemas within each of the serialized values. Input messages must contain schema and payload fields and may not contain additional fields. For plain JSON data, set this to false. Applicable for JSON Converter.

Type: boolean

Default: false

Importance: low

value.converter.value.schema.id.serializer

The class name of the schema ID serializer for values. This is used to serialize schema IDs in the message headers.

Type: string

Default: io.confluent.kafka.serializers.schema.id.PrefixSchemaIdSerializer

Importance: low

value.converter.value.subject.name.strategy

Determines how to construct the subject name under which the value schema is registered with Schema Registry.

Type: string

Default: TopicNameStrategy

Importance: low
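With the default TopicNameStrategy, the key and value schemas for a topic are registered under <topic>-key and <topic>-value. The following Python sketch shows that naming next to two other strategies commonly used with Schema Registry serializers (RecordNameStrategy and TopicRecordNameStrategy). This page names only TopicNameStrategy, so whether this connector accepts the other two is an assumption, and the record name com.example.Account is hypothetical.

```python
def subject_name(strategy: str, topic: str, record_name: str,
                 is_key: bool = False) -> str:
    """Return the Schema Registry subject a naming strategy would produce."""
    if strategy == "TopicNameStrategy":
        return f"{topic}-{'key' if is_key else 'value'}"
    if strategy == "RecordNameStrategy":
        return record_name
    if strategy == "TopicRecordNameStrategy":
        return f"{topic}-{record_name}"
    raise ValueError(f"unknown strategy: {strategy}")


print(subject_name("TopicNameStrategy", "TestBulkAPI", "com.example.Account"))
# TestBulkAPI-value
```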

Auto-restart policy

auto.restart.on.user.error

Enable connector to automatically restart on user-actionable errors.

Type: boolean

Default: true

Importance: medium

Frequently asked questions

Find answers to frequently asked questions about the Salesforce Bulk API Source connector for Confluent Cloud.

Authentication and connection

Data ingestion and schema

Why is my connector running but not processing any data?

The Salesforce Bulk API Source connector requires both CreatedDate and LastModifiedDate fields to incrementally query data from Salesforce objects. If your Salesforce object lacks these fields, the connector runs without processing any data.

Common objects without required fields:

GroupMember: Missing both CreatedDate and LastModifiedDate

UserRole: Missing CreatedDate

PermissionSetAssignment: Missing LastModifiedDate

CaseHistory: Missing LastModifiedDate

Resolution:

Verify object support: Verify that your Salesforce object has both CreatedDate and LastModifiedDate fields before configuring the connector. See Supported and Unsupported SObjects for a list of supported and unsupported SObjects.

Alternative approaches: For objects without these fields, consider:

Using the Salesforce CDC Source connector if change data capture is enabled for the object.

Using the Salesforce Platform Event Source connector for event-based data.

Using the Salesforce Bulk API 2.0 Source connector, which may offer additional support for custom queries in future releases.
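Before deploying a connector, you can check an object's field list for the two fields the connector needs for incremental queries. The following Python sketch assumes you have already obtained the field names (for example, from the object's describe metadata); the function name and the sample field lists are illustrative only, so use the real list from your org.

```python
from typing import Iterable, List

REQUIRED_INCREMENTAL_FIELDS = ("CreatedDate", "LastModifiedDate")


def missing_incremental_fields(field_names: Iterable[str]) -> List[str]:
    """Return the required incremental-query fields that are absent."""
    present = set(field_names)
    return [f for f in REQUIRED_INCREMENTAL_FIELDS if f not in present]


# Illustrative field lists only; fetch the actual fields from your org.
examples = {
    "GroupMember": ["Id", "GroupId", "UserOrGroupId", "SystemModstamp"],
    "Account": ["Id", "Name", "CreatedDate", "LastModifiedDate"],
}
for sobject, fields in examples.items():
    print(sobject, missing_incremental_fields(fields))
# GroupMember ['CreatedDate', 'LastModifiedDate']
# Account []
```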

Note

The connector does not report this limitation with an explicit error message. You can identify it only through detailed log analysis at the TRACE level.

Why are some fields missing after I updated my Salesforce object definition?

When you add new fields to a Salesforce object, the connector might not immediately recognize them. The connector caches the Salesforce object metadata during the authentication and handshake phase at connector startup.

To ensure the connector processes new fields:

Restart the connector: This refreshes the cached Salesforce object metadata and ensures the connector recognizes newly added fields.

Verify field visibility: Ensure the Salesforce user has field-level security permissions to access the new fields.

Check topic messages: Review messages in your Kafka topic to confirm all expected fields are being ingested.

Note

The connector does not automatically detect schema changes. You must manually restart the connector after modifying the Salesforce object definition. For fully-managed connectors, make any minor configuration change, such as batch size or timeout, and redeploy the connector to restart the tasks.

Can I query multiple Salesforce objects with a single connector instance?

No, the Salesforce Bulk API Source connector currently supports only one Salesforce object (SObject) per connector instance.

Workaround:

Deploy multiple connector instances, one for each Salesforce object you want to query. Each connector can write to a different Kafka topic.
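One way to apply this workaround is to derive one configuration per SObject from a shared base configuration. The following Python sketch does that; the connector name and topic patterns are hypothetical, and each topic must exist before its connector is created.

```python
from typing import Dict, List


def configs_per_object(base: Dict[str, str],
                       sobjects: List[str]) -> List[Dict[str, str]]:
    """Derive one connector configuration per SObject from a shared base."""
    configs = []
    for sobject in sobjects:
        cfg = dict(base)  # copy so the shared base is never mutated
        cfg["name"] = f"SalesforceBulkApiSource_{sobject}"
        cfg["salesforce.object"] = sobject
        cfg["kafka.topic"] = f"salesforce-{sobject.lower()}"  # hypothetical naming
        configs.append(cfg)
    return configs
```

Each instance issues its own Salesforce queries, so the number of objects you fan out to directly affects your API usage.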

Performance and configuration

How do I avoid hitting Salesforce API limits?

Salesforce enforces daily API limits based on your organization’s edition and license type. Be aware of the following Bulk API-specific limits:

Daily API limit: Varies by Salesforce edition (typically 15,000 to 1,000,000 API calls per 24 hours)

Concurrent Bulk API job limit: Up to 5 concurrent jobs per user (may vary based on Salesforce edition)

Best practices:

Configure polling interval: Increase the poll.interval.ms property to reduce the frequency of API calls. The default is 30000 ms (30 seconds). Based on your data freshness requirements, consider setting it as high as 300000 ms (five minutes), the maximum allowed value.

Monitor API usage: Use the Salesforce Setup console (Setup > System Overview > API Usage) to monitor your current API usage and remaining limits.

Use appropriate connector types: For real-time data changes, consider using the Salesforce CDC Source connector, which uses the Streaming API instead of the REST or Bulk API.

Limit the number of connectors: Since each connector instance can only handle one Salesforce object, deploying many connectors can quickly exhaust API limits. Plan your connector deployments accordingly.
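To reason about these practices, it helps to estimate how many polling cycles your connectors run per day. The following Python sketch does the arithmetic; calls_per_poll is a hypothetical figure that you would need to measure (for example, by watching API Usage in Salesforce Setup), because the number of requests per poll is not documented here.

```python
MS_PER_DAY = 24 * 60 * 60 * 1000  # 86,400,000 ms


def polls_per_day(poll_interval_ms: int) -> int:
    """Number of polling cycles one connector runs in 24 hours."""
    return MS_PER_DAY // poll_interval_ms


def estimated_daily_calls(poll_interval_ms: int, connectors: int,
                          calls_per_poll: int) -> int:
    """Rough daily request estimate across several connectors.

    calls_per_poll is an assumption you must measure in your own org.
    """
    return polls_per_day(poll_interval_ms) * connectors * calls_per_poll


print(polls_per_day(30000), polls_per_day(300000))  # 2880 288
```

Moving from the 30-second default to the five-minute maximum cuts polling cycles by a factor of ten for every connector.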

Common error:

Organization total events daily limit exceeded

This error occurs when the Salesforce organization exceeds its daily API limit. Restart the connector once the limit resets.

For more information, see Salesforce API Limits.

Why am I getting Read timed out (SocketTimeoutException) errors?

Read timeout errors occur when the connector cannot retrieve data from Salesforce within the configured timeout period:

java.net.SocketTimeoutException: Read timed out

Common causes:

The Salesforce object contains a very large number of records.

Complex Salesforce object relationships or triggers that slow down query execution.

Network latency between Confluent Cloud and Salesforce.

Salesforce server-side performance issues.

Resolution:

Increase polling interval: Increase the poll.interval.ms property to give Salesforce more time between queries.

Verify Salesforce performance: Check with your Salesforce administrators to ensure the object and any associated triggers or workflows are performing optimally.

Compare with other objects: If you have multiple connectors querying different Salesforce objects from the same organization, compare their performance. If only one object experiences timeouts, the issue is likely specific to that object’s configuration or data volume.

Consider Bulk API 2.0: The Salesforce Bulk API 2.0 Source connector may offer better performance for large data volumes. It includes a configurable result.max.rows property that can help reduce the amount of data retrieved per API call.

Troubleshooting

How do I troubleshoot connector failures or check connector logs?

For fully-managed connectors in Confluent Cloud:

Check connector status: In the Confluent Cloud Console, navigate to your connector and check its status and any error messages displayed.

Review connector metrics: Monitor metrics such as records processed, errors, and throughput in the Confluent Cloud Console.

Access connector logs: Connector logs are available through the Confluent Cloud Console. Navigate to your connector and click the Logs tab to view detailed error messages and stack traces.

Common log locations: For API access to logs, see the Confluent Cloud API documentation.

For assistance, contact Confluent Support with your connector ID, cluster ID, and relevant error messages.

Next Steps

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud for Apache Flink, see the Cloud ETL Demo. This example also shows how to use Confluent CLI to manage your resources in Confluent Cloud.